Andrew Ng Machine Learning Exercise 2: Logistic Regression

阿新 • Published: 2018-12-19

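Logistic regression

Cost function

Logistic regression is a classification algorithm; its output always lies between 0 and 1.

For a given input x and the chosen parameters θ, h(x) computes the estimated probability that the output variable equals 1, that is, h(x) = P(y=1|x;θ).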
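For reference, the hypothesis and cost function can be written as below. This is the standard logistic regression form, in the notation the code later in this post follows; m (number of training examples) and the superscript (i) (index of the i-th example) are symbols assumed here, not taken from the text above.

$$
h_\theta(x) = g(\theta^T x), \qquad g(z) = \frac{1}{1 + e^{-z}}
$$

$$
J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \left[ -y^{(i)} \log h_\theta(x^{(i)}) - (1 - y^{(i)}) \log\left(1 - h_\theta(x^{(i)})\right) \right]
$$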

Gradient descent
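A sketch of the update rule for the logistic regression cost J(θ), assuming a learning rate α (a symbol not used elsewhere in this post) and m training examples. All θ_j are updated simultaneously:

$$
\frac{\partial J(\theta)}{\partial \theta_j} = \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_j^{(i)}
$$

$$
\theta_j := \theta_j - \alpha \, \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_j^{(i)}
$$

The expression has the same shape as the linear regression update; only h differs, since here it is the sigmoid of θᵀx.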

Exercise 2

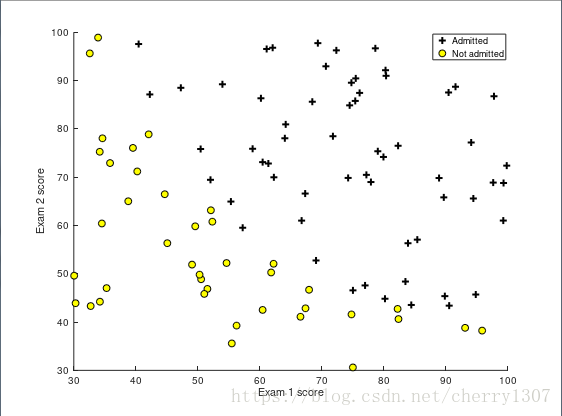

Dataset

x1,x2 : scores on two exams

y : admissions decision

Visualizing the dataset

function plotData(X, y)

figure; hold on;

pos = find(y == 1);

neg = find(y == 0);

plot(X(pos, 1), X(pos, 2), 'k+', 'LineWidth', 2, 'MarkerSize', 7);

plot(X(neg, 1), X(neg, 2), 'ko', 'MarkerFaceColor', 'y', 'MarkerSize', 7);

hold off;

end

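The basic job of find() is to return the indices of the nonzero elements of a vector or matrix.

To locate the elements that satisfy a condition, pass the condition itself: find(X==4) returns the indices of the entries of X that equal 4.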
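A small sketch of how find() behaves on a label vector like the y used in plotData. The values of y and X here are made up for illustration.

```octave
% Toy label vector (made up for illustration)
y = [1; 0; 0; 1; 1];

pos = find(y == 1);   % indices where the condition holds: 1, 4, 5
neg = find(y == 0);   % indices where it does not: 2, 3

% Toy feature matrix with one row per label
X = [10 20; 30 40; 50 60; 70 80; 90 100];

% Indexing with the result of find() selects the same rows
% as indexing with the logical condition directly
same_rows = isequal(X(pos, :), X(y == 1, :));
disp(same_rows);
```

Because logical indexing gives the same rows, find() is optional in plotData; it mainly makes the intent explicit.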

The sigmoid function

function g = sigmoid(z)

g = zeros(size(z));

g = 1./(1+exp(-z));

end
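A quick sanity check of the sigmoid, sketched with an anonymous function named sig (a stand-in chosen here so the snippet runs on its own; it computes the same expression as sigmoid.m).

```octave
% Stand-in for sigmoid.m so this snippet is self-contained
sig = @(z) 1 ./ (1 + exp(-z));

at_zero = sig(0);             % exactly 0.5
tails   = sig([-10 0 10]);    % close to 0, exactly 0.5, close to 1
on_mat  = sig(zeros(2, 3));   % element-wise, so the result is also 2 x 3

disp(at_zero);
disp(tails);
disp(size(on_mat));
```

The element-wise operator ./ is what lets the same function accept scalars, vectors, and matrices.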

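Cost function and gradient

function [J, grad] = costFunction(theta, X, y)

m = length(y); % number of training examples

J = 0;

grad = zeros(size(theta));

h = sigmoid(X*theta); % predicted probabilities, m x 1

% y and log(h) are both m x 1, so the products must be element-wise (.*)

J = (1/m)*sum((-y).*log(h)-(1-y).*log(1-h));

for j = 1 : size(X,2)

grad(j) = (1/m)*sum((h-y).*X(:,j));

end

end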
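Two checks that are handy when writing a cost function like this, sketched on made-up data. With theta set to zeros every prediction is 0.5, so the cost is log(2), about 0.693, whatever the data. The per-parameter loop can also be written as one matrix product. The names sig, grad_loop, and grad_vec are introduced here for illustration.

```octave
% Stand-in for sigmoid.m so this snippet is self-contained
sig = @(z) 1 ./ (1 + exp(-z));

% Toy design matrix: intercept column plus two made-up features
X = [1 2 3; 1 4 1; 1 0 5; 1 3 3];
y = [1; 0; 1; 0];
m = length(y);
theta = zeros(size(X, 2), 1);

h = sig(X * theta);
J = (1/m) * sum(-y .* log(h) - (1 - y) .* log(1 - h));

% Loop form: one partial derivative per parameter
grad_loop = zeros(size(theta));
for j = 1:size(X, 2)
  grad_loop(j) = (1/m) * sum((h - y) .* X(:, j));
end

% Vectorized form: the same sums expressed as a matrix product
grad_vec = (1/m) * (X' * (h - y));

cost_ok = abs(J - log(2)) < 1e-12;
grad_ok = max(abs(grad_loop - grad_vec)) < 1e-12;
disp(cost_ok);
disp(grad_ok);
```

The vectorized form avoids the explicit loop, which matters more as the number of features grows.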

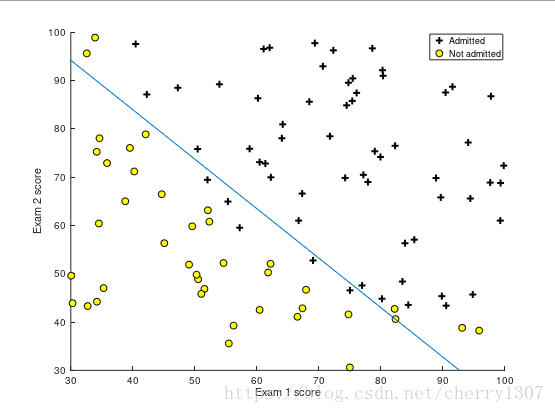

Learning the parameters with fminunc

% Set options for fminunc

options = optimset('GradObj', 'on', 'MaxIter', 400);

% Run fminunc to obtain the optimal theta

% This function will return theta and the cost

[theta, cost] = fminunc(@(t)(costFunction(t, X, y)), initial_theta, options);

Evaluation

function p = predict(theta, X)

m = size(X, 1);

p = zeros(m, 1);

p = sigmoid(X*theta)>=0.5;

end
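Predictions like these are usually turned into a training accuracy. A minimal sketch, assuming hypothetical parameters and toy data (theta, X, and y below are made up, not the values learned in this exercise):

```octave
% Stand-in for sigmoid.m so this snippet is self-contained
sig = @(z) 1 ./ (1 + exp(-z));

theta = [-4; 1; 1];                    % hypothetical parameters
X = [1 1 1; 1 3 3; 1 2 2; 1 0 1];      % intercept column plus two toy features
y = [0; 1; 1; 1];

% Same rule as predict(): label 1 when the estimated probability is at least 0.5
p = sig(X * theta) >= 0.5;

% Percentage of examples where the prediction matches the label
accuracy = mean(double(p == y)) * 100;
printf('Training accuracy: %.1f%%\n', accuracy);
```

Here three of the four toy examples are classified correctly, so the reported accuracy is 75.0%. Note that printf is Octave-specific; in MATLAB use fprintf.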