Building Your First Classification Network with DeepFashion

阿新 • Published: 2018-12-28

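The process has three main steps: data preprocessing, building the model, and training. A test on a single image follows at the end.

Data preprocessing

Data preprocessing means writing the data out in a fixed format so that the network can read it by itself.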

1準備原始資料

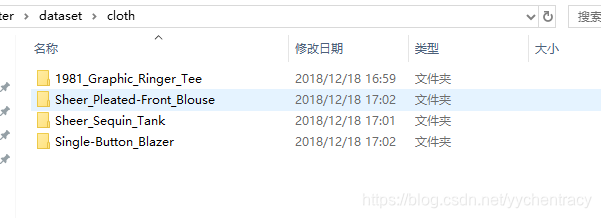

我的cloth資料一共是四個類別,每個類別有衣服47張,一用是188張圖片,這些大小不一的原始圖片轉換成我們訓練需要的shape。

原始資料放在同一個資料夾下面:

2 Implementation

Create the TFRecords file, read it back to recover each image and label, then print them for verification and save the resulting images.

    # Resize the raw images to the required size and save them
    # ========================================================================
    import os
    import tensorflow as tf
    from PIL import Image

    # Location of the raw images (my data lives under this folder)
    orig_picture = 'dataset/cloth/'
    # Location of the generated images
    gen_picture = 'dataset/image_data/inputdata/'  # E:/Re_train/image_data/inputdata/
    # Classes to recognize. A list (not a set) keeps the order fixed, so the
    # label written to the record always matches the folder used when saving.
    classes = ['Graphic_Ringer_Tee', 'Sheer_Pleated_Front_Blouse',
               'Sheer_Sequin_Tank', 'Single_Button_Blazer']
    # Total number of samples
    num_samples = 188

    # Create the TFRecords data
    def create_record():
        writer = tf.python_io.TFRecordWriter("cloth_train.tfrecords")
        for index, name in enumerate(classes):
            class_path = os.path.join(orig_picture, name)
            for img_name in os.listdir(class_path):
                img_path = os.path.join(class_path, img_name)
                # Force 3 channels so the bytes match the [64, 64, 3] reshape
                img = Image.open(img_path).convert('RGB')
                img = img.resize((64, 64))  # target image size
                img_raw = img.tobytes()     # raw bytes of the image
                print(index, img_path)
                example = tf.train.Example(
                    features=tf.train.Features(feature={
                        "label": tf.train.Feature(int64_list=tf.train.Int64List(value=[index])),
                        'img_raw': tf.train.Feature(bytes_list=tf.train.BytesList(value=[img_raw]))
                    }))
                writer.write(example.SerializeToString())
        writer.close()

    # ========================================================================
    def read_and_decode(filename):
        # Create a file queue with no limit on the number of reads
        filename_queue = tf.train.string_input_producer([filename])
        # Create a reader for the file queue
        reader = tf.TFRecordReader()
        # Read one serialized example from the file queue
        _, serialized_example = reader.read(filename_queue)
        # Parse the serialized example into its features
        features = tf.parse_single_example(
            serialized_example,
            features={
                'label': tf.FixedLenFeature([], tf.int64),
                'img_raw': tf.FixedLenFeature([], tf.string)
            })
        label = features['label']
        img = features['img_raw']
        img = tf.decode_raw(img, tf.uint8)
        img = tf.reshape(img, [64, 64, 3])
        # img = tf.cast(img, tf.float32) * (1. / 255) - 0.5
        label = tf.cast(label, tf.int32)
        return img, label

    # ========================================================================
    if __name__ == '__main__':
        create_record()
        batch = read_and_decode('cloth_train.tfrecords')
        init_op = tf.group(tf.global_variables_initializer(),
                           tf.local_variables_initializer())
        # Make sure the output folders exist before saving
        for name in classes:
            os.makedirs(os.path.join(gen_picture, name), exist_ok=True)

        with tf.Session() as sess:  # start a session
            sess.run(init_op)
            coord = tf.train.Coordinator()
            threads = tf.train.start_queue_runners(coord=coord)
            for i in range(num_samples):
                example, lab = sess.run(batch)  # fetch one image and its label
                img = Image.fromarray(example, 'RGB')  # Image is PIL.Image imported above
                # Save the image into the folder of the class its label points to
                out_path = os.path.join(gen_picture, classes[lab],
                                        str(i) + str(lab) + '.jpg')
                img.save(out_path)
                print(out_path)
                print(example, lab)
            coord.request_stop()
            coord.join(threads)
    # ========================================================================
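The script stores each image as raw bytes and later rebuilds it with `tf.decode_raw` and `tf.reshape`. That only works when the byte count equals 64 × 64 × 3. A minimal NumPy sketch of the same round trip, using a synthetic array in place of a real photo (an assumption made purely for illustration):

```python
import numpy as np

HEIGHT, WIDTH, CHANNELS = 64, 64, 3

# Synthetic stand-in for a resized RGB image (uint8 values 0..255).
rng = np.random.RandomState(0)
image = rng.randint(0, 256, size=(HEIGHT, WIDTH, CHANNELS), dtype=np.uint8)

# Serialize to raw bytes: row-major, channels interleaved per pixel.
raw = image.tobytes()
print(len(raw))  # 64 * 64 * 3 = 12288 bytes

# Decode: a flat uint8 vector first, then restore the image shape.
decoded = np.frombuffer(raw, dtype=np.uint8).reshape(HEIGHT, WIDTH, CHANNELS)
print(np.array_equal(decoded, image))
```

A grayscale or RGBA source would yield 4096 or 16384 bytes instead, and the reshape to [64, 64, 3] would fail, so converting every image to RGB before serializing is a safe habit.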

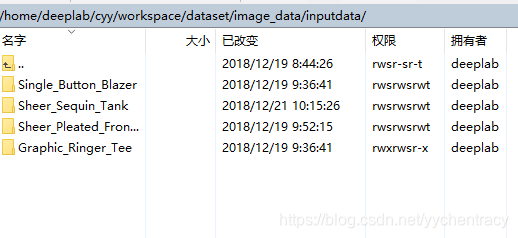

程式執行結束後就會生成下面的四個資料夾,裡面存放就是我們需要的資料
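Each saved image goes into the folder that its integer label points to, so the mapping between class names and labels must be identical every time the script runs. Iterating over a Python set does not guarantee that. A minimal sketch of one way to get a stable mapping, assuming the four class names from this post (`build_label_map` is a hypothetical helper, not part of the original script):

```python
def build_label_map(class_names):
    """Map each class name to a fixed integer label (hypothetical helper)."""
    # Sorting makes the order independent of how the names were collected.
    return {name: index for index, name in enumerate(sorted(class_names))}

# A set literal: its iteration order is not guaranteed.
names = {'Graphic_Ringer_Tee', 'Sheer_Pleated_Front_Blouse',
         'Sheer_Sequin_Tank', 'Single_Button_Blazer'}

label_map = build_label_map(names)
for name, label in sorted(label_map.items(), key=lambda item: item[1]):
    print(label, name)
```

Keeping the names in an explicit list, in the order you want, achieves the same thing without sorting.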

Next, pair the images generated in the first step with their labels and group them into batches.

    import os
    import math
    import numpy as np
    import tensorflow as tf
    import matplotlib.pyplot as plt

    # ========================================================================
    # ----------- Build the lists of image paths and labels ------------------
    train_dir = 'dataset/image_data/inputdata'

    # Class folders; the position in this list is the label (0 to 3)
    class_names = ['Graphic_Ringer_Tee', 'Sheer_Pleated_Front_Blouse',
                   'Sheer_Sequin_Tank', 'Single_Button_Blazer']

    # step1: collect every image path under file_dir (one sub-folder per
    # class) and record the matching label for each path.
    def get_files(file_dir, ratio):
        image_list = []
        label_list = []
        for label, name in enumerate(class_names):
            class_dir = file_dir + '/' + name
            for file in os.listdir(class_dir):
                image_list.append(class_dir + '/' + file)
                label_list.append(label)

        # step2: shuffle paths and labels together so each pair stays aligned
        temp = np.array([image_list, label_list])
        temp = temp.transpose()
        np.random.shuffle(temp)

        # Convert all images and labels back to lists
        all_image_list = list(temp[:, 0])
        all_label_list = list(temp[:, 1])

        # Split the lists into a training part (tra) and a validation part
        # (val); ratio is the fraction used for validation
        n_sample = len(all_label_list)
        n_val = int(math.ceil(n_sample * ratio))  # number of validation samples
        n_train = n_sample - n_val                # number of training samples

        tra_images = all_image_list[0:n_train]
        tra_labels = all_label_list[0:n_train]
        tra_labels = [int(float(i)) for i in tra_labels]
        # Slice to the end of the list so the last sample is kept
        val_images = all_image_list[n_train:]
        val_labels = all_label_list[n_train:]
        val_labels = [int(float(i)) for i in val_labels]
        return tra_images, tra_labels, val_images, val_labels

    # ------------------------------------------------------------------------
    # ----------------------------- Build batches ----------------------------
    # step1: pass the lists from above into get_batch(), convert their types
    # and create an input queue. Images and labels are separate lists, so
    # tf.train.slice_input_producer() is used, and tf.read_file() then reads
    # each image from the queue.
    #   image_W, image_H: fixed image width and height
    #   batch_size: number of images per batch
    #   capacity: maximum number of elements in the queue
    def get_batch(image, label, image_W, image_H, batch_size, capacity):
        # Convert types
        image = tf.cast(image, tf.string)
        label = tf.cast(label, tf.int32)

        # Make an input queue
        input_queue = tf.train.slice_input_producer([image, label])
        label = input_queue[1]
        image_contents = tf.read_file(input_queue[0])  # read the image file

        # step2: decode the image. Different formats must not be mixed:
        # use only jpeg, or only png, and so on.
        image = tf.image.decode_jpeg(image_contents, channels=3)

        # step3: preprocessing. Rotation, scaling, cropping and normalization
        # make the trained model more robust; here the image is cropped or
        # padded to the target size and then standardized.
        # resize_image_with_crop_or_pad takes (image, target_height, target_width).
        image = tf.image.resize_image_with_crop_or_pad(image, image_H, image_W)
        image = tf.image.per_image_standardization(image)

        # step4: build the batch
        # image_batch: 4D tensor [batch_size, height, width, 3], dtype=tf.float32
        # label_batch: 1D tensor [batch_size], dtype=tf.int32
        image_batch, label_batch = tf.train.batch([image, label],
                                                  batch_size=batch_size,
                                                  num_threads=32,
                                                  capacity=capacity)
        # Reshape the labels to [batch_size]
        label_batch = tf.reshape(label_batch, [batch_size])
        image_batch = tf.cast(image_batch, tf.float32)
        return image_batch, label_batch
    # ========================================================================
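To make the split concrete: with 188 images and `ratio = 0.3`, the arithmetic in `get_files` gives 57 validation samples and 131 training samples. The sketch below repeats that arithmetic in plain Python with placeholder file names (hypothetical, for illustration only) and shuffles paths and labels as pairs so they stay aligned. `split_dataset` is a made-up name, not a function from the post.

```python
import math
import random

def split_dataset(paths, labels, ratio, seed=0):
    """Shuffle (path, label) pairs together and split off a validation part."""
    pairs = list(zip(paths, labels))
    random.Random(seed).shuffle(pairs)           # pairs stay aligned
    n_val = int(math.ceil(len(pairs) * ratio))   # validation size, rounded up
    n_train = len(pairs) - n_val
    return pairs[:n_train], pairs[n_train:]      # slice to the end, nothing lost

# Placeholder data: 4 classes x 47 images, as in this post.
paths = ['class%d/img%02d.jpg' % (c, i) for c in range(4) for i in range(47)]
labels = [c for c in range(4) for _ in range(47)]

train_pairs, val_pairs = split_dataset(paths, labels, ratio=0.3)
print(len(train_pairs), len(val_pairs))  # 131 57
```

Slicing the validation part with `[n_train:]` rather than `[n_train:-1]` matters here: the latter silently leaves out the final sample.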

Building the neural network model

    # ========================================================================
    import tensorflow as tf
    # ========================================================================
    # Network definition
    # Input:   images, an image batch, 4D tensor, tf.float32,
    #          [batch_size, height, width, channels]
    # Returns: logits, float, [batch_size, n_classes]
    def inference(images, batch_size, n_classes):
        # A simple CNN: two conv + pooling blocks, two fully connected layers,
        # and a final linear layer whose scores feed the softmax classifier.

        # Conv layer 1
        # 64 kernels of size 3x3 (3 input channels); padding='SAME' keeps the
        # output the same size as the input; activation is relu()
        with tf.variable_scope('conv1') as scope:
            weights = tf.Variable(tf.truncated_normal(shape=[3, 3, 3, 64], stddev=1.0, dtype=tf.float32),
                                  name='weights', dtype=tf.float32)
            biases = tf.Variable(tf.constant(value=0.1, dtype=tf.float32, shape=[64]),
                                 name='biases', dtype=tf.float32)
            conv = tf.nn.conv2d(images, weights, strides=[1, 1, 1, 1], padding='SAME')
            pre_activation = tf.nn.bias_add(conv, biases)
            conv1 = tf.nn.relu(pre_activation, name=scope.name)

        # Pooling layer 1
        # 3x3 max pooling with stride 2, followed by lrn() (local response
        # normalization), which helps training
        with tf.variable_scope('pooling1_lrn') as scope:
            pool1 = tf.nn.max_pool(conv1, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1],
                                   padding='SAME', name='pooling1')
            norm1 = tf.nn.lrn(pool1, depth_radius=4, bias=1.0, alpha=0.001 / 9.0,
                              beta=0.75, name='norm1')

        # Conv layer 2
        # 16 kernels of size 3x3 (64 input channels); padding='SAME';
        # activation is relu()
        with tf.variable_scope('conv2') as scope:
            weights = tf.Variable(tf.truncated_normal(shape=[3, 3, 64, 16], stddev=0.1, dtype=tf.float32),
                                  name='weights', dtype=tf.float32)
            biases = tf.Variable(tf.constant(value=0.1, dtype=tf.float32, shape=[16]),
                                 name='biases', dtype=tf.float32)
            conv = tf.nn.conv2d(norm1, weights, strides=[1, 1, 1, 1], padding='SAME')
            pre_activation = tf.nn.bias_add(conv, biases)
            conv2 = tf.nn.relu(pre_activation, name='conv2')

        # Pooling layer 2
        # lrn() first, then 3x3 max pooling; the stride here is 1, so the
        # spatial size does not change
        with tf.variable_scope('pooling2_lrn') as scope:
            norm2 = tf.nn.lrn(conv2, depth_radius=4, bias=1.0, alpha=0.001 / 9.0,
                              beta=0.75, name='norm2')
            pool2 = tf.nn.max_pool(norm2, ksize=[1, 3, 3, 1], strides=[1, 1, 1, 1],
                                   padding='SAME', name='pooling2')

        # Fully connected layer 3
        # 128 neurons; the pooling output is reshaped into one row per image;
        # activation is relu()
        with tf.variable_scope('local3') as scope:
            reshape = tf.reshape(pool2, shape=[batch_size, -1])
            dim = reshape.get_shape()[1].value
            weights = tf.Variable(tf.truncated_normal(shape=[dim, 128], stddev=0.005, dtype=tf.float32),
                                  name='weights', dtype=tf.float32)
            biases = tf.Variable(tf.constant(value=0.1, dtype=tf.float32, shape=[128]),
                                 name='biases', dtype=tf.float32)
            local3 = tf.nn.relu(tf.matmul(reshape, weights) + biases, name=scope.name)

        # Fully connected layer 4
        # 128 neurons; activation is relu()
        with tf.variable_scope('local4') as scope:
            weights = tf.Variable(tf.truncated_normal(shape=[128, 128], stddev=0.005, dtype=tf.float32),
                                  name='weights', dtype=tf.float32)
            biases = tf.Variable(tf.constant(value=0.1, dtype=tf.float32, shape=[128]),
                                 name='biases', dtype=tf.float32)
            local4 = tf.nn.relu(tf.matmul(local3, weights) + biases, name='local4')

        # Dropout layer
        # with tf.variable_scope('dropout') as scope:
        #     drop_out = tf.nn.dropout(local4, 0.8)

        # Softmax regression layer
        # A linear layer on top of the FC output that produces one score per
        # class (n_classes scores, 4 in this post). The softmax itself is
        # applied inside the loss.
        with tf.variable_scope('softmax_linear') as scope:
            weights = tf.Variable(tf.truncated_normal(shape=[128, n_classes], stddev=0.005, dtype=tf.float32),
                                  name='softmax_linear', dtype=tf.float32)
            biases = tf.Variable(tf.constant(value=0.1, dtype=tf.float32, shape=[n_classes]),
                                 name='biases', dtype=tf.float32)
            softmax_linear = tf.add(tf.matmul(local4, weights), biases, name='softmax_linear')

        return softmax_linear

    # ------------------------------------------------------------------------
    # Loss
    # Input:   logits, the network output; labels, the ground truth, a class
    #          index from 0 to n_classes - 1
    # Returns: loss, the loss value
    def losses(logits, labels):
        with tf.variable_scope('loss') as scope:
            cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(
                logits=logits, labels=labels, name='xentropy_per_example')
            loss = tf.reduce_mean(cross_entropy, name='loss')
            tf.summary.scalar(scope.name + '/loss', loss)
        return loss

    # ------------------------------------------------------------------------
    # Loss optimization
    # Input:   loss; learning_rate, the learning rate
    # Returns: train_op, the training op that is passed to sess.run to train
    #          the model
    def trainning(loss, learning_rate):
        with tf.name_scope('optimizer'):
            optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate)
            global_step = tf.Variable(0, name='global_step', trainable=False)
            train_op = optimizer.minimize(loss, global_step=global_step)
        return train_op

    # ------------------------------------------------------------------------
    # Evaluation / accuracy
    # Input:   logits, the network output; labels, the ground truth, a class
    #          index from 0 to n_classes - 1
    # Returns: accuracy, the mean accuracy of the current step, i.e. the
    #          fraction of images in the batch that were classified correctly
    def evaluation(logits, labels):
        with tf.variable_scope('accuracy') as scope:
            correct = tf.nn.in_top_k(logits, labels, 1)
            correct = tf.cast(correct, tf.float16)
            accuracy = tf.reduce_mean(correct)
            tf.summary.scalar(scope.name + '/accuracy', accuracy)
        return accuracy
    # ========================================================================
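It can help to trace the tensor shapes through `inference` by hand. With 'SAME' padding, the output size of a convolution or pooling layer is ceil(input / stride). A small sketch in plain Python, assuming the 64×64 input and the strides used in the model code above (the helper name `same_padding_out` is made up for this example):

```python
import math

def same_padding_out(size, stride):
    """Output length of a 'SAME'-padded conv or pool: ceil(size / stride)."""
    return int(math.ceil(size / float(stride)))

side = 64  # input images are 64x64x3 in this post
shapes = []

side = same_padding_out(side, 1)   # conv1: stride 1, 64 filters
shapes.append(('conv1', (side, side, 64)))
side = same_padding_out(side, 2)   # pooling1: stride 2 halves the size
shapes.append(('pooling1', (side, side, 64)))
side = same_padding_out(side, 1)   # conv2: stride 1, 16 filters
shapes.append(('conv2', (side, side, 16)))
side = same_padding_out(side, 1)   # pooling2: stride 1 keeps the size
shapes.append(('pooling2', (side, side, 16)))

flat_dim = side * side * 16        # length of the row fed to local3
local3_weights = flat_dim * 128    # weight count of the first FC layer

for name, shape in shapes:
    print(name, shape)
print('flattened:', flat_dim, 'local3 weights:', local3_weights)
```

Because pooling2 uses stride 1, the flattened vector has 32 × 32 × 16 = 16384 entries, so the first fully connected layer holds 16384 × 128 (about 2.1 million) weights, by far the largest layer in this network.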

Training the network

    # ========================================================================
    # Imports
    import os
    import numpy as np
    import tensorflow as tf
    # import input_data
    # import model

    # Settings
    N_CLASSES = 4           # Graphic_Ringer_Tee, Sheer_Pleated_Front_Blouse, Sheer_Sequin_Tank, Single_Button_Blazer
    IMG_W = 64              # resized image size; larger images take longer to train
    IMG_H = 64
    BATCH_SIZE = 20
    CAPACITY = 200
    MAX_STEP = 200          # usually more than 10K
    learning_rate = 0.0001  # usually below 0.0001

    # Get the batches
    train_dir = 'dataset/image_data/inputdata'  # path the training samples are read from
    logs_train_dir = 'dataset/log'              # path the logs are saved to
    # logs_test_dir = 'E:/Re_train/image_data/test'  # path the logs are saved to

    # train, train_label = input_data.get_files(train_dir)
    train, train_label, val, val_label = get_files(train_dir, 0.3)
    # Training data and labels
    train_batch, train_label_batch = get_batch(train, train_label, IMG_W, IMG_H, BATCH_SIZE, CAPACITY)
    # Validation data and labels
    val_batch, val_label_batch = get_batch(val, val_label, IMG_W, IMG_H, BATCH_SIZE, CAPACITY)

    # Training ops
    train_logits = inference(train_batch, BATCH_SIZE, N_CLASSES)
    train_loss = losses(train_logits, train_label_batch)
    train_op = trainning(train_loss, learning_rate)
    train_acc = evaluation(train_logits, train_label_batch)

    # Validation ops
    # Note: inference() creates its variables with tf.Variable, so this second
    # call builds a separate set of weights that training does not update.
    test_logits = inference(val_batch, BATCH_SIZE, N_CLASSES)
    test_loss = losses(test_logits, val_label_batch)
    test_acc = evaluation(test_logits, val_label_batch)

    # Merge all summaries for logging
    summary_op = tf.summary.merge_all()

    # Create a session
    sess = tf.Session()
    # Create a writer for the log files
    train_writer = tf.summary.FileWriter(logs_train_dir, sess.graph)
    # val_writer = tf.summary.FileWriter(logs_test_dir, sess.graph)
    # Create a saver to store the trained model
    saver = tf.train.Saver()
    # Initialize all variables
    sess.run(tf.global_variables_initializer())
    # Queue monitoring
    coord = tf.train.Coordinator()
    threads = tf.train.start_queue_runners(sess=sess, coord=coord)

    # Train batch by batch
    try:
        # Run MAX_STEP training steps, one batch per step
        for step in np.arange(MAX_STEP):
            if coord.should_stop():
                break
            # Run the ops below. train_logits does not need to be listed:
            # it is an input of train_loss and train_acc, so TensorFlow
            # computes it as part of running them.
            _, tra_loss, tra_acc = sess.run([train_op, train_loss, train_acc])

            # Every 10 steps, print the current loss and accuracy and write
            # a log entry
            if step % 10 == 0:
                print('Step %d, train loss = %.2f, train accuracy = %.2f%%' % (step, tra_loss, tra_acc * 100.0))
                summary_str = sess.run(summary_op)
                train_writer.add_summary(summary_str, step)

            # Save the trained model at the last step
            if (step + 1) == MAX_STEP:
                checkpoint_path = os.path.join(logs_train_dir, 'model.ckpt')
                saver.save(sess, checkpoint_path, global_step=step)

    except tf.errors.OutOfRangeError:
        print('Done training -- epoch limit reached')
    finally:
        coord.request_stop()

    # Wait for the queue threads to finish, then release the session
    coord.join(threads)
    sess.close()
    # ========================================================================
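The two numbers printed during training are the mean softmax cross-entropy over the batch and the fraction of images whose highest-scoring class is the true label. As a rough NumPy sketch of those two quantities (an illustration with made-up logits, not TensorFlow's own implementation):

```python
import numpy as np

def sparse_softmax_cross_entropy(logits, labels):
    """Mean cross-entropy for integer labels, computed from raw logits."""
    shifted = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())

def top1_accuracy(logits, labels):
    """Fraction of rows whose largest logit sits at the true label."""
    return float((logits.argmax(axis=1) == labels).mean())

# Made-up scores for a batch of 3 images and 4 classes.
logits = np.array([[2.0, 0.5, 0.1, 0.1],
                   [0.2, 0.1, 3.0, 0.3],
                   [0.1, 0.2, 1.5, 0.0]])
labels = np.array([0, 2, 1])   # the third image is misclassified

print('loss: %.4f' % sparse_softmax_cross_entropy(logits, labels))
print('accuracy: %.4f' % top1_accuracy(logits, labels))
```

With four classes, a network that outputs equal scores for every class has a loss of ln 4 ≈ 1.386 and roughly 25% accuracy on balanced data, which is a handy baseline when reading the training log.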

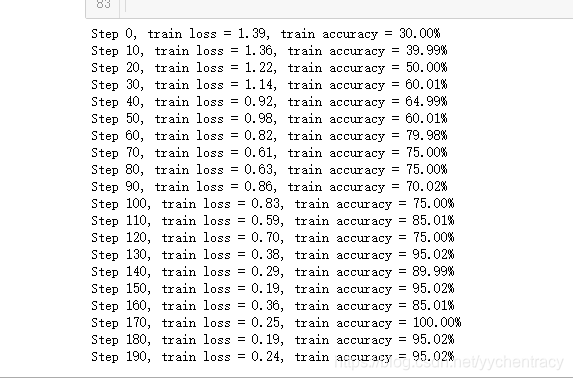

結果如下:
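To relate `MAX_STEP` to passes over the data: each step consumes one batch of 20 images, and the training split holds 131 of the 188 images. A quick back-of-the-envelope calculation with the settings from this post (the lowercase variable names are chosen for this sketch):

```python
import math

n_samples = 188      # total images
val_ratio = 0.3      # fraction held out by get_files
batch_size = 20
max_step = 200

n_val = int(math.ceil(n_samples * val_ratio))
n_train = n_samples - n_val
steps_per_epoch = n_train / float(batch_size)
epochs = max_step / steps_per_epoch

print('training images: %d' % n_train)
print('steps per epoch: %.2f' % steps_per_epoch)
print('epochs in %d steps: %.1f' % (max_step, epochs))
```

So 200 steps amount to roughly 30 passes over the training images.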

Testing

    # ========================================================================
    from PIL import Image
    import numpy as np
    import tensorflow as tf
    import matplotlib.pyplot as plt

    # ========================================================================
    # Get one image
    def get_one_image(train):
        # Input:   train, a list of image paths
        # Returns: image, one image picked at random from that list
        n = len(train)
        ind = np.random.randint(0, n)
        img_dir = train[ind]  # pick the test image at random

        img = Image.open(img_dir)
        plt.imshow(img)
        # The images were already resized during preprocessing, so this
        # call is optional
        imag = img.resize([64, 64])
        image = np.array(imag)
        return image

    # ------------------------------------------------------------------------
    # Test one image
    def evaluate_one_image(image_array):
        with tf.Graph().as_default():
            BATCH_SIZE = 1
            N_CLASSES = 4

            # Build the graph on the placeholder so that the image passed in
            # through feed_dict is the one that gets classified
            x = tf.placeholder(tf.float32, shape=[64, 64, 3])
            image = tf.image.per_image_standardization(x)
            image = tf.reshape(image, [1, 64, 64, 3])

            logit = inference(image, BATCH_SIZE, N_CLASSES)
            logit = tf.nn.softmax(logit)

            # you need to change the directories to yours.
            logs_train_dir = 'dataset/log/'

            saver = tf.train.Saver()

            with tf.Session() as sess:
                print("Reading checkpoints...")
                ckpt = tf.train.get_checkpoint_state(logs_train_dir)
                if ckpt and ckpt.model_checkpoint_path:
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    print('Loading success, global_step is %s' % global_step)
                else:
                    print('No checkpoint file found')
                    return

                prediction = sess.run(logit, feed_dict={x: image_array})
                max_index = np.argmax(prediction)
                # Report the predicted class index and its probability
                print('This is a %d with possibility %.6f' % (max_index, prediction[0, max_index]))

    # ------------------------------------------------------------------------
    if __name__ == '__main__':
        train_dir = 'dataset/image_data/inputdata'
        train, train_label, val, val_label = get_files(train_dir, 0.3)
        # Pass train or val here to check the training set or the validation set
        img = get_one_image(val)
        evaluate_one_image(img)
    # ========================================================================
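The last step of `evaluate_one_image` turns the four raw scores into probabilities with a softmax and reports the most likely class. A small NumPy sketch of that step, with made-up scores and with the class order assumed to be the one used for the labels in this post:

```python
import numpy as np

def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    shifted = scores - np.max(scores)   # avoids overflow in exp
    exp = np.exp(shifted)
    return exp / exp.sum()

# Assumed label order: index 0 to 3.
CLASS_NAMES = ['Graphic_Ringer_Tee', 'Sheer_Pleated_Front_Blouse',
               'Sheer_Sequin_Tank', 'Single_Button_Blazer']

scores = np.array([0.3, 2.1, -0.5, 0.9])   # made-up logits for one image
probs = softmax(scores)
best = int(np.argmax(probs))

print('%s with probability %.3f' % (CLASS_NAMES[best], probs[best]))
```

Printing the class name instead of the bare index makes the test output easier to read, at the cost of keeping the name list in sync with the labels used during preprocessing.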

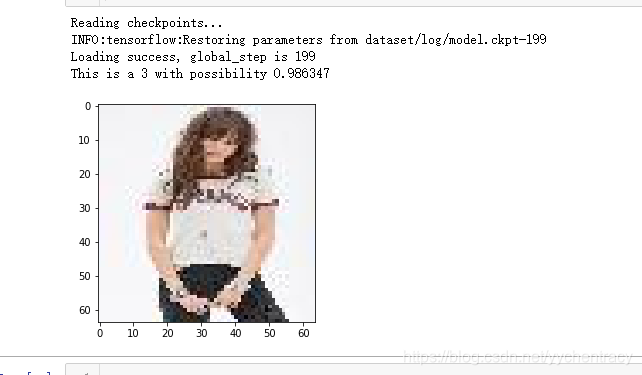

結果如下:

Source: https://blog.csdn.net/yychentracy/article/details/85158010