The 4 Convolutional Neural Network Models That Can Classify Your Fashion Images

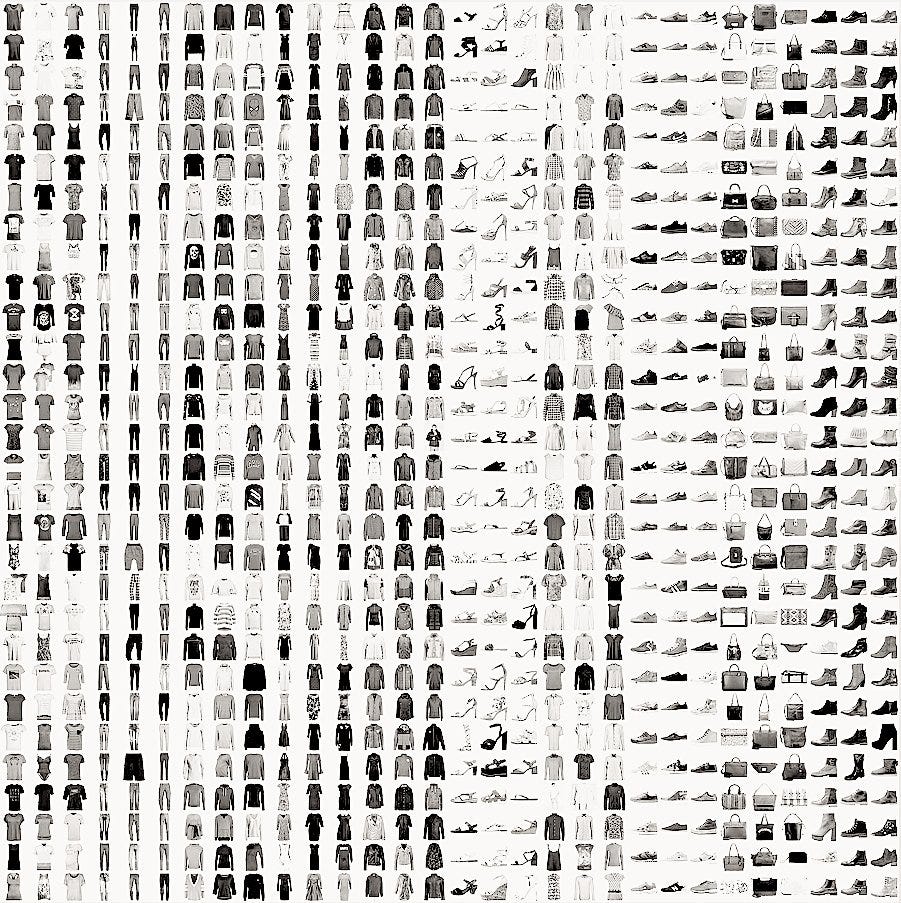

Fashion MNIST Dataset

Recently, Zalando Research published a new dataset that is very similar to the well-known MNIST database of handwritten digits. The dataset is designed for machine learning classification tasks and contains 60,000 training and 10,000 test images in total, each a 28x28-pixel grayscale image. Each training and test case is associated with one of ten labels (0–9). Up to this point, Zalando’s dataset is basically the same as the original handwritten digits data. However, instead of images of the digits 0–9, Zalando’s data contains (unsurprisingly) images of 10 different fashion products. Consequently, the dataset is called Fashion-MNIST.

The 10 different class labels are:

- 0 T-shirt/top

- 1 Trouser

- 2 Pullover

- 3 Dress

- 4 Coat

- 5 Sandal

- 6 Shirt

- 7 Sneaker

- 8 Bag

- 9 Ankle boot
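In code, these labels are usually kept as a simple lookup table. Here is a minimal sketch; the names `CLASS_NAMES` and `label_to_name` are my own choices for illustration, not part of the dataset or any library:

```python
# Lookup table from integer label to class name, in the order listed above.
# CLASS_NAMES and label_to_name are hypothetical names used only here.
CLASS_NAMES = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]

def label_to_name(label: int) -> str:
    """Return the human-readable class name for an integer label 0-9."""
    if not 0 <= label < len(CLASS_NAMES):
        raise ValueError(f"label must be in 0..{len(CLASS_NAMES) - 1}, got {label}")
    return CLASS_NAMES[label]

print(label_to_name(9))  # Ankle boot
```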

According to the authors, the Fashion-MNIST data is intended to be a direct drop-in replacement for the old MNIST handwritten digits data, since there were several issues with the handwritten digits. For example, several digits could be told apart correctly just by looking at a few pixels, and even linear classifiers could achieve high classification accuracy. The Fashion-MNIST data promises to be more diverse, so that machine learning (ML) algorithms have to learn more advanced features in order to separate the individual classes reliably.

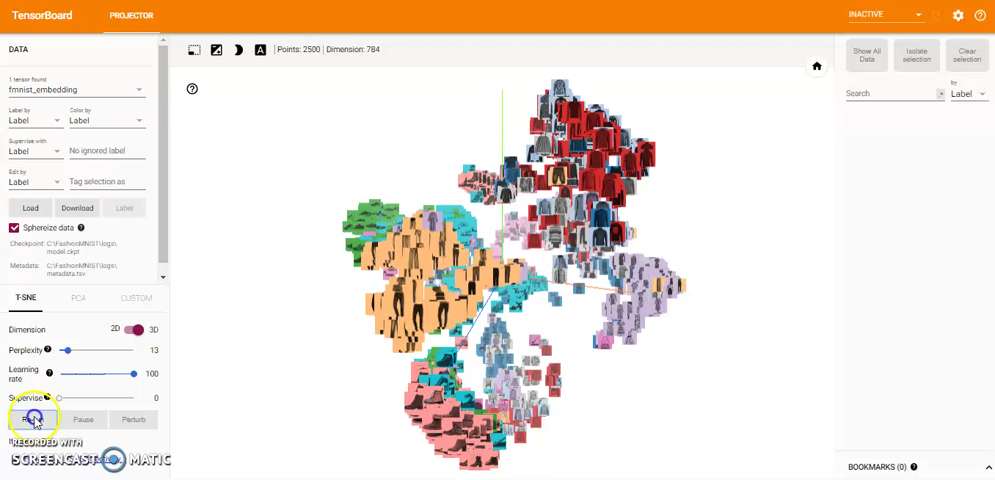

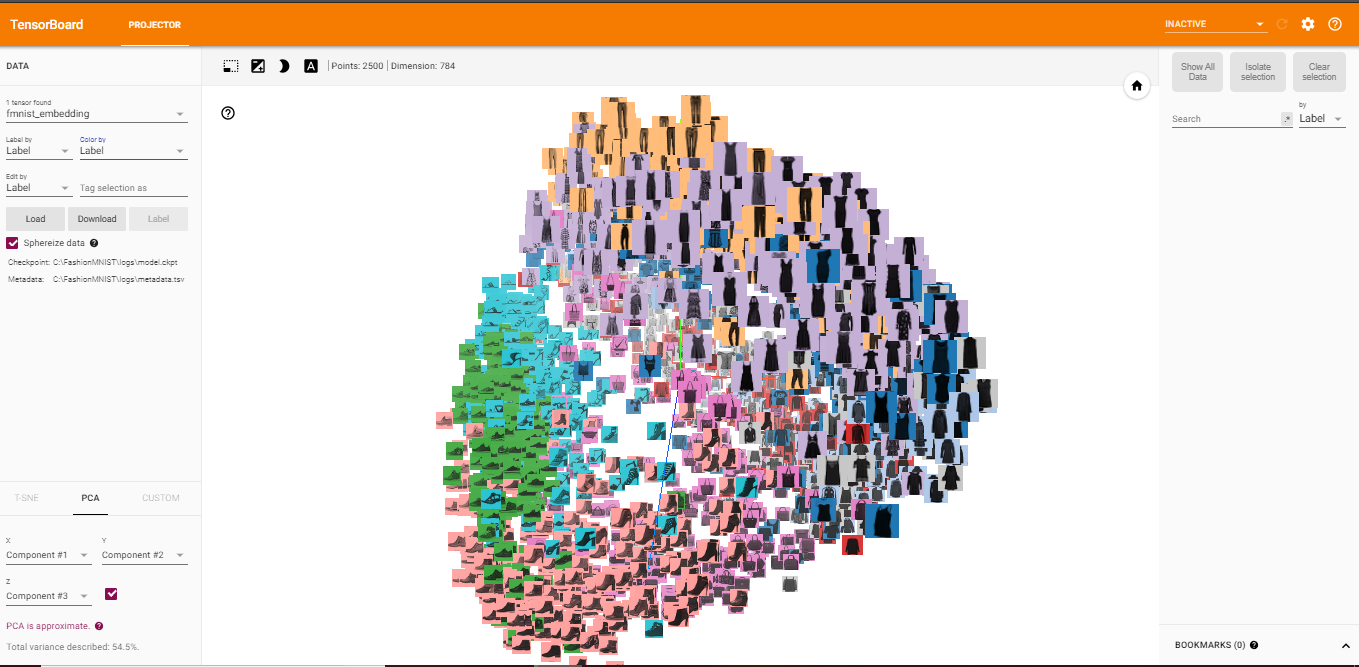

Embedding Visualization of Fashion MNIST

An embedding is a way to map discrete objects (images, words, etc.) to high-dimensional vectors. The individual dimensions in these vectors typically have no inherent meaning. Instead, it’s the overall patterns of location and distance between vectors that machine learning takes advantage of. Embeddings are therefore important as input to machine learning, since classifiers, and neural networks more generally, work on vectors of real numbers. They train best on dense vectors, where all values contribute to defining an object.

TensorBoard has a built-in visualizer, called the Embedding Projector, for interactive visualization and analysis of high-dimensional data like embeddings. The embedding projector will read the embeddings from my model checkpoint file. Although it’s most useful for embeddings, it will load any 2D tensor, including my training weights.

Here, I’ll attempt to represent the high-dimensional Fashion MNIST data using TensorBoard. After reading the data and creating the test labels, I use this code to build TensorBoard’s Embedding Projector:
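Whatever route is used, the projector needs two things: the vectors themselves and one label per vector. As a rough illustration of that pairing (this is not the checkpoint-based route described above), the standalone Embedding Projector also accepts plain tab-separated files, so a minimal sketch could write them out like this. The function name `write_projector_files`, the file names and the tiny stand-in data are all assumptions made for the example:

```python
import csv
import os
import tempfile

def write_projector_files(vectors, labels, out_dir):
    """Write vectors.tsv (one vector per row) and metadata.tsv (one label per row)."""
    if len(vectors) != len(labels):
        raise ValueError("need exactly one label per vector")
    vec_path = os.path.join(out_dir, "vectors.tsv")
    meta_path = os.path.join(out_dir, "metadata.tsv")
    with open(vec_path, "w", newline="") as f:
        writer = csv.writer(f, delimiter="\t", lineterminator="\n")
        for vec in vectors:
            writer.writerow(vec)
    # A single-column metadata file is written without a header row.
    with open(meta_path, "w", newline="") as f:
        for label in labels:
            f.write(f"{label}\n")
    return vec_path, meta_path

# Stand-in data: three flattened "images" of four pixels each (hypothetical).
vectors = [[0.0, 0.5, 1.0, 0.0], [1.0, 1.0, 0.0, 0.0], [0.2, 0.2, 0.2, 0.2]]
labels = ["Sandal", "Bag", "Coat"]
out_dir = tempfile.mkdtemp()
vec_path, meta_path = write_projector_files(vectors, labels, out_dir)
```

With real data, each row of `vectors` would be one flattened 28x28 test image and each label the matching class name.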

The Embedding Projector has three methods of reducing the dimensionality of a data set: two linear and one nonlinear. Each method can be used to create either a two- or three-dimensional view.

Principal Component Analysis: A straightforward technique for reducing dimensions is Principal Component Analysis (PCA). The Embedding Projector computes the top 10 principal components. The menu lets me project those components onto any combination of two or three. PCA is a linear projection, often effective at examining global geometry.
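To make the idea concrete, here is a minimal NumPy sketch of PCA through the singular value decomposition. It is not the Embedding Projector's own implementation; the function name `pca_project` and the random stand-in data are assumptions for illustration:

```python
import numpy as np

def pca_project(data, n_components=10):
    """Project the rows of `data` onto their top principal components via SVD."""
    centered = data - data.mean(axis=0)
    # Rows of vt are the principal directions, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

rng = np.random.default_rng(0)
fake_images = rng.random((200, 28 * 28))   # stand-in for flattened images
components = pca_project(fake_images, n_components=10)
view_2d = components[:, [0, 1]]            # pick any two components to plot
print(components.shape, view_2d.shape)     # (200, 10) (200, 2)
```

Choosing two or three columns of `components` corresponds to picking which principal components to show in a 2D or 3D view.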

t-SNE: A popular non-linear dimensionality reduction technique is t-SNE. The Embedding Projector offers both two- and three-dimensional t-SNE views. Layout is performed client-side, animating every step of the algorithm. Because t-SNE often preserves some local structure, it is useful for exploring local neighborhoods and finding clusters.

Custom: I can also construct specialized linear projections based on text searches for finding meaningful directions in space. To define a projection axis, enter two search strings or regular expressions. The program computes the centroids of the sets of points whose labels match these searches, and uses the difference vector between centroids as a projection axis.
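The centroid-difference idea can be sketched in a few lines of plain Python. This is only an illustration of the description above, not the projector's code; the helper names and the toy 2D vectors are hypothetical, and details such as whether the axis is normalized are left out:

```python
import re

def centroid(points):
    """Mean of a non-empty list of equal-length vectors."""
    n = len(points)
    return [sum(col) / n for col in zip(*points)]

def custom_axis(vectors, labels, pattern_a, pattern_b):
    """Difference between the centroids of points whose labels match each pattern."""
    group_a = [v for v, lab in zip(vectors, labels) if re.search(pattern_a, lab)]
    group_b = [v for v, lab in zip(vectors, labels) if re.search(pattern_b, lab)]
    if not group_a or not group_b:
        raise ValueError("each pattern must match at least one label")
    ca, cb = centroid(group_a), centroid(group_b)
    return [b - a for a, b in zip(ca, cb)]

def project(vector, axis):
    """Scalar coordinate of `vector` along `axis` (a dot product)."""
    return sum(v * a for v, a in zip(vector, axis))

vectors = [[0.0, 0.0], [0.0, 2.0], [4.0, 0.0], [4.0, 2.0]]
labels = ["Sneaker", "Sandal", "Coat", "Pullover"]
axis = custom_axis(vectors, labels, r"^S", r"Coat|Pullover")
print(axis)  # [4.0, 0.0]
```

Here the first pattern selects the two footwear points and the second the two outerwear points, so the resulting axis runs from one group's centroid to the other's.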

You can view the full code for the visualization steps at this notebook: TensorBoard-Visualization.ipynb

Training CNN Models on Fashion MNIST

Let’s now move to the fun part: I will create a variety of different CNN-based classification models and evaluate their performance on Fashion MNIST. I will be building my models using the Keras framework. For more information on the framework, you can refer to the documentation here. Here is the list of models I will try out and compare:

- CNN with 1 Convolutional Layer

- CNN with 3 Convolutional Layers

- CNN with 4 Convolutional Layers

- VGG-19 Pre-Trained Model
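To get a feel for what each extra convolutional layer adds, the output size and parameter count of a layer can be worked out by hand. The sketch below uses the standard formulas; the layer it evaluates (32 filters of size 3x3, no padding) is a hypothetical example, not necessarily the configuration used in my notebooks:

```python
def conv2d_output_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1

def conv2d_param_count(in_channels, out_channels, kernel):
    """Weights plus one bias per filter, for a square kernel."""
    return (kernel * kernel * in_channels + 1) * out_channels

# Hypothetical first layer: 32 filters of 3x3 on a 28x28 grayscale image.
out = conv2d_output_size(28, kernel=3)
params = conv2d_param_count(in_channels=1, out_channels=32, kernel=3)
print(out, params)  # 26 320
```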

For all the models (except for the pre-trained one), here is my approach:

- Split the original training data (60,000 images) into 80% training (48,000 images) and 20% validation (12,000 images) to optimize the classifier, while keeping the test data (10,000 images) to finally evaluate the accuracy of the model on data it has never seen. This helps me see whether I’m over-fitting on the training data: if validation accuracy is higher than training accuracy, I can lower the learning rate and train for more epochs; if training accuracy climbs above validation accuracy, I should stop before over-training.

- Train the model for 10 epochs with a batch size of 256, compiled with the categorical_crossentropy loss function and the Adam optimizer.

- Then, add data augmentation, which generates new training samples by rotating, shifting and zooming on the training samples, and train the model on updated data for another 50 epochs.
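To show what "generating new samples" means, here is a minimal pure-Python sketch of just the shifting part of augmentation (rotation and zoom are left out). It is a stand-in for illustration, not the augmentation utility used in my notebooks; `shift_image` and `random_shift` are hypothetical helpers:

```python
import random

def shift_image(image, dx, dy, fill=0):
    """Shift a 2D list-of-lists image by (dx, dy) pixels, padding with `fill`."""
    h, w = len(image), len(image[0])
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w:
                out[ny][nx] = image[y][x]
    return out

def random_shift(image, max_shift, rng):
    """Apply a random shift of up to `max_shift` pixels in each direction."""
    dx = rng.randint(-max_shift, max_shift)
    dy = rng.randint(-max_shift, max_shift)
    return shift_image(image, dx, dy)

image = [[1, 2], [3, 4]]
print(shift_image(image, dx=1, dy=0))  # [[0, 1], [0, 3]]
augmented = random_shift(image, max_shift=1, rng=random.Random(0))
```

Each call to `random_shift` yields a slightly different copy of the same image, which keeps its original label.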

Here’s the code to load and split the data:
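A minimal sketch of the 80/20 split, working on indices only so that it runs without the dataset. The function name `split_indices` and the seed are assumptions; in practice a library helper such as scikit-learn's `train_test_split` does the same job, and the images themselves could be loaded with, for example, `keras.datasets.fashion_mnist.load_data()`:

```python
import random

def split_indices(n_samples, val_fraction=0.2, seed=42):
    """Shuffle indices 0..n_samples-1 and split them into train and validation sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_val = int(round(n_samples * val_fraction))
    return indices[n_val:], indices[:n_val]

# 60,000 original training images -> 48,000 for training, 12,000 for validation.
train_idx, val_idx = split_indices(60_000, val_fraction=0.2)
print(len(train_idx), len(val_idx))  # 48000 12000
```

The two index lists can then be used to select the matching images and labels from the original training arrays.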

After loading and splitting the data, I preprocess them by reshaping them into the shape the network expects and scaling them so that all values are in the [0, 1] interval. Previously, for instance, the training data were stored in an array of shape (60000, 28, 28) of type uint8 with values in the [0, 255] interval. I transform it into a float32 array of shape (60000, 28 * 28) with values between 0 and 1.
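The transformation described above can be sketched with NumPy as follows. The function name `preprocess` and the random stand-in images are assumptions for illustration. Note that Keras convolutional layers expect a channel axis, so for the CNNs the target shape would be `(n, 28, 28, 1)` rather than the flattened one:

```python
import numpy as np

def preprocess(images):
    """Flatten (n, 28, 28) uint8 images to (n, 784) float32 values in [0, 1]."""
    n = images.shape[0]
    flat = images.reshape(n, 28 * 28)
    # For a Conv2D input, images.reshape(n, 28, 28, 1) would be used instead.
    return flat.astype("float32") / 255.0

rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(5, 28, 28), dtype=np.uint8)  # stand-in data
x = preprocess(raw)
print(x.shape, x.dtype)  # (5, 784) float32
```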

1 — 1-Conv CNN

Here’s the code for the CNN with 1 Convolutional Layer:

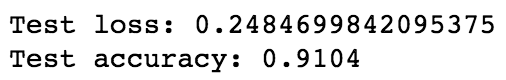

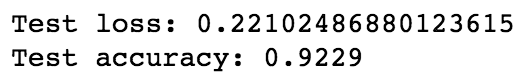

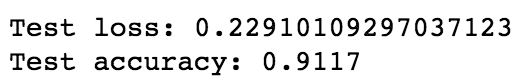

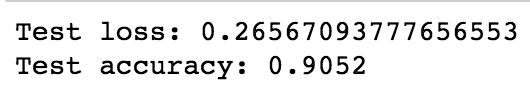

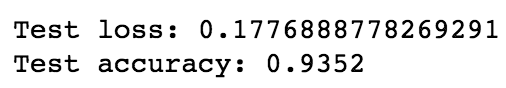

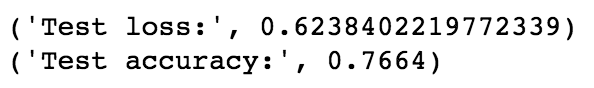

After training the model, here’s the test loss and test accuracy:

After applying data augmentation, here’s the test loss and test accuracy:

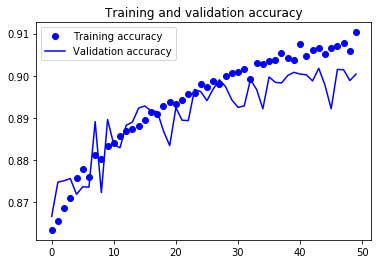

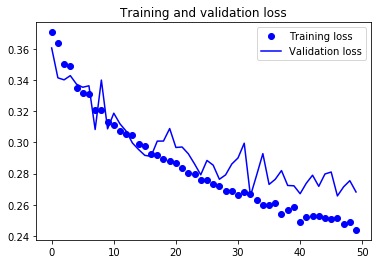

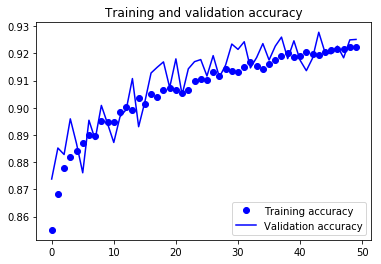

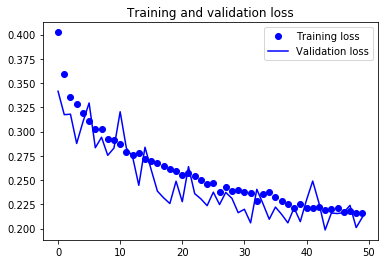

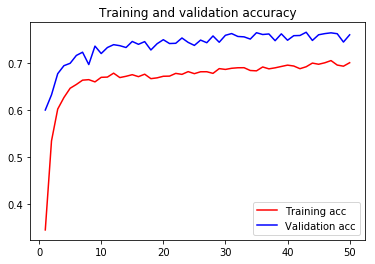

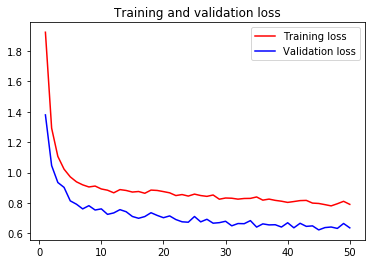

To visualize the training process, I plot the training and validation accuracy and loss:

You can view the full code for this model at this notebook: CNN-1Conv.ipynb

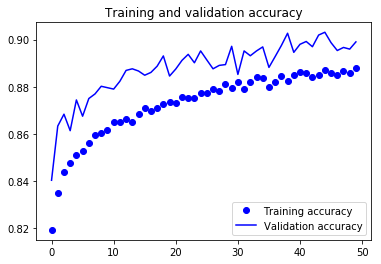

2 — 3-Conv CNN

Here’s the code for the CNN with 3 Convolutional Layers:

After training the model, here’s the test loss and test accuracy:

After applying data augmentation, here’s the test loss and test accuracy:

To visualize the training process, I plot the training and validation accuracy and loss:

You can view the full code for this model at this notebook: CNN-3Conv.ipynb

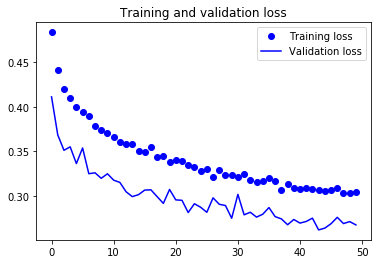

3 — 4-Conv CNN

Here’s the code for the CNN with 4 Convolutional Layers:

After training the model, here’s the test loss and test accuracy:

After applying data augmentation, here’s the test loss and test accuracy:

To visualize the training process, I plot the training and validation accuracy and loss:

You can view the full code for this model at this notebook: CNN-4Conv.ipynb

4 — Transfer Learning

A common and highly effective approach to deep learning on small image datasets is to use a pre-trained network. A pre-trained network is a saved network that was previously trained on a large dataset, typically on a large-scale image-classification task. If this original dataset is large enough and general enough, then the spatial hierarchy of features learned by the pre-trained network can effectively act as a generic model of the visual world, and hence its features can prove useful for many different computer-vision problems, even though these new problems may involve completely different classes than those of the original task.

I tried the pre-trained VGG19 model, a widely used ConvNet architecture trained on ImageNet. Here’s the code you can follow:
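One practical detail: the Keras VGG19 application expects three-channel inputs no smaller than 32x32, while Fashion MNIST images are 28x28 grayscale. A minimal sketch of one way to bridge that gap is to zero-pad each image and repeat the gray channel. Padding to 32x32 is my assumption for the example (resizing to a larger size is another option), and `to_vgg_input` is a hypothetical helper:

```python
import numpy as np

def to_vgg_input(images, target=32):
    """Zero-pad (n, 28, 28) grayscale images to (n, target, target, 3)."""
    n, h, w = images.shape
    pad_h, pad_w = target - h, target - w
    if pad_h < 0 or pad_w < 0:
        raise ValueError("target must be at least as large as the input images")
    padded = np.pad(
        images,
        ((0, 0), (pad_h // 2, pad_h - pad_h // 2), (pad_w // 2, pad_w - pad_w // 2)),
        mode="constant",
    )
    # Repeat the single gray channel three times to mimic an RGB image.
    return np.repeat(padded[..., np.newaxis], 3, axis=-1)

batch = np.zeros((4, 28, 28), dtype="float32")  # stand-in for real images
print(to_vgg_input(batch).shape)  # (4, 32, 32, 3)
```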

After training the model, here’s the test loss and test accuracy:

To visualize the training process, I plot the training and validation accuracy and loss:

You can view the full code for this model at this notebook: VGG19-GPU.ipynb

Last Takeaway

The fashion domain is a very popular playground for applications of machine learning and computer vision. The problems in this domain are challenging due to the high level of subjectivity and the semantic complexity of the features involved. I hope that this post has been helpful for you to learn about the 4 different approaches to building your own convolutional neural networks to classify fashion images. You can view all the source code in my GitHub repo at this link. Let me know if you have any questions or suggestions for improvement!

You can find my own code on GitHub, and more of my writing and projects at https://jameskle.com/.