tensorflow-神經網路識別手寫數字

阿新 • • 發佈:2019-01-12

- 資料下載連線:http://yann.lecun.com/exdb/mnist/

- 下載t10k-images-idx3-ubyte.gz;t10k-labels-idx1-ubyte.gz;train-images-idx3-ubyte.gz;train-labels-idx1-ubyte.gz

- 簡單神經網路識別手寫數字

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

# 資料下載連線:http://yann.lecun.com/exdb/mnist/

# 下載t10k-images-idx3-ubyte.gz;t10k-labels-idx1-ubyte.gz;train-images-idx3-ubyte.gz;train-labels-idx1-ubyte.gz - 卷積神經網路識別手寫數字

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

def weight_variables(shape):

'''

初始化權重

:param shape:

:return: w 初始化的權重

'''

w = tf.Variable(tf.random_normal(shape=shape, mean=0.0, stddev=1.0))

return w

def bias_variables(shape):

'''

初始化偏置

:param shape:

:return: b 初始化的偏置

'''

b = tf.Variable(tf.constant(0.1, shape=shape))

return b

def model():

'''

自定義卷積模型

一卷積層:32個filter,5*5,strides=1,padding="SAME"; 池化:2*2, strides=2,padding="SAME"

二卷積層:64個filter,5*5,strides=1,padding="SAME";池化:2*2, strides=2

:return: None

'''

# 1. 準備資料佔位符 x[None, 784] y_true[None, 10]

with tf.variable_scope("data"):

x = tf.placeholder(tf.float32, [None, 784])

y_true = tf.placeholder(tf.int32, [None, 10])

# 2. 一卷積層, 卷積、啟用、池化

with tf.variable_scope("conv1"):

# 隨機初始化權重, 偏置

w_conv1 = weight_variables([5,5,1,32])

b_conv1 = bias_variables([32])

# 對x改變形狀[None,784] --> [None, 28, 28, 1]

x_reshape = tf.reshape(x, [-1, 28,28,1])

# 卷積+啟用 [None, 28, 28, 1] --> [None, 28, 28, 32]

x_relu1 = tf.nn.relu(tf.nn.conv2d(x_reshape, w_conv1, strides=[1,1,1,1], padding="SAME") + b_conv1)

# 池化 2*2 [None, 28, 28, 32] --> [None, 14, 14, 32]

x_pool1 = tf.nn.max_pool(x_relu1, ksize=[1,2,2,1], strides=[1,2,2,1], padding="SAME")

# 3. 二卷積層

with tf.variable_scope("conv2"):

# 隨機初始化權重, 偏置

w_conv2 = weight_variables([5, 5, 32, 64])

b_conv2 = bias_variables([64])

# 卷積+啟用 [None, 14, 14, 32] --> [None, 14, 14, 64]

x_relu2 = tf.nn.relu(tf.nn.conv2d(x_pool1, w_conv2, strides=[1, 1, 1, 1], padding="SAME") + b_conv2)

# 池化 2*2 [None, 14, 14, 64] --> [None, 7, 7, 64]

x_pool2 = tf.nn.max_pool(x_relu2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

# 4. 全連線層 [None,7,7,64] --> [None,7*7*64] * [7*7*64,10] + [10] = [None,10]

with tf.variable_scope("fc"):

# 隨機初始化權重, 偏置

w_fc = weight_variables([7*7*64, 10])

b_fc = bias_variables([10])

# 修改x_pool2形狀

x_fc_reshape = tf.reshape(x_pool2, [-1, 7*7*64])

# 矩陣運算得出每個樣本得10個結果

y_predict = tf.matmul(x_fc_reshape, w_fc) + b_fc

return x, y_true, y_predict

def conv_fc():

# 1. 獲取真實資料

mnist = input_data.read_data_sets("./data/mnist/", one_hot=True)

# 2. 定義模型,獲得輸出

x, y_true, y_predict = model()

# 3. 求出所有樣本的損失,然後求平均值

with tf.variable_scope("soft_cross"):

# 求平均交叉熵損失

loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_true, logits=y_predict))

# 4. 梯度下降求出損失

with tf.variable_scope("optimazer"):

train_op = tf.train.GradientDescentOptimizer(0.0001).minimize(loss)

# 5. 計算準確率

with tf.variable_scope("acc"):

equal_list = tf.equal(tf.arg_max(y_true, 1), tf.arg_max(y_predict, 1))

accuracy = tf.reduce_mean(tf.cast(equal_list, tf.float32))

# 定義一個初始化變數的op

init_op = tf.global_variables_initializer()

# 開啟會話

with tf.Session() as sess:

sess.run(init_op)

# 迴圈訓練

for i in range(3000):

# 取出真實資料中得特徵值和目標值

mnist_x, mnist_y = mnist.train.next_batch(50)

sess.run(train_op, feed_dict={x: mnist_x, y_true: mnist_y})

print("訓練第 %d 步,準確率為:%f " % (i, sess.run(accuracy, feed_dict={x: mnist_x, y_true: mnist_y})))

if __name__ == '__main__':

conv_fc()

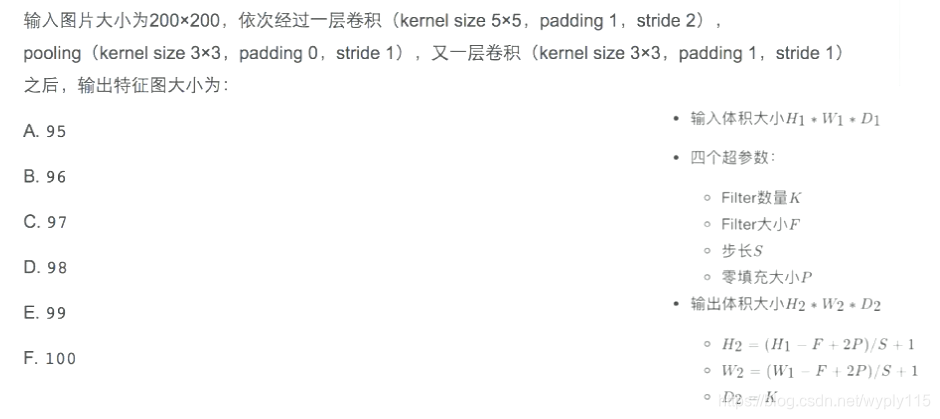

- 一到筆試題

計算過程(通道對輸出不影響):

- 經過一層卷積:長,H2 = (200 - 5 + 2*1)/2 +1 = 99.5 (這裡不是整數是需要自己分析卷積過程,步長為2,0.5步就是1,因為padding=1,padding是填充的0無需觀察,因此結果就是99);長寬一樣,因此不在計算寬。

- 經過pooling,H2 = (99 - 3 + 2*0)/1 +1 = 97

- 又經過一層卷積:H2 = (97 - 3 + 2*1)/1 +1 = 97,因此最終圖片大小輸出為97*97

因此答案是:C. 97