Python, TensorFlow: training a CNN on the MNIST dataset and checking its accuracy

阿新 • Published: 2019-01-12

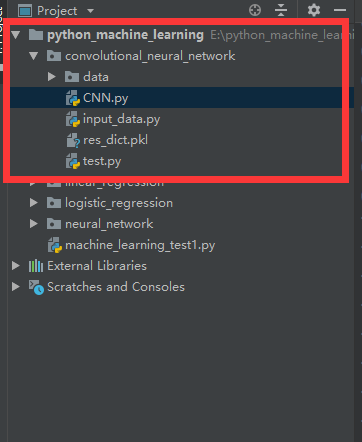

1. Project directory

2. Import the data folder and input_data.py

Link: https://pan.baidu.com/s/1EBNyNurBXWeJVyhNeVnmnA

Extraction code: 4nnl

3. CNN.py

import tensorflow as tf
import matplotlib.pyplot as plt
import input_data

# Load MNIST with one-hot labels from the local data/ folder.
mnist = input_data.read_data_sets('data/', one_hot=True)
trainimg = mnist.train.images
trainlabel = mnist.train.labels
testimg = mnist.test.images
testlabel = mnist.test.labels
print('MNIST ready')

n_input = 784   # 28 * 28 pixels per image
n_output = 10   # digit classes 0-9

# Two 3x3 conv layers (64 and 128 filters) followed by two dense layers.
weights = {
    'wc1': tf.Variable(tf.truncated_normal([3, 3, 1, 64], stddev=0.1)),
    'wc2': tf.Variable(tf.truncated_normal([3, 3, 64, 128], stddev=0.1)),
    'wd1': tf.Variable(tf.truncated_normal([7*7*128, 1024], stddev=0.1)),
    'wd2': tf.Variable(tf.truncated_normal([1024, n_output], stddev=0.1)),
}

biases = {
    'bc1': tf.Variable(tf.random_normal([64], stddev=0.1)),
    'bc2': tf.Variable(tf.random_normal([128], stddev=0.1)),
    'bd1': tf.Variable(tf.random_normal([1024], stddev=0.1)),
    'bd2': tf.Variable(tf.random_normal([n_output], stddev=0.1)),
}

def conv_basic(_input, _w, _b, _keepratio):
    # Reshape flat 784-vectors into 28x28 single-channel images.
    _input_r = tf.reshape(_input, shape=[-1, 28, 28, 1])
    # Conv layer 1: conv -> bias + ReLU -> 2x2 max-pool -> dropout.
    _conv1 = tf.nn.conv2d(_input_r, _w['wc1'], strides=[1, 1, 1, 1], padding='SAME')
    _conv1 = tf.nn.relu(tf.nn.bias_add(_conv1, _b['bc1']))
    _pool1 = tf.nn.max_pool(_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
    _pool_dr1 = tf.nn.dropout(_pool1, _keepratio)
    # Conv layer 2: same pattern with 128 filters.
    _conv2 = tf.nn.conv2d(_pool_dr1, _w['wc2'], strides=[1, 1, 1, 1], padding='SAME')
    _conv2 = tf.nn.relu(tf.nn.bias_add(_conv2, _b['bc2']))
    _pool2 = tf.nn.max_pool(_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
    _pool_dr2 = tf.nn.dropout(_pool2, _keepratio)
    # Flatten to match the first dimension of wd1 (7*7*128).
    _densel = tf.reshape(_pool_dr2, [-1, _w['wd1'].get_shape().as_list()[0]])
    # Fully connected layer 1 with ReLU and dropout.
    _fc1 = tf.nn.relu(tf.add(tf.matmul(_densel, _w['wd1']), _b['bd1']))
    _fc_dr1 = tf.nn.dropout(_fc1, _keepratio)
    # Output layer: raw logits, no softmax here.
    _out = tf.add(tf.matmul(_fc_dr1, _w['wd2']), _b['bd2'])
    out = {
        'input_r': _input_r, 'conv1': _conv1, 'pool1': _pool1, 'pool_dr1': _pool_dr1,
        'conv2': _conv2, 'pool2': _pool2, 'pool_dr2': _pool_dr2, 'densel': _densel,
        'fc1': _fc1, 'fc_dr1': _fc_dr1, 'out': _out
    }
    return out

print('CNN READY')

x = tf.placeholder(tf.float32, [None, n_input])
y = tf.placeholder(tf.float32, [None, n_output])
keepratio = tf.placeholder(tf.float32)

_pred = conv_basic(x, weights, biases, keepratio)['out']
# TensorFlow 1.x requires keyword arguments for this loss function.
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=_pred, labels=y))
optm = tf.train.AdamOptimizer(learning_rate=0.01).minimize(cost)
# Accuracy: share of samples whose arg-max prediction matches the label.
_corr = tf.equal(tf.argmax(_pred, 1), tf.argmax(y, 1))
accr = tf.reduce_mean(tf.cast(_corr, tf.float32))
init = tf.global_variables_initializer()
print('GRAPH READY')

sess = tf.Session()
sess.run(init)

training_epochs = 15
batch_size = 16
display_step = 1

for epoch in range(training_epochs):
    avg_cost = 0.
    # Only 10 mini-batches per epoch, so each epoch sees 160 images.
    total_batch = 10
    for i in range(total_batch):
        batch_xs, batch_ys = mnist.train.next_batch(batch_size)
        # Train with dropout (keep probability 0.7).
        sess.run(optm, feed_dict={x: batch_xs, y: batch_ys, keepratio: 0.7})
        # Measure the cost with dropout disabled.
        avg_cost += sess.run(cost, feed_dict={x: batch_xs, y: batch_ys, keepratio: 1.0})/total_batch
    if epoch % display_step == 0:
        print('Epoch: %03d/%03d cost: %.9f' % (epoch, training_epochs, avg_cost))
        # Accuracy on the last mini-batch of this epoch.
        train_acc = sess.run(accr, feed_dict={x: batch_xs, y: batch_ys, keepratio: 1.})
        print('Training accuracy: %.3f' % (train_acc))

# Export the trained parameters as NumPy arrays for test.py.
res_dict = {'weight': sess.run(weights), 'biases': sess.run(biases)}

import pickle
with open('res_dict.pkl', 'wb') as f:
    pickle.dump(res_dict, f, pickle.HIGHEST_PROTOCOL)
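
The first dimension of `wd1`, `7*7*128`, follows from the layer shapes in `conv_basic`: the stride-1 `SAME` convolutions keep the spatial size, and each stride-2 `SAME` max-pool halves it (rounding up), so 28 becomes 14 and then 7. A minimal sketch of that arithmetic in plain Python, where `same_out` and `flattened_size` are hypothetical helper names and not part of TensorFlow:

```python
import math

def same_out(size, stride):
    """Output length along one axis for SAME padding: ceil(size / stride)."""
    return math.ceil(size / stride)

def flattened_size(side=28, channels=(64, 128)):
    """Trace the spatial side through conv (stride 1) + pool (stride 2) per layer."""
    for _ in channels:
        side = same_out(side, 1)  # 3x3 conv, stride 1, SAME: size unchanged
        side = same_out(side, 2)  # 2x2 max-pool, stride 2, SAME: size halved
    # The flattened vector has side * side positions times the last channel count.
    return side * side * channels[-1]

print(flattened_size())  # 7 * 7 * 128 = 6272
```

If the filter counts or the number of pooling layers change, the first dimension of `wd1` would need to change accordingly.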

4. test.py

import pickle
import numpy as np

def load_file(path, name):
    # Load a pickled object from <path><name>.pkl
    with open(path + name + '.pkl', 'rb') as f:
        return pickle.load(f)

# Weights and biases saved by CNN.py (NumPy arrays keyed by layer name).
res_dict = load_file('', 'res_dict')
print(res_dict['weight']['wc1'])
index = 0

import input_data
mnist = input_data.read_data_sets('data/', one_hot=True)
test_image = mnist.test.images
test_label = mnist.test.labels

import tensorflow as tf

def conv_basic(_input, _w, _b, _keepratio):
    # Same network as in CNN.py; here _w and _b hold NumPy arrays, not tf.Variables.
    _input_r = tf.reshape(_input, shape=[-1, 28, 28, 1])
    _conv1 = tf.nn.conv2d(_input_r, _w['wc1'], strides=[1, 1, 1, 1], padding='SAME')
    _conv1 = tf.nn.relu(tf.nn.bias_add(_conv1, _b['bc1']))
    _pool1 = tf.nn.max_pool(_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
    _pool_dr1 = tf.nn.dropout(_pool1, _keepratio)
    _conv2 = tf.nn.conv2d(_pool_dr1, _w['wc2'], strides=[1, 1, 1, 1], padding='SAME')
    _conv2 = tf.nn.relu(tf.nn.bias_add(_conv2, _b['bc2']))
    _pool2 = tf.nn.max_pool(_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
    _pool_dr2 = tf.nn.dropout(_pool2, _keepratio)
    # NumPy arrays expose .shape directly, so get_shape() is not needed here.
    _densel = tf.reshape(_pool_dr2, [-1, _w['wd1'].shape[0]])
    _fc1 = tf.nn.relu(tf.add(tf.matmul(_densel, _w['wd1']), _b['bd1']))
    _fc_dr1 = tf.nn.dropout(_fc1, _keepratio)
    _out = tf.add(tf.matmul(_fc_dr1, _w['wd2']), _b['bd2'])
    out = {
        'input_r': _input_r, 'conv1': _conv1, 'pool1': _pool1, 'pool_dr1': _pool_dr1,
        'conv2': _conv2, 'pool2': _pool2, 'pool_dr2': _pool_dr2, 'densel': _densel,
        'fc1': _fc1, 'fc_dr1': _fc_dr1, 'out': _out
    }
    return out

n_input = 784
n_output = 10

x = tf.placeholder(tf.float32, [None, n_input])
y = tf.placeholder(tf.float32, [None, n_output])
keepratio = tf.placeholder(tf.float32)

# Build the graph from the saved parameters instead of fresh variables.
_pred = conv_basic(x, res_dict['weight'], res_dict['biases'], keepratio)['out']
# TensorFlow 1.x requires keyword arguments for this loss function.
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=_pred, labels=y))
_corr = tf.equal(tf.argmax(_pred, 1), tf.argmax(y, 1))
accr = tf.reduce_mean(tf.cast(_corr, tf.float32))
init = tf.global_variables_initializer()

sess = tf.Session()
sess.run(init)

training_epochs = 1
batch_size = 1
display_step = 1

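for epoch in range(training_epochs):
    avg_cost = 0.
    total_batch = 10
    for i in range(total_batch):
        # Note: samples are drawn from the training split, as in the original;
        # mnist.test.next_batch(batch_size) would draw from the test split instead.
        batch_xs, batch_ys = mnist.train.next_batch(batch_size)
        if epoch % display_step == 0:
            # keepratio 1.0 disables dropout at inference time.
            print('_pre:', np.argmax(sess.run(_pred, feed_dict={x: batch_xs, keepratio: 1. })))
            print('answer:', np.argmax(batch_ys))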
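
The loop above prints individual predictions next to their labels. The accuracy that `accr` computes in CNN.py can also be reproduced outside the graph. The following is a rough sketch with NumPy, assuming `logits` is an array shaped like the result of `sess.run(_pred, ...)` and `labels` is one-hot encoded; the function name `accuracy` and the toy arrays are illustrative only and do not come from the original post:

```python
import numpy as np

def accuracy(logits, labels):
    """Fraction of rows whose arg-max class matches the one-hot label."""
    predicted = np.argmax(logits, axis=1)
    expected = np.argmax(labels, axis=1)
    return float(np.mean(predicted == expected))

# Toy data: 4 samples, 3 classes (made-up numbers, not MNIST results).
logits = np.array([[2.0, 0.1, 0.3],
                   [0.2, 1.5, 0.1],
                   [0.3, 0.2, 0.9],
                   [1.2, 0.4, 0.8]])
labels = np.eye(3)[[0, 1, 2, 2]]  # one-hot rows for classes 0, 1, 2, 2

print(accuracy(logits, labels))  # 0.75: the last sample is misclassified
```

In test.py the same function could be applied to the logits for `test_image` together with `test_label`, probably in smaller batches to limit memory use.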