詳解tensorflow訓練自己的資料集實現CNN影象分類

利用卷積神經網路訓練影象資料分為以下幾個步驟

1.讀取圖片檔案

2.產生用於訓練的批次

3.定義訓練的模型(包括初始化引數,卷積、池化層等引數、網路)

4.訓練

1 讀取圖片檔案

def get_files(filename): class_train = [] label_train = [] for train_class in os.listdir(filename): for pic in os.listdir(filename+train_class): class_train.append(filename+train_class+'/'+pic) label_train.append(train_class) temp = np.array([class_train,label_train]) temp = temp.transpose() #shuffle the samples np.random.shuffle(temp) #after transpose, images is in dimension 0 and label in dimension 1 image_list = list(temp[:,0]) label_list = list(temp[:,1]) label_list = [int(i) for i in label_list] #print(label_list) return image_list,label_list

這裡檔名作為標籤,即類別(其資料型別要確定,後面要轉為tensor型別資料)。

然後將image和label轉為list格式資料,因為後邊用到的的一些tensorflow函式接收的是list格式資料。

2 產生用於訓練的批次

def get_batches(image,label,resize_w,resize_h,batch_size,capacity): #convert the list of images and labels to tensor image = tf.cast(image,tf.string) label = tf.cast(label,tf.int64) queue = tf.train.slice_input_producer([image,label]) label = queue[1] image_c = tf.read_file(queue[0]) image = tf.image.decode_jpeg(image_c,channels = 3) #resize image = tf.image.resize_image_with_crop_or_pad(image,resize_w,resize_h) #(x - mean) / adjusted_stddev image = tf.image.per_image_standardization(image) image_batch,label_batch = tf.train.batch([image,label], batch_size = batch_size, num_threads = 64, capacity = capacity) images_batch = tf.cast(image_batch,tf.float32) labels_batch = tf.reshape(label_batch,[batch_size]) return images_batch,labels_batch

首先使用tf.cast轉化為tensorflow資料格式,使用tf.train.slice_input_producer實現一個輸入的佇列。

label不需要處理,image儲存的是路徑,需要讀取為圖片,接下來的幾步就是讀取路徑轉為圖片,用於訓練。

CNN對影象大小是敏感的,第10行圖片resize處理為大小一致,12行將其標準化,即減去所有圖片的均值,方便訓練。

接下來使用tf.train.batch函式產生訓練的批次。

最後將產生的批次做資料型別的轉換和shape的處理即可產生用於訓練的批次。

3 定義訓練的模型

(1)訓練引數的定義及初始化

def init_weights(shape): return tf.Variable(tf.random_normal(shape,stddev = 0.01)) #init weights weights = { "w1":init_weights([3,3,3,16]), "w2":init_weights([3,3,16,128]), "w3":init_weights([3,3,128,256]), "w4":init_weights([4096,4096]), "wo":init_weights([4096,2]) } #init biases biases = { "b1":init_weights([16]), "b2":init_weights([128]), "b3":init_weights([256]), "b4":init_weights([4096]), "bo":init_weights([2]) }

CNN的每層是y=wx+b的決策模型,卷積層產生特徵向量,根據這些特徵向量帶入x進行計算,因此,需要定義卷積層的初始化引數,包括權重和偏置。其中第8行的引數形狀後邊再解釋。

(2)定義不同層的操作

def conv2d(x,w,b):

x = tf.nn.conv2d(x,w,strides = [1,1,1,1],padding = "SAME")

x = tf.nn.bias_add(x,b)

return tf.nn.relu(x)

def pooling(x):

return tf.nn.max_pool(x,ksize = [1,2,2,1],strides = [1,2,2,1],padding = "SAME")

def norm(x,lsize = 4):

return tf.nn.lrn(x,depth_radius = lsize,bias = 1,alpha = 0.001/9.0,beta = 0.75)

這裡只定義了三種層,即卷積層、池化層和正則化層

(3)定義訓練模型

def mmodel(images):

l1 = conv2d(images,weights["w1"],biases["b1"])

l2 = pooling(l1)

l2 = norm(l2)

l3 = conv2d(l2,weights["w2"],biases["b2"])

l4 = pooling(l3)

l4 = norm(l4)

l5 = conv2d(l4,weights["w3"],biases["b3"])

#same as the batch size

l6 = pooling(l5)

l6 = tf.reshape(l6,[-1,weights["w4"].get_shape().as_list()[0]])

l7 = tf.nn.relu(tf.matmul(l6,weights["w4"])+biases["b4"])

soft_max = tf.add(tf.matmul(l7,weights["wo"]),biases["bo"])

return soft_max

模型比較簡單,使用三層卷積,第11行使用全連線,需要對特徵向量進行reshape,其中l6的形狀為[-1,w4的第1維的引數],因此,將其按照“w4”reshape的時候,要使得-1位置的大小為batch_size,這樣,最終再乘以“wo”時,最終的輸出大小為[batch_size,class_num]

(4)定義評估量

def loss(logits,label_batches):

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=logits,labels=label_batches)

cost = tf.reduce_mean(cross_entropy)

return cost

首先定義損失函式,這是用於訓練最小化損失的必需量

def get_accuracy(logits,labels):

acc = tf.nn.in_top_k(logits,labels,1)

acc = tf.cast(acc,tf.float32)

acc = tf.reduce_mean(acc)

return acc

評價分類準確率的量,訓練時,需要loss值減小,準確率增加,這樣的訓練才是收斂的。

(5)定義訓練方式

def training(loss,lr):

train_op = tf.train.RMSPropOptimizer(lr,0.9).minimize(loss)

return train_op

有很多種訓練方式,可以自行去官網檢視,但是不同的訓練方式可能對應前面的引數定義不一樣,需要另行處理,否則可能報錯。

4 訓練

def run_training():

data_dir = 'C:/Users/wk/Desktop/bky/dataSet/'

image,label = inputData.get_files(data_dir)

image_batches,label_batches = inputData.get_batches(image,label,32,32,16,20)

p = model.mmodel(image_batches)

cost = model.loss(p,label_batches)

train_op = model.training(cost,0.001)

acc = model.get_accuracy(p,label_batches)

sess = tf.Session()

init = tf.global_variables_initializer()

sess.run(init)

coord = tf.train.Coordinator()

threads = tf.train.start_queue_runners(sess = sess,coord = coord)

try:

for step in np.arange(1000):

print(step)

if coord.should_stop():

break

_,train_acc,train_loss = sess.run([train_op,acc,cost])

print("loss:{} accuracy:{}".format(train_loss,train_acc))

except tf.errors.OutOfRangeError:

print("Done!!!")

finally:

coord.request_stop()

coord.join(threads)

sess.close()

神經網路訓練的時候,我們需要將模型儲存下來,方便後面繼續訓練或者用訓練好的模型進行測試。因此,我們需要建立一個saver儲存模型。

def run_training():

data_dir = 'C:/Users/wk/Desktop/bky/dataSet/'

log_dir = 'C:/Users/wk/Desktop/bky/log/'

image,label = inputData.get_files(data_dir)

image_batches,label_batches = inputData.get_batches(image,label,32,32,16,20)

print(image_batches.shape)

p = model.mmodel(image_batches,16)

cost = model.loss(p,label_batches)

train_op = model.training(cost,0.001)

acc = model.get_accuracy(p,label_batches)

sess = tf.Session()

init = tf.global_variables_initializer()

sess.run(init)

saver = tf.train.Saver()

coord = tf.train.Coordinator()

threads = tf.train.start_queue_runners(sess = sess,coord = coord)

try:

for step in np.arange(1000):

print(step)

if coord.should_stop():

break

_,train_acc,train_loss = sess.run([train_op,acc,cost])

print("loss:{} accuracy:{}".format(train_loss,train_acc))

if step % 100 == 0:

check = os.path.join(log_dir,"model.ckpt")

saver.save(sess,check,global_step = step)

except tf.errors.OutOfRangeError:

print("Done!!!")

finally:

coord.request_stop()

coord.join(threads)

sess.close()

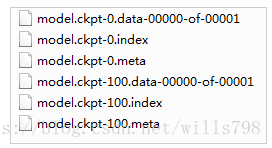

訓練好的模型資訊會記錄在checkpoint檔案中,大致如下:

model_checkpoint_path: "C:/Users/wk/Desktop/bky/log/model.ckpt-100"

all_model_checkpoint_paths: "C:/Users/wk/Desktop/bky/log/model.ckpt-0"

all_model_checkpoint_paths: "C:/Users/wk/Desktop/bky/log/model.ckpt-100"

其餘還會生成一些檔案,分別記錄了模型引數等資訊,後邊測試的時候程式會讀取checkpoint檔案去載入這些真正的資料檔案

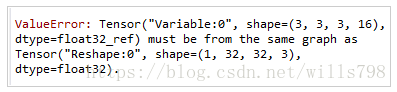

構建好神經網路進行訓練完成後,如果用之前的程式碼直接進行測試,會報shape不符合的錯誤,大致是卷積層的輸入與影象的shape不一致,這是因為上篇的程式碼,將weights和biases定義在了模型的外面,呼叫模型的時候,出現valueError的錯誤。

因此,我們需要將引數定義在模型裡面,載入訓練好的模型引數時,訓練好的引數才能夠真正初始化模型。重寫模型函式如下

def mmodel(images,batch_size):

with tf.variable_scope('conv1') as scope:

weights = tf.get_variable('weights',

shape = [3,3,3, 16],

dtype = tf.float32,

initializer=tf.truncated_normal_initializer(stddev=0.1,dtype=tf.float32))

biases = tf.get_variable('biases',

shape=[16],

dtype=tf.float32,

initializer=tf.constant_initializer(0.1))

conv = tf.nn.conv2d(images, weights, strides=[1,1,1,1], padding='SAME')

pre_activation = tf.nn.bias_add(conv, biases)

conv1 = tf.nn.relu(pre_activation, name= scope.name)

with tf.variable_scope('pooling1_lrn') as scope:

pool1 = tf.nn.max_pool(conv1, ksize=[1,2,2,1],strides=[1,2,2,1],

padding='SAME', name='pooling1')

norm1 = tf.nn.lrn(pool1, depth_radius=4, bias=1.0, alpha=0.001/9.0,

beta=0.75,name='norm1')

with tf.variable_scope('conv2') as scope:

weights = tf.get_variable('weights',

shape=[3,3,16,128],

dtype=tf.float32,

initializer=tf.truncated_normal_initializer(stddev=0.1,dtype=tf.float32))

biases = tf.get_variable('biases',

shape=[128],

dtype=tf.float32,

initializer=tf.constant_initializer(0.1))

conv = tf.nn.conv2d(norm1, weights, strides=[1,1,1,1],padding='SAME')

pre_activation = tf.nn.bias_add(conv, biases)

conv2 = tf.nn.relu(pre_activation, name='conv2')

with tf.variable_scope('pooling2_lrn') as scope:

norm2 = tf.nn.lrn(conv2, depth_radius=4, bias=1.0, alpha=0.001/9.0,

beta=0.75,name='norm2')

pool2 = tf.nn.max_pool(norm2, ksize=[1,2,2,1], strides=[1,1,1,1],

padding='SAME',name='pooling2')

with tf.variable_scope('local3') as scope:

reshape = tf.reshape(pool2, shape=[batch_size, -1])

dim = reshape.get_shape()[1].value

weights = tf.get_variable('weights',

shape=[dim,4096],

dtype=tf.float32,

initializer=tf.truncated_normal_initializer(stddev=0.005,dtype=tf.float32))

biases = tf.get_variable('biases',

shape=[4096],

dtype=tf.float32,

initializer=tf.constant_initializer(0.1))

local3 = tf.nn.relu(tf.matmul(reshape, weights) + biases, name=scope.name)

with tf.variable_scope('softmax_linear') as scope:

weights = tf.get_variable('softmax_linear',

shape=[4096, 2],

dtype=tf.float32,

initializer=tf.truncated_normal_initializer(stddev=0.005,dtype=tf.float32))

biases = tf.get_variable('biases',

shape=[2],

dtype=tf.float32,

initializer=tf.constant_initializer(0.1))

softmax_linear = tf.add(tf.matmul(local3, weights), biases, name='softmax_linear')

return softmax_linear

測試訓練好的模型

首先獲取一張測試影象

def get_one_image(img_dir):

image = Image.open(img_dir)

plt.imshow(image)

image = image.resize([32, 32])

image_arr = np.array(image)

return image_arr

載入模型,計算測試結果

def test(test_file):

log_dir = 'C:/Users/wk/Desktop/bky/log/'

image_arr = get_one_image(test_file)

with tf.Graph().as_default():

image = tf.cast(image_arr, tf.float32)

image = tf.image.per_image_standardization(image)

image = tf.reshape(image, [1,32, 32, 3])

print(image.shape)

p = model.mmodel(image,1)

logits = tf.nn.softmax(p)

x = tf.placeholder(tf.float32,shape = [32,32,3])

saver = tf.train.Saver()

with tf.Session() as sess:

ckpt = tf.train.get_checkpoint_state(log_dir)

if ckpt and ckpt.model_checkpoint_path:

global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]

saver.restore(sess, ckpt.model_checkpoint_path)

print('Loading success)

else:

print('No checkpoint')

prediction = sess.run(logits, feed_dict={x: image_arr})

max_index = np.argmax(prediction)

print(max_index)