Java Crawler in Practice: Scraping Toutiao, NetEase, Sohu, and ifeng News with Jsoup + HtmlUnit

阿新 • Published: 2019-02-06

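0x0 Background

I have been learning web scraping recently. After looking at several mainstream crawler frameworks, I decided to practice with two of the most basic libraries:

Jsoup & HtmlUnit

jsoup fetches static pages and parses their markup. Its most useful feature is a jQuery-like selector syntax for picking out the elements you want, for example: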

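// 1. Fetch the HTML of the page at url

Document html = Jsoup.connect(url).get();

// 2. Use jsoup to select the news <a> tags

Elements newsATags = html.select("div#headLineDefault")

.select("ul.FNewMTopLis")

.select("li")

.select("a");

However, some pages (Toutiao, for example) are not static: after the home page loads, the news content is fetched via ajax and rendered into the page with JavaScript. For such pages we need HtmlUnit to simulate a browser visiting the url, which gives us the HTML string of the rendered page. The code is as follows: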

WebClient webClient = new WebClient(BrowserVersion.CHROME);

webClient.getOptions().setJavaScriptEnabled(true);

webClient.getOptions().setCssEnabled(false);

0x1 Sohu, ifeng, and NetEase crawlers

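All three sites serve static pages, so the code is much the same for each: inspect the page markup, find the relevant elements, and extract the content you want.

A crawler follows four basic steps:

(1) Fetch the home page

(2) Use jsoup to select the news <a> tags

(3) Extract the basic information from each <a> tag and wrap it in a News object

(4) Visit each news page by its url to fetch the article content, images, and so on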
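In step (3) the crawlers below collect News objects into a HashSet, so whether the same story is stored once or twice depends on how News defines equality. The News class is not shown in this post, so the following is only a minimal sketch under the assumption that two items are the same story when their url matches. `NewsSketch` and `NewsDedupDemo` are hypothetical names used for illustration.

```java
import java.util.HashSet;
import java.util.Objects;
import java.util.Set;

// Hypothetical stand-in for the News class, which this post does not show.
// Only the fields needed to illustrate deduplication are included.
class NewsSketch {
    private final String url;
    private final String title;

    NewsSketch(String url, String title) {
        this.url = url;
        this.title = title;
    }

    String getUrl() { return url; }

    String getTitle() { return title; }

    // Assumption: two items are the same story if they point at the same URL.
    @Override
    public boolean equals(Object o) {
        if (this == o) return true;
        if (!(o instanceof NewsSketch)) return false;
        return Objects.equals(url, ((NewsSketch) o).url);
    }

    // hashCode must agree with equals, otherwise HashSet cannot spot duplicates.
    @Override
    public int hashCode() {
        return Objects.hashCode(url);
    }
}

class NewsDedupDemo {
    // Collects the items into a HashSet; items with an already-seen URL are dropped.
    static Set<NewsSketch> dedup(NewsSketch... items) {
        Set<NewsSketch> set = new HashSet<>();
        for (NewsSketch n : items) {
            set.add(n);
        }
        return set;
    }

    public static void main(String[] args) {
        Set<NewsSketch> set = dedup(
                new NewsSketch("https://example.com/a/1", "Headline"),
                new NewsSketch("https://example.com/a/1", "Headline (image link)"),
                new NewsSketch("https://example.com/a/2", "Another story"));
        System.out.println("distinct stories: " + set.size());
    }
}
```

Without an equals/hashCode pair like this, HashSet falls back to object identity and every freshly created object counts as distinct, even when two links on the home page point at the same article.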

1. Crawler interface

The interface has one abstract method, pullNews, which pulls the news, and one default method that fetches a news home page:

public interface NewsPuller {

void pullNews();

// url: the URL of the news home page

// useHtmlUnit: whether to fetch the page with HtmlUnit

default Document getHtmlFromUrl(String url, boolean useHtmlUnit) throws Exception {

if (!useHtmlUnit) {

return Jsoup.connect(url)

// Send a browser User-Agent string (this one identifies as Internet Explorer 9, not Firefox)

.userAgent("Mozilla/4.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0)")

.get();

} else {

WebClient webClient = new WebClient(BrowserVersion.CHROME);

webClient.getOptions().setJavaScriptEnabled(true);

webClient.getOptions().setCssEnabled(false);

webClient.getOptions().setActiveXNative(false);

webClient.getOptions().setThrowExceptionOnScriptError(false);

webClient.getOptions().setThrowExceptionOnFailingStatusCode(false);

webClient.getOptions().setTimeout(10000);

HtmlPage htmlPage = null;

try {

htmlPage = webClient.getPage(url);

webClient.waitForBackgroundJavaScript(10000);

String htmlString = htmlPage.asXml();

return Jsoup.parse(htmlString);

} finally {

webClient.close();

}

}

}

}

2. Sohu crawler
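One thing to watch in the crawler below: `a.attr("href")` returns the attribute exactly as written in the page. If the home page uses relative or protocol-relative links (I have not verified which form Sohu uses), the value has to be resolved against the page url before step (4) can fetch it. Here is a minimal sketch of that resolution using only the JDK. `HrefResolver` is a hypothetical helper, not part of the project. jsoup can do the same resolution itself via `absUrl("href")` when the document was loaded with a base URI.

```java
import java.net.URI;

class HrefResolver {
    // Resolves an href as found in a page against the URL the page was fetched from.
    // Absolute hrefs are returned unchanged. URI.create throws
    // IllegalArgumentException if either argument is not a valid URI.
    static String resolve(String pageUrl, String href) {
        return URI.create(pageUrl).resolve(href.trim()).toString();
    }

    public static void main(String[] args) {
        String page = "https://www.sohu.com/";
        System.out.println(resolve(page, "//www.sohu.com/a/123")); // protocol-relative
        System.out.println(resolve(page, "/a/123"));               // root-relative
        System.out.println(resolve(page, "http://news.example.com/x")); // already absolute
    }
}
```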

@Component("sohuNewsPuller")

public class SohuNewsPuller implements NewsPuller {

private static final Logger logger = LoggerFactory.getLogger(SohuNewsPuller.class);

@Value("${news.sohu.url}")

private String url;

@Autowired

private NewsService newsService;

@Override

public void pullNews() {

logger.info("Start pulling Sohu news!");

// 1. Fetch the home page

Document html= null;

try {

html = getHtmlFromUrl(url, false);

} catch (Exception e) {

logger.error("Failed to fetch the Sohu home page: {}", url, e);

return;

}

// 2. Use jsoup to select the news <a> tags

Elements newsATags = html.select("div.focus-news")

.select("div.list16")

.select("li")

.select("a");

// 3. Extract the basic information from each <a> tag and wrap it in a News object

HashSet<News> newsSet = new HashSet<>();

for (Element a : newsATags) {

String url = a.attr("href");

String title = a.attr("title");

News n = new News();

n.setSource("搜狐");

n.setUrl(url);

n.setTitle(title);

n.setCreateDate(new Date());

newsSet.add(n);

}

// 4. Visit each news url and fetch the article content

newsSet.forEach(news -> {

logger.info("Extracting Sohu news content: {}", news.getUrl());

Document newsHtml = null;

try {

newsHtml = getHtmlFromUrl(news.getUrl(), false);

Element newsContent = newsHtml.select("div#article-container")

.select("div.main")

.select("div.text")

.first();

String title = newsContent.select("div.text-title").select("h1").text();

String content = newsContent.select("article.article").first().toString();

String image = NewsUtils.getImageFromContent(content);

news.setTitle(title);

news.setContent(content);

news.setImage(image);

newsService.saveNews(news);

logger.info("Extracted Sohu news \"{}\" successfully!", news.getTitle());

} catch (Exception e) {

logger.error("Failed to extract news: {}", news.getUrl(), e);

}

});

}

}

3. ifeng (Phoenix) news crawler

@Component("ifengNewsPuller")

public class IfengNewsPuller implements NewsPuller {

private static final Logger logger = LoggerFactory.getLogger(IfengNewsPuller.class);

@Value("${news.ifeng.url}")

private String url;

@Autowired

private NewsService newsService;

@Override

public void pullNews() {

logger.info("Start pulling ifeng news!");

// 1. Fetch the home page

Document html= null;

try {

html = getHtmlFromUrl(url, false);

} catch (Exception e) {

logger.error("Failed to fetch the ifeng home page: {}", url, e);

return;

}

// 2. Use jsoup to select the news <a> tags

Elements newsATags = html.select("div#headLineDefault")

.select("ul.FNewMTopLis")

.select("li")

.select("a");

// 3. Extract the basic information from each <a> tag and wrap it in a News object

HashSet<News> newsSet = new HashSet<>();

for (Element a : newsATags) {

String url = a.attr("href");

String title = a.text();

News n = new News();

n.setSource("鳳凰");

n.setUrl(url);

n.setTitle(title);

n.setCreateDate(new Date());

newsSet.add(n);

}

// 4. Visit each news url and fetch the article content

newsSet.parallelStream().forEach(news -> {

logger.info("Extracting ifeng news \"{}\" content: {}", news.getTitle(), news.getUrl());

Document newsHtml = null;

try {

newsHtml = getHtmlFromUrl(news.getUrl(), false);

Elements contentElement = newsHtml.select("div#main_content");

if (contentElement.isEmpty()) {

contentElement = newsHtml.select("div#yc_con_txt");

}

if (contentElement.isEmpty())

return;

String content = contentElement.toString();

String image = NewsUtils.getImageFromContent(content);

news.setContent(content);

news.setImage(image);

newsService.saveNews(news);

logger.info("Extracted ifeng news \"{}\" successfully!", news.getTitle());

} catch (Exception e) {

logger.error("Failed to extract ifeng news: {}", news.getUrl(), e);

}

});

logger.info("Finished extracting ifeng news!");

}

}

4. NetEase crawler
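The crawler below trims the `p.otitle` text with `substring(5, title.length() - 1)`. This presumably removes a fixed 5-character prefix and one trailing character that wrap the headline. On text shorter than 6 characters that call throws StringIndexOutOfBoundsException, which the surrounding catch block turns into a failed extraction. The following is a minimal sketch of a guarded variant. `TitleStripper` is a hypothetical helper and the sample string is made up.

```java
class TitleStripper {
    // Removes a fixed-length prefix and suffix from text.
    // Returns the text unchanged when it is null or too short to contain both.
    static String strip(String text, int prefixLen, int suffixLen) {
        if (text == null || text.length() < prefixLen + suffixLen) {
            return text;
        }
        return text.substring(prefixLen, text.length() - suffixLen);
    }

    public static void main(String[] args) {
        // "[TAG:" is a made-up 5-character wrapper standing in for the real prefix.
        System.out.println(strip("[TAG:Sample headline]", 5, 1));
        // Too short to hold the wrapper, so it is returned as-is instead of throwing.
        System.out.println(strip("abc", 5, 1));
    }
}
```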

@Component("netEasyNewsPuller")

public class NetEasyNewsPuller implements NewsPuller {

private static final Logger logger = LoggerFactory.getLogger(NetEasyNewsPuller.class);

@Value("${news.neteasy.url}")

private String url;

@Autowired

private NewsService newsService;

@Override

public void pullNews() {

logger.info("Start pulling NetEase top news!");

// 1. Fetch the home page

Document html= null;

try {

html = getHtmlFromUrl(url, false);

} catch (Exception e) {

logger.error("Failed to fetch the NetEase news home page: {}", url, e);

return;

}

// 2. Use jsoup to select the target tags

Elements newsA = html.select("div#whole")

.next("div.area-half.left")

.select("div.tabContents")

.first()

.select("tbody > tr")

.select("a[href~=^http://news.163.com.*]");

// 3. Extract the information from each tag and wrap it in a News object

HashSet<News> newsSet = new HashSet<>();

newsA.forEach(a -> {

String url = a.attr("href");

News n = new News();

n.setSource("網易");

n.setUrl(url);

n.setCreateDate(new Date());

newsSet.add(n);

});

// 4. Visit each news url and fetch the article content

newsSet.forEach(news -> {

logger.info("Extracting news content: {}", news.getUrl());

Document newsHtml = null;

try {

newsHtml = getHtmlFromUrl(news.getUrl(), false);

Elements newsContent = newsHtml.select("div#endText");

Elements titleP = newsContent.select("p.otitle");

String title = titleP.text();

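// Drop the first 5 characters and the last one, which appear to be a fixed wrapper around the headline in p.otitle

title = title.substring(5, title.length() - 1);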

String image = NewsUtils.getImageFromContent(newsContent.toString());

news.setTitle(title);

news.setContent(newsContent.toString());

news.setImage(image);

newsService.saveNews(news);

logger.info("Extracted NetEase news \"{}\" successfully!", news.getTitle());

} catch (Exception e) {

logger.error("Failed to extract news: {}", news.getUrl(), e);

}

});

logger.info("Finished pulling NetEase news!");

}

}

0x2 Toutiao crawler

Toutiao loads its news via ajax after the page itself has loaded, so the pages have to be fetched with HtmlUnit.
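One step of this crawler is worth trying in isolation first. The article body is not read from the rendered DOM but pulled out of an inline script with a regular expression, then unescaped. The following is a minimal JDK-only sketch of that step, run against a made-up script snippet. It assumes the script contains an `articleInfo` object whose `content` value is HTML-escaped. `ArticleInfoExtractor` is a hypothetical name, and the pattern is a simplified form of the one used in the crawler.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class ArticleInfoExtractor {
    // Compiled once and reused. "." does not match line breaks by default,
    // so each ".*" stays within a single line of the script.
    private static final Pattern CONTENT = Pattern.compile(
            "articleInfo:\\s*\\{\\s*title:\\s*'.*',\\s*content:\\s*'(.*)',");

    // Returns the unescaped content value, or empty if the script has no articleInfo.
    static Optional<String> extractContent(String scriptText) {
        Matcher m = CONTENT.matcher(scriptText);
        return m.find() ? Optional.of(unescape(m.group(1))) : Optional.empty();
    }

    // Decodes the handful of entities this sketch assumes can occur.
    static String unescape(String s) {
        return s.replace("&lt;", "<")
                .replace("&gt;", ">")
                .replace("&quot;", "\"")
                .replace("&#x3D;", "=")
                .replace("&amp;", "&"); // last, so "&amp;lt;" becomes "&lt;" rather than "<"
    }

    public static void main(String[] args) {
        // Made-up sample shaped like the inline script the crawler looks for.
        String script = "articleInfo: {\n"
                + "  title: 'Sample',\n"
                + "  content: '&lt;p class&#x3D;&quot;x&quot;&gt;Hi&lt;/p&gt;',\n"
                + "  groupId: '1'\n"
                + "}";
        System.out.println(extractContent(script).orElse("(no match)"));
    }
}
```

Compiling the pattern once as a constant avoids recompiling it for every script tag on every page.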

The main code is as follows:

@Component("toutiaoNewsPuller")

public class ToutiaoNewsPuller implements NewsPuller {

private static final Logger logger = LoggerFactory.getLogger(ToutiaoNewsPuller.class);

private static final String TOUTIAO_URL = "https://www.toutiao.com";

@Autowired

private NewsService newsService;

@Value("${news.toutiao.url}")

private String url;

@Override

public void pullNews() {

logger.info("Start pulling Toutiao top news!");

// 1.load html from url

Document html = null;

try {

html = getHtmlFromUrl(url, true);

} catch (Exception e) {

logger.error("Failed to fetch the Toutiao home page!", e);

return;

}

// 2.parse the html to news information and load into POJO

Map<String, News> newsMap = new HashMap<>();

for (Element a : html.select("a[href~=/group/.*]:not(.comment)")) {

logger.info("<a> tag: \n{}", a);

String href = TOUTIAO_URL + a.attr("href");

String title = StringUtils.isNotBlank(a.select("p").text()) ?

a.select("p").text() : a.text();

String image = a.select("img").attr("src");

News news = newsMap.get(href);

if (news == null) {

News n = new News();

n.setSource("今日頭條");

n.setUrl(href);

n.setCreateDate(new Date());

n.setImage(image);

n.setTitle(title);

newsMap.put(href, n);

} else {

if (a.hasClass("img-wrap")) {

news.setImage(image);

} else if (a.hasClass("title")) {

news.setTitle(title);

}

}

}

logger.info("Finished pulling Toutiao news titles!");

logger.info("Start pulling news content...");

newsMap.values().parallelStream().forEach(news -> {

logger.info("===================={}====================", news.getTitle());

Document contentHtml = null;

try {

contentHtml = getHtmlFromUrl(news.getUrl(), true);

} catch (Exception e) {

logger.error("Failed to fetch content of news \"{}\"!", news.getTitle(), e);

return;

}

Elements scripts = contentHtml.getElementsByTag("script");

scripts.forEach(script -> {

String regex = "articleInfo: \\{\\s*[\\n\\r]*\\s*title: '.*',\\s*[\\n\\r]*\\s*content: '(.*)',";

Pattern pattern = Pattern.compile(regex);

Matcher matcher = pattern.matcher(script.toString());

if (matcher.find()) {

// Unescape the HTML entities in the embedded article content

String content = matcher.group(1)

.replace("&lt;", "<")

.replace("&gt;", ">")

.replace("&quot;", "\"")

.replace("&#x3D;", "=");

logger.info("content: {}", content);

news.setContent(content);

}

});

});

newsMap.values()

.stream()

.filter(news -> StringUtils.isNotBlank(news.getContent()) && !news.getContent().equals("null"))

.forEach(newsService::saveNews);

logger.info("Finished pulling Toutiao news content!");

}

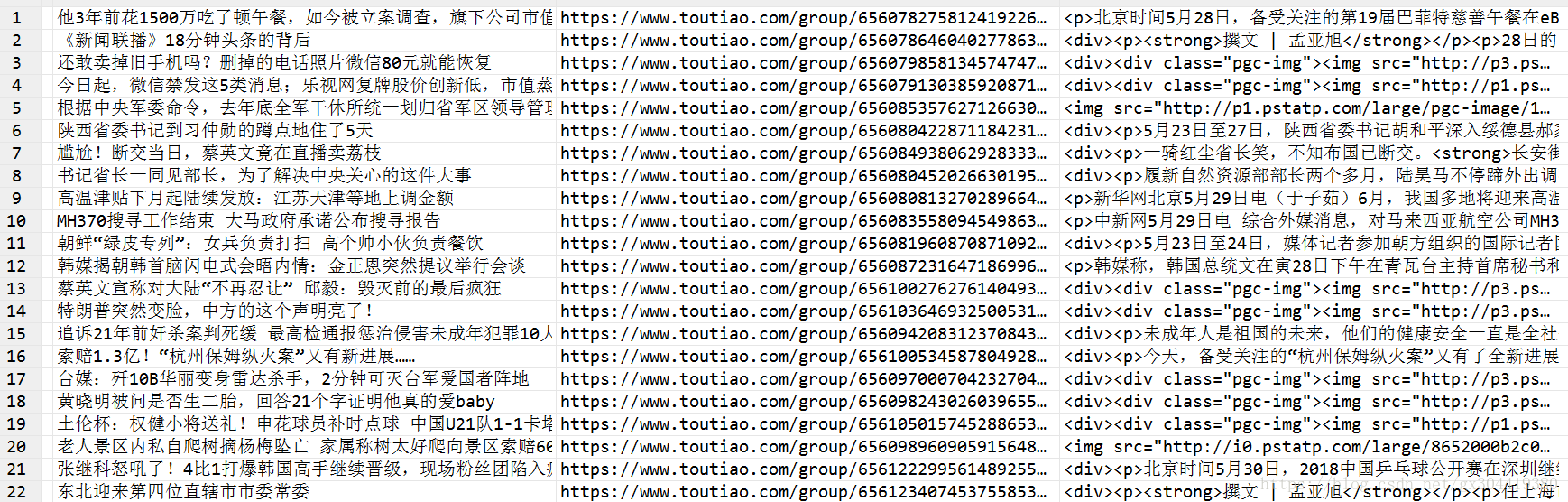

}拉取結果: