Kernel Block Device Driver Framework

1. Overall architecture:

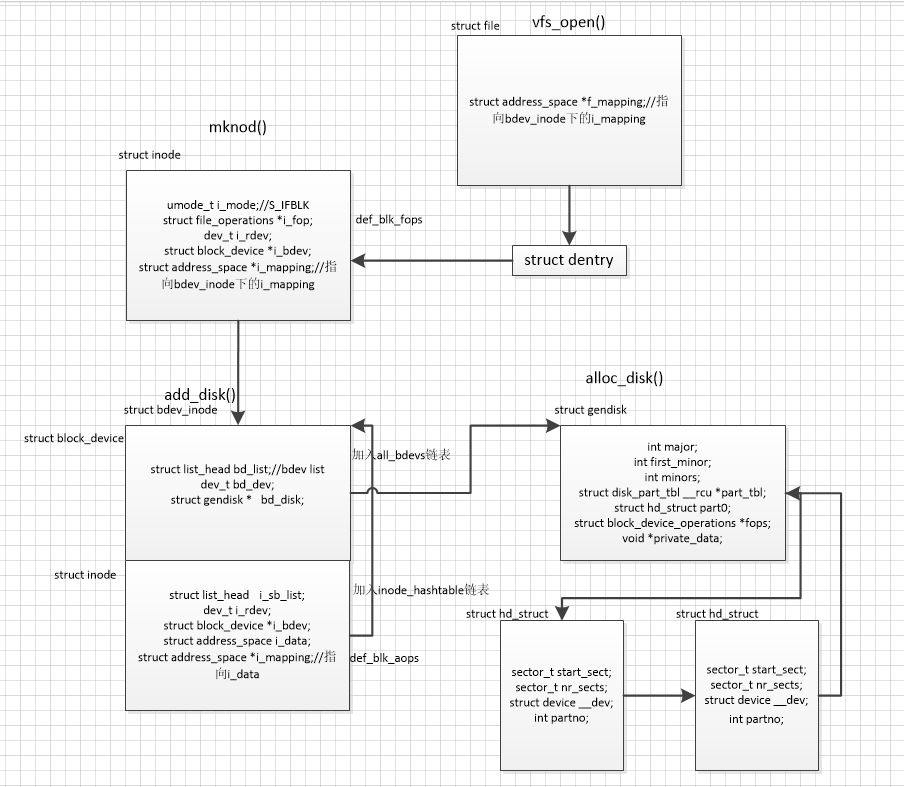

塊裝置驅動框架是Linux裝置最重要的框架之一,涉及核心的vfs,裝置驅動模型等模組,是核心中異常複雜的一個框架。我們先看一下塊裝置設計的主要框架結構,先從總體上對塊裝置有個初步的認識:

2. Block device framework analysis

1. Representing a block device with gendisk:

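The kernel uses an instance of struct gendisk to represent a block device. A block device usually corresponds to one physical disk. Each block device can be logically divided into multiple partitions, and each partition is represented by a struct hd_struct.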

struct gendisk {

/* major, first_minor and minors are input parameters only,

* don't use directly. Use disk_devt() and disk_max_parts().

*/

int major; /* major number of driver */

int first_minor; // first minor device number

int minors; /* maximum number of minors, =1 for

* disks that can't be partitioned. */ // number of minor device numbers

char disk_name[DISK_NAME_LEN]; /* name of major driver */

char *(*devnode)(struct gendisk *gd, umode_t *mode);

unsigned int events; /* supported events */

unsigned int async_events; /* async events, subset of all */

/* Array of pointers to partitions indexed by partno.

* Protected with matching bdev lock but stat and other

* non-critical accesses use RCU. Always access through

* helpers.

*/

struct disk_part_tbl __rcu *part_tbl; // partition table

struct hd_struct part0; // partition 0, covering the whole disk; part_tbl->part[0] points to part0

const struct block_device_operations *fops; // block device operations

struct request_queue *queue; // request queue (request merging, asynchronous I/O, elevator scheduling, etc.)

void *private_data; // device-specific data

int flags;

struct rw_semaphore lookup_sem;

struct kobject *slave_dir;

struct timer_rand_state *random;

atomic_t sync_io; /* RAID */

struct disk_events *ev;

#ifdef CONFIG_BLK_DEV_INTEGRITY

struct kobject integrity_kobj;

#endif /* CONFIG_BLK_DEV_INTEGRITY */

int node_id;

struct badblocks *bb;

struct lockdep_map lockdep_map;

};

struct hd_struct {

sector_t start_sect; // starting sector number

/*

* nr_sects is protected by sequence counter. One might extend a

* partition while IO is happening to it and update of nr_sects

* can be non-atomic on 32bit machines with 64bit sector_t.

*/

sector_t nr_sects; // number of sectors

seqcount_t nr_sects_seq;

sector_t alignment_offset;

unsigned int discard_alignment;

struct device __dev; // device model object

struct kobject *holder_dir;

int policy, partno; // partno: partition number

struct partition_meta_info *info;

#ifdef CONFIG_FAIL_MAKE_REQUEST

int make_it_fail;

#endif

unsigned long stamp;

atomic_t in_flight[2];

#ifdef CONFIG_SMP

struct disk_stats __percpu *dkstats;

#else

struct disk_stats dkstats;

#endif

struct percpu_ref ref;

struct rcu_head rcu_head;

};

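The kernel provides alloc_disk() to allocate and initialize a gendisk together with its embedded hd_struct (part0); the matching release call is put_disk(). The driver has to supply the major and minor device numbers, the fops, and the device-specific private_data.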
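To make the role of major, first_minor, and minors concrete, here is a minimal userspace sketch of how device numbers for a disk and its partitions can be derived from them. The macros follow the kernel-internal dev_t layout (12-bit major, 20-bit minor). The struct disk_numbers type and the part_devt() helper are hypothetical names introduced only for this illustration. The real kernel switches to an extended device number range when a partition number does not fit in minors; this sketch simply returns 0 in that case.

```c
#include <stdint.h>

/* Kernel-internal dev_t layout: major in the upper 12 bits, minor in the lower 20. */
#define MINORBITS 20
#define MINORMASK ((1U << MINORBITS) - 1)
#define MAJOR(dev) ((unsigned int)((dev) >> MINORBITS))
#define MINOR(dev) ((unsigned int)((dev) & MINORMASK))
#define MKDEV(ma, mi) (((uint32_t)(ma) << MINORBITS) | (uint32_t)(mi))

/* Hypothetical stand-in for the three "input parameter" fields of gendisk. */
struct disk_numbers {
    int major;        /* major number of the driver */
    int first_minor;  /* first minor number owned by this disk */
    int minors;       /* how many minors are reserved (1 = not partitionable) */
};

/*
 * Hypothetical helper: device number of partition partno, where 0 means the
 * whole disk. Returns 0 when partno falls outside the reserved minor range.
 */
static uint32_t part_devt(const struct disk_numbers *d, int partno)
{
    if (partno < 0 || partno >= d->minors)
        return 0;
    return MKDEV(d->major, d->first_minor + partno);
}
```

For a disk with major 8, first_minor 0, and minors 16, part_devt() gives partition 3 the pair (8, 3), and rejects partition 16.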

2. Registering a block device:

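After a driver has allocated and initialized a block device, it registers the device with add_disk(). add_disk() allocates a struct bdev_inode, which combines a struct block_device and a struct inode; the two are linked into the all_bdevs list and the inode_hashtable hash table respectively. The bd_disk field of block_device points to the registered gendisk, and block_device and inode record the gendisk's device number in bd_dev and i_rdev respectively. From this point on, upper layers can use the device number to find the bdev_inode in all_bdevs or inode_hashtable, follow bd_disk in the block_device to the corresponding gendisk, and call the gendisk's fops to operate on the device-specific private_data.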

struct bdev_inode {

struct block_device bdev;

struct inode vfs_inode;

};
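Because block_device and inode live in the same bdev_inode allocation, a pointer to one can be turned into a pointer to the other with plain pointer arithmetic. The sketch below shows that idea in userspace C. The two inner structs are cut down to a single field each (an assumption made for brevity), and container_of is a simplified version of the kernel macro. BDEV_I() and I_BDEV() mirror the kernel helpers of the same names.

```c
#include <stddef.h>

/* Simplified stand-ins: only one field each, enough for the illustration. */
struct block_device { unsigned int bd_dev; };
struct inode { unsigned int i_rdev; };

struct bdev_inode {
    struct block_device bdev;
    struct inode vfs_inode;
};

/* Step back from a pointer to a member to the structure that embeds it. */
#define container_of(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* inode -> the bdev_inode that contains it. */
static struct bdev_inode *BDEV_I(struct inode *inode)
{
    return container_of(inode, struct bdev_inode, vfs_inode);
}

/* inode -> the block_device that shares its allocation. */
static struct block_device *I_BDEV(struct inode *inode)
{
    return &BDEV_I(inode)->bdev;
}
```

No lookup table is involved: given the inode of a block device, the matching block_device is found at a fixed offset.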

struct block_device {

dev_t bd_dev; /* not a kdev_t - it's a search key */ // device number

int bd_openers;

struct inode * bd_inode; /* will die */

struct super_block * bd_super;

struct mutex bd_mutex; /* open/close mutex */

void * bd_claiming;

void * bd_holder;

int bd_holders;

bool bd_write_holder;

#ifdef CONFIG_SYSFS

struct list_head bd_holder_disks;

#endif

struct block_device * bd_contains;

unsigned bd_block_size; // device block size

u8 bd_partno; // partition number

struct hd_struct * bd_part; // partition structure

/* number of times partitions within this device have been opened. */

unsigned bd_part_count;

int bd_invalidated;

struct gendisk * bd_disk; // the gendisk this block_device belongs to

struct request_queue * bd_queue; // request queue of the device

struct backing_dev_info *bd_bdi;

struct list_head bd_list; // node in the all_bdevs list

/*

* Private data. You must have bd_claim'ed the block_device

* to use this. NOTE: bd_claim allows an owner to claim

* the same device multiple times, the owner must take special

* care to not mess up bd_private for that case.

*/

unsigned long bd_private;

/* The counter of freeze processes */

int bd_fsfreeze_count;

/* Mutex for freeze */

struct mutex bd_fsfreeze_mutex;

} __randomize_layout;

struct inode {

umode_t i_mode;

unsigned short i_opflags;

kuid_t i_uid;

kgid_t i_gid;

unsigned int i_flags;

#ifdef CONFIG_FS_POSIX_ACL

struct posix_acl *i_acl;

struct posix_acl *i_default_acl;

#endif

const struct inode_operations *i_op;

struct super_block *i_sb;

struct address_space *i_mapping; // points to i_data: the kernel initializes i_data and, by default, points i_mapping at it

#ifdef CONFIG_SECURITY

void *i_security;

#endif

/* Stat data, not accessed from path walking */

unsigned long i_ino;

/*

* Filesystems may only read i_nlink directly. They shall use the

* following functions for modification:

*

* (set|clear|inc|drop)_nlink

* inode_(inc|dec)_link_count

*/

union {

const unsigned int i_nlink;

unsigned int __i_nlink;

};

dev_t i_rdev; // device number

loff_t i_size;

struct timespec64 i_atime;

struct timespec64 i_mtime;

struct timespec64 i_ctime;

spinlock_t i_lock; /* i_blocks, i_bytes, maybe i_size */

unsigned short i_bytes;

u8 i_blkbits;

u8 i_write_hint;

blkcnt_t i_blocks;

#ifdef __NEED_I_SIZE_ORDERED

seqcount_t i_size_seqcount;

#endif

/* Misc */

unsigned long i_state;

struct rw_semaphore i_rwsem;

unsigned long dirtied_when; /* jiffies of first dirtying */

unsigned long dirtied_time_when;

struct hlist_node i_hash;

struct list_head i_io_list; /* backing dev IO list */

#ifdef CONFIG_CGROUP_WRITEBACK

struct bdi_writeback *i_wb; /* the associated cgroup wb */

/* foreign inode detection, see wbc_detach_inode() */

int i_wb_frn_winner;

u16 i_wb_frn_avg_time;

u16 i_wb_frn_history;

#endif

struct list_head i_lru; /* inode LRU list */

struct list_head i_sb_list; // node in the superblock's inode list; the inode_hashtable node is i_hash above

struct list_head i_wb_list; /* backing dev writeback list */

union {

struct hlist_head i_dentry;

struct rcu_head i_rcu;

};

atomic64_t i_version;

atomic_t i_count;

atomic_t i_dio_count;

atomic_t i_writecount;

#ifdef CONFIG_IMA

atomic_t i_readcount; /* struct files open RO */

#endif

const struct file_operations *i_fop; /* former ->i_op->default_file_ops */

struct file_lock_context *i_flctx;

struct address_space i_data; // the kernel initializes its a_ops method table

struct list_head i_devices;

union {

struct pipe_inode_info *i_pipe;

struct block_device *i_bdev; // points to the block_device structure

struct cdev *i_cdev;

char *i_link;

unsigned i_dir_seq;

};

__u32 i_generation;

#ifdef CONFIG_FSNOTIFY

__u32 i_fsnotify_mask; /* all events this inode cares about */

struct fsnotify_mark_connector __rcu *i_fsnotify_marks;

#endif

#if IS_ENABLED(CONFIG_FS_ENCRYPTION)

struct fscrypt_info *i_crypt_info;

#endif

void *i_private; /* fs or device private pointer */

} __randomize_layout;

3. Registering a block device node:

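After a gendisk has been allocated, initialized, and registered with the kernel, user space still cannot access it. A block device node has to be created first. Filesystems provide a mknod method, for example ext2_mknod(), that creates a block device inode under /dev/ containing the device number of the block device. A user can then open the node, and the kernel uses the device number stored in it to find the corresponding bdev.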

4. Opening, reading, and writing a block device:

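Opening, reading, and writing a block device follow the Linux VFS framework. Briefly, the open path through the VFS layer is as follows. When a user calls the open() system call on a block device, the kernel calls vfs_open() and loads the device file's inode through the dentry. Because the inode's i_mode is S_IFBLK, its i_fop is set to def_blk_fops. The open() method of def_blk_fops then looks up the bdev registered with the kernel, points the device inode's i_bdev at it, and makes both the file's f_mapping and the device inode's i_mapping point to the i_mapping of the bdev_inode.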

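When the user reads or writes through the opened file, the standard VFS entry points vfs_read() and vfs_write() are called. They invoke the read_iter() and write_iter() methods of def_blk_fops, which in turn call file->f_mapping->a_ops->readpage() or file->f_mapping->a_ops->writepage() to transfer the data. readpage() and writepage() eventually call submit_bh(), which packages the buffer into a bio and submits it to the block layer, where it becomes a request on the device's request_queue.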
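On this path a page-cache page has to be translated into a position on the device. A rough sketch of the arithmetic, assuming 4 KiB pages: the page index is converted to a block number using the block size, and the block number is converted to a 512-byte sector as submit_bh() does with b_blocknr * (b_size >> 9). The function names page_to_block() and block_to_sector() are hypothetical.

```c
#include <stdint.h>

#define PAGE_SHIFT   12  /* assumption: 4 KiB pages */
#define SECTOR_SHIFT 9   /* kernel sectors are 512 bytes */

/* First block number covered by the page-cache page with this index.
 * blkbits is log2 of the block size and must not exceed PAGE_SHIFT. */
static uint64_t page_to_block(uint64_t page_index, unsigned int blkbits)
{
    return page_index << (PAGE_SHIFT - blkbits);
}

/* Start sector of a buffer: block number times sectors per block. */
static uint64_t block_to_sector(uint64_t blocknr, unsigned int blocksize)
{
    return blocknr * (blocksize >> SECTOR_SHIFT);
}
```

With a 1 KiB block size, page 3 starts at block 12, which is sector 24, the same as 3 * 4096 / 512.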

3. Writing a block device driver

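As the analysis above shows, the path through the block layer is very long. The logic embedded in the VFS layer alone is intricate, and on top of that come mechanisms such as the device's asynchronous request queue. Fortunately, Linux implements a robust and flexible framework that makes writing a block device driver considerably easier.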

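(1) First, for historical reasons, the physical layout of a block device is described as a combination of heads, cylinders, and sectors. The kernel uses 512 bytes as its internal sector size in all calculations, but hardware may use sector sizes such as 512, 1024, or 2048 bytes. A driver therefore has to convert between sector sizes whenever it interacts with kernel interfaces.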

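(2) A block device driver normally allocates and registers its disk with alloc_disk() and add_disk(). It must set the gendisk's fops (the methods for opening, releasing, and configuring the device through ioctl, among others) and private_data itself.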

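(3) Allocate and initialize the block device's request_queue with the kernel interfaces. If reads and writes go through the device's request_queue, the driver must supply a request_fn method that does the actual request processing. If reads and writes do not use the request_queue, the driver can supply a make_request_fn method directly; submit_bh() then calls the custom make_request_fn immediately, so the data is transferred without queueing.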
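The sector size conversion mentioned in point (1) comes down to scaling by the ratio of the hardware sector size to 512. A minimal sketch, assuming the hardware sector size is a multiple of 512; the function names are hypothetical. The first conversion is the kind a driver needs before calling set_capacity(), which counts in 512-byte sectors, and the second is the kind it needs when turning a sector number from a request into a hardware address.

```c
#include <stdint.h>

#define KERNEL_SECTOR_SIZE 512u  /* unit the kernel uses for sector numbers */

/* Hardware sector count -> count in 512-byte kernel sectors. */
static uint64_t hw_to_kernel_sectors(uint64_t hw_sectors, unsigned int hw_sector_size)
{
    return hw_sectors * (hw_sector_size / KERNEL_SECTOR_SIZE);
}

/* Kernel sector number -> hardware sector number (rounds down). */
static uint64_t kernel_to_hw_sector(uint64_t ksector, unsigned int hw_sector_size)
{
    return ksector / (hw_sector_size / KERNEL_SECTOR_SIZE);
}
```

A device with 1000 hardware sectors of 2048 bytes has a capacity of 4000 kernel sectors, and kernel sector 4000 maps back to hardware sector 1000.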

Example