Implementing Text-Similarity Hotness Statistics (Part 1): Whole-Sentence Hotness Statistics

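1. Scenario

In the previous post (Text similarity hotness statistics (Python version)), 軟體老王 introduced four requirements for similarity-based hotness statistics. The requirements call for statistics along several dimensions:

(1) Grouped, unsplit hotness statistics: group the rows by a given column first, then run similarity statistics on the description column;

(2) Grouped, per-clause hotness statistics: group the rows by a given column first, split the description column at punctuation marks, then run hotness statistics on the resulting clauses;

(3) Whole-sentence and per-clause hotness statistics: run hotness statistics on the description column, either as whole sentences or after splitting it into clauses at punctuation marks;

(4) Hot-word statistics: count hot words in the description column. Feedback was that this approach is of little use.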
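Requirements (2) and (3) both rely on splitting a description into clauses at punctuation marks. A minimal sketch of that step is shown here; the delimiter set and the function name `split_clauses` are assumptions made for illustration, not something taken from the original project.

```python
import re

# Assumed delimiter set: common full-width and ASCII clause punctuation.
# The ASCII period is left out on purpose so that decimals such as "3.5" survive.
CLAUSE_DELIMITERS = r"[,。;!?、,;!?\n]+"


def split_clauses(text):
    """Split a description into clauses at punctuation, dropping empty pieces."""
    parts = re.split(CLAUSE_DELIMITERS, text)
    return [p.strip() for p in parts if p.strip()]


if __name__ == "__main__":
    print(split_clauses("系統登入失敗,請重試。頁面載入很慢!"))
```

Each clause returned this way could then be fed through the same similarity pipeline that the rest of this post applies to whole sentences.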

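2. Solution

Hot-word statistics did little for the business. 軟體老王 simply used jieba word segmentation for them, and that is already part of the other requirements, so it is not covered separately. This post goes straight to whole-sentence and per-clause hotness statistics. The solution is complete: it reads the input from Excel, writes the results back to Excel, and adds hyperlinks that navigate to the detail sheets.

2.1 Complete code

Here is the complete code. Anyone who needs it can take it as-is; if you would rather skip the walkthrough, just copy it and run it.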

    import jieba.posseg as pseg
    import jieba.analyse
    import xlwt
    import openpyxl
    from gensim import corpora, models, similarities
    import re


    # Load the stop-word list (one word per line)
    def StopWordsList(filepath):
        with open(filepath, 'r', encoding='utf8') as f:
            wlst = [w.strip() for w in f.readlines()]
        return wlst


    def str_to_hex(s):
        return ''.join([hex(ord(c)).replace('0x', '') for c in s])


    # jieba word segmentation; drops stop words and unwanted parts of speech
    def seg_sentence(sentence, stop_words):
        stop_flag = ['x', 'c', 'u', 'd', 'p', 't', 'uj', 'f', 'r']
        sentence_seged = pseg.cut(sentence)
        outstr = []
        for word, flag in sentence_seged:
            if word not in stop_words and flag not in stop_flag:
                outstr.append(word)
        return outstr


    if __name__ == '__main__':
        # 1 Custom jieba dictionaries. 軟體老王 added industry terms here; the format
        #   is a plain text file with a single column, one term per line.
        #   These dictionaries are not uploaded, so these lines can be commented out.
        jieba.load_userdict("g1.txt")
        jieba.load_userdict("g2.txt")
        jieba.load_userdict("g3.txt")

        # 2 Stop words: words dropped from the segmentation result. This is a
        #   general-purpose list found online; it will be uploaded.
        spPath = 'stop.txt'
        stop_words = StopWordsList(spPath)

        # 3 Excel handling
        wbk = xlwt.Workbook(encoding='ascii')
        sheet = wbk.add_sheet("軟體老王sheet")  # sheet name
        sheet.write(0, 0, '表頭-軟體老王1')
        sheet.write(0, 1, '表頭-軟體老王2')
        sheet.write(0, 2, '導航-連結到明細sheet表')
        wb = openpyxl.load_workbook('軟體老王-source.xlsx')
        ws = wb.active
        col = ws['B']

        # 4 Similarity processing
        rcount = 1
        texts = []
        orig_txt = []
        key_list = []
        name_list = []
        sheet_list = []

        for cell in col:
            if cell.value is None:
                continue
            if not isinstance(cell.value, str):
                continue
            item = cell.value.strip('\n\r').split('\t')  # split on tabs
            string = item[0]
            if string is None or len(string) == 0:
                continue
            else:
                textstr = seg_sentence(string, stop_words)
                texts.append(textstr)
                orig_txt.append(string)
        dictionary = corpora.Dictionary(texts)
        feature_cnt = len(dictionary.token2id.keys())
        corpus = [dictionary.doc2bow(text) for text in texts]
        # Note: despite the variable name, the model built here is gensim's LsiModel
        tfidf = models.LsiModel(corpus)
        index = similarities.SparseMatrixSimilarity(tfidf[corpus], num_features=feature_cnt)
        result_lt = []
        word_dict = {}
        count = 0
        for keyword in orig_txt:
            count = count + 1
            print('開始執行,第' + str(count) + '行')
            if keyword in result_lt or keyword is None or len(keyword) == 0:
                continue
            kw_vector = dictionary.doc2bow(seg_sentence(keyword, stop_words))
            sim = index[tfidf[kw_vector]]
            result_list = []
            for i in range(len(sim)):
                if sim[i] > 0.5:
                    if orig_txt[i] in result_lt and orig_txt[i] not in result_list:
                        continue
                    result_list.append(orig_txt[i])
                    result_lt.append(orig_txt[i])
            if len(result_list) > 0:
                word_dict[keyword] = len(result_list)
            if len(result_list) >= 1:
                sname = re.sub(u"([^\u4e00-\u9fa5\u0030-\u0039\u0041-\u005a\u0061-\u007a])", "", keyword[0:10]) + '_' \
                        + str(len(result_list) + len(str_to_hex(keyword))) + str_to_hex(keyword)[-5:]
                sheet_t = wbk.add_sheet(sname)  # name of the detail sheet
                for i in range(len(result_list)):
                    sheet_t.write(i, 0, label=result_list[i])

        # 5 Sort by hotness - 軟體老王
        with open("rjlw.txt", 'w', encoding='utf-8') as wf2:
            orderList = list(word_dict.values())
            orderList.sort(reverse=True)
            count = len(orderList)
            for i in range(count):
                for key in word_dict:
                    if word_dict[key] == orderList[i]:
                        key_list.append(key)
                        word_dict[key] = 0
            wf2.truncate()

        # 6 Write to the target Excel file
        for i in range(len(key_list)):
            sheet.write(i + rcount, 0, label=key_list[i])
            sheet.write(i + rcount, 1, label=orderList[i])
            if orderList[i] >= 1:
                shname = re.sub(u"([^\u4e00-\u9fa5\u0030-\u0039\u0041-\u005a\u0061-\u007a])", "", key_list[i][0:10]) \
                         + '_' + str(orderList[i] + len(str_to_hex(key_list[i]))) + str_to_hex(key_list[i])[-5:]
                link = 'HYPERLINK("#%s!A1";"%s")' % (shname, shname)
                sheet.write(i + rcount, 2, xlwt.Formula(link))
        rcount = rcount + len(key_list)
        key_list = []
        orderList = []
        texts = []
        orig_txt = []
        wbk.save('軟體老王-target.xls')
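One detail in the listing is easy to miss: the detail-sheet name is computed twice, once when the sheet is created in step 4 and once when the hyperlink is built in step 6, and the link only resolves if both expressions produce the same string. The sketch below factors that expression into helpers. The names `detail_sheet_name` and `detail_link_formula` are hypothetical and do not appear in the original code; `str_to_hex` and the regular expression are copied from the listing.

```python
import re

# Same character classes as the listing: keep CJK ideographs, digits, ASCII letters.
_DROP = re.compile(r"[^\u4e00-\u9fa5\u0030-\u0039\u0041-\u005a\u0061-\u007a]")


def str_to_hex(s):
    return "".join(hex(ord(c)).replace("0x", "") for c in s)


def detail_sheet_name(keyword, count):
    """Hypothetical helper: rebuild the detail-sheet name used in the listing."""
    hex_text = str_to_hex(keyword)
    prefix = _DROP.sub("", keyword[0:10])
    return prefix + "_" + str(count + len(hex_text)) + hex_text[-5:]


def detail_link_formula(keyword, count):
    """Hypothetical helper: the formula text the listing passes to xlwt.Formula."""
    name = detail_sheet_name(keyword, count)
    return 'HYPERLINK("#%s!A1";"%s")' % (name, name)


if __name__ == "__main__":
    print(detail_sheet_name("ab,c", 2))
    print(detail_link_formula("ab,c", 2))
```

The name combines a cleaned 10-character prefix with a number and a hex tail derived from the full sentence, which appears intended to keep names short and reduce collisions between sentences that share a prefix.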

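2.2 Code walkthrough

(1) #1 The code below loads custom jieba dictionaries. 軟體老王 added industry-specific terms here; the format is a plain text file with a single column, one term per line. These industry dictionaries are not uploaded, so you can comment these lines out.

    jieba.load_userdict("g1.txt")
    jieba.load_userdict("g2.txt")
    jieba.load_userdict("g3.txt")

(2) #2 Stop words. Put simply, these are words that are dropped from the segmentation result (seg_sentence skips any word found in this list). 軟體老王 used a general-purpose file found online; it is optional.

    spPath = 'stop.txt'
    stop_words = StopWordsList(spPath)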

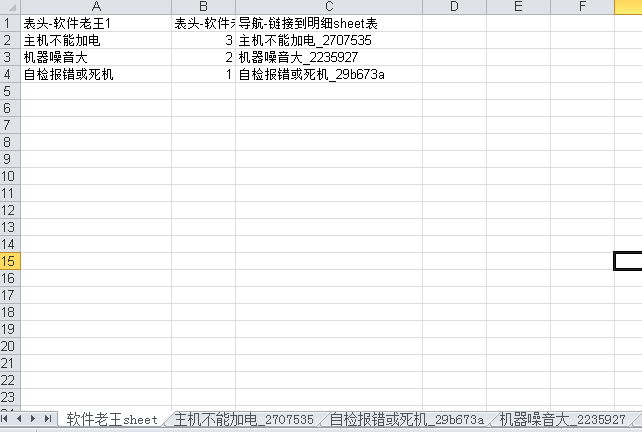
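To make the role of the stop-word list concrete, here is a small sketch of the filtering it performs. The tokens are written by hand instead of coming from jieba so that the example runs without extra dependencies, and the helper names `load_stop_words` and `drop_stop_words` are assumptions for illustration. Unlike `StopWordsList`, this version stores the words in a set, which keeps each membership test cheap when the list is long.

```python
def load_stop_words(lines):
    """Build a set of stop words from an iterable of lines (one word per line)."""
    return {line.strip() for line in lines if line.strip()}


def drop_stop_words(tokens, stop_words):
    """Keep only the tokens that are not stop words, preserving their order."""
    return [t for t in tokens if t not in stop_words]


if __name__ == "__main__":
    # In the post the lines come from stop.txt; here they are inlined.
    stop_words = load_stop_words(["的\n", "了\n", "\n"])
    # Hand-written tokens standing in for jieba output.
    print(drop_stop_words(["系統", "的", "登入", "失敗", "了"], stop_words))
```

With a real file, the same function could be called as `load_stop_words(open('stop.txt', encoding='utf8'))`.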

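(3) #3 Excel handling. This adds a sheet named "軟體老王sheet" with three header cells: "表頭-軟體老王1", "表頭-軟體老王2", and "導航-連結到明細sheet表" (navigation link to the detail sheet). The cells under "導航-連結到明細sheet表" carry hyperlinks that jump to the detail data. The source workbook is opened with openpyxl, and column B of its active sheet is used as the input.

    wbk = xlwt.Workbook(encoding='ascii')
    sheet = wbk.add_sheet("軟體老王sheet")  # sheet name
    sheet.write(0, 0, '表頭-軟體老王1')
    sheet.write(0, 1, '表頭-軟體老王2')
    sheet.write(0, 2, '導航-連結到明細sheet表')
    wb = openpyxl.load_workbook('軟體老王-source.xlsx')
    ws = wb.active
    col = ws['B']

(4) #4 Similarity processing

The underlying algorithm is explained in detail in (Text similarity hotness statistics (Python version)).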
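For readers without the earlier post at hand, the general idea of similarity-based grouping can be illustrated without gensim. The sketch below is not the pipeline used in this post: it compares raw bag-of-words counts with cosine similarity, works on sentence indices instead of sentence text, and all of its function names are assumptions. It only shows the greedy pattern of letting each unclaimed sentence claim every other unclaimed sentence whose similarity exceeds a threshold, with the group size serving as the hotness.

```python
import math
from collections import Counter


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def group_by_similarity(token_lists, threshold=0.5):
    """Greedy grouping: map the index of each group's first sentence to its member indices."""
    bags = [Counter(tokens) for tokens in token_lists]
    claimed = set()
    groups = {}
    for i, bag in enumerate(bags):
        if i in claimed:
            continue
        members = [j for j in range(len(bags))
                   if j not in claimed and cosine(bag, bags[j]) > threshold]
        claimed.update(members)
        if members:
            groups[i] = members
    return groups


if __name__ == "__main__":
    sentences = [["login", "failed"], ["login", "failed", "again"], ["page", "slow"]]
    groups = group_by_similarity(sentences)
    hotness = {first: len(members) for first, members in groups.items()}
    print(groups, hotness)
```

The 0.5 threshold matches the `sim[i] > 0.5` test in the post's code; everything else here is a simplification.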

The code for this step, as it appears in the listing:

    rcount = 1
    texts = []
    orig_txt = []
    key_list = []
    name_list = []
    sheet_list = []

    for cell in col:
        if cell.value is None:
            continue
        if not isinstance(cell.value, str):
            continue
        item = cell.value.strip('\n\r').split('\t')  # split on tabs
        string = item[0]
        if string is None or len(string) == 0:
            continue
        else:
            textstr = seg_sentence(string, stop_words)
            texts.append(textstr)
            orig_txt.append(string)
    dictionary = corpora.Dictionary(texts)
    feature_cnt = len(dictionary.token2id.keys())
    corpus = [dictionary.doc2bow(text) for text in texts]
    # Note: despite the variable name, the model built here is gensim's LsiModel
    tfidf = models.LsiModel(corpus)
    index = similarities.SparseMatrixSimilarity(tfidf[corpus], num_features=feature_cnt)
    result_lt = []
    word_dict = {}
    count = 0
    for keyword in orig_txt:
        count = count + 1
        print('開始執行,第' + str(count) + '行')
        if keyword in result_lt or keyword is None or len(keyword) == 0:
            continue
        kw_vector = dictionary.doc2bow(seg_sentence(keyword, stop_words))
        sim = index[tfidf[kw_vector]]
        result_list = []
        for i in range(len(sim)):
            if sim[i] > 0.5:
                if orig_txt[i] in result_lt and orig_txt[i] not in result_list:
                    continue
                result_list.append(orig_txt[i])
                result_lt.append(orig_txt[i])
        if len(result_list) > 0:
            word_dict[keyword] = len(result_list)
        if len(result_list) >= 1:
            sname = re.sub(u"([^\u4e00-\u9fa5\u0030-\u0039\u0041-\u005a\u0061-\u007a])", "", keyword[0:10]) + '_' \
                    + str(len(result_list) + len(str_to_hex(keyword))) + str_to_hex(keyword)[-5:]
            sheet_t = wbk.add_sheet(sname)  # name of the detail sheet
            for i in range(len(result_list)):
                sheet_t.write(i, 0, label=result_list[i])

(5) #5 Sort by hotness, from high to low - 軟體老王

    with open("rjlw.txt", 'w', encoding='utf-8') as wf2:
        orderList = list(word_dict.values())
        orderList.sort(reverse=True)
        count = len(orderList)
        for i in range(count):
            for key in word_dict:
                if word_dict[key] == orderList[i]:
                    key_list.append(key)
                    word_dict[key] = 0  # mark as consumed so ties are not added twice
        wf2.truncate()

(6) #6 Write to the target Excel file - 軟體老王

    for i in range(len(key_list)):
        sheet.write(i + rcount, 0, label=key_list[i])
        sheet.write(i + rcount, 1, label=orderList[i])
        if orderList[i] >= 1:
            # Must produce the same name as sname in step 4 for the link to resolve
            shname = re.sub(u"([^\u4e00-\u9fa5\u0030-\u0039\u0041-\u005a\u0061-\u007a])", "", key_list[i][0:10]) \
                     + '_' + str(orderList[i] + len(str_to_hex(key_list[i]))) + str_to_hex(key_list[i])[-5:]
            link = 'HYPERLINK("#%s!A1";"%s")' % (shname, shname)
            sheet.write(i + rcount, 2, xlwt.Formula(link))
    rcount = rcount + len(key_list)
    key_list = []
    orderList = []
    texts = []
    orig_txt = []
    wbk.save('軟體老王-target.xls')

2.3 Results

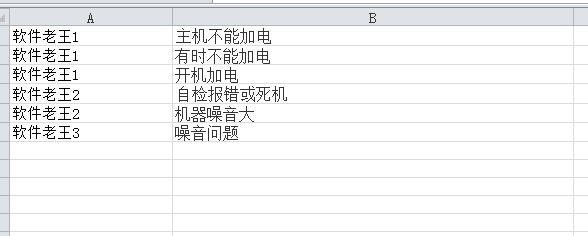

(1)軟體老王-source.xlsx

(2)軟體老王-target.xls

(3) Brief note

The real data cannot easily be published, so a few made-up demo rows are used to show what the output format looks like.

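I'm 「軟體老王」. If you found this useful, please follow so you hear about new posts right away! Comments are welcome in the discussion section or on the public account of the same name.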