Kubernetes Complete Binary Deployment (Curated)

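Contents

- 1. Basic Environment

- 2. Deploy DNS

- 3. Prepare Self-Signed Certificates

- 4. Deploy the Docker Environment

- 5. Deploy the Private Registry Harbor

- 6. Deploy the Master Nodes

- 6.1 Deploy the etcd Cluster

- 6.2 Deploy the kube-apiserver Cluster

- 6.2.1 Create the Client Certificate

- 6.2.2 Issue the kube-apiserver Certificate

- 6.2.3 kube-apiserver Configuration

- 6.3 L4 Reverse Proxy

- 6.3.1 Deploy Nginx

- 6.3.2 Deploy keepalived

- 6.4 Deploy controller-manager

- 6.5 Deploy kube-scheduler

- 7. Deploy Node Services

- 7.1 Deploy kubelet

- 7.1.1 Issue the kubelet Certificate

- 7.1.2 kubelet Configuration

- 7.1.3 Prepare the pause Base Image

- 7.1.4 Create the kubelet Startup Script

- 7.2 Deploy kube-proxy

- 7.2.1 Issue the kube-proxy Certificate

- 7.2.2 kube-proxy Configuration

- 7.2.3 Create the kube-proxy Startup Script

- 8. Verify the Cluster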

1. Basic Environment

1. Install epel-release

$ yum install epel-release -y

2. Make sure the kernel version is 3.10.x or later

$ uname -a

Linux k8s-node01 3.10.0-693.el7.x86_64

3. Disable the firewall and SELinux

$ systemctl stop firewalld && systemctl disable firewalld

$ sed -i.bak 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

$ setenforce 0

4. Time synchronization

$ echo '#time sync by lidao at 2017-03-08' >>/var/spool/cron/root

$ echo '*/5 * * * * /usr/sbin/ntpdate pool.ntp.org >/dev/null 2>&1' >>/var/spool/cron/root

$ crontab -l

5. Kernel tuning

$ cat >>/etc/sysctl.conf<<EOF

net.ipv4.tcp_fin_timeout = 2

net.ipv4.ip_forward = 1

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_tw_recycle = 1

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_keepalive_time = 600

net.ipv4.ip_local_port_range = 4000 65000

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.route.gc_timeout = 100

net.ipv4.tcp_syn_retries = 1

net.ipv4.tcp_synack_retries = 1

net.core.somaxconn = 16384

net.core.netdev_max_backlog = 16384

net.ipv4.tcp_max_orphans = 16384

EOF

$ sysctl -p

6. Install essential tools

$ yum install wget net-tools telnet tree nmap sysstat lrzsz dos2unix bind-utils -y

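2. Deploy DNS

DNS is provided by BIND 9. Ingress performs layer-7 proxying by host name, so in a lab environment every name would otherwise have to be bound in /etc/hosts, and containers cannot be handled that way. A DNS server is used here instead.

1. Install BIND 9 (on hdss7-11)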

$ yum install bind -y

2. Main configuration file

$ vim /etc/named.conf

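listen-on port 53 { 10.4.7.11; }; # address the DNS server listens on

allow-query { any; }; # allow every host to query this DNS server

forwarders { 10.4.7.2; }; # upstream DNS server

recursion yes; # resolve queries recursively (the alternative is iterative)

dnssec-enable no; # disabled to save resources

dnssec-validation no; # disabled to save resources

Check the configuration syntax

# No output means the syntax is valid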

$ named-checkconf

3. Zone configuration file

Two zones are defined, both as master zones, and both allow dynamic updates from this host.

$ vim /etc/named.rfc1912.zones

zone "host.com" IN {

type master;

file "host.com.zone";

allow-update { 10.4.7.11; };

};

zone "od.com" IN {

type master;

file "od.com.zone";

allow-update { 10.4.7.11; };

};

4. Create the zone data files

- /var/named/host.com.zone

$ORIGIN host.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.host.com. dnsadmin.host.com. (

2019011001 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

NS dns.host.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

HDSS7-11 A 10.4.7.11

HDSS7-12 A 10.4.7.12

HDSS7-21 A 10.4.7.21

HDSS7-22 A 10.4.7.22

HDSS7-200 A 10.4.7.200

- /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019011001 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

NS dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

5. Start the DNS service

$ systemctl start named && systemctl enable named

6. Verify name resolution

$ dig -t A hdss7-21.host.com @10.4.7.11 +short

10.4.7.21

$ dig -t A hdss7-200.host.com @10.4.7.11 +short

10.4.7.200

7. DNS client configuration

On all nodes:

- Set the DNS server on the network interface

# Set DNS1 in the interface configuration file

$ vim /etc/sysconfig/network-scripts/ifcfg-eth0

DNS1=10.4.7.11

$ systemctl restart network

# Test with ping

$ ping baidu.com

PING baidu.com (39.156.69.79) 56(84) bytes of data.

64 bytes from 39.156.69.79 (39.156.69.79): icmp_seq=1 ttl=128 time=47.0 ms

64 bytes from 39.156.69.79 (39.156.69.79): icmp_seq=2 ttl=128 time=48.3 ms

$ ping hdss7-21.host.com

PING HDSS7-21.host.com (10.4.7.21) 56(84) bytes of data.

64 bytes from 10.4.7.21 (10.4.7.21): icmp_seq=1 ttl=64 time=0.821 ms

64 bytes from 10.4.7.21 (10.4.7.21): icmp_seq=2 ttl=64 time=0.598 ms

- Add a search domain (for short host names)

$ vim /etc/resolv.conf

# Generated by NetworkManager

search host.com

nameserver 10.4.7.11

# Ping a short host name

$ ping hdss7-200

PING HDSS7-200.host.com (10.4.7.200) 56(84) bytes of data.

64 bytes from 10.4.7.200 (10.4.7.200): icmp_seq=1 ttl=64 time=1.18 ms

64 bytes from 10.4.7.200 (10.4.7.200): icmp_seq=2 ttl=64 time=0.456 ms

3. Prepare Self-Signed Certificates

On the operations host hdss7-200.host.com:

1. Install CFSSL

$ wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/bin/cfssl

$ wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/bin/cfssl-json

$ wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/bin/cfssl-certinfo

$ chmod +x /usr/bin/cfssl*

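- About the cfssl tools:

- cfssl: the main tool for issuing certificates

- cfssl-json: converts the certificate that cfssl generates (in JSON format) into certificate files

- cfssl-certinfo: inspects and verifies certificate information

2. Create the JSON configuration file for the CA certificate signing request (CSR)

Self-signed certificates need a root CA certificate, which can be issued by a public authority or self-signed.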

$ vim /opt/certs/ca-csr.json

{

"CN": "Sky",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

],

"ca": {

"expiry": "175200h"

}

}

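CN: Common Name. Browsers use this field to verify that a site is legitimate, so it is usually the domain name. It is very important.

C: country

ST: state or province

L: locality or city

O: organization or company name

OU: organizational unit, such as a department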
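The CSR files used later in this guide share the same key and names sections and differ only in CN and hosts. As a rough sketch, they could be produced by a small shell function. The name make_csr_json is hypothetical and is not part of cfssl; the key and names values are copied from ca-csr.json above, and the "ca" section of the root CSR is deliberately left out.

```shell
#!/bin/sh
# make_csr_json is a hypothetical helper, not part of cfssl.
# Usage: make_csr_json CN [HOST...]
# Prints a CSR JSON document to stdout.
make_csr_json() {
  cn="$1"
  shift
  hosts=""
  for h in "$@"; do
    # Build a comma-separated list of quoted host entries.
    if [ -n "$hosts" ]; then
      hosts="$hosts, "
    fi
    hosts="$hosts\"$h\""
  done
  cat <<EOF
{
  "CN": "$cn",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "beijing", "L": "beijing", "O": "od", "OU": "ops" }
  ]
}
EOF
}
```

For example, `make_csr_json k8s-etcd 10.4.7.12 10.4.7.21 > etcd-peer-csr.json` would write a request with two host entries.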

3. Generate the CA certificate and private key

$ cfssl gencert -initca ca-csr.json | cfssl-json -bare ca

2020/01/10 13:58:49 [INFO] generating a new CA key and certificate from CSR

2020/01/10 13:58:49 [INFO] generate received request

2020/01/10 13:58:49 [INFO] received CSR

2020/01/10 13:58:49 [INFO] generating key: rsa-2048

2020/01/10 13:58:49 [INFO] encoded CSR

2020/01/10 13:58:49 [INFO] signed certificate with serial number 214125439771303219718649555160058070055859759808

$ ll

total 16

-rw-r--r-- 1 root root 328 Jan 10 13:53 ca-csr.json # request file

-rw------- 1 root root 1675 Jan 10 13:58 ca-key.pem # private key

-rw-r--r-- 1 root root 993 Jan 10 13:58 ca.csr

-rw-r--r-- 1 root root 1346 Jan 10 13:58 ca.pem # certificate

4. Deploy the Docker Environment

On hdss7-200.host.com, hdss7-21.host.com, and hdss7-22.host.com:

1. Install Docker CE with the convenience script

$ curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

$ docker version

2. Configuration file

$ mkdir -p /etc/docker/

$ mkdir -p /data/docker

$ vim /etc/docker/daemon.json

{

"graph": "/data/docker",

"storage-driver": "overlay2",

"insecure-registries": ["registry.access.redhat.com","quay.io","harbor.od.com"],

"registry-mirrors": ["https://q2gr04ke.mirror.aliyuncs.com"],

"bip": "172.7.21.1/24",

"exec-opts": ["native.cgroupdriver=systemd"],

"live-restore": true

}

bip: 172.7.x.1/24, where x is the last octet of the host's IP address (for example, 21 on 10.4.7.21).
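The bip rule can be written down as a one-line derivation. This is only a sketch; the function name bip_for_host is made up for illustration.

```shell
#!/bin/sh
# bip_for_host is a hypothetical helper name.
# Derives the docker bridge address from the host IP, following the
# 172.7.x.1/24 rule, where x is the last octet of the host IP.
bip_for_host() {
  host_ip="$1"
  last_octet="${host_ip##*.}"   # text after the final dot
  echo "172.7.${last_octet}.1/24"
}

bip_for_host 10.4.7.21   # prints 172.7.21.1/24
```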

3. Start docker

$ systemctl restart docker.service && systemctl enable docker.service

5. Deploy the Private Registry Harbor

On hdss7-200.host.com:

1. Download the release package and extract it

https://github.com/goharbor/harbor

$ tar xf harbor-offline-installer-v1.8.1.tgz -C /opt/

$ mv /opt/harbor/ /opt/harbor-v1.8.1

$ ln -s /opt/harbor-v1.8.1/ /opt/harbor

2. Configuration file

$ vim /opt/harbor/harbor.yml

hostname: harbor.od.com

http:

  port: 180

data_volume: /data/harbor

location: /data/harbor/logs

Create the corresponding directories

$ mkdir -p /data/harbor/logs

3. Install docker-compose

docker-compose is used to orchestrate the Harbor containers.

$ yum install docker-compose -y

4. Start Harbor

$ sh /opt/harbor/install.sh

$ docker-compose ps

5. Proxy access to Harbor through Nginx

$ yum install nginx -y

$ vim /etc/nginx/conf.d/harbor.od.com.conf

server {

listen 80;

server_name harbor.od.com;

client_max_body_size 1000m;

location / {

proxy_pass http://127.0.0.1:180;

}

}

Configuration notes: requests to harbor.od.com on port 80 are proxied to 127.0.0.1:180.

Start nginx

$ nginx -t

$ systemctl start nginx && systemctl enable nginx

6. Add a DNS A record on hdss7-11

$ vi /var/named/od.com.zone

harbor A 10.4.7.200

Remember to increment the zone serial number by one.
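Bumping the serial by hand is easy to forget. A minimal sketch of doing it with awk follows; it assumes the zone file marks the serial line with a "; serial" comment, as the zone files in this guide do. The function name bump_serial is hypothetical.

```shell
#!/bin/sh
# bump_serial FILE
# Prints FILE with the number on the "; serial" line increased by one.
# It does not modify FILE; redirect the output to a new file to apply it.
bump_serial() {
  awk '/; serial/ { sub(/[0-9]+/, sprintf("%d", $1 + 1)) } { print }' "$1"
}
```

One possible use is `bump_serial /var/named/od.com.zone > /tmp/od.com.zone`, followed by reviewing the result before copying it back.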

Restart DNS and test

$ systemctl restart named

$ dig -t A harbor.od.com +short

10.4.7.200

7. Open harbor.od.com in a browser

Username: admin, password: Harbor12345

8. Create a public project named public in Harbor

9. Pull the nginx image from docker.io

$ docker pull nginx:1.7.9

# Equivalent to

$ docker pull docker.io/library/nginx:1.7.9

Tag the nginx image pulled from the public registry for the public project in the Harbor registry created above.

# Find the nginx image ID and give it the new tag

$ docker tag 84581e99d807 harbor.od.com/public/nginx:v1.7.9

# Log in to Harbor first

$ docker login harbor.od.com

Username: admin

Password:

# Then push the image to the private registry

$ docker push harbor.od.com/public/nginx:v1.7.9
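The tag used above follows a simple pattern: registry host, the public project, the image name, and the upstream version prefixed with v. As a sketch, with a made-up function name harbor_ref, the reference can be built like this:

```shell
#!/bin/sh
# harbor_ref NAME VERSION
# Builds the image reference used in this guide for the "public" project.
# harbor_ref is a hypothetical helper name.
harbor_ref() {
  name="$1"
  version="$2"
  echo "harbor.od.com/public/${name}:v${version}"
}

harbor_ref nginx 1.7.9   # prints harbor.od.com/public/nginx:v1.7.9
```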

6. Deploy the Master Nodes

6.1 Deploy the etcd Cluster

Cluster plan

| Host name | Role | IP |

|---|---|---|

| hdss7-12.host.com | etcd leader | 10.4.7.12 |

| hdss7-21.host.com | etcd follower | 10.4.7.21 |

| hdss7-22.host.com | etcd follower | 10.4.7.22 |

Note: this guide uses hdss7-12.host.com as the example; the other two hosts are deployed in the same way.

1. Create the signing configuration file based on the root certificate

On the operations host hdss7-200:

$ vim /opt/certs/ca-config.json

{

"signing": {

"default": {

"expiry": "175200h"

},

"profiles": {

"server": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

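Certificate types

client: used by clients so that the server can authenticate them, for example etcdctl, etcd proxy, fleetctl, and the docker client.

server: used by servers so that clients can verify the server's identity, for example the docker daemon and kube-apiserver.

peer: a two-way certificate, used for communication between etcd cluster members.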
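Every certificate in this guide is signed with the same cfssl invocation; only the profile and the file name change. The sketch below prints that command line instead of running it, so it can be inspected first. The function name cfssl_sign_cmd is hypothetical; the flags are the ones used in the signing steps of this guide.

```shell
#!/bin/sh
# cfssl_sign_cmd PROFILE NAME
# Prints the signing command for NAME-csr.json using one of the three
# profiles defined in ca-config.json. It does not execute cfssl.
cfssl_sign_cmd() {
  profile="$1"
  name="$2"
  case "$profile" in
    server|client|peer) ;;
    *) echo "unknown profile: $profile" >&2; return 1 ;;
  esac
  echo "cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=$profile $name-csr.json | cfssl-json -bare $name"
}
```

For instance, `cfssl_sign_cmd peer etcd-peer` prints the command for the etcd peer certificate.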

2. Create the JSON configuration file for the etcd certificate signing request (CSR)

On the operations host hdss7-200:

$ vim /opt/certs/etcd-peer-csr.json

{

"CN": "k8s-etcd",

"hosts": [

"10.4.7.11",

"10.4.7.12",

"10.4.7.21",

"10.4.7.22"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

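hosts: the IP addresses of the machines that run etcd; reserve a few spare addresses for future members.

3. Generate the etcd certificate and private key

On the operations host hdss7-200: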

$ cd /opt/certs

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer etcd-peer-csr.json |cfssl-json -bare etcd-peer

2020/01/10 15:05:30 [INFO] generate received request

2020/01/10 15:05:30 [INFO] received CSR

2020/01/10 15:05:30 [INFO] generating key: rsa-2048

2020/01/10 15:05:30 [INFO] encoded CSR

2020/01/10 15:05:30 [INFO] signed certificate with serial number 257419759502713087580344599035913411225571544160

2020/01/10 15:05:30 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

Check the generated certificate and private key

$ ll etcd-peer*

-rw-r--r-- 1 root root 364 Jan 10 15:03 etcd-peer-csr.json

-rw------- 1 root root 1675 Jan 10 15:05 etcd-peer-key.pem

-rw-r--r-- 1 root root 1062 Jan 10 15:05 etcd-peer.csr

-rw-r--r-- 1 root root 1428 Jan 10 15:05 etcd-peer.pem

4. Create the etcd user

On hdss7-12.host.com:

$ useradd -s /sbin/nologin -M etcd

5. Download the software, extract it, and create a symlink

On hdss7-12.host.com:

etcd download address

$ tar xf etcd-v3.1.20-linux-amd64.tar.gz -C /opt/

$ mv /opt/etcd-v3.1.20-linux-amd64/ /opt/etcd-v3.1.20

$ ln -s /opt/etcd-v3.1.20/ /opt/etcd

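6. Create directories and copy the certificate and private key

On hdss7-12.host.com:

- Create the directories

$ mkdir -p /opt/etcd/certs /data/etcd /data/logs/etcd-server

- Copy the certificates

Copy ca.pem, etcd-peer-key.pem, and etcd-peer.pem generated on the operations host into /opt/etcd/certs. The private key file must have mode 600.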

$ ll -l

total 12

-rw-r--r-- 1 root root 1346 Jan 27 12:04 ca.pem

-rw------- 1 root root 1675 Jan 27 12:03 etcd-peer-key.pem

-rw-r--r-- 1 root root 1432 Jan 27 12:03 etcd-peer.pem
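The mode 600 requirement on the private key can be checked in a script rather than by reading the listing. This is a sketch that assumes GNU stat (the `-c '%a'` form shipped with CentOS); key_mode_ok is a hypothetical helper name.

```shell
#!/bin/sh
# key_mode_ok FILE
# Succeeds only when FILE has permission bits 600. Assumes GNU stat.
key_mode_ok() {
  [ "$(stat -c '%a' "$1")" = "600" ]
}
```

A possible use is `key_mode_ok /opt/etcd/certs/etcd-peer-key.pem || chmod 600 /opt/etcd/certs/etcd-peer-key.pem`.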

- Change ownership

$ chown -R etcd.etcd /opt/etcd-v3.1.20 /data/etcd/ /data/logs/etcd-server/

etcd must be started as the etcd user.

7. Create the etcd startup script

On hdss7-12.host.com:

$ vim /opt/etcd/etcd-server-startup.sh

#!/bin/sh

./etcd --name etcd-server-7-12 \

--data-dir /data/etcd/etcd-server \

--listen-peer-urls https://10.4.7.12:2380 \

--listen-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--quota-backend-bytes 8000000000 \

--initial-advertise-peer-urls https://10.4.7.12:2380 \

--advertise-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--initial-cluster etcd-server-7-12=https://10.4.7.12:2380,etcd-server-7-21=https://10.4.7.21:2380,etcd-server-7-22=https://10.4.7.22:2380 \

--ca-file ./certs/ca.pem \

--cert-file ./certs/etcd-peer.pem \

--key-file ./certs/etcd-peer-key.pem \

--client-cert-auth \

--trusted-ca-file ./certs/ca.pem \

--peer-ca-file ./certs/ca.pem \

--peer-cert-file ./certs/etcd-peer.pem \

--peer-key-file ./certs/etcd-peer-key.pem \

--peer-client-cert-auth \

--peer-trusted-ca-file ./certs/ca.pem \

--log-output stdout

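Parameter reference

| Parameter | Description |

|---|---|

| --listen-peer-urls | Used on this member: the address on which it listens for traffic from other members. An all-zero IP means listen on all interfaces of this member |

| --listen-client-urls | Used on this member: the address on which it listens for traffic from etcd clients. An all-zero IP means listen on all interfaces of this member |

| --initial-advertise-peer-urls | Used by other members: the address through which they exchange information with this member. It must be reachable from the other members. With static configuration, this value must also appear in --initial-cluster. The member ID is derived from --initial-cluster-token and --initial-advertise-peer-urls |

| --advertise-client-urls | Used by etcd clients: the address through which they talk to this member. It must be reachable from the clients |

| --initial-cluster | All members of the etcd cluster, separated by commas |

| --quota-backend-bytes | Raises an alarm when the etcd backend storage exceeds this size |

| --client-cert-auth | Enables client certificate authentication |

| --log-output | Specify stdout or stderr to skip journald logging even when running under systemd |

For details, see the etcd documentation.

Make the script executable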

$ chmod +x /opt/etcd/etcd-server-startup.sh

8. Create the etcd-server startup configuration

On hdss7-12.host.com:

Install supervisor (advantage: it automatically restarts processes that die)

$ yum install supervisor -y

$ systemctl start supervisord && systemctl enable supervisord

Put the etcd startup script under supervisor's control

$ vim /etc/supervisord.d/etcd-server.ini

[program:etcd-server-7-12]

command=/opt/etcd/etcd-server-startup.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/etcd ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=etcd ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/etcd-server/etcd.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

killasgroup=true

stopasgroup=true

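Note: the startup configuration differs slightly on each etcd host (the member name and the IP addresses); adjust it when configuring the other nodes.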
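The values that change from host to host follow a pattern: the member name is etcd-server- plus the last two octets of the IP, and --initial-cluster lists every member with its peer URL. A sketch of deriving both from the IP addresses is shown below; the function names are hypothetical, and the naming pattern is inferred from the names used in this guide.

```shell
#!/bin/sh
# etcd_name_for_ip IP
# 10.4.7.12 -> etcd-server-7-12 (last two octets joined with a dash).
etcd_name_for_ip() {
  member_ip="$1"
  rest="${member_ip#*.*.}"     # drop the first two octets: 10.4.7.12 -> 7.12
  echo "etcd-server-$(echo "$rest" | tr . -)"
}

# initial_cluster IP...
# Builds the value for --initial-cluster from a list of member IPs.
initial_cluster() {
  cluster=""
  for node_ip in "$@"; do
    entry="$(etcd_name_for_ip "$node_ip")=https://${node_ip}:2380"
    if [ -n "$cluster" ]; then
      cluster="$cluster,$entry"
    else
      cluster="$entry"
    fi
  done
  echo "$cluster"
}
```

Calling `initial_cluster 10.4.7.12 10.4.7.21 10.4.7.22` yields the same string that the startup script passes to --initial-cluster.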

9. Start etcd

On hdss7-12.host.com:

$ supervisorctl update

etcd-server-7-12: added process group

Check that it started

$ supervisorctl status

etcd-server-7-12 RUNNING pid 5029, uptime 0:02:11

$ netstat -lntup | grep "etcd"

tcp 0 0 10.4.7.12:2379 0.0.0.0:* LISTEN 5030/./etcd

tcp 0 0 127.0.0.1:2379 0.0.0.0:* LISTEN 5030/./etcd

tcp 0 0 10.4.7.12:2380 0.0.0.0:* LISTEN 5030/./etcd

10. Check cluster health (only after all three nodes are up)

$ /opt/etcd/etcdctl cluster-health

member 988139385f78284 is healthy: got healthy result from http://127.0.0.1:2379

member 5a0ef2a004fc4349 is healthy: got healthy result from http://127.0.0.1:2379

member f4a0cb0a765574a8 is healthy: got healthy result from http://127.0.0.1:2379

cluster is healthy

Check member roles

$ /opt/etcd/etcdctl member list

988139385f78284: name=etcd-server-7-22 peerURLs=https://10.4.7.22:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.22:2379 isLeader=false

5a0ef2a004fc4349: name=etcd-server-7-21 peerURLs=https://10.4.7.21:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.21:2379 isLeader=false

f4a0cb0a765574a8: name=etcd-server-7-12 peerURLs=https://10.4.7.12:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.12:2379 isLeader=true
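To pick the leader out of this listing in a script, the isLeader=true line can be filtered with awk. This sketch assumes the v2-style `member list` output format shown above and reads it from standard input; etcd_leader is a hypothetical name.

```shell
#!/bin/sh
# etcd_leader
# Reads `etcdctl member list` output (v2 format) on stdin and prints the
# name of the member whose line carries isLeader=true.
etcd_leader() {
  awk '/isLeader=true/ {
    for (i = 1; i <= NF; i++) {
      if ($i ~ /^name=/) {
        sub(/^name=/, "", $i)
        print $i
      }
    }
  }'
}
```

It would typically be used as `/opt/etcd/etcdctl member list | etcd_leader`.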

6.2 Deploy the kube-apiserver Cluster

Cluster plan

| Host name | Role | IP |

|---|---|---|

| hdss7-21.host.com | kube-apiserver | 10.4.7.21 |

| hdss7-22.host.com | kube-apiserver | 10.4.7.22 |

| hdss7-11.host.com | L4 load balancer | 10.4.7.11 |

| hdss7-12.host.com | L4 load balancer | 10.4.7.12 |

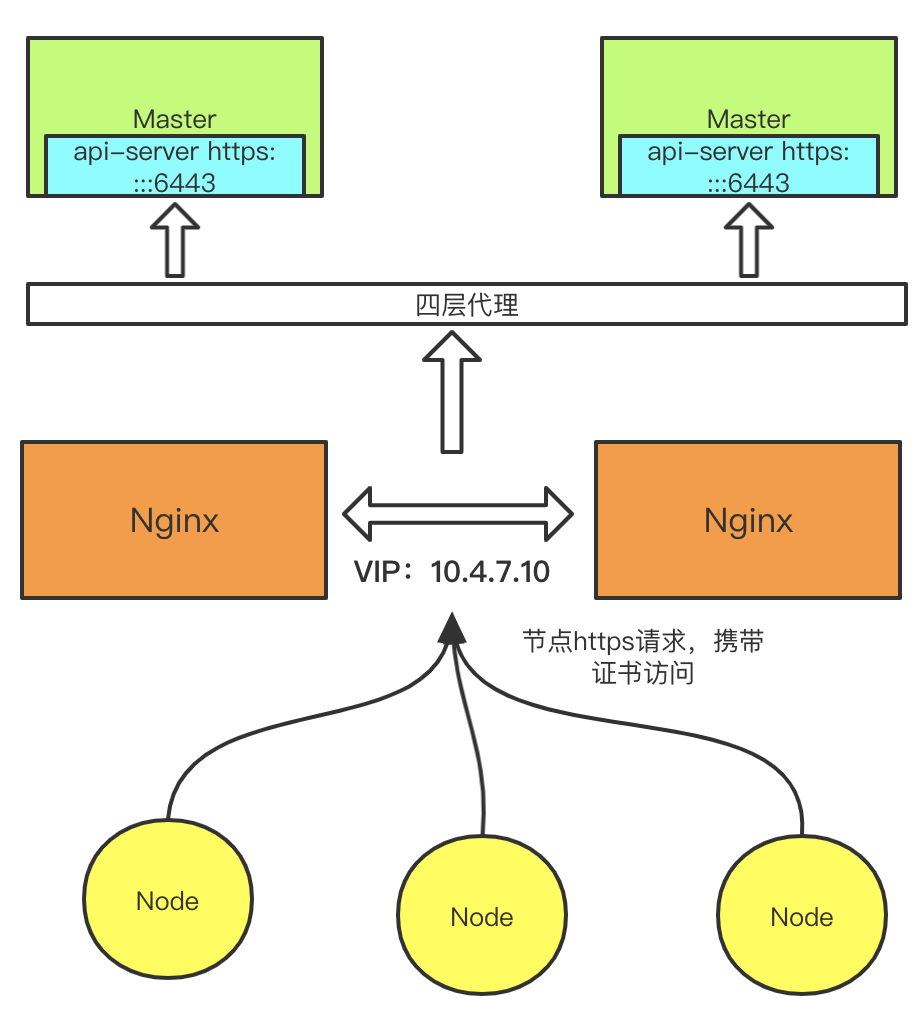

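Note: 10.4.7.11 and 10.4.7.12 use nginx as an L4 load balancer, and keepalived provides the VIP 10.4.7.10 in front of the two kube-apiservers for high availability.

This guide uses hdss7-21.host.com as the example; the other compute node is deployed in the same way.

Download the software, extract it, and create a symlink

On hdss7-21.host.com:

Official Kubernetes GitHub repository

Kubernetes download address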

$ tar xf /opt/src/kubernetes-server-linux-amd64-v1.15.2.tar.gz -C /opt/

$ mv /opt/kubernetes/ /opt/kubernetes-v1.15.2

$ ln -s /opt/kubernetes-v1.15.2/ /opt/kubernetes

6.2.1 Create the Client Certificate

On the operations host hdss7-200:

1. Create the JSON configuration file for the certificate signing request (CSR)

$ vim /opt/certs/client-csr.json

{

"CN": "k8s-node",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

2. Generate the client certificate and private key

$ cd /opt/certs/

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client-csr.json |cfssl-json -bare client

2020/01/10 16:16:35 [INFO] generate received request

2020/01/10 16:16:35 [INFO] received CSR

2020/01/10 16:16:35 [INFO] generating key: rsa-2048

2020/01/10 16:16:36 [INFO] encoded CSR

2020/01/10 16:16:36 [INFO] signed certificate with serial number 294650890732881478597150479545220844543007627512

2020/01/10 16:16:36 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

3. Check the generated certificate and private key

$ ll client*

-rw-r--r-- 1 root root 280 Jan 10 16:15 client-csr.json

-rw------- 1 root root 1679 Jan 10 16:16 client-key.pem

-rw-r--r-- 1 root root 993 Jan 10 16:16 client.csr

-rw-r--r-- 1 root root 1363 Jan 10 16:16 client.pem

6.2.2 Issue the kube-apiserver Certificate

On the operations host hdss7-200:

1. Create the JSON configuration file for the certificate signing request (CSR)

$ vim /opt/certs/apiserver-csr.json

{

"CN": "k8s-apiserver",

"hosts": [

"127.0.0.1",

"192.168.0.1",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local",

"10.4.7.10",

"10.4.7.21",

"10.4.7.22",

"10.4.7.23"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

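Notes:

- The hosts field lists the IP addresses and domain names authorized to use this certificate. Here it contains the VIP, the apiserver node IPs, and the kubernetes service IP and domain names.

- A domain name must not end with a dot (for example, kubernetes.default.svc.cluster.local. is invalid); otherwise parsing fails with: x509: cannot parse dnsName "kubernetes.default.svc.cluster.local."

- If you use a domain other than cluster.local, such as opsnull.com, change the last two domain names in the list to kubernetes.default.svc.opsnull and kubernetes.default.svc.opsnull.com.

- The kubernetes service IP is created automatically by the apiserver. It is normally the first IP of the network given by --service-cluster-ip-range, and can be retrieved later with: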

$ kubectl get svc kubernetes

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 4d
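Because the certificate is signed before the cluster exists, the service IP has to be worked out from the CIDR in advance. The following sketch computes the first address of an IPv4 network with shell arithmetic; first_service_ip is a hypothetical name, and only IPv4 CIDRs are handled.

```shell
#!/bin/sh
# first_service_ip CIDR
# Prints the first usable address of an IPv4 network, for example
# 192.168.0.0/16 -> 192.168.0.1.
first_service_ip() {
  cidr="$1"
  net_ip="${cidr%/*}"
  prefix="${cidr#*/}"
  old_ifs="$IFS"
  IFS=.
  set -- $net_ip               # split the address into four octets
  IFS="$old_ifs"
  n=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
  first=$(( (n & mask) + 1 ))  # network address plus one
  echo "$(( (first >> 24) & 255 )).$(( (first >> 16) & 255 )).$(( (first >> 8) & 255 )).$(( first & 255 ))"
}

first_service_ip 192.168.0.0/16   # prints 192.168.0.1
```

The result matches the 192.168.0.1 entry in the hosts list of apiserver-csr.json.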

2. Generate the api-server certificate and private key

$ cd /opt/certs

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server apiserver-csr.json |cfssl-json -bare apiserver

2020/01/10 16:21:06 [INFO] generate received request

2020/01/10 16:21:06 [INFO] received CSR

2020/01/10 16:21:06 [INFO] generating key: rsa-2048

2020/01/10 16:21:07 [INFO] encoded CSR

2020/01/10 16:21:07 [INFO] signed certificate with serial number 533398970701884951320970228765072309875544569205

2020/01/10 16:21:07 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

3. Check the generated certificate and private key

$ ll apiserver*

-rw-r--r-- 1 root root 566 Jan 10 16:19 apiserver-csr.json

-rw------- 1 root root 1679 Jan 10 16:21 apiserver-key.pem

-rw-r--r-- 1 root root 1249 Jan 10 16:21 apiserver.csr

-rw-r--r-- 1 root root 1598 Jan 10 16:21 apiserver.pem

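6.2.3 kube-apiserver Configuration

On hdss7-21:

1. Create directories for the certificates, private keys, and configuration files

$ mkdir /opt/kubernetes/server/bin/cert /opt/kubernetes/server/bin/conf

/opt/kubernetes/server/bin/cert: stores the certificates

/opt/kubernetes/server/bin/conf: stores the startup configuration files

2. Copy the certificates and private keys; private key files must have mode 600

$ ll # three certificate/key pairs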

total 24

-rw------- 1 root root 1679 Jan 10 16:32 apiserver-key.pem

-rw-r--r-- 1 root root 1598 Jan 10 16:32 apiserver.pem

-rw------- 1 root root 1675 Jan 10 16:32 ca-key.pem

-rw-r--r-- 1 root root 1346 Jan 10 16:32 ca.pem

-rw------- 1 root root 1679 Jan 10 16:32 client-key.pem

-rw-r--r-- 1 root root 1363 Jan 10 16:32 client.pem

3. Create the api-server audit policy file

$ vi /opt/kubernetes/server/bin/conf/audit.yaml

apiVersion: audit.k8s.io/v1beta1 # This is required.

kind: Policy

# Don't generate audit events for all requests in RequestReceived stage.

omitStages:

  - "RequestReceived"

rules:

  # Log pod changes at RequestResponse level

  - level: RequestResponse

    resources:

    - group: ""

      # Resource "pods" doesn't match requests to any subresource of pods,

      # which is consistent with the RBAC policy.

      resources: ["pods"]

  # Log "pods/log", "pods/status" at Metadata level

  - level: Metadata

    resources:

    - group: ""

      resources: ["pods/log", "pods/status"]

  # Don't log requests to a configmap called "controller-leader"

  - level: None

    resources:

    - group: ""

      resources: ["configmaps"]

      resourceNames: ["controller-leader"]

  # Don't log watch requests by the "system:kube-proxy" on endpoints or services

  - level: None

    users: ["system:kube-proxy"]

    verbs: ["watch"]

    resources:

    - group: "" # core API group

      resources: ["endpoints", "services"]

  # Don't log authenticated requests to certain non-resource URL paths.

  - level: None

    userGroups: ["system:authenticated"]

    nonResourceURLs:

    - "/api*" # Wildcard matching.

    - "/version"

  # Log the request body of configmap changes in kube-system.

  - level: Request

    resources:

    - group: "" # core API group

      resources: ["configmaps"]

    # This rule only applies to resources in the "kube-system" namespace.

    # The empty string "" can be used to select non-namespaced resources.

    namespaces: ["kube-system"]

  # Log configmap and secret changes in all other namespaces at the Metadata level.

  - level: Metadata

    resources:

    - group: "" # core API group

      resources: ["secrets", "configmaps"]

  # Log all other resources in core and extensions at the Request level.

  - level: Request

    resources:

    - group: "" # core API group

    - group: "extensions" # Version of group should NOT be included.

  # A catch-all rule to log all other requests at the Metadata level.

  - level: Metadata

    # Long-running requests like watches that fall under this rule will not

    # generate an audit event in RequestReceived.

    omitStages:

      - "RequestReceived"

4. Create the startup script

$ vim /opt/kubernetes/server/bin/kube-apiserver.sh

#!/bin/bash

./kube-apiserver \

--apiserver-count 2 \

--insecure-port 8080 \

--secure-port 6443 \

--audit-log-path /data/logs/kubernetes/kube-apiserver/audit-log \

--audit-policy-file ./conf/audit.yaml \

--authorization-mode RBAC \

--client-ca-file ./cert/ca.pem \

--requestheader-client-ca-file ./cert/ca.pem \

--enable-admission-plugins NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota \

--etcd-cafile ./cert/ca.pem \

--etcd-certfile ./cert/client.pem \

--etcd-keyfile ./cert/client-key.pem \

--etcd-servers https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \

--service-account-key-file ./cert/ca-key.pem \

--service-cluster-ip-range 192.168.0.0/16 \

--service-node-port-range 3000-29999 \

--target-ram-mb=1024 \

--kubelet-client-certificate ./cert/client.pem \

--kubelet-client-key ./cert/client-key.pem \

--log-dir /data/logs/kubernetes/kube-apiserver \

--tls-cert-file ./cert/apiserver.pem \

--tls-private-key-file ./cert/apiserver-key.pem \

--v 2

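service-cluster-ip-range: the range of service IPs (cluster IPs)

Parameter reference

| Parameter | Description |

|---|---|

| --apiserver-count | Number of kube-apiserver instances running in the cluster (must be a positive number). All instances serve requests at the same time; kube-apiserver does not elect a leader |

| --authorization-mode | Authorization modes to enable; unauthorized requests are rejected. Default: AlwaysAllow. Comma-separated list of: AlwaysAllow, AlwaysDeny, ABAC, Webhook, RBAC, Node |

| --enable-admission-plugins | Admission plugins to enable |

| --etcd-servers | List of etcd servers (format: scheme://ip:port), comma separated |

| --service-account-key-file | File containing PEM-encoded x509 RSA or ECDSA private or public keys, used to verify service account tokens. The file may contain multiple keys, and the flag may be given multiple times with different files. If unspecified, --tls-private-key-file is used. Must be specified when --service-account-signing-key is provided |

| --service-cluster-ip-range | CIDR range from which service cluster IPs are assigned. It must not overlap with any IP range assigned to nodes for pods (default 10.0.0.0/24) |

| --service-node-port-range | Port range reserved for NodePort services. Default: 30000-32767 |

| --kubelet-client-certificate, --kubelet-client-key | If set, the kubelet APIs are accessed over HTTPS. RBAC rules must be defined for the user of the certificate (here client.pem, whose CN is k8s-node); otherwise access to the kubelet API is reported as unauthorized |

| --tls-cert-file, --tls-private-key-file | Server certificate and private key used when serving over HTTPS, including metrics |

| --insecure-port | HTTP serving port, default 8080. Binds to localhost by default (change with --insecure-bind-address). No authentication or authorization checks are performed over HTTP |

| --secure-port | HTTPS serving port, default 6443. Binds by default to the first non-localhost network interface (change with --bind-address). The certificate and key are set with --tls-cert-file and --tls-private-key-file; authentication uses token files or client certificates, and authorization is policy based |

Make the script executable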

$ chmod +x /opt/kubernetes/server/bin/kube-apiserver.sh

5. Create the api-server startup configuration

$ vi /etc/supervisord.d/kube-apiserver.ini

[program:kube-apiserver-7-21]

command=/opt/kubernetes/server/bin/kube-apiserver.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-apiserver/apiserver.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

killasgroup=true

stopasgroup=true

6. Create the log directory

$ mkdir -p /data/logs/kubernetes/kube-apiserver

7. Start and check

$ supervisorctl update

$ supervisorctl status

etcd-server-7-22 RUNNING pid 4013, uptime 1:12:36

kube-apiserver-7-22 RUNNING pid 4596, uptime 0:00:31

8. Check the api-server ports

$ netstat -lntup | egrep "8080|6443"

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 20375/./kube-apiser

tcp6 0 0 :::6443 :::* LISTEN 20375/./kube-apiser

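6.3 L4 Reverse Proxy

On hdss7-11 and hdss7-12, nginx provides an L4 reverse proxy that forwards traffic to the backend Kubernetes api-servers:

The Kubernetes components on every node (kubelet and kube-proxy) access https://10.4.7.10:7443 and present their certificates.

6.3.1 Deploy Nginx

1. Install nginx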

$ yum install nginx -y

2. nginx configuration file

$ vim /etc/nginx/nginx.conf # paste outside the http block

stream {

# IP addresses and HTTPS port of the Kubernetes api-servers

upstream kube-apiserver {

server 10.4.7.21:6443 max_fails=3 fail_timeout=30s;

server 10.4.7.22:6443 max_fails=3 fail_timeout=30s;

}

# Listen on port 7443 and forward the traffic to the upstream named in proxy_pass

server {

listen 7443;

proxy_connect_timeout 2s;

proxy_timeout 900s;

proxy_pass kube-apiserver;

}

}
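If more api-servers are added later, the upstream block has to list each of them. As a sketch, the block can be rendered from a list of IPs; render_upstream is a hypothetical helper, and the port and failure settings are copied from the configuration above.

```shell
#!/bin/sh
# render_upstream IP...
# Prints an nginx stream upstream block with one server line per api-server.
render_upstream() {
  echo "upstream kube-apiserver {"
  for api_ip in "$@"; do
    echo "    server ${api_ip}:6443 max_fails=3 fail_timeout=30s;"
  done
  echo "}"
}
```

For example, `render_upstream 10.4.7.21 10.4.7.22` prints a block equivalent to the one in the configuration above.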

3. Start nginx

$ systemctl start nginx && systemctl enable nginx

6.3.2 Deploy keepalived

1. Install keepalived

$ yum install keepalived -y

2. Port check script

$ vi /etc/keepalived/check_port.sh

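#!/bin/bash

# keepalived port-monitoring script

# Usage:

# In the keepalived configuration file:

# vrrp_script check_port { # define a vrrp_script check

# script "/etc/keepalived/check_port.sh 6379" # port to check

# interval 2 # how often to run the check, in seconds

# }

CHK_PORT=$1

if [ -n "$CHK_PORT" ]; then

    # Count listening sockets whose line contains the port as a whole word,

    # so that checking 7443 does not match 17443

    PORT_PROCESS=$(ss -lnt | grep -cw "$CHK_PORT")

    if [ "$PORT_PROCESS" -eq 0 ]; then

        echo "Port $CHK_PORT Is Not Used,End."

        exit 1

    fi

else

    echo "Check Port Cant Be Empty!"

    exit 1

fi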
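A stricter variant of the port check compares the port field exactly instead of pattern-matching the whole line. The sketch below reads `ss -lnt` output from standard input so that it can be tried on captured output; port_listening is a hypothetical name, and it assumes the local address is the fourth column, as in the default `ss -lnt` layout.

```shell
#!/bin/sh
# port_listening PORT
# Reads `ss -lnt` output on stdin and succeeds if some socket listens on
# exactly PORT. The first line is treated as the header and skipped.
port_listening() {
  awk -v p="$1" '
    NR > 1 {
      n = split($4, parts, ":")      # the port is the text after the last colon
      if (parts[n] == p) found = 1
    }
    END { exit !found }
  '
}
```

Inside a check script it would be called as `ss -lnt | port_listening 7443 || exit 1`.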

Make the script executable

$ chmod +x /etc/keepalived/check_port.sh

3. keepalived master configuration file

$ vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.11

}

vrrp_script chk_nginx {

# Run the script to check that nginx is listening on port 7443

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

# IP address of this host

mcast_src_ip 10.4.7.11

nopreempt

# Authentication between the keepalived peers

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

# Virtual IP

virtual_ipaddress {

10.4.7.10

}

}

4. keepalived backup configuration file

$ vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.12

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 251

mcast_src_ip 10.4.7.12

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10

}

}
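The failover in these two files rests on simple arithmetic: a tracked script with a negative weight lowers the priority while the check fails, so the master drops from 100 to 80, which is below the backup's 90. A sketch of that rule follows; effective_priority is a hypothetical name, and the function also covers the positive-weight case, where keepalived raises the priority while the check succeeds.

```shell
#!/bin/sh
# effective_priority BASE WEIGHT STATE
# STATE is "ok" or "fail". Mirrors how a tracked vrrp_script weight
# adjusts the VRRP priority:
#   negative weight: applied while the check fails
#   positive weight: applied while the check succeeds
effective_priority() {
  base="$1"
  weight="$2"
  state="$3"
  if [ "$weight" -lt 0 ] && [ "$state" = "fail" ]; then
    echo $(( base + weight ))
  elif [ "$weight" -gt 0 ] && [ "$state" = "ok" ]; then
    echo $(( base + weight ))
  else
    echo "$base"
  fi
}

effective_priority 100 -20 fail   # prints 80, lower than the backup's 90
```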

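5. Start keepalived

$ systemctl start keepalived.service && systemctl enable keepalived.service

6.4 Deploy controller-manager

Both hdss7-21.host.com and hdss7-22.host.com run an api-server that listens on 127.0.0.1:8080, which is reachable only from the local machine. Because controller-manager is deployed on the same machines, it can reach the local api-server directly through the insecure port 8080, which is fast and needs no certificate authentication.

Cluster plan

| Host name | Role | IP |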

|---|---|---|

| hdss7-21.host.com | controller-manager | 10.4.7.21 |

| hdss7-22.host.com | controller-manager | 10.4.7.22 |

Note: this guide uses hdss7-21.host.com as the example; the other compute node is deployed in the same way.

1. Create the startup script

$ vim /opt/kubernetes/server/bin/kube-controller-manager.sh

#!/bin/sh

./kube-controller-manager \

--cluster-cidr 172.7.0.0/16 \

--leader-elect true \

--log-dir /data/logs/kubernetes/kube-controller-manager \

--master http://127.0.0.1:8080 \

--service-account-private-key-file ./cert/ca-key.pem \

--service-cluster-ip-range 192.168.0.0/16 \

--root-ca-file ./cert/ca.pem \

--v 2

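Parameter reference

| Parameter | Description |

|---|---|

| --cluster-cidr | CIDR range for pods in the cluster |

| --master | Address of the Kubernetes API server; overrides any value in kubeconfig |

| --service-cluster-ip-range | CIDR range for services in the cluster; requires --allocate-node-cidrs to be true |

| --leader-elect | Set to true when there are multiple masters, so that a leader is elected and high availability is preserved |

| --leader-elect-lease-duration duration | Effective only when leader-elect is true. How long non-leader candidates wait before trying to acquire leadership (default 15s) |

| --leader-elect-renew-deadline duration | Effective only when leader-elect is true. The interval within which the acting leader must renew its leadership before it stops leading; must be less than or equal to leader-elect-lease-duration (default 10s) |

| --leader-elect-retry-period duration | Effective only when leader-elect is true. How long to wait between attempts to acquire or renew leadership (default 2s) |

2. Set file permissions and create the log directory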

$ chmod +x /opt/kubernetes/server/bin/kube-controller-manager.sh

$ mkdir -p /data/logs/kubernetes/kube-controller-manager

3. Create the controller-manager startup configuration

$ vi /etc/supervisord.d/kube-controller-manager.ini

[program:kube-controller-manager-7-21]

command=/opt/kubernetes/server/bin/kube-controller-manager.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-controller-manager/controller.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

killasgroup=true

stopasgroup=true

4. Start and check

$ supervisorctl update

$ supervisorctl status

etcd-server-7-21 RUNNING pid 4148, uptime 2:07:47

kube-apiserver-7-21 RUNNING pid 4544, uptime 1:02:36

kube-controller-manager-7-21 RUNNING pid 4690, uptime 0:00:32
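When several programs are managed by supervisor, it helps to check the whole status listing at once. The sketch below reads `supervisorctl status` output from standard input and fails if any program is in a state other than RUNNING; all_running is a hypothetical name, and the state is assumed to be the second column, as in the output above.

```shell
#!/bin/sh
# all_running
# Reads `supervisorctl status` output on stdin. Succeeds only if the
# second column of every line is RUNNING.
all_running() {
  awk '$2 != "RUNNING" { bad = 1 } END { exit (bad + 0) }'
}
```

It could be used as `supervisorctl status | all_running && echo "all services are up"`.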

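6.5 Deploy kube-scheduler

Both hdss7-21.host.com and hdss7-22.host.com run an api-server that exposes the insecure port on 127.0.0.1:8080, which is reachable only from the local machine. Because kube-scheduler is deployed on the same hosts, it can reach the local api-server directly through port 8080, which is fast and needs no certificate authentication.

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | kube-scheduler | 10.4.7.21 |
| hdss7-22.host.com | kube-scheduler | 10.4.7.22 |

Note: this guide uses hdss7-21.host.com as the example; the other compute node is deployed in the same way.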

1. Create the startup script

$ vim /opt/kubernetes/server/bin/kube-scheduler.sh

#!/bin/sh
./kube-scheduler \
  --leader-elect \
  --log-dir /data/logs/kubernetes/kube-scheduler \
  --master http://127.0.0.1:8080 \
  --v 2

master: address of the api-server to connect to
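Before starting the scheduler it can be worth confirming that the local api-server answers on the insecure port. A minimal sketch, assuming `curl` is installed on the node; `apiserver_healthy` is a hypothetical helper that queries the api-server's `/healthz` endpoint, which replies with the text `ok` when healthy.

```shell
#!/bin/sh
# apiserver_healthy [URL]: succeed if the api-server health endpoint answers "ok".
# The default URL is the local insecure port used by --master above.
apiserver_healthy() {
    url=${1:-http://127.0.0.1:8080/healthz}
    [ "$(curl -s --max-time 5 "$url")" = "ok" ]
}
```

For example, `apiserver_healthy || echo "local api-server is not answering on 8080"`.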

2. Make the script executable and create the log directory

$ chmod +x /opt/kubernetes/server/bin/kube-scheduler.sh

$ mkdir -p /data/logs/kubernetes/kube-scheduler

3. Create the supervisor configuration for kube-scheduler

$ vi /etc/supervisord.d/kube-scheduler.ini

[program:kube-scheduler-7-21]

command=/opt/kubernetes/server/bin/kube-scheduler.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-scheduler/scheduler.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

killasgroup=true

stopasgroup=true
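When the same file is created on the other compute node, the program name in the section header presumably changes to match that host. The helper below is a sketch of the naming pattern seen in this guide (service name plus the last two octets of the node IP); the rule is inferred from the examples, not required by supervisor, and `program_name` is a hypothetical name.

```shell
#!/bin/sh
# program_name SERVICE IP: build a supervisor program name such as
# kube-scheduler-7-21 from the last two octets of the node IP.
# The naming rule is inferred from this guide's examples (an assumption).
program_name() {
    service=$1 ip=$2
    echo "$ip" | awk -F. -v svc="$service" 'NF == 4 { print svc "-" $3 "-" $4 }'
}
```

For instance, `program_name kube-scheduler 10.4.7.22` yields the name to put in `[program:...]` on hdss7-22.host.com.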

4. Start the service and check its status

$ supervisorctl update

$ supervisorctl status

etcd-server-7-21 RUNNING pid 4148, uptime 2:11:12

kube-apiserver-7-21 RUNNING pid 4544, uptime 1:06:01

kube-controller-manager-7-21 RUNNING pid 4690, uptime 0:03:57

kube-scheduler-7-21 RUNNING pid 4727, uptime 0:00:32

5. Check cluster health

$ ln -s /opt/kubernetes/server/bin/kubectl /usr/bin/kubectl

$ kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}
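To turn the health listing into a pass/fail check, the output can be filtered for any component that is not Healthy. A minimal sketch, assuming the column layout shown above with a header line; `unhealthy_components` is a hypothetical helper.

```shell
#!/bin/sh
# unhealthy_components: read `kubectl get cs` output on stdin and print the
# name of every component whose STATUS column is not "Healthy".
# The first line is skipped because it is the column header.
unhealthy_components() {
    awk 'NR > 1 && NF && $2 != "Healthy" { print $1 }'
}
```

`kubectl get cs | unhealthy_components` prints nothing when every component is healthy, so an empty result can be treated as success.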

7 Deploy Node services

7.1 Deploy kubelet

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | kubelet | 10.4.7.21 |
| hdss7-22.host.com | kubelet | 10.4.7.22 |

Note: this guide uses hdss7-21.host.com as the example; the other compute node is deployed in the same way.

7.1.1 Issue the kubelet certificate

On the operations host hdss7-200.host.com:

1. Create the JSON configuration file for the certificate signing request (CSR)

$ vim /opt/certs/kubelet-csr.json

{
    "CN": "k8s-kubelet",
    "hosts": [
        "127.0.0.1",
        "10.4.7.10",
        "10.4.7.21",
        "10.4.7.22",
        "10.4.7.23",
        "10.4.7.24",
        "10.4.7.25",
        "10.4.7.26",
        "10.4.7.27",
        "10.4.7.28"
    ],
    "key": {
        "algo": "rsa",
        "size": 2048
    },
    "names": [
        {
            "C": "CN",
            "ST": "beijing",
            "L": "beijing",
            "O": "od",
            "OU": "ops"
        }
    ]
}
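The hosts list covers more addresses than the two nodes deployed in this guide, presumably to leave room for nodes added later. Before adding a node, it may help to confirm that its IP is already in the CSR file, since otherwise the certificate would have to be issued again. A minimal sketch; `csr_has_host` is a hypothetical helper that does a plain text match rather than real JSON parsing.

```shell
#!/bin/sh
# csr_has_host FILE IP: succeed if IP appears as a quoted string in the CSR JSON.
# Plain text match only: it assumes each host is written as "a.b.c.d".
csr_has_host() {
    grep -qF "\"$2\"" "$1"
}
```

For example, `csr_has_host /opt/certs/kubelet-csr.json 10.4.7.29 || echo "10.4.7.29 is not covered"`.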

2. Generate the certificate and private key

$ cd /opt/certs

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server kubelet-csr.json | cfssl-json -bare kubelet

2020/01/10 20:15:39 [INFO] generate received request

2020/01/10 20:15:39 [INFO] received CSR

2020/01/10 20:15:39 [INFO] generating key: rsa-2048

2020/01/10 20:15:40 [INFO] encoded CSR

2020/01/10 20:15:40 [INFO] signed certificate with serial number 526251135664766815056179206511844993208257685250

2020/01/10 20:15:40 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

3. Check the certificate and private key

$ ll kubelet*

-rw-r--r-- 1 root root 452 Jan 10 20:15 kubelet-csr.json

-rw------- 1 root root 1675 Jan 10 20:15 kubelet-key.pem

-rw-r--r-- 1 root root 1115 Jan 10 20:15 kubelet.csr

-rw-r--r-- 1 root root 1468 Jan 10 20:15 kubelet.pem

7.1.2 kubelet configuration

On hdss7-21.host.com:

1. Copy the certificate and private key to each compute node (private key files must have mode 600)

$ ll /opt/kubernetes/server/bin/cert/

total 32

-rw------- 1 root root 1679 Jan 10 16:32 apiserver-key.pem

-rw-r--r-- 1 root root 1598 Jan 10 16:32 apiserver.pem

-rw------- 1 root root 1675 Jan 10 16:32 ca-key.pem

-rw-r--r-- 1 root root 1346 Jan 10 16:32 ca.pem

-rw------- 1 root root 1679 Jan 10 16:32 client-key.pem

-rw-r--r-- 1 root root 1363 Jan 10 16:32 client.pem

-rw------- 1 root root 1675 Jan 10 20:20 kubelet-key.pem

-rw-r--r-- 1 root root 1468 Jan 10 20:20 kubelet.pem
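The listing shows the result but not the copy itself. One possible way to do it, assuming root SSH access from the node to hdss7-200.host.com (an assumption; the guide does not say how the files were transferred), is `scp` followed by a permissions pass. `secure_keys` is a hypothetical helper.

```shell
#!/bin/sh
# secure_keys DIR: set every *-key.pem in DIR to mode 600 and every other
# .pem file to mode 644, matching the listing above.
secure_keys() {
    dir=$1
    for f in "$dir"/*.pem; do
        [ -e "$f" ] || continue      # skip when the glob matched nothing
        case $f in
            *-key.pem) chmod 600 "$f" ;;
            *)         chmod 644 "$f" ;;
        esac
    done
}

# Example, run on hdss7-21.host.com (SSH access is assumed):
#   scp hdss7-200.host.com:/opt/certs/kubelet.pem \
#       hdss7-200.host.com:/opt/certs/kubelet-key.pem \
#       /opt/kubernetes/server/bin/cert/
#   secure_keys /opt/kubernetes/server/bin/cert
```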

2. Create the kubelet kubeconfig file

The kubelet reaches the api-server over HTTPS through the VIP in front of the nginx reverse proxy.

# Change to the conf directory

$ cd /opt/kubernetes/server/bin/conf/

# Set the root CA certificate and the api-server VIP

$ kubectl config set-cluster myk8s \
  --certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \
  --embed-certs=true \
  --server=https://10.4.7.10:7443 \
  --kubeconfig=kubelet.kubeconfig

# Authenticate to the api-server with the client certificate and key

$ kubectl config set-credentials k8s-node \
  --client-certificate=/opt/kubernetes/server/bin/cert/client.pem \
  --client-key=/opt/kubernetes/server/bin/cert/client-key.pem \
  --embed-certs=true \
  --kubeconfig=kubelet.kubeconfig

# Access the api-server as user k8s-node (this user still has to be authorized)

$ kubectl config set-context myk8s-context \
  --cluster=myk8s \
  --user=k8s-node \
  --kubeconfig=kubelet.kubeconfig

$ kubectl config use-context myk8s-context --kubeconfig=kubelet.kubeconfig

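About the kubeconfig file:

- It is the configuration file of a k8s user

- It contains certificate data

- When a certificate expires or is replaced, this file must be regenerated as well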
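After the four `kubectl config` commands, a quick read-back can confirm that the file points at the VIP rather than at a single api-server. A minimal sketch that reads the generated YAML directly; `kubeconfig_server` is a hypothetical helper, and `kubectl config view --kubeconfig=kubelet.kubeconfig` shows the same information in full.

```shell
#!/bin/sh
# kubeconfig_server FILE: print the api-server URL(s) recorded in a kubeconfig.
# It looks for lines whose first field is "server:" and prints the second field.
kubeconfig_server() {
    awk '$1 == "server:" { print $2 }' "$1"
}
```

For the file created above, `kubeconfig_server kubelet.kubeconfig` should report the VIP address passed to `--server`.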

3. Create the authorization manifest k8s-node.yaml

This only has to be created once. It grants the k8s-node user the permissions of a k8s node.

$ vim /opt/kubernetes/server/bin/conf/k8s-node.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: k8s-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: k8s-node

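- User accounts are meant for people, while service accounts are meant for processes in Pods that call the Kubernetes API;

- User accounts span namespaces, while a service account is confined to its own namespace

4. Create the resource with kubectl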

$ kubectl create -f /opt/kubernetes/server/bin/conf/k8s-node.yaml
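Since the binding only needs to exist once per cluster, running `kubectl create` again on the second node would fail with an "already exists" error. The sketch below makes the step safe to repeat; `ensure_node_binding` is a hypothetical wrapper around the same two kubectl commands.

```shell
#!/bin/sh
# ensure_node_binding [MANIFEST]: create the k8s-node ClusterRoleBinding
# only if it does not exist yet.
ensure_node_binding() {
    manifest=${1:-/opt/kubernetes/server/bin/conf/k8s-node.yaml}
    if kubectl get clusterrolebinding k8s-node >/dev/null 2>&1; then
        echo "clusterrolebinding k8s-node already exists"
    else
        kubectl create -f "$manifest"
    fi
}
```

The result can be inspected with `kubectl get clusterrolebinding k8s-node -o yaml`.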

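7.1.3 Prepare the pause base image

The pause image provides the base container that k8s needs in every pod in order to run application containers as a pod.

On the operations host hdss7-200.host.com:

1. Pull the image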

$ docker pull kubernetes/pause

2. Push it to the private registry (Harbor)

$ docker tag f9d5de079539 harbor.od.com/public/pause:latest

$ docker push harbor.od.com/public/pause:latest
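The image ID used in the tag command depends on the image that was actually pulled, so it may differ on another machine. Tagging by name avoids looking the ID up. A minimal sketch; `harbor_ref` is a hypothetical helper, and the default prefix is the registry project used in this guide.

```shell
#!/bin/sh
# harbor_ref IMAGE [REGISTRY/PROJECT]: map an upstream image reference to the
# private registry, keeping only the final name:tag component.
harbor_ref() {
    image=$1 prefix=${2:-harbor.od.com/public}
    echo "$prefix/${image##*/}"
}

# Example:
#   src=kubernetes/pause:latest
#   docker tag "$src" "$(harbor_ref "$src")" && docker push "$(harbor_ref "$src")"
```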

7.1.4 Create the kubelet startup script

On hdss7-21.host.com:

1. Startup script

$ vim /opt/kubernetes/server/bin/kubelet.sh

#!/bin/sh
./kubelet \
  --anonymous-auth=false \
  --cgroup-driver systemd \
  --cluster-dns 192.168.0.2 \
  --cluster-domain cluster.local \
  --runtime-cgroups=/systemd/system.slice \
  --kubelet-cgroups=/systemd/system.slice \
  --fail-swap-on="false" \
  --client-ca-file ./cert/ca.pem \
  --tls-cert-file ./cert/kubelet.pem \
  --tls-private-key-file ./cert/kubelet-key.pem \
  --hostname-override hdss7-21.host.com \
  --image-gc-high-threshold 20 \
  --image-gc-low-threshold 10 \
  --kubeconfig ./conf/kubelet.kubeconfig \
  --log-dir /data/logs/kubernetes/kube-kubelet \
  --pod-infra-container-image harbor.od.com/public/pause:latest \
  --root-dir /data/kubelet

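cluster-dns: address of the cluster-internal DNS server

hostname-override: hostname of the current machine (change it on each node)

pod-infra-container-image: where to pull the pause image from

kubeconfig: the kubeconfig file created above

Parameter reference

| Parameter | Description |
|---|---|
| --anonymous-auth | Allows anonymous requests to the kubelet server. Requests that are not rejected by another authentication method are treated as anonymous. Anonymous requests have the username system:anonymous and the group system:unauthenticated (default true) |
| --cgroup-driver | Either cgroupfs or systemd (default cgroupfs); must match the cgroup driver used by Docker |
| --cluster-dns | List of DNS server IPs, separated by commas |
| --cluster-domain | Domain of the cluster; the kubelet configures all containers to search this domain in addition to the host's search domains |
| --fail-swap-on | When true, the kubelet fails to start if swap is enabled on the node (default true) |
| --hostname-override | Node name used in the cluster |
| --image-gc-high-threshold | Disk usage percentage above which image garbage collection always runs (default 85) |
| --image-gc-low-threshold | Disk usage percentage below which image garbage collection never runs; the lowest usage that garbage collection frees down to (default 80) |
| --kubeconfig | Specifies how to connect to the API server |
| --pod-infra-container-image | pause image used by the container that holds each pod's network/ipc namespaces |
| --root-dir | Working directory of the kubelet |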
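Because `--cgroup-driver` has to agree with Docker, it can be useful to compare the two before starting the kubelet. A minimal sketch, assuming Docker is the container runtime on the node; `cgroup_driver_matches` is a hypothetical helper built on `docker info --format '{{.CgroupDriver}}'`.

```shell
#!/bin/sh
# cgroup_driver_matches [EXPECTED]: succeed if Docker reports the same cgroup
# driver that the kubelet is started with (systemd in the script above).
cgroup_driver_matches() {
    expected=${1:-systemd}
    actual=$(docker info --format '{{.CgroupDriver}}' 2>/dev/null)
    if [ "$actual" = "$expected" ]; then
        echo "cgroup driver: $actual"
    else
        echo "mismatch: docker=$actual kubelet=$expected" >&2
        return 1
    fi
}
```

If the check fails, either the Docker daemon configuration or the `--cgroup-driver` flag needs to change so that both use the same driver.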

Create the directories

$ mkdir -p /data/logs/kubernetes/kube-kubelet /data/kubelet

Make the script executable

$ chmod +x /opt/kubernetes/server/bin/kubelet.sh

2. Create the supervisor configuration for kubelet