[k8s Study Notes] Deploying a Kubernetes v1.18.5 Cluster with kubeadm

阿新 • Published: 2020-07-04

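## Overview

These are my notes on building a Kubernetes v1.18.5 cluster. Three virtual machines running CentOS serve as test hosts. kubeadm, kubelet, and kubectl are all installed with yum, and flannel is the network add-on.

Mistakes are hard to avoid in a write-up like this. If you spot one or have a better approach, please leave a comment.

If you repost this article, please credit the original source: [https://www.cnblogs.com/hellxz/p/use-kubeadm-init-kubernetes-cluster.html](https://www.cnblogs.com/hellxz/p/use-kubeadm-init-kubernetes-cluster.html)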

## Environment Preparation

Unless otherwise noted, all commands in this deployment are run as root.

### Hardware

| ip | hostname | mem | disk | role |
| -------------- | ----------- | ---- | ----- | ---------------------- |
| 192.168.87.145 | kube-master | 4 GB | 20 GB | k8s control-plane node |
| 192.168.87.146 | kube-node1 | 4 GB | 20 GB | k8s worker node 1 |
| 192.168.87.147 | kube-node2 | 4 GB | 20 GB | k8s worker node 2 |

### Software

| software | version |
| ---------- | ------------------------------------ |
| CentOS | CentOS Linux release 7.7.1908 (Core) |
| Kubernetes | v1.18.5 |
| Docker | 19.03.12 |

### Verifying the Environment

| purpose | commands |
| -------------------- | ------------------------------------------------------------ |
| All cluster nodes can reach each other | `ping -c 3 <ip>` |
| MAC addresses are unique | `ip link` or `ifconfig -a` |
| Hostnames are unique within the cluster | check with `hostnamectl status`, change with `hostnamectl set-hostname <hostname>` |
| System product UUIDs are unique | `dmidecode -s system-uuid` or `sudo cat /sys/class/dmi/id/product_uuid` |

> Reference commands for changing a MAC address:
>
> ```bash
> ifconfig eth0 down
> ifconfig eth0 hw ether 00:0C:18:EF:FF:ED
> ifconfig eth0 up
> ```
>
> If `product_uuid` is not unique, consider reinstalling CentOS.
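Comparing these values by eye across three machines is easy to get wrong. Below is a minimal sketch that flags duplicates, assuming you have collected one `<hostname> <value>` line per node. The function name `find_duplicate_values` is hypothetical, and the SSH loop in the comment assumes root can already log in to each node.

```bash
# Print every value (second column) that appears on more than one node.
# Input: lines of "<hostname> <value>" on stdin. No output means all unique.
find_duplicate_values() {
  awk '{print $2}' | sort | uniq -d
}

# Possible usage for product_uuid (assumes SSH access to each node):
# for h in kube-master kube-node1 kube-node2; do
#   echo "$h $(ssh root@$h cat /sys/class/dmi/id/product_uuid)"
# done | find_duplicate_values
```

The same function works for MAC addresses or hostnames, as long as each input line carries the node name first and the value second.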

### Confirming the Required Ports Are Open

Ports to check on the kube-master node:

| Protocol | Direction | Port Range | Purpose |
| -------- | --------- | ---------- | ----------------------- |
| TCP | Inbound | 6443* | kube-api-server |
| TCP | Inbound | 2379-2380 | etcd API |
| TCP | Inbound | 10250 | Kubelet API |
| TCP | Inbound | 10251 | kube-scheduler |
| TCP | Inbound | 10252 | kube-controller-manager |

Ports to check on the kube-node* nodes:

| Protocol | Direction | Port Range | Purpose |
| -------- | --------- | ----------- | ----------------- |
| TCP | Inbound | 10250 | Kubelet API |
| TCP | Inbound | 30000-32767 | NodePort Services |

> If you are not confident in your host firewall configuration, you can turn the firewall off:
>
> ```bash
> systemctl disable --now firewalld
> ```
>
> Or flush the iptables rules (use with caution):
>
> ```bash
> iptables -F
> ```
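If you would rather keep firewalld running, the ports from the two tables could be opened one by one instead. The sketch below only prints the `firewall-cmd` commands so you can review them before running anything. The function name `print_firewall_cmds` is hypothetical, and the port lists are copied from the tables above, nothing more.

```bash
# Print the firewall-cmd invocations that would open the ports listed in the
# tables above for the given role ("master" or "node"). Nothing is executed.
print_firewall_cmds() {
  local ports p
  case "$1" in
    master) ports="6443 2379-2380 10250 10251 10252" ;;
    node)   ports="10250 30000-32767" ;;
    *) echo "usage: print_firewall_cmds master|node" >&2; return 1 ;;
  esac
  for p in $ports; do
    echo "firewall-cmd --permanent --add-port=${p}/tcp"
  done
  # Permanent rules only take effect after a reload.
  echo "firewall-cmd --reload"
}

# Review first, then pipe to a shell if the output looks right:
# print_firewall_cmds master
# print_firewall_cmds master | sh
```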

### Configuring Mutual Trust Between Hosts

Configure the hosts mapping on **every node**:

```bash

cat >> /etc/hosts < /etc/sysctl.d/k8s.conf < /etc/docker/daemon.json <

# Apply the docker group membership to the current session immediately

newgrp docker

```
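For the hosts mapping step, here is a minimal sketch that assumes the three IP and hostname pairs from the hardware table. The helper name `add_host_entry` and the duplicate check are my own additions, so that running it twice does not append the same line again.

```bash
# Append "<ip> <hostname>" to a hosts file unless that hostname is already mapped.
# The third argument defaults to /etc/hosts; pass another path to try it safely.
add_host_entry() {
  local ip=$1 name=$2 file=${3:-/etc/hosts}
  if grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
    return 0
  fi
  echo "$ip $name" >> "$file"
}

# Usage with the addresses from the hardware table:
# add_host_entry 192.168.87.145 kube-master
# add_host_entry 192.168.87.146 kube-node1
# add_host_entry 192.168.87.147 kube-node2
```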

## Deploying the Kubernetes Cluster

Unless otherwise noted, run the following steps on every node.

### Adding the Kubernetes Repository

```bash

cat > /etc/yum.repos.d/kubernetes.repo <

KUBE_VERSION=v1.18.5

PAUSE_VERSION=3.2

CORE_DNS_VERSION=1.6.7

ETCD_VERSION=3.4.3-0

# pull kubernetes images from hub.docker.com

docker pull kubeimage/kube-proxy-amd64:$KUBE_VERSION

docker pull kubeimage/kube-controller-manager-amd64:$KUBE_VERSION

docker pull kubeimage/kube-apiserver-amd64:$KUBE_VERSION

docker pull kubeimage/kube-scheduler-amd64:$KUBE_VERSION

# pull aliyuncs mirror docker images

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:$PAUSE_VERSION

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:$CORE_DNS_VERSION

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:$ETCD_VERSION

# retag to k8s.gcr.io prefix

docker tag kubeimage/kube-proxy-amd64:$KUBE_VERSION k8s.gcr.io/kube-proxy:$KUBE_VERSION

docker tag kubeimage/kube-controller-manager-amd64:$KUBE_VERSION k8s.gcr.io/kube-controller-manager:$KUBE_VERSION

docker tag kubeimage/kube-apiserver-amd64:$KUBE_VERSION k8s.gcr.io/kube-apiserver:$KUBE_VERSION

docker tag kubeimage/kube-scheduler-amd64:$KUBE_VERSION k8s.gcr.io/kube-scheduler:$KUBE_VERSION

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:$PAUSE_VERSION k8s.gcr.io/pause:$PAUSE_VERSION

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:$CORE_DNS_VERSION k8s.gcr.io/coredns:$CORE_DNS_VERSION

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:$ETCD_VERSION k8s.gcr.io/etcd:$ETCD_VERSION

# remove the mirror tags; the images themselves remain under the k8s.gcr.io tags

docker rmi kubeimage/kube-proxy-amd64:$KUBE_VERSION

docker rmi kubeimage/kube-controller-manager-amd64:$KUBE_VERSION

docker rmi kubeimage/kube-apiserver-amd64:$KUBE_VERSION

docker rmi kubeimage/kube-scheduler-amd64:$KUBE_VERSION

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/pause:$PAUSE_VERSION

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:$CORE_DNS_VERSION

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:$ETCD_VERSION

```

Make the script executable, then run it to pull the images:

```bash
chmod +x get-k8s-images.sh
./get-k8s-images.sh
```

Once the pull finishes, run `docker images` to list the images.
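The script repeats the same pull, tag, and rmi sequence for each of the seven images. The loop below is one possible rewrite of that sequence, not the author's script. The function names and the `DOCKER` override variable are hypothetical; the image names and versions are the ones used in the script.

```bash
# Pull one image from a mirror, retag it under k8s.gcr.io, then drop the mirror tag.
# DOCKER can be overridden (for example DOCKER=echo) to preview the commands.
mirror_image() {
  local src=$1 dst=$2
  ${DOCKER:-docker} pull "$src" &&
  ${DOCKER:-docker} tag "$src" "$dst" &&
  ${DOCKER:-docker} rmi "$src"
}

fetch_k8s_images() {
  local kube_version=v1.18.5
  local aliyun=registry.cn-hangzhou.aliyuncs.com/google_containers
  local name
  # Control-plane images come from the kubeimage repositories on Docker Hub.
  for name in kube-proxy kube-controller-manager kube-apiserver kube-scheduler; do
    mirror_image "kubeimage/${name}-amd64:${kube_version}" \
                 "k8s.gcr.io/${name}:${kube_version}" || return 1
  done
  # pause, coredns and etcd come from the Aliyun mirror, each with its own version.
  for name in pause:3.2 coredns:1.6.7 etcd:3.4.3-0; do
    mirror_image "${aliyun}/${name}" "k8s.gcr.io/${name}" || return 1
  done
}

# Preview without touching Docker:  DOCKER=echo; fetch_k8s_images
# Real run:                         fetch_k8s_images
```

Stopping at the first failed pull keeps a partial download from going unnoticed, which the flat script does not do.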

### Initializing kube-master

Run this step on the kube-master node only.

**Change the kubelet's default cgroup driver**

```bash

cat > /var/lib/kubelet/config.yaml < init.default.yaml

```

**Check that the environment is ready** (warnings are expected)

```bash

kubeadm init phase preflight [--config kubeadm-init.yaml]

```

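> The warnings in the output are expected. Kubernetes information cannot be validated because the site it needs is blocked from my network, and the last warning appears because I had not turned off the local firewall; it reminds me to confirm that the required ports are reachable.

**Initialize the master.** `10.244.0.0/16` is the pod network range that flannel's manifest uses by default; set this value to whatever your network add-on requires.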

```bash

kubeadm init --pod-network-cidr=10.244.0.0/16 --kubernetes-version=v1.18.5 [--config kubeadm-init.yaml]

```
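The optional `--config kubeadm-init.yaml` form needs a configuration file. Below is a minimal sketch of one that carries the same two settings as the flags, assuming the `kubeadm.k8s.io/v1beta2` config API that kubeadm 1.18 uses. The helper name `write_kubeadm_config` is hypothetical. As far as I know, kubeadm refuses to combine `--config` with `--pod-network-cidr` or `--kubernetes-version`, so choose one form or the other.

```bash
# Write a minimal kubeadm configuration equivalent to the two flags above.
# The output path defaults to kubeadm-init.yaml in the current directory.
write_kubeadm_config() {
  local out=${1:-kubeadm-init.yaml}
  cat > "$out" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.18.5
networking:
  podSubnet: 10.244.0.0/16
EOF
}

# Usage:
# write_kubeadm_config && kubeadm init --config kubeadm-init.yaml
```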

The output looks like this:

```bash

[root@kube-master k8s]# kubeadm init --pod-network-cidr=10.244.0.0/16 --kubernetes-version=v1.18.5

W0703 18:49:19.076654 16469 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.18.5

[preflight] Running pre-flight checks

[WARNING Firewalld]: firewalld is active, please ensure ports [6443 10250] are open or your cluster may not function correctly

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kube-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.87.145]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [kube-master localhost] and IPs [192.168.87.145 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [kube-master localhost] and IPs [192.168.87.145 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W0703 18:49:23.039913 16469 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W0703 18:49:23.040907 16469 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 21.505101 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node kube-master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node kube-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 2b7cfv.6bhz4z3a3vzyg498

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.87.145:6443 --token 2b7cfv.6bhz4z3a3vzyg498 \

--discovery-token-ca-cert-hash sha256:79bd63d82634f9953cc9d6b5a923fa87c973f0c3fd9ed7270167052dd834c026

```

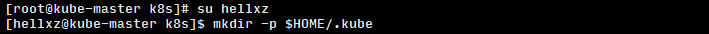

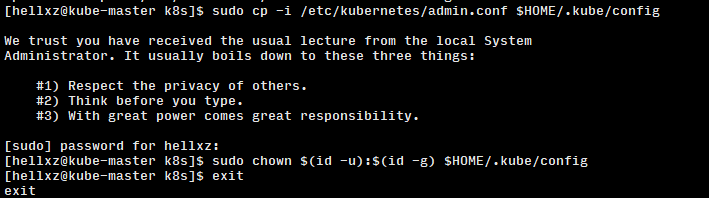

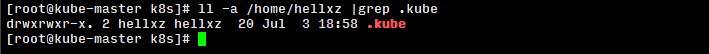

**Grant kubectl access to the regular user who works with the cluster**

```bash

su hellxz

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

exit

```

**Configure authentication on the master**

```bash

echo 'export KUBECONFIG=/etc/kubernetes/admin.conf' >> /etc/profile

. /etc/profile

```

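> Without this setting, kubectl prints the following:
>
> ```bash
> The connection to the server localhost:8080 was refused - did you specify the right host or port?
> ```
>
> At this point the master node is initialized, but the network add-on is not installed yet, so it cannot communicate with the other nodes.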

**Install a network add-on, using flannel as the example**

```bash
cd ~/k8s
yum install -y wget
# download the latest flannel manifest
wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
kubectl apply -f kube-flannel.yml
```

**Check the status of the kube-master node**

```bash
kubectl get nodes
```

> If STATUS shows `NotReady`, run `kubectl describe node kube-master` for the details. Slower servers take longer to reach the Ready state.
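Rather than rerunning `kubectl get nodes` by hand on a slow machine, you could poll until the node reports Ready. This is a minimal sketch; `wait_until` and `node_is_ready` are hypothetical helper names, and the 30 attempts at 10-second intervals are arbitrary values chosen for illustration.

```bash
# Run a command repeatedly until it succeeds or the attempts run out.
# usage: wait_until <attempts> <delay-seconds> <command> [args...]
wait_until() {
  local attempts=$1 delay=$2 i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    "$@" && return 0
    # Sleep only between attempts, not after the last one.
    [ "$i" -lt "$attempts" ] && sleep "$delay"
    i=$((i + 1))
  done
  return 1
}

# True when the STATUS column of `kubectl get node <name>` is exactly "Ready".
node_is_ready() {
  [ "$(kubectl get node "$1" --no-headers 2>/dev/null | awk '{print $2}')" = "Ready" ]
}

# Usage: poll every 10 seconds for up to 5 minutes.
# wait_until 30 10 node_is_ready kube-master || kubectl describe node kube-master
```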

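**Export the images for the other nodes**

On kube-master, save the images to an archive so they can be copied to the other nodes later. A private image registry would of course be the better option.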

```bash

docker save k8s.gcr.io/kube-proxy:v1.18.5 \
  k8s.gcr.io/kube-apiserver:v1.18.5 \
  k8s.gcr.io/kube-controller-manager:v1.18.5 \
  k8s.gcr.io/kube-scheduler:v1.18.5 \
  k8s.gcr.io/pause:3.2 \
  k8s.gcr.io/coredns:1.6.7 \
  k8s.gcr.io/etcd:3.4.3-0 > k8s-imagesV1.18.5.tar

```

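### Initializing the kube-node* Nodes and Joining the Cluster

**Copy the images to the node.** kube-node1 is the example here; the steps for node2 are the same and are not repeated.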

```bash

# run these commands on the kube-node* node

mkdir ~/k8s

scp root@kube-master:/root/k8s/k8s-imagesV1.18.5.tar ~/k8s

```
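Copying the archive does not by itself make the images available to Docker on the node; the archive also has to be loaded. Below is a minimal sketch using `docker load -i`, assuming the archive path from the `scp` command. The function name `load_images` and the `DOCKER` override variable are hypothetical.

```bash
# Load a `docker save` archive into the local Docker image store.
# DOCKER can be overridden (for example DOCKER=echo) to preview the command.
load_images() {
  local archive=$1
  if [ ! -f "$archive" ]; then
    echo "archive not found: $archive" >&2
    return 1
  fi
  ${DOCKER:-docker} load -i "$archive"
}

# Usage on kube-node1:
# load_images ~/k8s/k8s-imagesV1.18.5.tar && docker images
```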

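**Retrieve the join command** (skip this if you still have it)

The join command was printed at the end of the kube-master initialization. What if you did not write it down?

On kube-master, create a new token and print the matching join command in one step: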

```bash

kubeadm token create --print-join-command

```
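If you still have a valid token and only lost the hash, the `--discovery-token-ca-cert-hash` value can be recomputed from the cluster CA certificate. The sketch below follows the openssl pipeline given in the kubeadm documentation and assumes an RSA CA key, which is what kubeadm generates by default. The function name `ca_cert_hash` is hypothetical.

```bash
# Print the SHA-256 hash of a CA certificate's public key, the value that
# `kubeadm join` expects after "sha256:" in --discovery-token-ca-cert-hash.
# The certificate path defaults to the kubeadm location on the master.
ca_cert_hash() {
  local cert=${1:-/etc/kubernetes/pki/ca.crt}
  openssl x509 -pubkey -noout -in "$cert" |
    openssl rsa -pubin -outform der 2>/dev/null |
    openssl dgst -sha256 -hex |
    sed 's/^.* //'
}

# Usage on kube-master:
# echo "sha256:$(ca_cert_hash)"
```

Existing tokens can be listed with `kubeadm token list`; an expired token still requires `kubeadm token create`.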

**Run the join command on the kube-node* node**

```bash

kubeadm join 192.168.87.145:6443 --token jdyzyq.icwlpkm36kgs6nqh --discovery-token-ca-cert-hash sha256:24f9b05fa10307ef6fff4132e0ec3c8b54917d4ff440b36108908aca588d8be7

```

### Checking Cluster Node Status

```bash

kubectl get nodes

```
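To see at a glance which nodes still need attention, the output can be filtered down to the ones that are not Ready. This is a minimal sketch that reads `kubectl get nodes --no-headers` output; the function name `not_ready_nodes` is hypothetical.

```bash
# Read `kubectl get nodes --no-headers` output on stdin and print the name of
# every node whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" {print $1}'
}

# Usage:
# kubectl get nodes --no-headers | not_ready_nodes
```

Because the comparison is exact, a cordoned node whose status reads `Ready,SchedulingDisabled` is also listed.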

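**References**

> - 《Kubernetes權威指南》 (The Definitive Guide to Kubernetes), 4th edition
> - Official documentation: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

That concludes this article. Thank you for reading. If it helped you, feel free to recommend it, and if you have questions, please leave a comment below.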