Kafka Installation and Getting Started

阿新 • Published: 2017-08-12


1. Installation

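Download the latest Kafka release from Apache Kafka (kafka_2.11-0.11.0.0 in the commands that follow), then upload it to your Linux machines. I have three machines here: 192.168.127.129, 192.168.127.130, and 192.168.127.131.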

Go to the upload directory and extract the archive into /usr/local:

tar -zxvf kafka_2.11-0.11.0.0.tgz -C /usr/local/

Change to the /usr/local directory:

cd /usr/local

Then rename the directory (this step is optional):

mv kafka_2.11-0.11.0.0/ kafka

Enter the kafka directory and edit the configuration file:

cd kafka/

vi config/server.properties

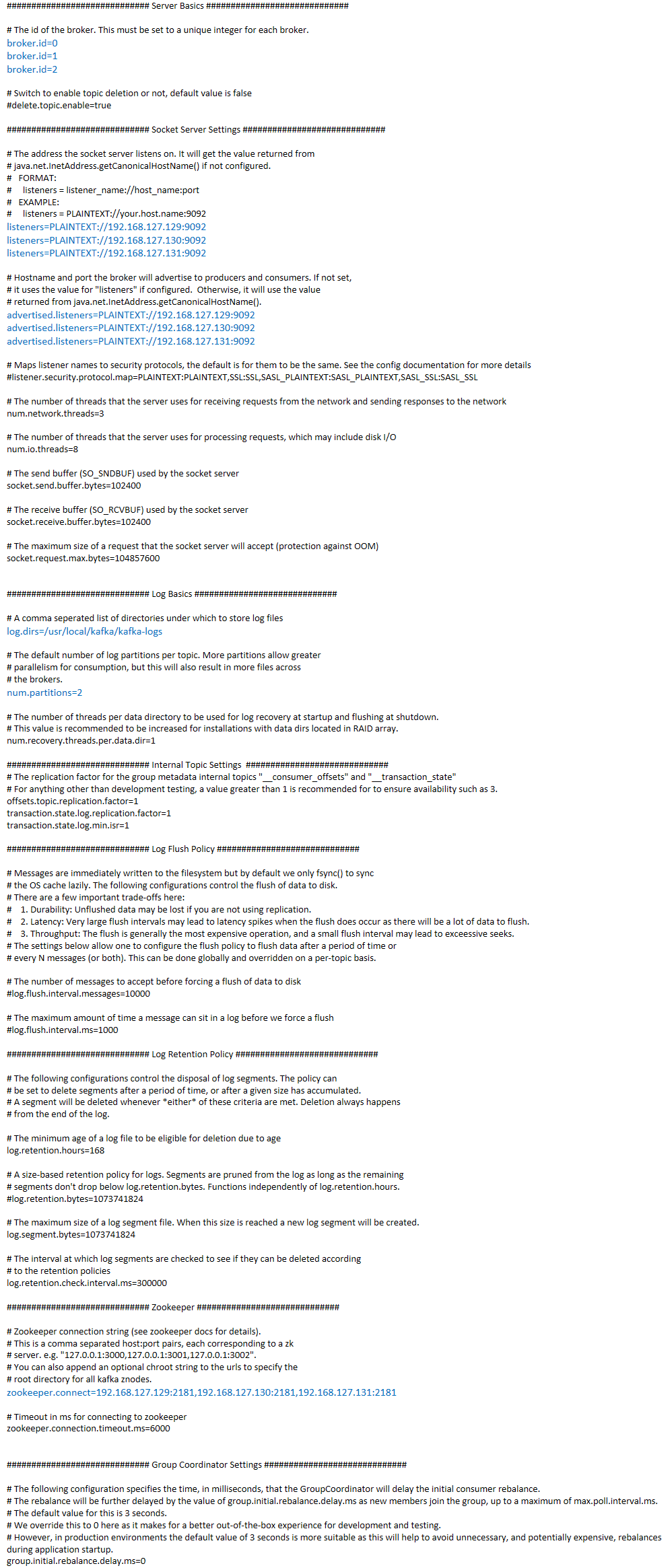

Configuration file: a few settings differ across the three machines; the ones that differ are easy to spot.
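As a rough illustration of which settings usually differ: in a multi-broker cluster each node needs its own broker.id and normally its own listeners address, while log.dirs and zookeeper.connect can be identical everywhere. The sketch below prints those lines for the three machines in this article. The broker ids 0 to 2, the Zookeeper port 2181, and the idea that Zookeeper runs on the same three hosts are assumptions for illustration, not values read from the screenshot.

```java
import java.util.Arrays;
import java.util.List;

class BrokerConfigSketch {
    // Builds the server.properties lines that typically matter per broker.
    static String render(int brokerId, String host, List<String> zkHosts) {
        StringBuilder zk = new StringBuilder();
        for (String h : zkHosts) {
            if (zk.length() > 0) zk.append(',');
            zk.append(h).append(":2181"); // assumed Zookeeper client port
        }
        return "broker.id=" + brokerId + "\n"                   // must be unique per broker
             + "listeners=PLAINTEXT://" + host + ":9092\n"      // this machine's own address
             + "log.dirs=/usr/local/kafka/kafka-logs\n"         // same on every machine
             + "zookeeper.connect=" + zk + "\n";                // same on every machine
    }

    public static void main(String[] args) {
        List<String> hosts = Arrays.asList(
                "192.168.127.129", "192.168.127.130", "192.168.127.131");
        for (int i = 0; i < hosts.size(); i++) {
            System.out.println("# " + hosts.get(i));
            System.out.print(render(i, hosts.get(i), hosts));
            System.out.println();
        }
    }
}
```

Compare the printed lines with your own server.properties on each machine; only broker.id and listeners should change from host to host.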

After editing, go to the kafka directory and create the log directory:

mkdir kafka-logs

Open Kafka's communication port, 9092:

firewall-cmd --zone=public --add-port=9092/tcp --permanent
systemctl restart firewalld

Start Zookeeper. If you do not know how to install Zookeeper, look through my articles; one of them covers Zookeeper installation.

Then start Kafka:

cd /usr/local/kafka/bin/
./kafka-server-start.sh /usr/local/kafka/config/server.properties &

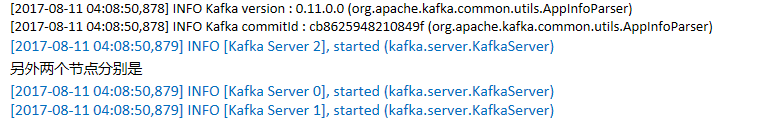

Once the final startup message appears, Kafka has started successfully. Next, enter the Zookeeper client.

Of the nodes listed there, everything except activemq, zookeeper, dubbo, and root is Kafka-related.
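Besides looking in Zookeeper, a quick TCP check can confirm that each broker accepts connections on port 9092 through the firewall. This is a minimal sketch using only the JDK; the class name PortCheck and the 2-second timeout are illustrative choices. An open port only shows that something is listening there, not that the cluster is healthy.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

class PortCheck {
    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    static boolean isOpen(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false; // refused, unreachable, or timed out
        }
    }

    public static void main(String[] args) {
        String[] brokers = {"192.168.127.129", "192.168.127.130", "192.168.127.131"};
        for (String host : brokers) {
            String state = isOpen(host, 9092, 2000) ? "open" : "closed";
            System.out.println(host + ":9092 " + state);
        }
    }
}
```

Run it from the machine where the producer and consumer will run, since that is the network path the clients will actually use.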

2. Getting Started

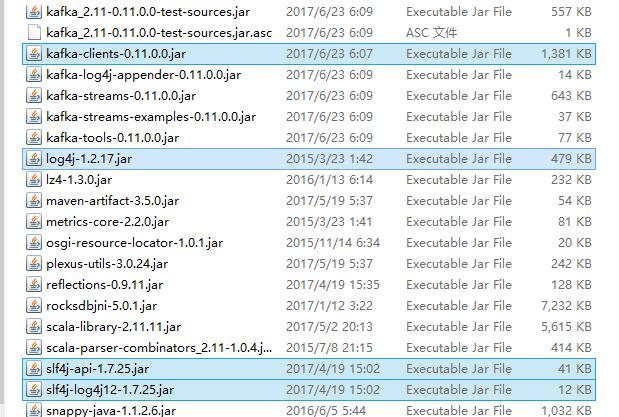

For the dependency JARs, look in Kafka's libs directory.
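If you compile and run the examples by hand rather than in an IDE, those JARs have to go on the classpath. The sketch below, which uses only the JDK, joins every .jar in a directory into one classpath string. The class name LibsClasspath is hypothetical, and the default path assumes the /usr/local/kafka install location used earlier.

```java
import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.stream.Collectors;
import java.util.stream.Stream;

class LibsClasspath {
    // Joins every .jar in the given directory into a classpath string, sorted by path.
    static String build(Path libsDir) throws IOException {
        try (Stream<Path> entries = Files.list(libsDir)) {
            return entries
                    .filter(p -> p.getFileName().toString().endsWith(".jar"))
                    .sorted()
                    .map(Path::toString)
                    .collect(Collectors.joining(File.pathSeparator));
        }
    }

    public static void main(String[] args) throws IOException {
        // Defaults to the install path from section 1; pass another directory as the first argument.
        Path libs = Paths.get(args.length > 0 ? args[0] : "/usr/local/kafka/libs");
        System.out.println(build(libs));
    }
}
```

The printed string can be passed to javac and java with the -cp option. The JVM also accepts a wildcard entry such as /usr/local/kafka/libs/* for the same purpose.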

Producer:

    import java.util.Properties;

    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.Producer;
    import org.apache.kafka.clients.producer.ProducerRecord;

    public class SimpleProducer {

        public static void main(String[] args) throws InterruptedException {
            Properties props = new Properties();
            // The three brokers set up in section 1
            props.put("bootstrap.servers", "192.168.127.129:9092,192.168.127.130:9092,192.168.127.131:9092");
            props.put("acks", "all");              // wait for all in-sync replicas to acknowledge
            props.put("retries", 0);               // do not retry failed sends
            props.put("batch.size", 16384);        // batch size in bytes
            props.put("linger.ms", 1);             // wait up to 1 ms to fill a batch
            props.put("buffer.memory", 33554432);  // 32 MB send buffer
            props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

            Producer<String, String> producer = new KafkaProducer<>(props);
            for (int i = 0; i < 10; i++) {
                // ProducerRecord<String, String>(String topic, String key, String value)
                producer.send(new ProducerRecord<String, String>("my-topic", "fruit" + i, "banana" + i));
                Thread.sleep(1000); // send one record per second
            }
            producer.close(); // flushes pending records before exiting
        }
    }

Consumer:

    import java.util.Arrays;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    public class SimpleConsumer {

        public static void main(String[] args) {
            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.127.129:9092,192.168.127.130:9092,192.168.127.131:9092");
            props.put("group.id", "testGroup");
            props.put("enable.auto.commit", "true");       // commit offsets automatically
            props.put("auto.commit.interval.ms", "1000");  // once per second
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
            // Topics to subscribe to; separate multiple topics with commas in the list
            consumer.subscribe(Arrays.asList("my-topic"));
            while (true) {
                // Wait up to 100 ms for new records
                ConsumerRecords<String, String> records = consumer.poll(100);
                for (ConsumerRecord<String, String> record : records) {
                    System.out.printf("offset = %d, key = %s, value = %s%n",
                            record.offset(), record.key(), record.value());
                }
            }
            // consumer.close(); // unreachable while the loop above never exits
        }
    }

Start the consumer first, then start the producer.

You will see the consumer print one line of data every second.
