Machine Learning -- Feature Engineering 1 -- Standardization

阿新 • Published: 2018-11-16

sklearn.preprocessing

https://scikit-learn.org/stable/modules/preprocessing.html

Let's walk through the data preprocessing workflow using sklearn:

Install: pip install -U scikit-learn

Location of the sklearn source code:

C:\Users\chen\AppData\Local\Programs\Python\Python37\Lib\site-packages\sklearn\preprocessing

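Standardizing data: many scikit-learn estimators expect standardized input, that is, features that look roughly like standard normally distributed data:

Gaussian with zero mean and unit variance.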
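Written out per feature, standardization can be sketched as follows. The symbols $x_{ij}$ (value of feature $j$ in sample $i$), $\mu_j$, $\sigma_j$ and $n$ (number of samples) are notation introduced here for illustration, and the formula assumes the population standard deviation (dividing by $n$), which is the convention StandardScaler follows.

```latex
\[
z_{ij} = \frac{x_{ij} - \mu_j}{\sigma_j},
\qquad
\mu_j = \frac{1}{n}\sum_{i=1}^{n} x_{ij},
\qquad
\sigma_j = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(x_{ij} - \mu_j\right)^{2}}
\]
```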

1. StandardScaler() standardizes the data to zero mean and unit variance:

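    from sklearn import preprocessing
    import numpy as np

    X_train = np.array([[1., -1., 2.],
                        [2., 0., 0.],
                        [0., 1., -1.]])

    # fit() learns the per-feature mean and standard deviation
    scaler = preprocessing.StandardScaler().fit(X_train)
    # transform() returns the standardized version of X_train
    scaler.transform(X_train)
    scaler.mean_   # per-feature mean
    scaler.scale_  # per-feature standard deviation

Source location: data.py (`_data.py` in newer scikit-learn releases)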
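As a quick check of the example above, the following sketch compares the scaler's output with a manual NumPy computation. The variable names `manual`, `col_means` and `col_stds` are introduced here for illustration only.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

X_train = np.array([[1., -1., 2.],
                    [2., 0., 0.],
                    [0., 1., -1.]])

scaler = StandardScaler().fit(X_train)
X_scaled = scaler.transform(X_train)

# Manual standardization: subtract the column mean, divide by the
# column standard deviation (NumPy's default is the population std).
manual = (X_train - X_train.mean(axis=0)) / X_train.std(axis=0)

# After scaling, every column should have mean ~0 and std ~1.
col_means = X_scaled.mean(axis=0)
col_stds = X_scaled.std(axis=0)

print(scaler.mean_)    # per-feature means learned by fit()
print(scaler.scale_)   # per-feature standard deviations learned by fit()
print(np.allclose(X_scaled, manual))
```

The learned statistics live on the fitted object (`mean_`, `scale_`), so the same scaler can later be applied to other data with `transform`.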

Constructor: controls whether to copy the input, whether to center on the mean, and whether to scale to unit variance.

    Parameters
    ----------
    copy : boolean, optional, default True
        If False, try to avoid a copy and do inplace scaling instead.
        This is not guaranteed to always work inplace; e.g. if the data is
        not a NumPy array or scipy.sparse CSR matrix, a copy may still be
        returned.

    with_mean : boolean, True by default
        If True, center the data before scaling.
        This does not work (and will raise an exception) when attempted on
        sparse matrices, because centering them entails building a dense
        matrix which in common use cases is likely to be too large to fit in
        memory.

    with_std : boolean, True by default
        If True, scale the data to unit variance (or equivalently,
        unit standard deviation).

    def __init__(self, copy=True, with_mean=True, with_std=True):
        self.with_mean = with_mean
        self.with_std = with_std
        self.copy = copy
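To see what the constructor flags do in practice, here is a minimal sketch that switches `with_mean` and `with_std` off one at a time. The names `only_scaled`, `only_centered` and `X_before` are illustrative and not part of scikit-learn.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

X = np.array([[1., -1., 2.],
              [2., 0., 0.],
              [0., 1., -1.]])
X_before = X.copy()

# with_mean=False: skip centering, only divide by the standard deviation.
only_scaled = StandardScaler(with_mean=False).fit_transform(X)

# with_std=False: only subtract the mean, leave the spread untouched.
only_centered = StandardScaler(with_std=False).fit_transform(X)

# copy=True (the default) leaves the input array unmodified.
print(np.array_equal(X, X_before))
print(only_scaled)
print(only_centered)
```

Disabling centering is what makes the scaler usable on sparse input, since subtracting the mean would turn the zeros into non-zeros.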

The fit function computes the mean and standard deviation:

    def fit(self, X, y=None):
        """Compute the mean and std to be used for later scaling.

        Parameters
        ----------
        X : {array-like, sparse matrix}, shape [n_samples, n_features]
            The data used to compute the mean and standard deviation
            used for later scaling along the features axis.

        y
            Ignored
        """
        # Reset internal state before fitting
        self._reset()
        return self.partial_fit(X, y)

The transform function standardizes the data by centering and scaling it with the fitted mean and standard deviation:

    def transform(self, X, y='deprecated', copy=None):
        """Perform standardization by centering and scaling

        Parameters
        ----------
        X : array-like, shape [n_samples, n_features]
            The data used to scale along the features axis.
        y : (ignored)
            .. deprecated:: 0.19
               This parameter will be removed in 0.21.
        copy : bool, optional (default: None)
            Copy the input X or not.
        """
        if not isinstance(y, string_types) or y != 'deprecated':
            warnings.warn("The parameter y on transform() is "
                          "deprecated since 0.19 and will be removed in 0.21",
                          DeprecationWarning)

        check_is_fitted(self, 'scale_')

        copy = copy if copy is not None else self.copy
        X = check_array(X, accept_sparse='csr', copy=copy, warn_on_dtype=True,
                        estimator=self, dtype=FLOAT_DTYPES,
                        force_all_finite='allow-nan')

        if sparse.issparse(X):
            if self.with_mean:
                raise ValueError(
                    "Cannot center sparse matrices: pass `with_mean=False` "
                    "instead. See docstring for motivation and alternatives.")
            if self.scale_ is not None:
                inplace_column_scale(X, 1 / self.scale_)
        else:
            if self.with_mean:
                X -= self.mean_
            if self.with_std:
                X /= self.scale_
        return X

2. Normalization: scale feature data into a given range with MinMaxScaler / MaxAbsScaler

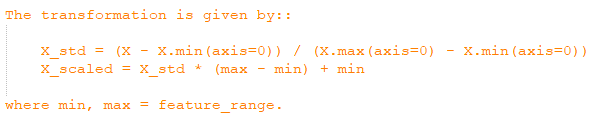

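MinMaxScaler scales the data to the range [0, 1].

Example:

    X_train = np.array([[1., -1., 2.],
                        [2., 0., 0.],
                        [0., 1., -1.]])
    min_max_scaler = preprocessing.MinMaxScaler()
    # fit_transform() learns the per-feature min/max and rescales in one step
    X_train_minmax = min_max_scaler.fit_transform(X_train)

Normalization formula: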
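As documented for scikit-learn's MinMaxScaler, each feature is rescaled as `X_std = (X - X.min) / (X.max - X.min)` and then mapped into the target range with `X_scaled = X_std * (max - min) + min`, where `(min, max)` is the `feature_range`. The sketch below reproduces this by hand; `col_min`, `col_max`, `manual`, `lo` and `hi` are names chosen here for illustration.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

X_train = np.array([[1., -1., 2.],
                    [2., 0., 0.],
                    [0., 1., -1.]])

# Default range [0, 1].
X_minmax = MinMaxScaler().fit_transform(X_train)

# The same computation done by hand, column by column.
col_min = X_train.min(axis=0)
col_max = X_train.max(axis=0)
manual = (X_train - col_min) / (col_max - col_min)

# A custom range, here [-1, 1], stretches and shifts the [0, 1] result.
lo, hi = -1.0, 1.0
X_custom = MinMaxScaler(feature_range=(lo, hi)).fit_transform(X_train)
manual_custom = manual * (hi - lo) + lo

print(X_minmax)
print(np.allclose(X_minmax, manual), np.allclose(X_custom, manual_custom))
```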

Constructor: the target range can be specified.

    Parameters
    ----------
    feature_range : tuple (min, max), default=(0, 1)
        Desired range of transformed data.

    copy : boolean, optional, default True
        Set to False to perform inplace row normalization and avoid a
        copy (if the input is already a numpy array).

    def __init__(self, feature_range=(0, 1), copy=True):
        self.feature_range = feature_range
        self.copy = copy

The fit method computes the per-feature maximum and minimum:

    def fit(self, X, y=None):
        """Compute the minimum and maximum to be used for later scaling.

        Parameters
        ----------
        X : array-like, shape [n_samples, n_features]
            The data used to compute the per-feature minimum and maximum
            used for later scaling along the features axis.
        """
        # Reset internal state before fitting
        self._reset()
        return self.partial_fit(X, y)

transform rescales the data into the specified range:

    def transform(self, X):
        """Scaling features of X according to feature_range.

        Parameters
        ----------
        X : array-like, shape [n_samples, n_features]
            Input data that will be transformed.
        """
        check_is_fitted(self, 'scale_')

        X = check_array(X, copy=self.copy, dtype=FLOAT_DTYPES,
                        force_all_finite="allow-nan")

        X *= self.scale_
        X += self.min_
        return X

MaxAbsScaler scales the data to the range [-1, 1].

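Example:

    X_train = np.array([[1., -1., 2.],
                        [2., 0., 0.],
                        [0., 1., -1.]])
    max_abs_scaler = preprocessing.MaxAbsScaler()
    # fit_transform() learns the per-feature max |x| and divides by it
    X_train_maxabs = max_abs_scaler.fit_transform(X_train)

fit computes the per-feature maximum absolute value: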

    def fit(self, X, y=None):
        """Compute the maximum absolute value to be used for later scaling.

        Parameters
        ----------
        X : {array-like, sparse matrix}, shape [n_samples, n_features]
            The data used to compute the per-feature minimum and maximum
            used for later scaling along the features axis.
        """
        # Reset internal state before fitting
        self._reset()
        return self.partial_fit(X, y)

transform divides the data by the maximum absolute value, scaling it into [-1, 1]:

    def transform(self, X):
        """Scale the data

        Parameters
        ----------
        X : {array-like, sparse matrix}
            The data that should be scaled.
        """
        check_is_fitted(self, 'scale_')
        X = check_array(X, accept_sparse=('csr', 'csc'), copy=self.copy,
                        estimator=self, dtype=FLOAT_DTYPES,
                        force_all_finite='allow-nan')

        if sparse.issparse(X):
            inplace_column_scale(X, 1.0 / self.scale_)
        else:
            X /= self.scale_
        return X

3. Handling sparse data
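The source excerpts above suggest how sparse input is treated: MaxAbsScaler accepts CSR/CSC matrices and scales columns in place, while StandardScaler refuses to center them. A minimal sketch of that behavior follows; the variable names (`X_dense`, `X_sparse`, `centering_error`, and so on) are illustrative.

```python
import numpy as np
from scipy import sparse
from sklearn.preprocessing import MaxAbsScaler, StandardScaler

X_dense = np.array([[1., -1., 2.],
                    [2., 0., 0.],
                    [0., 1., -1.]])
X_sparse = sparse.csr_matrix(X_dense)

# MaxAbsScaler only divides each column by its largest absolute value,
# so zeros stay zeros and the result is still a sparse matrix.
X_maxabs = MaxAbsScaler().fit_transform(X_sparse)

# StandardScaler works on sparse input only when centering is disabled.
X_std = StandardScaler(with_mean=False).fit_transform(X_sparse)

# Asking for centering on sparse data raises a ValueError.
try:
    StandardScaler(with_mean=True).fit(X_sparse)
    centering_error = None
except ValueError as exc:
    centering_error = str(exc)

print(sparse.issparse(X_maxabs))
print(X_maxabs.toarray())
print(centering_error)
```

Both scalers avoid shifting the data here, which is what keeps the zero entries, and therefore the sparsity, intact.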

4. Handling outliers

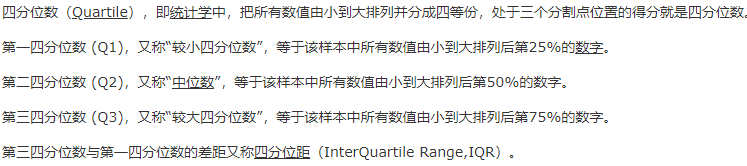

RobustScaler handles outliers by scaling with quantiles rather than the mean and variance:

Scale features using statistics that are robust to outliers.

This Scaler removes the median and scales the data according to the quantile range (defaults to IQR: Interquartile Range). The IQR is the range between the 1st quartile (25th quantile) and the 3rd quartile (75th quantile).

Constructor:

    Parameters
    ----------
    with_centering : boolean, True by default
        If True, center the data before scaling.
        This will cause ``transform`` to raise an exception when attempted on
        sparse matrices, because centering them entails building a dense
        matrix which in common use cases is likely to be too large to fit in
        memory.

    with_scaling : boolean, True by default
        If True, scale the data to interquartile range.

    quantile_range : tuple (q_min, q_max), 0.0 < q_min < q_max < 100.0
        Default: (25.0, 75.0) = (1st quantile, 3rd quantile) = IQR
        Quantile range used to calculate ``scale_``.

        .. versionadded:: 0.18

    copy : boolean, optional, default is True
        If False, try to avoid a copy and do inplace scaling instead.
        This is not guaranteed to always work inplace; e.g. if the data is
        not a NumPy array or scipy.sparse CSR matrix, a copy may still be
        returned.

    def __init__(self, with_centering=True, with_scaling=True,
                 quantile_range=(25.0, 75.0), copy=True):
        self.with_centering = with_centering
        self.with_scaling = with_scaling
        self.quantile_range = quantile_range
        self.copy = copy
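To make the median/IQR idea concrete, here is a small sketch on a single feature containing one extreme value. The toy data and the names `median`, `q25`, `q75` and `manual` are made up for illustration.

```python
import numpy as np
from sklearn.preprocessing import RobustScaler

# One feature, five samples; 100 is the outlier.
X = np.array([[1.], [2.], [3.], [4.], [100.]])

scaler = RobustScaler().fit(X)
X_robust = scaler.transform(X)

# Manual version: subtract the median, divide by the interquartile range.
median = np.median(X, axis=0)
q25, q75 = np.percentile(X, [25, 75], axis=0)
manual = (X - median) / (q75 - q25)

print(scaler.center_)  # the per-feature median
print(scaler.scale_)   # the per-feature interquartile range
print(X_robust.ravel())
print(np.allclose(X_robust, manual))
```

Because the median and quartiles ignore how far the outlier lies from the rest, the four ordinary samples stay within a small range around zero and only the outlier itself ends up far away.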