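LeNet_5 Network Implementation

阿新 • Published: 2018-11-19

Prerequisites for this chapter:

- If you have no background, please watch the deep learning video series first

- TensorFlow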

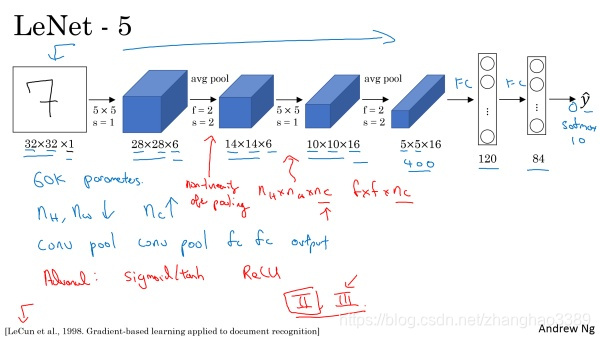

The figure above is Andrew Ng's highly visual diagram of the network architecture.
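The layer sizes in an architecture like this follow from simple arithmetic. The sketch below is only an illustration: it assumes convolutions and pooling without padding, 28x28 MNIST inputs, and the filter counts used in the code in this post. The helper names `out_size`, `lenet_shapes`, and `flat_size` are made up for this example.

```python
def out_size(size, window, stride):
    """Output width/height of a conv or pooling layer without padding."""
    return (size - window) // stride + 1


def lenet_shapes(size=28, channels=1):
    """Return (name, height, width, channels) for each stage of the network."""
    shapes = [("input", size, size, channels)]
    # (layer name, window size, stride, output channels); pooling keeps the channel count
    layers = [
        ("conv1", 5, 1, 6),
        ("pool1", 2, 2, 6),
        ("conv2", 5, 1, 16),
        ("pool2", 2, 2, 16),
    ]
    for name, window, stride, out_channels in layers:
        size = out_size(size, window, stride)
        shapes.append((name, size, size, out_channels))
    return shapes


def flat_size(shapes):
    """Number of features fed to the first fully connected layer."""
    _, height, width, channels = shapes[-1]
    return height * width * channels


if __name__ == "__main__":
    shapes = lenet_shapes()
    for name, height, width, channels in shapes:
        print(name, (height, width, channels))
    print("flattened:", flat_size(shapes))
```

With the default 28x28 input the spatial size goes 28, 24, 12, 8, 4, so the flattened vector has 4*4*16 = 256 features. Calling `lenet_shapes(size=32)`, the input size of the original LeNet-5, gives 5*5*16 = 400 instead.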

Next is my own implementation in TensorFlow:

LeNet_5 network

```python
# NOTE: this script uses the TensorFlow 1.x API (tf.placeholder, tf.layers, tf.Session).
import tensorflow as tf
import tensorflow.examples.tutorials.mnist.input_data as input_data

mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)  # load the dataset


class LeNet_5:
    """LeNet_5: recognizes handwritten digits in images; trained on grayscale images."""

    def __init__(self):
        self.in_x = tf.placeholder(dtype=tf.float32, shape=[None, 28, 28, 1], name="in_x")
        self.in_y = tf.placeholder(dtype=tf.float32, shape=[None, 10], name="in_y")
        # Convolution layer: (batch, 28, 28, 1) -> (batch, 24, 24, 6)
        self.conv1 = tf.layers.Conv2D(
            filters=6, kernel_size=5, strides=(1, 1),
            kernel_initializer=tf.truncated_normal_initializer(stddev=tf.sqrt(1 / 6)))
        # Pooling layer: (batch, 24, 24, 6) -> (batch, 12, 12, 6)
        self.pool1 = tf.layers.AveragePooling2D(pool_size=(2, 2), strides=(2, 2))
        # Convolution layer: (batch, 12, 12, 6) -> (batch, 8, 8, 16)
        self.conv2 = tf.layers.Conv2D(
            filters=16, kernel_size=5, strides=(1, 1),
            kernel_initializer=tf.truncated_normal_initializer(stddev=tf.sqrt(1 / 16)))
        # Pooling layer: (batch, 8, 8, 16) -> (batch, 4, 4, 16)
        self.pool2 = tf.layers.AveragePooling2D(pool_size=(2, 2), strides=(2, 2))
        # Fully connected layer: (batch, 4*4*16 = 256) -> (batch, 120)
        self.fc1 = tf.layers.Dense(
            120, kernel_initializer=tf.truncated_normal_initializer(stddev=tf.sqrt(1 / 120)))
        # Fully connected layer: (batch, 120) -> (batch, 10)
        self.fc2 = tf.layers.Dense(
            10, kernel_initializer=tf.truncated_normal_initializer(stddev=tf.sqrt(1 / 10)))

    def forward(self):
        # Sigmoid is used because this is a reproduction of LeNet5
        self.conv1_out = tf.nn.sigmoid(self.conv1(self.in_x))  # feed the images into conv1
        self.pool1_out = self.pool1(self.conv1_out)  # feed the conv1 output into pool1
        self.conv2_out = tf.nn.sigmoid(self.conv2(self.pool1_out))  # feed the pool1 output into conv2
        self.pool2_out = self.pool2(self.conv2_out)  # feed the conv2 output into pool2
        # Flatten the pool2 output to (batch, 256); 256 = 4*4*16 comes from the computed feature-map size
        self.flat = tf.reshape(self.pool2_out, shape=[-1, 256])
        self.fc1_out = tf.nn.sigmoid(self.fc1(self.flat))  # feed the flattened features into fc1
        self.fc2_out = tf.nn.softmax(self.fc2(self.fc1_out))  # feed the fc1 output into fc2

    def backward(self):  # backward computation
        self.loss = tf.reduce_mean((self.fc2_out - self.in_y) ** 2)  # mean squared error loss
        self.opt = tf.train.AdamOptimizer().minimize(self.loss)  # minimize the loss with Adam

    def acc(self):  # accuracy (optional; the network works without it)
        self.acc1 = tf.equal(tf.argmax(self.fc2_out, 1), tf.argmax(self.in_y, 1))
        self.accuracy = tf.reduce_mean(tf.cast(self.acc1, dtype=tf.float32))


if __name__ == '__main__':
    net = LeNet_5()  # create the LeNet_5 object
    net.forward()  # build the forward pass
    net.backward()  # build the loss and the training op
    net.acc()  # build the accuracy op
    init = tf.global_variables_initializer()  # op that initializes all TensorFlow variables
    with tf.Session() as sess:
        sess.run(init)
        for i in range(10000):
            # Take one batch of 100 images and labels from the MNIST training set
            train_x, train_y = mnist.train.next_batch(100)
            train_x_flat = train_x.reshape([-1, 28, 28, 1])  # reshape to (batch, 28, 28, 1)
            # Feed the batch to the network, run one training step, and fetch accuracy and loss
            acc, loss, _ = sess.run(fetches=[net.accuracy, net.loss, net.opt],
                                    feed_dict={net.in_x: train_x_flat, net.in_y: train_y})
            if i % 100 == 0:  # every 100 training steps, print training accuracy and loss
                print("Training accuracy:|", acc)
                print("Training loss:|", loss)
                # Take one batch of 100 test images and evaluate (no training op is run)
                test_x, test_y = mnist.test.next_batch(100)
                test_x_flat = test_x.reshape([-1, 28, 28, 1])  # same reshape as above
                test_acc, test_loss = sess.run(fetches=[net.accuracy, net.loss],
                                               feed_dict={net.in_x: test_x_flat, net.in_y: test_y})
                print('----------')
                print("Test accuracy:|", test_acc)  # print accuracy on the test batch
                print("Test loss:|", test_loss)  # print loss on the test batch
                print('--------------------')
```
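To make the loss and accuracy numbers concrete, here is a plain-Python sketch of what `backward()` and `acc()` compute: softmax outputs compared against one-hot labels with a mean squared error, and accuracy as the fraction of matching argmax positions. The logits and labels are made up for illustration (3 classes instead of 10), and the helper names are not part of the original code.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one list of logits."""
    largest = max(logits)
    exps = [math.exp(value - largest) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def mse_loss(probs_batch, onehot_batch):
    """Mean of squared differences over every element of the batch."""
    diffs = [(p - y) ** 2
             for probs, onehot in zip(probs_batch, onehot_batch)
             for p, y in zip(probs, onehot)]
    return sum(diffs) / len(diffs)


def accuracy(probs_batch, onehot_batch):
    """Fraction of samples whose most probable class is the labelled class."""
    hits = [probs.index(max(probs)) == onehot.index(max(onehot))
            for probs, onehot in zip(probs_batch, onehot_batch)]
    return sum(hits) / len(hits)


if __name__ == "__main__":
    # Made-up logits for 3 samples; the third one is deliberately misclassified.
    logits = [[2.0, 0.5, 0.1], [0.2, 0.1, 3.0], [1.0, 1.5, 0.3]]
    labels = [[1, 0, 0], [0, 0, 1], [1, 0, 0]]
    probs = [softmax(row) for row in logits]
    print("loss:", mse_loss(probs, labels))
    print("accuracy:", accuracy(probs, labels))
```

Two of the three made-up samples are classified correctly, so the accuracy here is 2/3. Because the loss is averaged over every element of the softmax output, its values stay small even when predictions are wrong, which is worth keeping in mind when reading the printed training loss.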

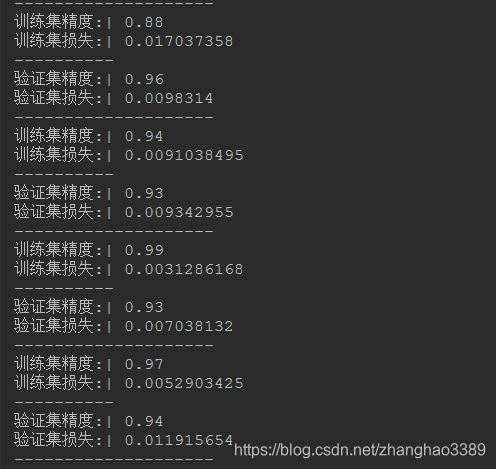

Finally, the screenshot above shows the training run.