機器學習小目標——Task8

1. 任務

【任務八-特徵工程2】分別用IV值和隨機森林挑選特徵,再構建模型,進行模型評估

2. 用IV值特徵選擇

2.1 IV值

IV的全稱是Information Value,中文意思是資訊價值,或者資訊量

2.1.1 IV的計算

為了介紹IV的計算方法,我們首先需要認識和理解另一個概念——WOE,因為IV的計算是以WOE為基礎的。

2.1.2 WOE

WOE的全稱是“Weight of Evidence”,即證據權重。WOE是對原始自變數的一種編碼形式。

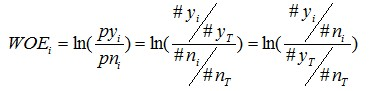

要對一個變數進行WOE編碼,需要首先把這個變數進行分組處理(也叫離散化、分箱等等,說的都是一個意思)。分組後,對於第i組,WOE的計算公式如下:

pyi是這個組中響應客戶(風險模型中,對應的是違約客戶,總之,指的是模型中預測變數取值為“是”或者說1的個體)佔所有樣本中所有響應客戶的比例,pni是這個組中未響應客戶佔樣本中所有未響應客戶的比例,#yi是這個組中響應客戶的數量,#ni是這個組中未響應客戶的數量,#yT是樣本中所有響應客戶的數量,#nT是樣本中所有未響應客戶的數量。

WOE越大,這種差異越大,這個分組裡的樣本響應的可能性就越大,WOE越小,差異越小,這個分組裡的樣本響應的可能性就越小

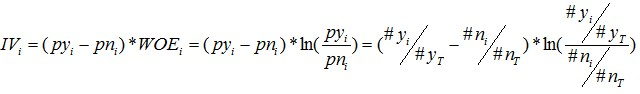

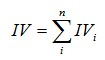

2.1.3 IV的計算公式

有了一個變數各分組的IV值,我們就可以計算整個變數的IV值,方法很簡單,就是把各分組的IV相加:

3. 用隨機森林特徵選擇

3.1 特徵重要性

隨機森林一個重要特徵:能夠計算單個特徵變數的重要性。

3.1.1 計算方法

-

對於隨機森林中的每一顆決策樹,使用相應的OOB(袋外資料)資料來計算它的袋外資料誤差,記為errOOB1.

-

隨機地對袋外資料OOB所有樣本的特徵X加入噪聲干擾(就可以隨機的改變樣本在特徵X處的值),再次計算它的袋外資料誤差,記為errOOB2.

-

假設隨機森林中有Ntree棵樹,那麼對於特徵X的重要性=∑(errOOB2-errOOB1)/Ntree,之所以可以用這個表示式來作為相應特徵的重要性的度量值是因為:若給某個特徵隨機加入噪聲之後,袋外的準確率大幅度降低,則說明這個特徵對於樣本的分類結果影響很大,也就是說它的重要程度比較高。

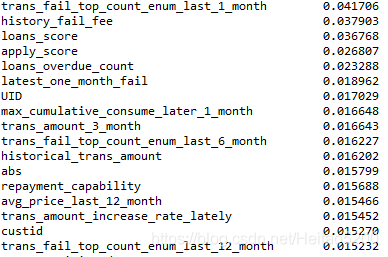

實驗結果

程式碼

#!/usr/bin/env python 3.6

#-*- coding:utf-8 -*-

# @File : IV.py

# @Date : 2018-11-27

# @Author : 黑桃

# @Software: PyCharm

#

# # -*- coding:utf-8 -*-

# # Author:Bemyid

# from fancyimpute import BiScaler, KNN, NuclearNormMinimization

import pandas as pd

import math

import numpy as np

from scipy import stats

from sklearn.utils.multiclass import type_of_target

from sklearn.linear_model import LogisticRegression

from sklearn import svm

from sklearn.tree import DecisionTreeClassifier

from xgboost.sklearn import XGBClassifier

from lightgbm.sklearn import LGBMClassifier

from mlxtend.classifier import StackingClassifier

from sklearn.metrics import accuracy_score, roc_auc_score

import warnings

import pickle

from sklearn.ensemble import RandomForestClassifier

warnings.filterwarnings("ignore")

path = "E:/MyPython/Machine_learning_GoGoGo/"

"""

0 讀取特徵

"""

print("0 讀取特徵")

f = open(path + 'feature/feature_V2.pkl', 'rb')

train, test, y_train, y_test = pickle.load(f)

data = pd.read_csv(path + 'data_set/data.csv',encoding='gbk')

f.close()

def predict(clf, train, test, y_train, y_test):

# 預測

y_train_pred = clf.predict(train)

y_test_pred = clf.predict(test)

y_train_proba = clf.predict_proba(train)[:, 1]

y_test_proba = clf.predict_proba(test)[:, 1]

# 準確率

print('[準確率]')

print('訓練集:', '%s' % accuracy_score(y_train, y_train_pred), end=' ')

print('測試集:', '%s' % accuracy_score(y_test, y_test_pred))

# auc取值:用roc_auc_score或auc

print('[auc值]')

print('訓練集:', '%s' % roc_auc_score(y_train, y_train_proba), end=' ')

print('測試集:', '%s' % roc_auc_score(y_test, y_test_proba))

"""

2 隨機森林挑選特徵

"""

forest = RandomForestClassifier(oob_score=True,n_estimators=100, random_state=0,n_jobs=1)

forest.fit(train, y_train)

print('袋外分數:', forest.oob_score_)

predict(forest,train, test, y_train, y_test)

feature_impotance1 = sorted(zip(map(lambda x: '%.4f'%x, forest.feature_importances_), list(train.columns)), reverse=True)

print(feature_impotance1[:10])

def woe(X, y, event=1):

res_woe = []

iv_dict = {}

for feature in X.columns:

x = X[feature].values

# 1) 連續特徵離散化

if type_of_target(x) == 'continuous':

x = discrete(x)

# 2) 計算該特徵的woe和iv

# woe_dict, iv = woe_single_x(x, y, feature, event)

woe_dict, iv = woe_single_x(x, y, feature, event)

iv_dict[feature] = iv

res_woe.append(woe_dict)

return iv_dict

def discrete(x):

# 使用5等分離散化特徵

res = np.zeros(x.shape)

for i in range(5):

point1 = stats.scoreatpercentile(x, i * 20)

point2 = stats.scoreatpercentile(x, (i + 1) * 20)

x1 = x[np.where((x >= point1) & (x <= point2))]

mask = np.in1d(x, x1)

res[mask] = i + 1 # 將[i, i+1]塊內的值標記成i+1

return res

def woe_single_x(x, y, feature, event=1):

# event代表預測正例的標籤

event_total = sum(y == event)

non_event_total = y.shape[-1] - event_total

iv = 0

woe_dict = {}

for x1 in set(x): # 遍歷各個塊

y1 = y.reindex(np.where(x == x1)[0])

event_count = sum(y1 == event)

non_event_count = y1.shape[-1] - event_count

rate_event = event_count / event_total

rate_non_event = non_event_count / non_event_total

if rate_event == 0:

rate_event = 0.0001

# woei = -20

elif rate_non_event == 0:

rate_non_event = 0.0001

# woei = 20

woei = math.log(rate_event / rate_non_event)

woe_dict[x1] = woei

iv += (rate_event - rate_non_event) * woei

return woe_dict, iv

import warnings

warnings.filterwarnings("ignore")

iv_dict = woe(train, y_train)

iv = sorted(iv_dict.items(), key = lambda x:x[1],reverse = True)

useless = []

for feature in train.columns:

if feature in [t[1] for t in feature_impotance1[50:]] and feature in [t[1] for t in feature_impotance2[50:]]:

useless.append(feature)

print(feature, iv_dict[feature])

train.drop(useless, axis = 1, inplace = True)

test.drop(useless, axis = 1, inplace = True)

importance = forest.feature_importances_

imp_result = pd.DataFrame(importance)

imp_result.columns=["impor"]

rf_impc = pd.Series(forest.feature_importances_, index=train.columns).sort_values(ascending=False)

lr = LogisticRegression(C = 0.1, penalty = 'l1')

svm_linear = svm.SVC(C = 0.01, kernel = 'linear', probability=True)

svm_poly = svm.SVC(C = 0.01, kernel = 'poly', probability=True)

svm_rbf = svm.SVC(gamma = 0.01, C =0.01 , probability=True)

svm_sigmoid = svm.SVC(C = 0.01, kernel = 'sigmoid',probability=True)

dt = DecisionTreeClassifier(max_depth=5,min_samples_split=50,min_samples_leaf=60, max_features=9, random_state =2333)

xgb = XGBClassifier(learning_rate =0.1, n_estimators=80, max_depth=3, min_child_weight=5,

gamma=0.2, subsample=0.8, colsample_bytree=0.8, reg_alpha=1e-5,

objective= 'binary:logistic', nthread=4,scale_pos_weight=1, seed=27)

lgb = LGBMClassifier(learning_rate =0.1, n_estimators=100, max_depth=3, min_child_weight=11,

gamma=0.1, subsample=0.5, colsample_bytree=0.9, reg_alpha=1e-5,

nthread=4,scale_pos_weight=1, seed=27)

sclf = StackingClassifier(classifiers=[svm_linear, svm_poly, svm_rbf, svm_sigmoid, dt, xgb, lgb],

meta_classifier=lr, use_probas=True,average_probas=False)

sclf.fit(train, y_train.values)

predict(sclf, train, test, y_train, y_test)

[準確率] 訓練集: 0.8264 測試集: 0.7917

[auc值] 訓練集: 0.8921 測試集: 0.7895