Flume-NG + HDFS + Hive: Log Collection and Analysis

    # cat /data/apache-flume-1.3.0-bin/conf/flume.conf

    # Define a memory channel called c1 on a1
    a1.channels.c1.type = memory

    # Define an Avro source called r1 on a1 and tell it
    # to bind to 0.0.0.0:41414. Connect it to channel c1.
    a1.sources.r1.channels = c1
    a1.sources.r1.type = avro
    a1.sources.r1.bind = 0.0.0.0
    a1.sources.r1.port = 41414

    a1.sinks.k1.type = hdfs
    a1.sinks.k1.channel = c1
    a1.sinks.k1.hdfs.path = hdfs://namenode:8020/user/hive/warehouse/squid
    a1.sinks.k1.hdfs.filePrefix = events-
    a1.sinks.k1.hdfs.fileType = DataStream
    a1.sinks.k1.hdfs.writeFormat = Text
    a1.sinks.k1.hdfs.rollSize = 0
    a1.sinks.k1.hdfs.rollInterval= 0
    a1.sinks.k1.hdfs.rollCount = 600000
    a1.sinks.k1.hdfs.rollInterval = 600

    # Finally, now that we've defined all of our components, tell
    # a1 which ones we want to activate.
    a1.channels = c1
    a1.sources = r1
    a1.sinks = k1

Run the agent:

bin/flume-ng agent --conf ./conf/ -f conf/flume.conf -Dflume.root.logger=DEBUG,console -n a1

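After running the command above, flume-ng starts the Avro source listening on port 41414 and waits for log data to arrive. The option "-Dflume.root.logger=DEBUG,console" is for debugging only: it lets you see clearly what happens when log data comes in. Do not use it in a production environment, or the terminal will be flooded with log output. The configuration file above writes the log data received on port 41414 to HDFS at hdfs://namenode:8020/user/hive/warehouse/squid and rolls over to a new file every 600000 events (log lines). Note that rollInterval is set twice in the file (0, then 600); if the later value takes effect, as is usual for Java properties files, a file is also rolled every 600 seconds.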
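To make the interaction between the three roll settings concrete, here is a minimal Python sketch of the decision as I understand it: a value of 0 disables that trigger, and the file rolls as soon as any enabled trigger is reached. The function and parameter names are hypothetical and are not part of Flume; this only models the logic and is not how the sink is implemented.

```python
def should_roll(events, size_bytes, age_seconds,
                roll_count, roll_size, roll_interval):
    """Return True if any enabled roll trigger has been reached.

    A setting of 0 disables the corresponding trigger (assumption based on
    the HDFS sink settings used in the configuration above).
    """
    by_count = roll_count > 0 and events >= roll_count
    by_size = roll_size > 0 and size_bytes >= roll_size
    by_time = roll_interval > 0 and age_seconds >= roll_interval
    return by_count or by_size or by_time


if __name__ == "__main__":
    # Settings from flume.conf, assuming the later rollInterval (600) wins.
    cfg = dict(roll_count=600000, roll_size=0, roll_interval=600)
    print(should_roll(600000, 50_000_000, 30, **cfg))  # count trigger reached
    print(should_roll(1000, 50_000_000, 30, **cfg))    # no trigger reached
    print(should_roll(1000, 50_000_000, 600, **cfg))   # time trigger reached
```

With rollSize = 0, the file size never causes a roll, no matter how large the file grows.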

Client:

bin/flume-ng avro-client --conf conf -H collector01 -p 41414 -F /root/1024.txt -Dflume.root.logger=DEBUG,console

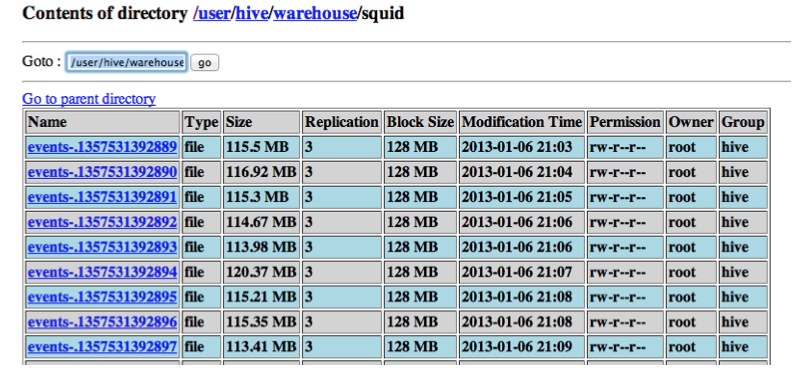

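Running this command on the client host uploads the log file /root/1024.txt to collector01:41414. flume-ng can of course also be configured to watch a log file for changes (tail -F logfile); see the documentation for the exec source. The logs collected in HDFS are shown in the screenshot.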

Analyzing the data with Hive:

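Hive analyzes the logs stored in HDFS. You write the SQL statements for the analysis you want, and Hive runs MapReduce jobs to carry it out. The log I tested with is a squid log; an entry looks like this: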

1356867313.430 109167 10.10.10.1 TCP_MISS/200 51498 CONNECT securepics.example.com:443 - HIER_DIRECT/securepics.example.com -

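To analyze this with Hive, you need to create a table on top of the data in HDFS. The most important step is writing a regular expression, based on the log format, that matches each column of a log entry:

    [ ]*([0-9]*)[^ ]*[ ]*([^ ]*) ([^ ]*) ([^ |^ /]*)/([0-9]*) ([0-9]*) ([^ ]*) ((?:([^:]*)://)?([^/:]+):?([0-9]*)?(/?[^ ]*)) ([^ ]*) ([^/]+)/([^ ]+) (.*)

Being limited to basic regular expressions is a real headache: it means shorthand such as \d, \s, and \w cannot be used. While writing the regex above, the site http://rubular.com was a great help, because it shows the match results live. Run the following command in Hive to create the table: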

hive>

    CREATE EXTERNAL TABLE IF NOT EXISTS squidtable(
      ttamp STRING, duration STRING, clientip STRING, action STRING,
      http_status STRING, bytes STRING, method STRING, uri STRING,
      proto STRING, uri_host STRING, uri_port STRING, uri_path STRING,
      username STRING, hierarchy STRING, server_ip STRING, content_type STRING)
    ROW FORMAT SERDE 'org.apache.hadoop.hive.contrib.serde2.RegexSerDe'
    WITH SERDEPROPERTIES (
      "input.regex" = "[ ]*([0-9]*)[^ ]*[ ]*([^ ]*) ([^ ]*) ([^ |^ /]*)/([0-9]*) ([0-9]*) ([^ ]*) ((?:([^:]*)://)?([^/:]+):?([0-9]*)?(/?[^ ]*)) ([^ ]*) ([^/]+)/([^ ]+) (.*)",
      "output.format.string" = "%1$s %2$s %3$s %4$s %5$s %6$s %7$s %8$s %9$s %10$s %11$s %12$s %13$s %14$s %15$s %16$s")
    STORED AS TEXTFILE
    LOCATION '/user/hive/warehouse/squid';
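Before loading real data, the regular expression can be checked locally against the sample log entry. The following is a rough Python sketch, not part of the original setup: it assumes Python's `re` module treats this particular pattern the same way as the Java regex engine used by the SerDe, and the helper name `parse_line` is made up for illustration. It uses a full match because a row is only useful if the whole line matches.

```python
import re

# The input.regex from the table definition, copied verbatim.
SQUID_REGEX = (
    r"[ ]*([0-9]*)[^ ]*[ ]*([^ ]*) ([^ ]*) ([^ |^ /]*)/([0-9]*) ([0-9]*) ([^ ]*) "
    r"((?:([^:]*)://)?([^/:]+):?([0-9]*)?(/?[^ ]*)) ([^ ]*) ([^/]+)/([^ ]+) (.*)"
)

# Column names in the same order as in the CREATE TABLE statement.
COLUMNS = [
    "ttamp", "duration", "clientip", "action", "http_status", "bytes",
    "method", "uri", "proto", "uri_host", "uri_port", "uri_path",
    "username", "hierarchy", "server_ip", "content_type",
]

# The sample squid log entry shown earlier.
SAMPLE = (
    "1356867313.430 109167 10.10.10.1 TCP_MISS/200 51498 CONNECT "
    "securepics.example.com:443 - HIER_DIRECT/securepics.example.com -"
)


def parse_line(line):
    """Return a dict mapping column name to captured value, or None if no match."""
    match = re.fullmatch(SQUID_REGEX, line)
    if match is None:
        return None
    return dict(zip(COLUMNS, match.groups()))


if __name__ == "__main__":
    for name, value in parse_line(SAMPLE).items():
        print(f"{name:>12}: {value!r}")
```

For the sample entry, the proto group does not participate in the match (the URI has no scheme), so Python reports it as None. The fractional part of the timestamp is consumed by an uncaptured part of the pattern, so ttamp holds only the whole seconds.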

Some example analysis queries:

    -- Number of log entries in the table
    select count(*) from squidtable;

    -- Number of log entries in the table with client IP 10.10.10.1
    select count(*) from squidtable where clientip = "10.10.10.1";

    -- A more advanced query: the 10 client IPs with the most requests
    -- (SORT BY orders rows within each reducer; ORDER BY guarantees a global order)
    SELECT clientip, COUNT(1) AS numrequest FROM squidtable GROUP BY clientip SORT BY numrequest DESC LIMIT 10;
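To sanity-check the result of the last query on a small sample of the log, the same count can be computed locally. This is only a sketch under assumptions: it takes the client IP to be the third whitespace-separated field, as in the sample squid entry, and the function name `top_clients` and the demo lines are made up for illustration.

```python
from collections import Counter


def top_clients(lines, n=10):
    """Count requests per client IP and return the n most frequent as (ip, count)."""
    counts = Counter()
    for line in lines:
        fields = line.split()
        if len(fields) >= 3:  # skip blank or truncated lines
            counts[fields[2]] += 1
    return counts.most_common(n)


if __name__ == "__main__":
    # Made-up entries in the same layout as the squid sample, for illustration only.
    demo = [
        "1356867313.430 109167 10.10.10.1 TCP_MISS/200 51498 CONNECT a.example.com:443 - HIER_DIRECT/a.example.com -",
        "1356867314.120 220 10.10.10.2 TCP_MISS/200 1024 GET http://b.example.com/ - HIER_DIRECT/b.example.com text/html",
        "1356867315.001 95 10.10.10.1 TCP_HIT/200 2048 GET http://b.example.com/x - HIER_NONE/- text/html",
    ]
    for ip, numrequest in top_clients(demo):
        print(ip, numrequest)
```

This only makes sense for files small enough to read on one machine; for the full data set in HDFS, the Hive query is the right tool.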