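AI Application Development Fundamentals "Dummies" Series, Appendix: Basic Mathematical Derivative Formulas

Derivative formulas for basic functions

Appendix: Basic mathematical derivative formulas

This article mostly collects derivative formulas and derivations for mathematical functions you may need later. It is theoretical background: interested readers can go through it carefully, and those who want to get hands-on right away can skip it.

Bookmark it so you can come back and look things up later, like a dictionary.

Let's get to it!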

- \(y=c\)

\[y'=0 \tag 1\]

- \(y=x^a\)

\[y'=ax^{a-1} \tag 2\]

- \(y=\log_a x\)

\[y'=\frac{1}{x}\log_a e=\frac{1}{x\ln a} \tag 3\]

\[(\text{since } \log_a e=\frac{1}{\log_e a}=\frac{1}{\ln a})\]

- \(y=\ln x\)

\[y'=\frac{1}{x} \tag4\]

- \(y=a^x\)

\[y'=a^x\ln a \tag5\]

- \(y=e^x\)

\[y'=e^x \tag6\]

- \(y=e^{-x}\)

\[y'=-e^{-x} \tag7\]

- Sine function \(y=\sin(x)\)

\[y'=\cos(x) \tag 8\]

- Cosine function \(y=\cos(x)\)

\[y'=-\sin(x) \tag 9\]

- Tangent function \(y=\tan(x)\)

\[y'=\sec^2(x)=\frac{1}{\cos^2 x} \tag{10}\]

- Cotangent function \(y=\cot(x)\)

\[y'=-\csc^2(x) \tag{11}\]

- Arcsine function \(y=\arcsin(x)\)

\[y'=\frac{1}{\sqrt{1-x^2}} \tag{12}\]

- Arccosine function \(y=\arccos(x)\)

\[y'=-\frac{1}{\sqrt{1-x^2}} \tag{13}\]

- Arctangent function \(y=\arctan(x)\)

\[y'=\frac{1}{1+x^2} \tag{14}\]

- Arccotangent function \(y=\operatorname{arccot}(x)\)

\[y'=-\frac{1}{1+x^2} \tag{15}\]

- Hyperbolic sine function \(y=\sinh(x)=(e^x-e^{-x})/2\)

\[y'=\cosh(x) \tag{16}\]

- Hyperbolic cosine function \(y=\cosh(x)=(e^x+e^{-x})/2\)

\[y'=\sinh(x) \tag{17}\]

- Hyperbolic tangent function \(y=\tanh(x)=(e^x-e^{-x})/(e^x+e^{-x})\)

\[y'=\operatorname{sech}^2(x)=1-\tanh^2(x) \tag{18}\]

- Hyperbolic cotangent function \(y=\coth(x)=(e^x+e^{-x})/(e^x-e^{-x})\)

\[y'=-\operatorname{csch}^2(x) \tag{19}\]

- Hyperbolic secant function \(y=\operatorname{sech}(x)=2/(e^x+e^{-x})\)

\[y'=-\operatorname{sech}(x)*\tanh(x) \tag{20}\]

- Hyperbolic cosecant function \(y=\operatorname{csch}(x)=2/(e^x-e^{-x})\)

\[y'=-\operatorname{csch}(x)*\coth(x) \tag{21}\]
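A quick way to gain confidence in a table like this is to compare each closed form against a numerical derivative. The sketch below is only an illustration: the helper `numeric_derivative`, the list `CASES`, the chosen bases and exponents, and the test point `x = 0.5` are assumptions made for this example, not part of the original text.

```python
import math

def numeric_derivative(f, x, h=1e-6):
    """Central-difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2 * h)

# Each entry: (function, closed-form derivative from the table, formula number).
CASES = [
    (lambda x: x ** 3, lambda x: 3 * x ** 2, 2),
    (lambda x: math.log(x, 2), lambda x: 1 / (x * math.log(2)), 3),
    (lambda x: 2 ** x, lambda x: 2 ** x * math.log(2), 5),
    (math.tan, lambda x: 1 / math.cos(x) ** 2, 10),
    (math.asin, lambda x: 1 / math.sqrt(1 - x ** 2), 12),
    (math.atan, lambda x: 1 / (1 + x ** 2), 14),
    (math.tanh, lambda x: 1 - math.tanh(x) ** 2, 18),
]

def max_table_error(x=0.5):
    """Largest gap between the numerical and the closed-form derivative at x."""
    return max(abs(numeric_derivative(f, x) - df(x)) for f, df, _ in CASES)

print(max_table_error())  # should be tiny if the formulas are right
```

The test point has to lie inside every function's domain (for example \(|x|<1\) for the arcsine), which is why 0.5 is used here.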

Arithmetic rules for derivatives

- \[[u(x) + v(x)]' = u'(x) + v'(x) \tag{30}\]

- \[[u(x) - v(x)]' = u'(x) - v'(x) \tag{31}\]

- \[[u(x)*v(x)]' = u'(x)*v(x) + v'(x)*u(x) \tag{32}\]

- \[[\frac{u(x)}{v(x)}]'=\frac{u'(x)v(x)-v'(x)u(x)}{v^2(x)} \tag{33}\]
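The product rule (32) and quotient rule (33) can be checked the same way. This is a minimal sketch under the assumption that \(u(x)=\sin x\) and \(v(x)=e^x\); those two functions and all the names in the code are chosen only for illustration.

```python
import math

def numeric_derivative(f, x, h=1e-6):
    """Central-difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2 * h)

# Illustrative choice: u(x) = sin(x), v(x) = exp(x), with known derivatives.
u, du = math.sin, math.cos
v, dv = math.exp, math.exp

def product_rule(x):
    """Formula (32): (u*v)' = u'*v + v'*u."""
    return du(x) * v(x) + dv(x) * u(x)

def quotient_rule(x):
    """Formula (33): (u/v)' = (u'*v - v'*u) / v^2."""
    return (du(x) * v(x) - dv(x) * u(x)) / v(x) ** 2

x0 = 0.7
print(product_rule(x0), numeric_derivative(lambda t: u(t) * v(t), x0))
print(quotient_rule(x0), numeric_derivative(lambda t: u(t) / v(t), x0))
```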

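Partial derivatives

- Let \(Z=f(x,y)\)

The partial derivative of Z with respect to x can be understood as the ordinary derivative of Z with respect to x while y is held constant:

\[Z'_x=f'_x(x,y)=\frac{\partial{Z}}{\partial{x}} \tag{40}\]

Likewise, the partial derivative of Z with respect to y is the ordinary derivative of Z with respect to y while x is held constant:

\[Z'_y=f'_y(x,y)=\frac{\partial{Z}}{\partial{y}} \tag{41}\]

For a function of two variables, the partial derivative has a geometric meaning: for any value \(y=y_0\), slice the surface of the function at \(y=y_0\) to get the curve \(Z = f(x, y_0)\); the first derivative of this curve is the partial derivative of Z with respect to x. In the same way, slicing at \(x=x_0\) gives the partial derivative of Z with respect to y.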
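The "hold the other variable constant" idea translates directly into code. The following sketch assumes an example function \(f(x,y)=x^2y+\sin y\), which does not appear in the original text, and compares numerical partial derivatives with the hand-computed ones \(2xy\) and \(x^2+\cos y\).

```python
import math

def f(x, y):
    """Illustrative function: f(x, y) = x^2 * y + sin(y)."""
    return x ** 2 * y + math.sin(y)

def partial_x(g, x, y, h=1e-6):
    """Differentiate along x while y stays fixed (the slice y = y0)."""
    return (g(x + h, y) - g(x - h, y)) / (2 * h)

def partial_y(g, x, y, h=1e-6):
    """Differentiate along y while x stays fixed (the slice x = x0)."""
    return (g(x, y + h) - g(x, y - h)) / (2 * h)

x0, y0 = 1.5, 0.4
print(partial_x(f, x0, y0), 2 * x0 * y0)             # numerical vs. 2xy
print(partial_y(f, x0, y0), x0 ** 2 + math.cos(y0))  # numerical vs. x^2 + cos(y)
```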

Derivatives of composite functions (chain rule)

- If \(y=f(u), u=g(x)\), then

\[y'_x = f'(u)*u'(x) = y'_u*u'_x=\frac{dy}{du}*\frac{du}{dx} \tag{50}\]

- If \(y=f(u),u=g(v),v=h(x)\), then

\[ \frac{dy}{dx}=f'(u)*g'(v)*h'(x)=\frac{dy}{du}*\frac{du}{dv}*\frac{dv}{dx} \tag{51} \]

- If \(Z=f(U,V)\) becomes a composite function \(Z=f[g(x,y),h(x,y)]\) of x and y through the intermediate variables \(U = g(x,y), V=h(x,y)\),

then

\[ \frac{\partial{Z}}{\partial{x}}=\frac{\partial{Z}}{\partial{U}} * \frac{\partial{U}}{\partial{x}} + \frac{\partial{Z}}{\partial{V}} * \frac{\partial{V}}{\partial{x}} \tag{52} \]

\[ \frac{\partial{Z}}{\partial{y}}=\frac{\partial{Z}}{\partial{U}} * \frac{\partial{U}}{\partial{y}} + \frac{\partial{Z}}{\partial{V}} * \frac{\partial{V}}{\partial{y}} \]
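To see the two-variable chain rule at work, here is a small sketch. The concrete functions \(U=xy\), \(V=x+y\) and \(Z=U^2+\sin V\) are assumptions picked for this example; the code sums the contributions through U and V as in formula (52) and compares the result with a numerical derivative.

```python
import math

def g(x, y):
    return x * y                   # U = g(x, y), illustrative choice

def h(x, y):
    return x + y                   # V = h(x, y), illustrative choice

def f(U, V):
    return U ** 2 + math.sin(V)    # Z = f(U, V), illustrative choice

def chain_rule_dZ_dx(x, y):
    """dZ/dx = dZ/dU * dU/dx + dZ/dV * dV/dx, as in formula (52)."""
    U, V = g(x, y), h(x, y)
    return (2 * U) * y + math.cos(V) * 1.0

def chain_rule_dZ_dy(x, y):
    """dZ/dy = dZ/dU * dU/dy + dZ/dV * dV/dy."""
    U, V = g(x, y), h(x, y)
    return (2 * U) * x + math.cos(V) * 1.0

def numeric_dZ_dx(x, y, eps=1e-6):
    return (f(g(x + eps, y), h(x + eps, y)) - f(g(x - eps, y), h(x - eps, y))) / (2 * eps)

def numeric_dZ_dy(x, y, eps=1e-6):
    return (f(g(x, y + eps), h(x, y + eps)) - f(g(x, y - eps), h(x, y - eps))) / (2 * eps)

print(chain_rule_dZ_dx(0.8, -0.3), numeric_dZ_dx(0.8, -0.3))
print(chain_rule_dZ_dy(0.8, -0.3), numeric_dZ_dy(0.8, -0.3))
```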

Matrix derivatives

If \(A,B,X\) are all matrices,

then

\[ B\frac{\partial{(AX)}}{\partial{X}} = A^TB \tag{60} \]

\[ B\frac{\partial{(XA)}}{\partial{X}} = BA^T \tag{61} \]

\[ \frac{\partial{(X^TA)}}{\partial{X}} = \frac{\partial{(A^TX)}}{\partial{X}}=A \tag{62} \]

\[ \frac{\partial{(A^TXB)}}{\partial{X}} = AB^T \tag{63} \]

\[ \frac{\partial{(A^TX^TB)}}{\partial{X}} = BA^T \tag{64} \]
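Formula (63) can be checked numerically in the case where \(A\) and \(B\) are column vectors, so that \(A^TXB\) is a scalar. The sketch below uses plain Python lists; the sizes, the sample values and the helper names are assumptions made for illustration.

```python
def scalar_f(A, X, B):
    """f = A^T X B for vectors A (length N), B (length M) and an N x M matrix X."""
    return sum(A[i] * X[i][j] * B[j] for i in range(len(A)) for j in range(len(B)))

def analytic_gradient(A, B):
    """Formula (63): df/dX = A B^T, so entry (i, j) equals A[i] * B[j]."""
    return [[a * b for b in B] for a in A]

def numeric_gradient(A, X, B, h=1e-6):
    """Perturb one entry of X at a time and take a central difference."""
    grad = []
    for i in range(len(A)):
        row = []
        for j in range(len(B)):
            plus = [r[:] for r in X]
            minus = [r[:] for r in X]
            plus[i][j] += h
            minus[i][j] -= h
            row.append((scalar_f(A, plus, B) - scalar_f(A, minus, B)) / (2 * h))
        grad.append(row)
    return grad

A = [1.0, -2.0, 0.5]
B = [3.0, 4.0]
X = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]
print(analytic_gradient(A, B))
print(numeric_gradient(A, X, B))
```

Because \(f\) is linear in \(X\), the gradient does not depend on the values in `X`; only its shape matters.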

Derivatives of activation functions

Sigmoid function: \(A = \frac{1}{1+e^{-Z}}\)

Using formula 33, let \(u=1,v=1+e^{-Z}\); then

\[A'_Z = \frac{u'v-v'u}{v^2}=\frac{0-(1+e^{-Z})'}{(1+e^{-Z})^2} \tag{70}\]

\[=\frac{e^{-Z}}{(1+e^{-Z})^2}=\frac{(1+e^{-Z})-1}{(1+e^{-Z})^2}\]

\[=\frac{1}{1+e^{-Z}}-(\frac{1}{1+e^{-Z}})^2\]

\[=A-A^2=A(1-A)\]
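The result \(A'_Z=A(1-A)\) is convenient in code because the derivative reuses the forward-pass output. A minimal sketch follows; the function names are chosen for this example, and the numerical derivative is included only as a cross-check.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_prime(z):
    """Derivative written in terms of the output A: A * (1 - A)."""
    a = sigmoid(z)
    return a * (1.0 - a)

def numeric_derivative(f, z, h=1e-6):
    """Central-difference approximation of f'(z)."""
    return (f(z + h) - f(z - h)) / (2 * h)

for z in (-2.0, 0.0, 3.0):
    print(z, sigmoid_prime(z), numeric_derivative(sigmoid, z))
```

At \(Z=0\) the output is \(A=0.5\), so the derivative takes its maximum value \(0.25\).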

tanh function: \(A=\frac{e^{Z}-e^{-Z}}{e^{Z}+e^{-Z}}\)

Using formula 33, let \(u={e^{Z}-e^{-Z}},v=e^{Z}+e^{-Z}\); then

\[A'_Z=\frac{u'v-v'u}{v^2} \tag{71}\]

\[=\frac{(e^{Z}-e^{-Z})'(e^{Z}+e^{-Z})-(e^{Z}+e^{-Z})'(e^{Z}-e^{-Z})}{(e^{Z}+e^{-Z})^2}\]

\[=\frac{(e^{Z}+e^{-Z})(e^{Z}+e^{-Z})-(e^{Z}-e^{-Z})(e^{Z}-e^{-Z})}{(e^{Z}+e^{-Z})^2}\]

\[=\frac{(e^{Z}+e^{-Z})^2-(e^{Z}-e^{-Z})^2}{(e^{Z}+e^{-Z})^2}\]

\[=1-(\frac{e^{Z}-e^{-Z}}{e^{Z}+e^{-Z}})^2=1-A^2\]
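The same kind of check works for tanh. The sketch below builds tanh from its exponential definition, as in the derivation, and compares \(1-A^2\) with a numerical derivative; the function names are assumptions for this example.

```python
import math

def tanh_from_definition(z):
    """tanh written exactly as in the text: (e^z - e^-z) / (e^z + e^-z)."""
    return (math.exp(z) - math.exp(-z)) / (math.exp(z) + math.exp(-z))

def tanh_prime(z):
    """Derivative written in terms of the output A: 1 - A^2."""
    a = tanh_from_definition(z)
    return 1.0 - a * a

def numeric_derivative(f, z, h=1e-6):
    """Central-difference approximation of f'(z)."""
    return (f(z + h) - f(z - h)) / (2 * h)

for z in (-1.5, 0.0, 2.0):
    print(z, tanh_prime(z), numeric_derivative(tanh_from_definition, z))
```

For large \(|Z|\) the exponential form can overflow, so in practice a library routine such as `math.tanh` is the safer choice.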

反向傳播四大公式推導

著名的反向傳播四大公式是:

\[\delta^{L} = \nabla_{a}C \odot \sigma_{'}(Z^L) \tag{80}\]

\[\delta^{l} = ((W^{l + 1})^T\delta^{l+1})\odot\sigma_{'}(Z^l) \tag{81}\]

\[\frac{\partial{C}}{\partial{b_j^l}} = \delta_j^l \tag{82}\]

\[\frac{\partial{C}}{\partial{w_{jk}^{l}}} = a_k^{l-1}\delta_j^l \tag{83}\]

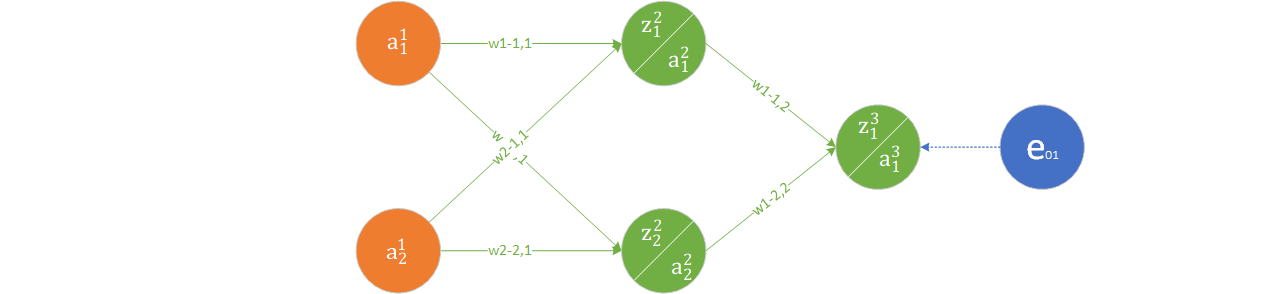

下面我們用一個簡單的兩個神經元的全連線神經網路來直觀解釋一下這四個公式,

每個結點的輸入輸出標記如圖上所示,使用MSE作為計算loss的函式,那麼可以得到這張計算圖中的計算過公式如下所示:

\[e_{01} = \frac{1}{2}(y-a_1^3)^2\]

\[a_1^3 = sigmoid(z_1^3)\]

\[z_1^3 = (w_{11}^2 * a_1^2 + w_{12}^2 * a_2^2 + b_1^3)\]

\[a_1^2 = sigmoid(z_1^2)\]

\[z_1^2 = (w_{11}^1 * a_1^1 + w_{12}^1 * a_2^1 + b_1^2)\]

我們按照反向傳播中梯度下降的原理來對損失求梯度,計算過程如下:

\[\frac{\partial{e_{o1}}}{\partial{w_{11}^2}} = \frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\frac{\partial{z_{1}^3}}{\partial{w_{11}^2}}=\frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}a_{1}^2\]

\[\frac{\partial{e_{o1}}}{\partial{w_{12}^2}} = \frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\frac{\partial{z_{1}^3}}{\partial{w_{12}^2}}=\frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}a_{2}^2\]

\[\frac{\partial{e_{o1}}}{\partial{w_{11}^1}} = \frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\frac{\partial{z_{1}^3}}{\partial{a_{1}^2}}\frac{\partial{a_{1}^2}}{\partial{z_{1}^2}}\frac{\partial{z_{1}^2}}{\partial{w_{11}^1}} = \frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\frac{\partial{z_{1}^3}}{\partial{a_{1}^2}}\frac{\partial{a_{1}^2}}{\partial{z_{1}^2}}a_1^1\]

\[=\frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}w_{11}^2\frac{\partial{a_{1}^2}}{\partial{z_{1}^2}}a_1^1\]

\[\frac{\partial{e_{o1}}}{\partial{w_{12}^1}} = \frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\frac{\partial{z_{1}^3}}{\partial{a_{1}^2}}\frac{\partial{a_{1}^2}}{\partial{z_{1}^2}}\frac{\partial{z_{1}^2}}{\partial{w_{12}^1}} = \frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\frac{\partial{z_{1}^3}}{\partial{a_{1}^2}}\frac{\partial{a_{1}^2}}{\partial{z_{1}^2}}a_2^1\]

\[=\frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}w_{11}^2\frac{\partial{a_{1}^2}}{\partial{z_{1}^2}}a_2^1\]

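In the expressions above, \(\frac{\partial{a}}{\partial{z}}\) is the derivative of the activation function, that is, the \(\sigma^{'}(z)\) term. Notice that the partial derivatives share the common factor \(\frac{\partial{e_{o1}}}{\partial{a_{1}^3}}\frac{\partial{a_{1}^3}}{\partial{z_{1}^3}}\). We denote it by \(\delta\) and write it in matrix form,

that is: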

\[\delta^L = [\frac{\partial{e_{o1}}}{\partial{a_{i}^L}}\frac{\partial{a_{i}^L}}{\partial{z_{i}^L}}] = \nabla_{a}C\odot\sigma^{'}(Z^L)\]

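In the expression above, \([a_i]\) denotes a matrix whose elements are \(a_i\), \(\nabla_{a}C\) denotes the gradient of the loss \(C\) with respect to \(a\), and \(\odot\) denotes the element-wise product of matrices (elements at corresponding positions are multiplied).

From the derivation above, we obtain the recurrence for the \(\delta\) matrix: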

\[\delta^{L-1} = (W^L)^T[\frac{\partial{e_{o1}}}{\partial{a_{i}^L}}\frac{\partial{a_{i}^L}}{\partial{z_{i}^L}}]\odot\sigma^{'}(Z^{L - 1})\]

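So during backpropagation we only need to apply this recurrence layer by layer, computing each \(\delta^l\) from the \(\delta^{l+1}\) of the layer after it, which has already been computed.

This result is quite intuitive, but the derivation above is not rigorous. Below we derive it again from stricter mathematical definitions, which first requires a few additional definitions.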
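The four equations can be tried out on a tiny network before going through the rigorous derivation. The sketch below is written under several assumptions: a 2-2-1 sigmoid network with the MSE loss from the text, a second hidden neuron that mirrors the first, and arbitrary sample weights and inputs. It computes the gradients with equations (80) to (83) and compares the first-layer weight gradient with a numerical one.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(W1, b1, W2, b2, x):
    """2-2-1 sigmoid network: returns hidden activations a2 and output a3."""
    z2 = [W1[j][0] * x[0] + W1[j][1] * x[1] + b1[j] for j in range(2)]
    a2 = [sigmoid(z) for z in z2]
    z3 = W2[0] * a2[0] + W2[1] * a2[1] + b2
    return a2, sigmoid(z3)

def loss(W1, b1, W2, b2, x, y):
    """MSE loss e = 1/2 * (y - a3)^2."""
    return 0.5 * (y - forward(W1, b1, W2, b2, x)[1]) ** 2

def backprop(W1, b1, W2, b2, x, y):
    a2, a3 = forward(W1, b1, W2, b2, x)
    delta3 = (a3 - y) * a3 * (1 - a3)                                   # (80)
    delta2 = [W2[j] * delta3 * a2[j] * (1 - a2[j]) for j in range(2)]   # (81)
    grad_W2 = [a2[k] * delta3 for k in range(2)]                        # (83)
    grad_W1 = [[x[k] * delta2[j] for k in range(2)] for j in range(2)]  # (83)
    # By (82) the bias gradients are the deltas themselves.
    return grad_W1, delta2, grad_W2, delta3

def numeric_grad_W1(W1, b1, W2, b2, x, y, h=1e-6):
    """Central-difference gradient of the loss with respect to each entry of W1."""
    grad = [[0.0, 0.0], [0.0, 0.0]]
    for j in range(2):
        for k in range(2):
            plus = [row[:] for row in W1]
            minus = [row[:] for row in W1]
            plus[j][k] += h
            minus[j][k] -= h
            grad[j][k] = (loss(plus, b1, W2, b2, x, y)
                          - loss(minus, b1, W2, b2, x, y)) / (2 * h)
    return grad

# Arbitrary sample parameters and data, chosen only for this illustration.
W1 = [[0.15, 0.20], [0.25, 0.30]]
b1 = [0.35, 0.35]
W2 = [0.40, 0.45]
b2 = 0.60
x, y = [0.05, 0.10], 0.01

print(backprop(W1, b1, W2, b2, x, y)[0])
print(numeric_grad_W1(W1, b1, W2, b2, x, y))
```

Comparing analytic and numerical gradients like this is a common sanity check before trusting a hand-written backward pass.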

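Definition of the derivative of a scalar with respect to a matrix

Suppose \(y\) is a scalar and \(X\) is a matrix of size \(N \times M\), with \(y=f(X)\), where \(f()\) is a function. Let us see how \(df\) should be computed.

We start with the definition: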

\[ df = \sum_j^M\sum_i^N \frac{\partial{f}}{\partial{x_{ij}}}dx_{ij} \]

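Next we introduce the trace of a matrix. The trace of a square matrix is the sum of its diagonal elements, that is:

\[ tr(X) = \sum_i x_{ii} \]

With the trace in hand, can the gradient computation above be expressed in terms of it?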

\[ \frac{\partial{f}}{\partial{X}} = \begin{pmatrix} \frac{\partial{f}}{\partial{x_{11}}} & \frac{\partial{f}}{\partial{x_{12}}} & \dots & \frac{\partial{f}}{\partial{x_{1M}}} \\ \frac{\partial{f}}{\partial{x_{21}}} & \frac{\partial{f}}{\partial{x_{22}}} & \dots & \frac{\partial{f}}{\partial{x_{2M}}} \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial{f}}{\partial{x_{N1}}} & \frac{\partial{f}}{\partial{x_{N2}}} & \dots & \frac{\partial{f}}{\partial{x_{NM}}} \end{pmatrix} \tag{90} \]

\[ dX = \begin{pmatrix} dx_{11} & d{x_{12}} & \dots & d{x_{1M}} \\ d{x_{21}} & d{x_{22}} & \dots & d{x_{2M}} \\ \vdots & \vdots & \ddots & \vdots \\ d{x_{N1}} & d{x_{N2}} & \dots & d{x_{NM}} \end{pmatrix} \tag{91} \]

Consider the diagonal elements of the product of the transpose of matrix \((90)\) and matrix \((91)\):

\[ {({(\frac{\partial{f}}{\partial{X}})}^TdX)}_{jj} = \sum_i^N\frac{\partial{f}}{\partial{x_{ij}}}dx_{ij} \]

Therefore,

\[ tr({(\frac{\partial{f}}{\partial{X}})}^TdX) = \sum_j^M\sum_i^N\frac{\partial{f}}{\partial{x_{ij}}}dx_{ij} = df = tr(df) \tag{92} \]

The last equality holds because \(df\) is a scalar, and the trace of a scalar is the scalar itself.
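Equation (92) can be illustrated with a concrete scalar function. The sketch below assumes \(f(X)=\sum_{ij}x_{ij}^2\), whose gradient is \(2X\); the function, the matrices and the helper names are chosen for this example. It checks that the trace form equals the double sum and that both approximate the actual change in \(f\) for a small \(dX\).

```python
def transpose(M):
    return [list(col) for col in zip(*M)]

def matmul(P, Q):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*Q)] for row in P]

def trace(M):
    return sum(M[i][i] for i in range(len(M)))

def f(X):
    """Illustrative scalar function: sum of squared entries, so df/dX = 2X."""
    return sum(v * v for row in X for v in row)

X = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]               # N = 2, M = 3
dX = [[1e-6, -2e-6, 3e-6], [0.5e-6, 1e-6, -1e-6]]    # small perturbation
grad = [[2 * v for v in row] for row in X]           # df/dX

# Trace form tr((df/dX)^T dX) and the element-wise double sum from the definition.
df_trace = trace(matmul(transpose(grad), dX))
df_sum = sum(g * d for g_row, d_row in zip(grad, dX) for g, d in zip(g_row, d_row))

# Actual change of f, which agrees with df up to second-order terms in dX.
X_new = [[v + d for v, d in zip(x_row, d_row)] for x_row, d_row in zip(X, dX)]
df_actual = f(X_new) - f(X)

print(df_trace, df_sum, df_actual)
```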

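Some properties of matrix traces and derivatives

Here we list some properties of matrix traces and derivatives as a reference for the derivations that follow. Readers in a hurry can simply take them as given.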

\[ d(X + Y) = dX + dY \tag{93} \]

\[ d(XY) = (dX)Y + X(dY) \tag{94} \]

\[ dX^T = {(dX)}^T \tag{95} \]

\[ d(tr(X)) = tr(dX) \tag{96} \]

\[ d(X \odot Y) = dX \odot Y + X \odot dY \tag{97} \]

\[ d(f(X)) = f^{'}(X) \odot dX \tag{98} \]

\[ tr(XY) = tr(YX) \tag{99} \]

\[ tr(A^T (B \odot C)) = tr((A \odot B)^T C) \tag{100} \]

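The proofs of the properties above are all similar, so we take equation (94) as an example:

\[ Z = XY \]

Then any element of Z is

\[ z_{ij} = \sum_k x_{ik}y_{kj} \]

\[ dz_{ij} = \sum_k d(x_{ik}y_{kj}) = \sum_k (dx_{ik}) y_{kj} + \sum_k x_{ik} (dy_{kj}) = ((dX)Y)_{ij} + (X(dY))_{ij} \]

As the expression above shows, every element of \(dZ\) equals the corresponding element of \((dX)Y + X(dY)\). Therefore equation (94) holds.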
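The trace identities (99) and (100) are easy to spot-check on small matrices. The sketch below uses plain Python lists; the helper functions and the sample matrices are assumptions made for this illustration.

```python
def transpose(M):
    return [list(col) for col in zip(*M)]

def matmul(P, Q):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*Q)] for row in P]

def trace(M):
    return sum(M[i][i] for i in range(len(M)))

def hadamard(P, Q):
    """Element-wise product of two matrices of the same shape."""
    return [[p * q for p, q in zip(p_row, q_row)] for p_row, q_row in zip(P, Q)]

# Identity (99): tr(XY) = tr(YX), even when XY and YX have different sizes.
X = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]       # 2 x 3
Y = [[1.0, 0.0], [-1.0, 2.0], [0.5, 3.0]]    # 3 x 2
lhs_99 = trace(matmul(X, Y))
rhs_99 = trace(matmul(Y, X))

# Identity (100): tr(A^T (B ⊙ C)) = tr((A ⊙ B)^T C) for same-shape A, B, C.
A = [[1.0, 2.0], [3.0, 4.0]]
B = [[0.5, -1.0], [2.0, 0.0]]
C = [[-2.0, 1.0], [1.5, 3.0]]
lhs_100 = trace(matmul(transpose(A), hadamard(B, C)))
rhs_100 = trace(matmul(transpose(hadamard(A, B)), C))

print(lhs_99, rhs_99)
print(lhs_100, rhs_100)
```

Both sides of (100) reduce to the sum of \(a_{ij}b_{ij}c_{ij}\) over all entries, which is why the identity holds.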

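Proofs of formulas related to neural networks:

First, consider a general case. Given \(f = A^TXB\), where \(A,B\) are constant vectors, we want \(\frac{\partial{f}}{\partial{X}}\). The derivation is as follows.

By equation (94),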

\[ df = d(A^TXB) = d(A^TX)B + A^TX(dB) = d(A^TX)B + 0 = d(A^T)XB+A^TdXB = A^TdXB \]

Since \(df\) is a scalar, its trace equals itself; applying formula (99) as well:

\[ df = tr(df) = tr(A^TdXB) = tr(BA^TdX) \]

By formula (92):

\[ tr(df) = tr({(\frac{\partial{f}}{\partial{X}})}^TdX) \]

we obtain:

\[ (\frac{\partial{f}}{\partial{X}})^T = BA^T \]

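\[ \frac{\partial{f}}{\partial{X}} = AB^T \tag{101} \]

Now consider the fully connected layer:

\[ Y = WX + B\]

Take one element of the fully connected layer's output:

\[ y = wX + b\]

Here \(w\) is one row of the weight matrix, of size \(1 \times M\), X is a vector of size \(M \times 1\), and y is a scalar. Multiplying by an identity matrix of size 1 leaves the expression unchanged:

\[ y = (w^T)^TXI + b\]

Applying equation (101) with \(A=w^T\) and \(B=I\), we get

\[ \frac{\partial{y}}{\partial{X}} = w^TI^T = w^T\]

Therefore, in the four equations of error propagation, when the error \(\delta\) passed back from the layer above is propagated further, the chain rule gives

\[\delta^{L-1} = (W^L)^T \delta^L \odot \sigma^{'}(Z^{L - 1})\]

Similarly, if \(y=wX+b\) is viewed as:

\[ y = IwX + b \]

then applying equation (101) with \(w\) in the role of the variable, we get:

\[ \frac{\partial{y}}{\partial{w}} = X^T\]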

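When softmax and cross-entropy are used to compute the loss,

\[ l = - Y^Tlog(softmax(Z))\]

Here \(Y\) is the label of the data and \(Z\) is the network's predicted output; both \(Y\) and \(Z\) have dimension \(N \times 1\). Softmax turns \(Z\) into probabilities. We want \(\frac{\partial{l}}{\partial{Z}}\), and the derivation follows:

\[ softmax(Z) = \frac{exp(Z)}{\boldsymbol{1}^Texp(Z)} \]

Here \(\boldsymbol{1}\) is an all-ones vector of dimension \(N \times 1\). Substituting the softmax expression into the loss function gives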

\[ \begin{aligned} dl &= -Y^T d(log(softmax(Z))) \\ &= -Y^T d (log\frac{exp(Z)}{\boldsymbol{1}^Texp(Z)}) \\ &= -Y^T dZ + Y^T \boldsymbol{1}d(log(\boldsymbol{1}^Texp(Z))) \end{aligned} \tag{102} \]

Next we simplify the second half of equation (102), using equation (98):

\[ d(log(\boldsymbol{1}^Texp(Z))) = log^{'}(\boldsymbol{1}^Texp(Z)) \, d(\boldsymbol{1}^Texp(Z)) = \frac{\boldsymbol{1}^T(exp(Z)\odot dZ)}{\boldsymbol{1}^Texp(Z)} \]

Using equation (100), we get

\[ tr(Y^T \boldsymbol{1}\frac{\boldsymbol{1}^T(exp(Z)\odot dZ)}{\boldsymbol{1}^Texp(Z)}) = tr(Y^T \boldsymbol{1}\frac{(\boldsymbol{1} \odot exp(Z))^T dZ}{\boldsymbol{1}^Texp(Z)}) = tr(Y^T \boldsymbol{1}\frac{exp(Z)^T dZ}{\boldsymbol{1}^Texp(Z)}) = tr(Y^T \boldsymbol{1} softmax(Z)^TdZ) \tag{103} \]

Substituting equation (103) into equation (102) and taking the trace of both sides gives:

\[ dl = tr(dl) = tr(-Y^T dZ + Y^T\boldsymbol{1}softmax(Z)^TdZ) = tr((\frac{\partial{l}}{\partial{Z}})^TdZ) \]

In a classification problem, exactly one entry of a label is 1, so \(Y^T\boldsymbol{1} = 1\), and therefore

\[ \frac{\partial{l}}{\partial{Z}} = softmax(Z) - Y \]

This is the formula for the error that is backpropagated from the loss function.
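The result \(\frac{\partial{l}}{\partial{Z}} = softmax(Z) - Y\) can be confirmed numerically. The sketch below assumes a one-hot label and a three-class example; the logits, the helper names and the max-subtraction trick for numerical stability are choices made for this illustration, not part of the derivation.

```python
import math

def softmax(z):
    m = max(z)  # subtracting the max does not change the result but avoids overflow
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(z, y):
    """l = -Y^T log(softmax(Z)) for a label vector y."""
    p = softmax(z)
    return -sum(yi * math.log(pi) for yi, pi in zip(y, p))

def analytic_grad(z, y):
    """Gradient from the derivation: softmax(Z) - Y."""
    return [pi - yi for pi, yi in zip(softmax(z), y)]

def numeric_grad(z, y, h=1e-6):
    """Central-difference gradient of the loss with respect to each logit."""
    grad = []
    for i in range(len(z)):
        plus = z[:]
        minus = z[:]
        plus[i] += h
        minus[i] -= h
        grad.append((cross_entropy(plus, y) - cross_entropy(minus, y)) / (2 * h))
    return grad

z = [1.0, 2.0, 0.5]   # illustrative logits
y = [0.0, 1.0, 0.0]   # one-hot label
print(analytic_grad(z, y))
print(numeric_grad(z, y))
```

Since the softmax outputs and the one-hot label both sum to 1, the entries of the gradient sum to zero.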
