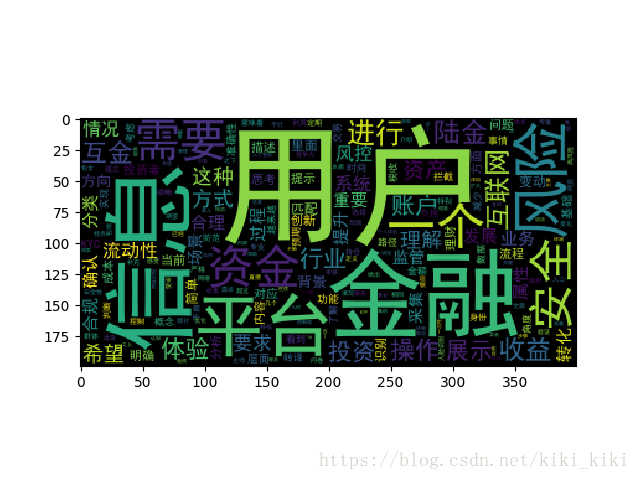

Word Frequency Statistics and Word Clouds with Python 3

阿新 • Published: 2018-12-22

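Background:

I came across an article that was a dense wall of text and wanted a quick way to pull out its keywords, so I tried doing it in Python 3.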

Code

import jieba

import numpy

import codecs

import pandas

import matplotlib.pyplot as plt

from wordcloud import WordCloud

file = codecs.open(r"ljs.txt", "r", "utf-8")  # assumes ljs.txt is UTF-8 encoded

content = file.read()

file.close()

segment=[]

segs=jieba.cut(content)

for seg in segs:

if
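The listing above breaks off inside the loop, so the original filtering condition is not known. As a rough, separate sketch of the same pipeline (segment the text, count the words, draw the cloud), something like the following could work. The helper names `tokenize`, `count_words`, and `render_cloud`, the `min_len` and `stopwords` parameters, and the output file name are all hypothetical and not from the original post. It also swaps in `collections.Counter` for counting, rather than whatever the author did with `pandas` and `numpy`. `jieba` and `wordcloud` are imported lazily, so the counting helper can be tried without them installed.

```python
from collections import Counter


def tokenize(text):
    """Segment Chinese text into words with jieba (third-party package)."""
    import jieba  # imported lazily; only needed for segmentation
    return list(jieba.cut(text))


def count_words(tokens, min_len=2, stopwords=frozenset()):
    """Count tokens, dropping whitespace, short tokens, and stopwords.

    min_len=2 is an assumed filter: single characters are mostly
    particles and punctuation, which rarely make useful keywords.
    """
    cleaned = (t.strip() for t in tokens)
    return Counter(
        t for t in cleaned
        if len(t) >= min_len and t not in stopwords
    )


def render_cloud(freqs, font_path, out_path="wordcloud.png"):
    """Draw a word cloud from a word -> count mapping and save it as an image.

    font_path must point to a font containing CJK glyphs; otherwise
    Chinese words are drawn as empty boxes.
    """
    from wordcloud import WordCloud  # imported lazily; third-party package
    wc = WordCloud(
        font_path=font_path,
        background_color="white",
        width=800,
        height=600,
    )
    wc.generate_from_frequencies(dict(freqs))
    wc.to_file(out_path)
    return out_path
```

With `content` read from the file as in the listing, the pieces would be chained as `freqs = count_words(tokenize(content))`, then `freqs.most_common(20)` to inspect the top keywords, and finally `render_cloud(freqs, font_path=...)` with the path of a locally installed Chinese font.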