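Deep Learning AI Beautification Series: AI Skin-Smoothing Algorithm, Part 1

First, a note on why this post is titled "AI Skin-Smoothing Algorithm, Part 1" rather than simply "AI Skin-Smoothing Algorithm".

There is no established definition of an AI skin-smoothing algorithm yet, and the major companies are all still exploring. The split here simply follows my own implementations: the algorithm in this post differs substantially from the one in the next post, "AI Skin-Smoothing Algorithm, Part 2", so I treat them separately.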

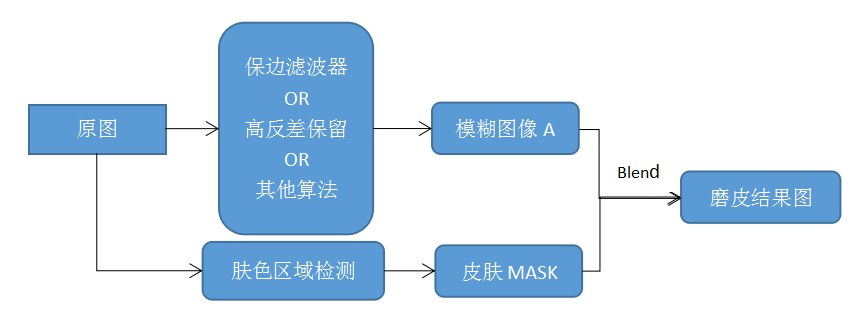

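Let's first look at the general pipeline of a skin-smoothing algorithm:

This flowchart shows the typical traditional skin-smoothing pipeline. This post builds on it and improves it with deep learning.

The pipeline has two main modules: a filtering module and a skin-region detection module.

The filtering module covers three kinds of algorithms:

1. Edge-preserving filtering

This approach smooths the image with filters that are able to preserve edges, so that the skin comes out smooth.

Filters of this kind include:

① Bilateral filter

② Guided filter

③ Surface Blur filter

④ Local mean filter

⑤ Weighted least squares filter (WLS filter)

⑥ Smart blur, and so on; see my blog for details.

With this approach the skin region comes out quite smooth with little detail, so detail has to be added back afterwards to retain some natural texture.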
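To make the idea of edge-preserving smoothing concrete, here is a minimal sketch of the first filter in the list, the bilateral filter. It is written in dependency-free Python on a small grayscale image stored as nested lists; the function name, parameter names and default values are my own illustrative choices, and a real pipeline would normally use an optimized library implementation instead.

```python
import math


def bilateral_filter(img, radius=2, sigma_space=2.0, sigma_range=25.0):
    """Bilateral-filter a grayscale image given as a list of rows of floats."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            center = img[y][x]
            total, norm = 0.0, 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)  # clamp at the border
                    xx = min(max(x + dx, 0), w - 1)
                    value = img[yy][xx]
                    # Spatial weight: nearby pixels count more.
                    ws = math.exp(-(dx * dx + dy * dy) / (2 * sigma_space ** 2))
                    # Range weight: pixels of similar intensity count more,
                    # which is what keeps strong edges from being blurred.
                    wr = math.exp(-((value - center) ** 2) / (2 * sigma_range ** 2))
                    total += ws * wr * value
                    norm += ws * wr
            out[y][x] = total / norm
    return out


def make_test_image(size=8):
    """Left half: noisy tone around 100. Right half: flat 200 (a step edge)."""
    rows = []
    for y in range(size):
        row = []
        for x in range(size):
            if x < size // 2:
                row.append(100.0 + (3.0 if (x + y) % 2 else -3.0))
            else:
                row.append(200.0)
        rows.append(row)
    return rows


noisy = make_test_image()
smooth = bilateral_filter(noisy)
print(round(smooth[3][1], 2), round(smooth[3][3], 2), round(smooth[3][4], 2))
```

The small checkerboard noise in the left half is averaged away, while the jump from about 100 to 200 stays sharp because pixels across the edge get a range weight close to zero.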

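2. High-pass attenuation

The high-pass attenuation algorithm uses a high-pass (high-contrast) layer to obtain a MASK of skin detail. Guided by the detail regions in the MASK, such as the locations of blemishes, it lightens the color of the corresponding regions in the original image, which weakens the blemishes and improves the look of the skin.

This approach keeps the texture while toning down skin flaws and spots, so the skin looks smooth and natural.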
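The high-pass idea can be sketched in a few lines. The version below is only one possible reading of it, not the exact method used in any product: the high-pass layer is taken as the image minus a box blur, a pixel is treated as a blemish when it is darker than its surroundings by more than `threshold`, and blemish pixels are pulled toward the local mean by `amount`. All names and default values here are assumptions for illustration.

```python
def box_blur(img, radius=1):
    """Mean filter on a grayscale image stored as a list of rows of floats."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc, n = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    acc += img[yy][xx]
                    n += 1
            out[y][x] = acc / n
    return out


def attenuate_spots(img, radius=2, threshold=5.0, amount=0.8):
    """Lighten pixels that are clearly darker than their neighbourhood."""
    blur = box_blur(img, radius)
    out = []
    for row, blur_row in zip(img, blur):
        new_row = []
        for value, mean in zip(row, blur_row):
            high_pass = value - mean          # negative where darker than surroundings
            is_spot = high_pass < -threshold  # MASK of dark detail (assumed rule)
            new_row.append(value + amount * (mean - value) if is_spot else value)
        out.append(new_row)
    return out


skin = [[180.0] * 7 for _ in range(7)]
skin[3][3] = 120.0  # a single dark blemish
result = attenuate_spots(skin)
print(round(result[3][3], 2), result[0][0])  # 166.08 180.0
```

Only the blemish pixel changes; everything else, including any fine texture below the threshold, is left as it was, which is the main difference from the filter-based approach.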

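3. Other algorithms

This covers algorithms I am not aware of, plus some known ones, for example skin smoothing based on both edge-preserving filtering and high-pass processing, which applies both to the original image.

Skin-region detection module

The skin detection commonly used today is mainly statistical modelling of skin color in a color space.

This approach has a high false-positive rate: it tends to classify skin-like colors as skin, so non-skin image regions get smoothed by the filter. In other words, regions that should not be smoothed end up blurred.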
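The false-positive problem is easy to reproduce. The sketch below uses a commonly cited fixed-threshold rule in the YCbCr color space (Cb in [77, 127], Cr in [133, 173]) with the JPEG-style RGB to YCbCr conversion. This rule is my assumption of a typical color-space detector, not necessarily the one used in any particular product, and the sample colors are made up for illustration.

```python
def rgb_to_cbcr(r, g, b):
    """Chroma components of the full-range (JPEG-style) RGB -> YCbCr transform."""
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return cb, cr


def is_skin(r, g, b, cb_range=(77, 127), cr_range=(133, 173)):
    """Fixed-threshold skin test on the chroma plane (assumed thresholds)."""
    cb, cr = rgb_to_cbcr(r, g, b)
    return cb_range[0] <= cb <= cb_range[1] and cr_range[0] <= cr <= cr_range[1]


print(is_skin(224, 172, 138))  # a typical skin tone -> True
print(is_skin(150, 100, 60))   # light-brown hair or wood -> True (false positive)
print(is_skin(80, 130, 220))   # blue sky -> False
```

The second color is not skin at all, but it lands inside the same chroma box, so a smoothing filter driven by this mask would blur it too.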

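Now for the key point: we use deep learning within the traditional pipeline to improve smoothing quality. For example, deep-learning skin segmentation yields a more accurate skin region, so the final result surpasses that of the traditional algorithm.

Next, we introduce deep-learning-based skin-region segmentation: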

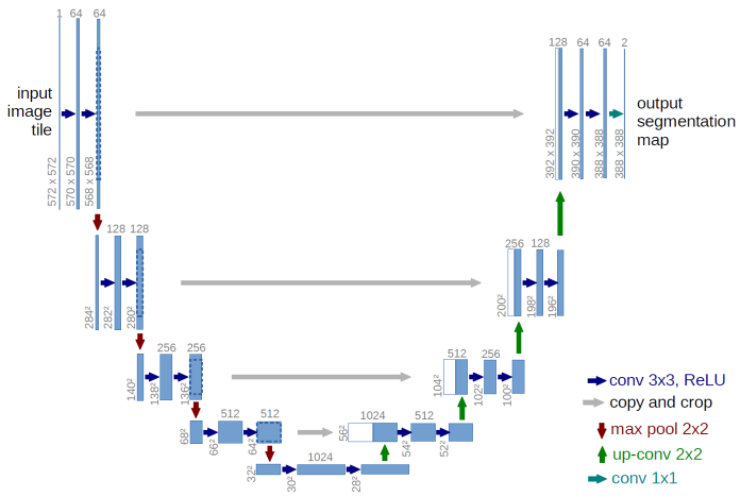

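There are many segmentation approaches, such as CNN, FCN, UNet and DenseNet. Here we use UNet for skin segmentation.

For image segmentation with UNet, see the paper "U-Net: Convolutional Networks for Biomedical Image Segmentation".

Its original network model is as follows:

This is a fully convolutional network: both input and output are images and there are no fully connected layers. The shallower, high-resolution layers handle pixel localization, while the deeper layers handle pixel classification.

The left side performs convolution and downsampling while keeping each intermediate result. The right side upsamples and fuses each upsampled result with the corresponding result from the left side, which improves segmentation quality.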
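To see how the two sides fit together, the following sketch traces feature-map sizes through a symmetric UNet without building any layers. The filter widths (32 up to 1024) are taken from the Keras network shown later in this post; the function names are hypothetical helpers of my own. It also includes the standard parameter-count formula for a convolution with bias, which can be checked against a Keras model summary.

```python
def unet_shapes(size=256, filters=(32, 64, 128, 256, 512), center=1024):
    """Trace (stage, resolution, channels) through a symmetric UNet."""
    trace, skips = [], []
    for f in filters:
        trace.append(("down", size, f))
        skips.append((size, f))  # kept for the skip connection
        size //= 2               # 2x2 max pooling halves the resolution
    trace.append(("center", size, center))
    channels = center
    for skip_size, skip_channels in reversed(skips):
        size *= 2                 # 2x upsampling doubles the resolution
        assert size == skip_size  # resolutions must match to concatenate
        trace.append(("concat", size, skip_channels + channels))
        channels = skip_channels  # the following convolutions reduce the width
        trace.append(("up", size, channels))
    return trace


def conv_params(kernel, in_channels, out_channels):
    """Weights plus biases of a kernel x kernel convolution."""
    return kernel * kernel * in_channels * out_channels + out_channels


for stage, resolution, channels in unet_shapes():
    print(f"{stage:7s} {resolution:3d}x{resolution:<3d} {channels}")

print(conv_params(3, 3, 32))      # first 3x3 convolution on an RGB input: 896
print(conv_params(3, 1536, 512))  # first convolution after the deepest concat: 7078400
```

The concatenated widths come out as 1536, 768, 384, 192 and 96, which is the encoder width at that level plus the width of the upsampled decoder feature map.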

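The original network is not symmetric between its left and right sides. Later UNet variants are essentially symmetric in image resolution. This post implements one in Keras, with the following network structure: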

| Layer (type) | Output Shape | Param # | Connected to |
| --- | --- | --- | --- |
| input_1 (InputLayer) | (None, 256, 256, 3) | 0 | |
| conv2d_1 (Conv2D) | (None, 256, 256, 32) | 896 | input_1[0][0] |
| batch_normalization_1 (BatchNormalization) | (None, 256, 256, 32) | 128 | conv2d_1[0][0] |
| activation_1 (Activation) | (None, 256, 256, 32) | 0 | batch_normalization_1[0][0] |
| conv2d_2 (Conv2D) | (None, 256, 256, 32) | 9248 | activation_1[0][0] |
| batch_normalization_2 (BatchNormalization) | (None, 256, 256, 32) | 128 | conv2d_2[0][0] |
| activation_2 (Activation) | (None, 256, 256, 32) | 0 | batch_normalization_2[0][0] |
| max_pooling2d_1 (MaxPooling2D) | (None, 128, 128, 32) | 0 | activation_2[0][0] |
| conv2d_3 (Conv2D) | (None, 128, 128, 64) | 18496 | max_pooling2d_1[0][0] |
| batch_normalization_3 (BatchNormalization) | (None, 128, 128, 64) | 256 | conv2d_3[0][0] |
| activation_3 (Activation) | (None, 128, 128, 64) | 0 | batch_normalization_3[0][0] |
| conv2d_4 (Conv2D) | (None, 128, 128, 64) | 36928 | activation_3[0][0] |
| batch_normalization_4 (BatchNormalization) | (None, 128, 128, 64) | 256 | conv2d_4[0][0] |
| activation_4 (Activation) | (None, 128, 128, 64) | 0 | batch_normalization_4[0][0] |
| max_pooling2d_2 (MaxPooling2D) | (None, 64, 64, 64) | 0 | activation_4[0][0] |
| conv2d_5 (Conv2D) | (None, 64, 64, 128) | 73856 | max_pooling2d_2[0][0] |
| batch_normalization_5 (BatchNormalization) | (None, 64, 64, 128) | 512 | conv2d_5[0][0] |
| activation_5 (Activation) | (None, 64, 64, 128) | 0 | batch_normalization_5[0][0] |
| conv2d_6 (Conv2D) | (None, 64, 64, 128) | 147584 | activation_5[0][0] |
| batch_normalization_6 (BatchNormalization) | (None, 64, 64, 128) | 512 | conv2d_6[0][0] |
| activation_6 (Activation) | (None, 64, 64, 128) | 0 | batch_normalization_6[0][0] |
| max_pooling2d_3 (MaxPooling2D) | (None, 32, 32, 128) | 0 | activation_6[0][0] |
| conv2d_7 (Conv2D) | (None, 32, 32, 256) | 295168 | max_pooling2d_3[0][0] |
| batch_normalization_7 (BatchNormalization) | (None, 32, 32, 256) | 1024 | conv2d_7[0][0] |
| activation_7 (Activation) | (None, 32, 32, 256) | 0 | batch_normalization_7[0][0] |
| conv2d_8 (Conv2D) | (None, 32, 32, 256) | 590080 | activation_7[0][0] |
| batch_normalization_8 (BatchNormalization) | (None, 32, 32, 256) | 1024 | conv2d_8[0][0] |
| activation_8 (Activation) | (None, 32, 32, 256) | 0 | batch_normalization_8[0][0] |
| max_pooling2d_4 (MaxPooling2D) | (None, 16, 16, 256) | 0 | activation_8[0][0] |
| conv2d_9 (Conv2D) | (None, 16, 16, 512) | 1180160 | max_pooling2d_4[0][0] |
| batch_normalization_9 (BatchNormalization) | (None, 16, 16, 512) | 2048 | conv2d_9[0][0] |
| activation_9 (Activation) | (None, 16, 16, 512) | 0 | batch_normalization_9[0][0] |
| conv2d_10 (Conv2D) | (None, 16, 16, 512) | 2359808 | activation_9[0][0] |
| batch_normalization_10 (BatchNormalization) | (None, 16, 16, 512) | 2048 | conv2d_10[0][0] |
| activation_10 (Activation) | (None, 16, 16, 512) | 0 | batch_normalization_10[0][0] |
| max_pooling2d_5 (MaxPooling2D) | (None, 8, 8, 512) | 0 | activation_10[0][0] |
| conv2d_11 (Conv2D) | (None, 8, 8, 1024) | 4719616 | max_pooling2d_5[0][0] |
| batch_normalization_11 (BatchNormalization) | (None, 8, 8, 1024) | 4096 | conv2d_11[0][0] |
| activation_11 (Activation) | (None, 8, 8, 1024) | 0 | batch_normalization_11[0][0] |
| conv2d_12 (Conv2D) | (None, 8, 8, 1024) | 9438208 | activation_11[0][0] |
| batch_normalization_12 (BatchNormalization) | (None, 8, 8, 1024) | 4096 | conv2d_12[0][0] |
| activation_12 (Activation) | (None, 8, 8, 1024) | 0 | batch_normalization_12[0][0] |
| up_sampling2d_1 (UpSampling2D) | (None, 16, 16, 1024) | 0 | activation_12[0][0] |
| concatenate_1 (Concatenate) | (None, 16, 16, 1536) | 0 | activation_10[0][0], up_sampling2d_1[0][0] |
| conv2d_13 (Conv2D) | (None, 16, 16, 512) | 7078400 | concatenate_1[0][0] |
| batch_normalization_13 (BatchNormalization) | (None, 16, 16, 512) | 2048 | conv2d_13[0][0] |
| activation_13 (Activation) | (None, 16, 16, 512) | 0 | batch_normalization_13[0][0] |
| conv2d_14 (Conv2D) | (None, 16, 16, 512) | 2359808 | activation_13[0][0] |
| batch_normalization_14 (BatchNormalization) | (None, 16, 16, 512) | 2048 | conv2d_14[0][0] |
| activation_14 (Activation) | (None, 16, 16, 512) | 0 | batch_normalization_14[0][0] |
| conv2d_15 (Conv2D) | (None, 16, 16, 512) | 2359808 | activation_14[0][0] |
| batch_normalization_15 (BatchNormalization) | (None, 16, 16, 512) | 2048 | conv2d_15[0][0] |
| activation_15 (Activation) | (None, 16, 16, 512) | 0 | batch_normalization_15[0][0] |
| up_sampling2d_2 (UpSampling2D) | (None, 32, 32, 512) | 0 | activation_15[0][0] |
| concatenate_2 (Concatenate) | (None, 32, 32, 768) | 0 | activation_8[0][0], up_sampling2d_2[0][0] |
| conv2d_16 (Conv2D) | (None, 32, 32, 256) | 1769728 | concatenate_2[0][0] |
| batch_normalization_16 (BatchNormalization) | (None, 32, 32, 256) | 1024 | conv2d_16[0][0] |
| activation_16 (Activation) | (None, 32, 32, 256) | 0 | batch_normalization_16[0][0] |
| conv2d_17 (Conv2D) | (None, 32, 32, 256) | 590080 | activation_16[0][0] |
| batch_normalization_17 (BatchNormalization) | (None, 32, 32, 256) | 1024 | conv2d_17[0][0] |
| activation_17 (Activation) | (None, 32, 32, 256) | 0 | batch_normalization_17[0][0] |
| conv2d_18 (Conv2D) | (None, 32, 32, 256) | 590080 | activation_17[0][0] |
| batch_normalization_18 (BatchNormalization) | (None, 32, 32, 256) | 1024 | conv2d_18[0][0] |
| activation_18 (Activation) | (None, 32, 32, 256) | 0 | batch_normalization_18[0][0] |
| up_sampling2d_3 (UpSampling2D) | (None, 64, 64, 256) | 0 | activation_18[0][0] |
| concatenate_3 (Concatenate) | (None, 64, 64, 384) | 0 | activation_6[0][0], up_sampling2d_3[0][0] |
| conv2d_19 (Conv2D) | (None, 64, 64, 128) | 442496 | concatenate_3[0][0] |
| batch_normalization_19 (BatchNormalization) | (None, 64, 64, 128) | 512 | conv2d_19[0][0] |
| activation_19 (Activation) | (None, 64, 64, 128) | 0 | batch_normalization_19[0][0] |
| conv2d_20 (Conv2D) | (None, 64, 64, 128) | 147584 | activation_19[0][0] |
| batch_normalization_20 (BatchNormalization) | (None, 64, 64, 128) | 512 | conv2d_20[0][0] |
| activation_20 (Activation) | (None, 64, 64, 128) | 0 | batch_normalization_20[0][0] |
| conv2d_21 (Conv2D) | (None, 64, 64, 128) | 147584 | activation_20[0][0] |
| batch_normalization_21 (BatchNormalization) | (None, 64, 64, 128) | 512 | conv2d_21[0][0] |
| activation_21 (Activation) | (None, 64, 64, 128) | 0 | batch_normalization_21[0][0] |
| up_sampling2d_4 (UpSampling2D) | (None, 128, 128, 128) | 0 | activation_21[0][0] |
| concatenate_4 (Concatenate) | (None, 128, 128, 192) | 0 | activation_4[0][0], up_sampling2d_4[0][0] |
| conv2d_22 (Conv2D) | (None, 128, 128, 64) | 110656 | concatenate_4[0][0] |
| batch_normalization_22 (BatchNormalization) | (None, 128, 128, 64) | 256 | conv2d_22[0][0] |
| activation_22 (Activation) | (None, 128, 128, 64) | 0 | batch_normalization_22[0][0] |
| conv2d_23 (Conv2D) | (None, 128, 128, 64) | 36928 | activation_22[0][0] |
| batch_normalization_23 (BatchNormalization) | (None, 128, 128, 64) | 256 | conv2d_23[0][0] |
| activation_23 (Activation) | (None, 128, 128, 64) | 0 | batch_normalization_23[0][0] |
| conv2d_24 (Conv2D) | (None, 128, 128, 64) | 36928 | activation_23[0][0] |
| batch_normalization_24 (BatchNormalization) | (None, 128, 128, 64) | 256 | conv2d_24[0][0] |
| activation_24 (Activation) | (None, 128, 128, 64) | 0 | batch_normalization_24[0][0] |
| up_sampling2d_5 (UpSampling2D) | (None, 256, 256, 64) | 0 | activation_24[0][0] |
| concatenate_5 (Concatenate) | (None, 256, 256, 96) | 0 | activation_2[0][0], up_sampling2d_5[0][0] |
| conv2d_25 (Conv2D) | (None, 256, 256, 32) | 27680 | concatenate_5[0][0] |
| batch_normalization_25 (BatchNormalization) | (None, 256, 256, 32) | 128 | conv2d_25[0][0] |
| activation_25 (Activation) | (None, 256, 256, 32) | 0 | batch_normalization_25[0][0] |
| conv2d_26 (Conv2D) | (None, 256, 256, 32) | 9248 | activation_25[0][0] |
| batch_normalization_26 (BatchNormalization) | (None, 256, 256, 32) | 128 | conv2d_26[0][0] |
| activation_26 (Activation) | (None, 256, 256, 32) | 0 | batch_normalization_26[0][0] |
| conv2d_27 (Conv2D) | (None, 256, 256, 32) | 9248 | activation_26[0][0] |
| batch_normalization_27 (BatchNormalization) | (None, 256, 256, 32) | 128 | conv2d_27[0][0] |
| activation_27 (Activation) | (None, 256, 256, 32) | 0 | batch_normalization_27[0][0] |
| conv2d_28 (Conv2D) | (None, 256, 256, 1) | 33 | activation_27[0][0] |

The UNet network code is as follows:

    from keras.models import Model
    from keras.layers import (Input, Conv2D, BatchNormalization, Activation,
                              MaxPooling2D, UpSampling2D, concatenate)


    def get_unet_256(input_shape=(256, 256, 3), num_classes=1):
        inputs = Input(shape=input_shape)

        # Encoder level 0: 256x256
        down0 = Conv2D(32, (3, 3), padding='same')(inputs)
        down0 = BatchNormalization()(down0)
        down0 = Activation('relu')(down0)
        down0 = Conv2D(32, (3, 3), padding='same')(down0)
        down0 = BatchNormalization()(down0)
        down0 = Activation('relu')(down0)
        down0_pool = MaxPooling2D((2, 2), strides=(2, 2))(down0)

        # Encoder level 1: 128x128
        down1 = Conv2D(64, (3, 3), padding='same')(down0_pool)
        down1 = BatchNormalization()(down1)
        down1 = Activation('relu')(down1)
        down1 = Conv2D(64, (3, 3), padding='same')(down1)
        down1 = BatchNormalization()(down1)
        down1 = Activation('relu')(down1)
        down1_pool = MaxPooling2D((2, 2), strides=(2, 2))(down1)

        # Encoder level 2: 64x64
        down2 = Conv2D(128, (3, 3), padding='same')(down1_pool)
        down2 = BatchNormalization()(down2)
        down2 = Activation('relu')(down2)
        down2 = Conv2D(128, (3, 3), padding='same')(down2)
        down2 = BatchNormalization()(down2)
        down2 = Activation('relu')(down2)
        down2_pool = MaxPooling2D((2, 2), strides=(2, 2))(down2)

        # Encoder level 3: 32x32
        down3 = Conv2D(256, (3, 3), padding='same')(down2_pool)
        down3 = BatchNormalization()(down3)
        down3 = Activation('relu')(down3)
        down3 = Conv2D(256, (3, 3), padding='same')(down3)
        down3 = BatchNormalization()(down3)
        down3 = Activation('relu')(down3)
        down3_pool = MaxPooling2D((2, 2), strides=(2, 2))(down3)

        # Encoder level 4: 16x16
        down4 = Conv2D(512, (3, 3), padding='same')(down3_pool)
        down4 = BatchNormalization()(down4)
        down4 = Activation('relu')(down4)
        down4 = Conv2D(512, (3, 3), padding='same')(down4)
        down4 = BatchNormalization()(down4)
        down4 = Activation('relu')(down4)
        down4_pool = MaxPooling2D((2, 2), strides=(2, 2))(down4)

        # Center (bottleneck): 8x8
        center = Conv2D(1024, (3, 3), padding='same')(down4_pool)
        center = BatchNormalization()(center)
        center = Activation('relu')(center)
        center = Conv2D(1024, (3, 3), padding='same')(center)
        center = BatchNormalization()(center)
        center = Activation('relu')(center)

        # Decoder level 4: upsample to 16x16 and fuse with down4
        up4 = UpSampling2D((2, 2))(center)
        up4 = concatenate([down4, up4], axis=3)
        up4 = Conv2D(512, (3, 3), padding='same')(up4)
        up4 = BatchNormalization()(up4)
        up4 = Activation('relu')(up4)
        up4 = Conv2D(512, (3, 3), padding='same')(up4)
        up4 = BatchNormalization()(up4)
        up4 = Activation('relu')(up4)
        up4 = Conv2D(512, (3, 3), padding='same')(up4)
        up4 = BatchNormalization()(up4)
        up4 = Activation('relu')(up4)

        # Decoder level 3: upsample to 32x32 and fuse with down3
        up3 = UpSampling2D((2, 2))(up4)
        up3 = concatenate([down3, up3], axis=3)
        up3 = Conv2D(256, (3, 3), padding='same')(up3)
        up3 = BatchNormalization()(up3)
        up3 = Activation('relu')(up3)
        up3 = Conv2D(256, (3, 3), padding='same')(up3)
        up3 = BatchNormalization()(up3)
        up3 = Activation('relu')(up3)
        up3 = Conv2D(256, (3, 3), padding='same')(up3)
        up3 = BatchNormalization()(up3)
        up3 = Activation('relu')(up3)

        # Decoder level 2: upsample to 64x64 and fuse with down2
        up2 = UpSampling2D((2, 2))(up3)
        up2 = concatenate([down2, up2], axis=3)
        up2 = Conv2D(128, (3, 3), padding='same')(up2)
        up2 = BatchNormalization()(up2)
        up2 = Activation('relu')(up2)
        up2 = Conv2D(128, (3, 3), padding='same')(up2)
        up2 = BatchNormalization()(up2)
        up2 = Activation('relu')(up2)
        up2 = Conv2D(128, (3, 3), padding='same')(up2)
        up2 = BatchNormalization()(up2)
        up2 = Activation('relu')(up2)

        # Decoder level 1: upsample to 128x128 and fuse with down1
        up1 = UpSampling2D((2, 2))(up2)
        up1 = concatenate([down1, up1], axis=3)
        up1 = Conv2D(64, (3, 3), padding='same')(up1)
        up1 = BatchNormalization()(up1)
        up1 = Activation('relu')(up1)
        up1 = Conv2D(64, (3, 3), padding='same')(up1)
        up1 = BatchNormalization()(up1)
        up1 = Activation('relu')(up1)
        up1 = Conv2D(64, (3, 3), padding='same')(up1)
        up1 = BatchNormalization()(up1)
        up1 = Activation('relu')(up1)

        # Decoder level 0: upsample to 256x256 and fuse with down0
        up0 = UpSampling2D((2, 2))(up1)
        up0 = concatenate([down0, up0], axis=3)
        up0 = Conv2D(32, (3, 3), padding='same')(up0)
        up0 = BatchNormalization()(up0)
        up0 = Activation('relu')(up0)
        up0 = Conv2D(32, (3, 3), padding='same')(up0)
        up0 = BatchNormalization()(up0)
        up0 = Activation('relu')(up0)
        up0 = Conv2D(32, (3, 3), padding='same')(up0)
        up0 = BatchNormalization()(up0)
        up0 = Activation('relu')(up0)

        # 1x1 convolution with sigmoid: per-pixel skin probability at 256x256
        classify = Conv2D(num_classes, (1, 1), activation='sigmoid')(up0)

        model = Model(inputs=inputs, outputs=classify)

        # model.compile(optimizer=RMSprop(lr=0.0001), loss=bce_dice_loss, metrics=[dice_coeff])

        return model

The input is a 256×256×3 color image and the output is a 256×256×1 MASK. The training parameters are as follows:

    model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

    model.fit(image_train, label_train, epochs=100, verbose=1,
              validation_split=0.2, shuffle=True, batch_size=8)

The results are shown below:

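In my training set, the whole face region was labelled as skin, so the facial features are not excluded. To get a skin region without the facial features, just swap in correspondingly labelled samples.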
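Since the network ends in a sigmoid, its output is a per-pixel probability rather than a hard mask. The sketch below shows one way to binarize such an output and score it against a labelled mask with the Dice coefficient. The commented-out compile line in the network code mentions a `dice_coeff` metric whose implementation is not shown in this post, so the version here is only the standard textbook definition; the 0.5 threshold and the tiny example arrays are my assumptions.

```python
def binarize(prob_mask, threshold=0.5):
    """Turn per-pixel probabilities into a 0/1 mask."""
    return [[1 if p >= threshold else 0 for p in row] for row in prob_mask]


def dice_coefficient(pred, target, eps=1e-7):
    """Dice = 2 * |pred AND target| / (|pred| + |target|) for 0/1 masks."""
    inter = sum(p * t for pr, tr in zip(pred, target) for p, t in zip(pr, tr))
    total = sum(map(sum, pred)) + sum(map(sum, target))
    return (2.0 * inter + eps) / (total + eps)


predicted_prob = [[0.9, 0.8, 0.2],
                  [0.7, 0.4, 0.1]]
label = [[1, 1, 0],
         [1, 1, 0]]

predicted_mask = binarize(predicted_prob)
print(predicted_mask)                                     # [[1, 1, 0], [1, 0, 0]]
print(round(dice_coefficient(predicted_mask, label), 4))  # 0.8571
```

Unlike plain accuracy, the Dice score is not inflated by large background areas, which is useful when the face covers only a small part of the image.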

With an accurate skin region we can update the skin-smoothing algorithm. Here is a set of results:

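As you can see, the traditional color-space-based skin-smoothing algorithm cannot reliably separate skin from skin-like regions, so it also smooths the hair and loses the hair texture. The UNet-segmentation-based algorithm separates skin from skin-like regions such as hair well, so the hair texture is preserved: smoothing is applied where it should be and nowhere else, and the result is clearly better than the traditional method.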
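The "smooth only where there is skin" step can be sketched as a per-pixel blend between the original image and its smoothed version, weighted by the segmentation mask. This is a minimal illustration under my own assumptions (grayscale nested lists, mask values in [0, 1], a hypothetical `blend_with_mask` helper), not the exact compositing used to produce the results in this post.

```python
def blend_with_mask(original, smoothed, mask):
    """Per pixel: out = mask * smoothed + (1 - mask) * original."""
    return [
        [m * s + (1.0 - m) * o for o, s, m in zip(o_row, s_row, m_row)]
        for o_row, s_row, m_row in zip(original, smoothed, mask)
    ]


original = [[100.0, 140.0], [60.0, 20.0]]  # bottom row: hair, should stay sharp
smoothed = [[110.0, 120.0], [40.0, 40.0]]  # output of any smoothing filter
mask = [[1.0, 0.5], [0.0, 0.0]]            # 1 = skin, 0 = not skin

result = blend_with_mask(original, smoothed, mask)
print(result)  # [[110.0, 130.0], [60.0, 20.0]]
```

Where the mask is 0 the original pixels pass through untouched, so hair keeps its texture no matter how strong the smoothing filter is. Soft mask values at the boundary avoid a visible seam.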

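Mainstream companies such as Meitu Xiuxiu (美圖秀秀) and Tiantian P Tu (天天P圖) already use deep-learning-based skin segmentation to improve smoothing quality. This is just a brief introduction to help you understand the idea better.

Of course, using deep learning to improve a traditional method is only one approach, which is why this post is titled "AI Skin-Smoothing Algorithm, Part 1". In Part 2, I will drop the traditional methods entirely and implement skin smoothing purely with deep learning.

Finally, the training samples I used come from the LFW dataset available online; search for it and you will find it easily. If you need precise samples that exclude the facial features, it is best to label them yourself. My QQ: 1358009172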