scrapy 爬取淘寶商品評論資訊

阿新 • • 發佈:2018-12-24

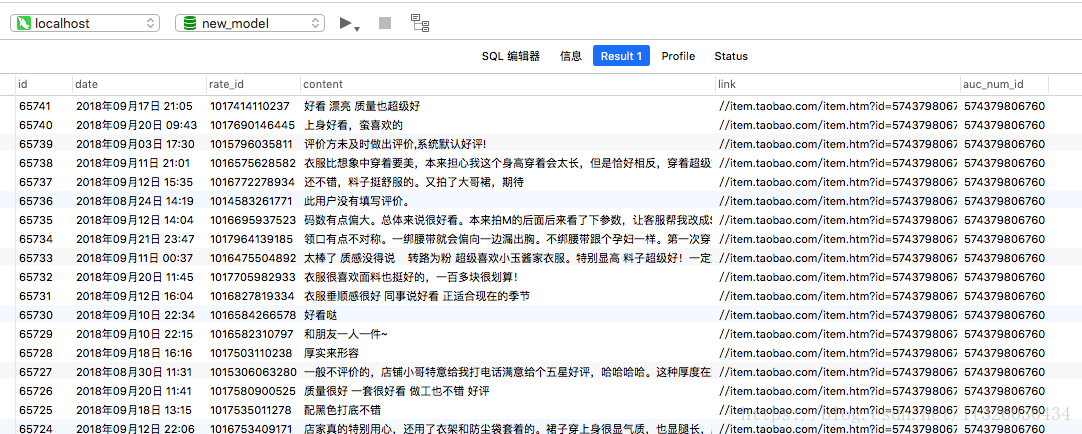

爬蟲最後要達到的效果,是將某分類下,第一頁的所有商品的評論儲存至mysql中。

具體會儲存評論日期、評論id、評論內容、商品連結和商品id。

爬蟲部分程式碼

# -*- coding: utf-8 -*- import scrapy import re import requests import math import json from scrapy.loader import ItemLoader from taobao_test.items import GoodsItem class LianyiqunSpider(scrapy.Spider): name = 'lianyiqun' allowed_domains = ['item.taobao.com', 'rate.taobao.com', 's.taobao.com' ] start_urls = [ '這裡填寫要抓取的商品分類主頁連結' ] # 解析"連衣裙"分類頁面(第一頁商品) def parse(self, response): # 獲取儲存列表用的script標籤 js_script = response.css('script::text')[4].extract() # 獲取儲存列表用的json g_page_config = re.findall('g_page_config = ([\s\S]*)g_srp_loadCss', js_script)[0] g_page_config_json = json.loads(g_page_config.strip()[0:-1]) # 訪問商品連結 auctions = g_page_config_json['mods']['itemlist']['data']['auctions'] for a in auctions: yield scrapy.Request(url="http://" + a['detail_url'], callback=self.goods_detail) # 解析商品資訊 def goods_detail(self, response): # 獲取到頁面渲染的第一個指令碼的資料結構 first_js_script = response.css('script::text')[0].extract() # 正則匹配到g_config欄位 g_config = re.findall('var g_config = ([\s\S]*)g_config\.tadInfo', first_js_script)[0] # 正則匹配,拿到頁面的評論url rate_counter_api = re.findall("rateCounterApi : '//(.*)',", g_config)[0] # 訪問獲取評論的url rate_count_response = requests.get("http://" + rate_counter_api) # 獲取評論數量 rate_count = re.findall('"count":(.*)}', rate_count_response.text)[0] # 拿到data_list_api_url,這個能夠匹配到域名 data_list_api_url = response.css('#reviews::attr(data-listapi)').extract()[0] # 獲取到評論的url feed_rate_list_url = re.findall('//(.*)\?', data_list_api_url)[0] # 寶貝id auttion_num_id = re.findall('auctionNumId=([\d]*)&', data_list_api_url)[0] # 設定一個值,一頁獲取的評論數量 page_size = 20 # 計算一共有多少頁的評論 pages = math.ceil(int(rate_count) / page_size) # 迭代一共有多少頁,然後分別請求每一頁評論 for current_page_number in range(1, pages): yield scrapy.Request(url="http://" + feed_rate_list_url + "?auctionNumId=" + auttion_num_id + "¤tPageNum=" + str(current_page_number) + "&pageSize=" + str(page_size), callback=self.parse_rate_list) # 解析具體的評論 def parse_rate_list(self, response): # 將響應資訊轉換成json格式 goods_rate_data_json = json.loads(response.text.strip()[1:-1]) # 獲取到具體的評論資訊,就是json資訊獲取 comments = goods_rate_data_json['comments'] for comment in comments: # ItemLoader方式 goods_item_loader = ItemLoader(item=GoodsItem(), response=response) # 評論時間 goods_item_loader.add_value('date', comment['date']) # 評論id goods_item_loader.add_value('rate_id', comment['rateId']) # 評論內容 goods_item_loader.add_value('content', comment['content']) auction = comment['auction'] # 商品的連結地址 goods_item_loader.add_value('link', auction['link']) # 商品id goods_item_loader.add_value('auc_num_id', auction['aucNumId']) yield goods_item_loader.load_item()

items程式碼

# -*- coding: utf-8 -*- import scrapy from scrapy.loader.processors import MapCompose from scrapy.loader.processors import Join def parse_field(text): return str(text).strip() class TaobaoTestItem(scrapy.Item): # define the fields for your item here like: # name = scrapy.Field() pass class GoodsItem(scrapy.Item): # 評論時間 date = scrapy.Field( input_processor=MapCompose(parse_field), output_processor=Join(), ) # 評論id rate_id = scrapy.Field( input_processor=MapCompose(parse_field), output_processor=Join(), ) # 評論內容 content = scrapy.Field( input_processor=MapCompose(parse_field), output_processor=Join(), ) # 商品連結 link = scrapy.Field( input_processor=MapCompose(parse_field), output_processor=Join(), ) # 商品id auc_num_id = scrapy.Field( input_processor=MapCompose(parse_field), output_processor=Join(), ) def get_insert_sql(self): insert_sql = """ insert into rate(date,rate_id,content,link,auc_num_id) values (%s,%s,%s,%s,%s) """ params = (self["date"], self["rate_id"], self["content"], self["link"], self["auc_num_id"]) return insert_sql, params

main程式碼

#coding:utf-8

from scrapy.cmdline import execute

import sys

import os

sys.path.append(os.path.dirname(os.path.abspath(__file__)))

execute(["scrapy", "crawl", "lianyiqun"])

pipelines程式碼

# -*- coding: utf-8 -*- # Define your item pipelines here # # Don't forget to add your pipeline to the ITEM_PIPELINES setting # See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html import codecs import json import MySQLdb.cursors from twisted.enterprise import adbapi import pymysql class TaobaoTestPipeline(object): def process_item(self, item, spider): return item class JsonPipeline(object): def __init__(self): self.file = codecs.open("jsondata.json", "w", encoding="utf-8") def process_item(self, item, spider): lines = json.dumps(dict(item), ensure_ascii=False) + "\n" self.file.write(lines) return item def spider_close(self): self.file.close() class writeMysql(object): def __init__(self): self.client = pymysql.connect( host='localhost', port=3306, user='root', passwd='123456', db='new_model', charset='utf8' ) self.cur = self.client.cursor() def process_item(self, item, spider): insert_sql, params = item.get_insert_sql() self.cur.execute(insert_sql, params) self.client.commit() return item def handle_error(self, failure, item, spider): print(failure)

原始碼以上傳至git,可點選直接檢視git地址,有疑問的同學可提Issues或在部落格下方留言~