Two Examples of Minimum Error Pruning (reprint)

The first example

Expected Error Pruning

Approximate expected error assuming that we prune at a particular node.

Approximate backed-up error from children assuming we did not prune.

If the expected error is less than the backed-up error, prune.

Expected Error

If we prune a node, it becomes a leaf labelled C.

What will be the expected classification error at this leaf?

E(S) = (N - n + k - 1) / (N + k)

(This is called the Laplace error estimate - it is based on the assumption that the distribution of probabilities that examples will belong to different classes is uniform.)

S is the set of examples in a node

k is the number of classes

N is the number of examples in S

C is the majority class in S

n is the number of examples in S (out of N) that belong to C
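A minimal sketch of the Laplace error estimate, using the symbols defined above and the usual form E(S) = (N - n + k - 1) / (N + k). The function name `laplace_error` is an assumption made for illustration and does not come from the original text.

```python
def laplace_error(N: int, n: int, k: int) -> float:
    """Laplace error estimate for a node turned into a leaf.

    N: number of examples in the node
    n: number of those examples that belong to the majority class C
    k: number of classes
    """
    return (N - n + k - 1) / (N + k)


# Values used later in the first example: N=6, n=4, k=2.
print(round(laplace_error(6, 4, 2), 3))  # 0.375
```

Note that a node with a single example still gets a non-zero estimate, since `laplace_error(1, 1, 2)` is 1/3.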

Backed-up Error

For a non-leaf node

Let the children of Node be Node1, Node2, etc.

BackedUpError(Node) = P1 × Error(Node1) + P2 × Error(Node2) + ...

The probabilities Pi can be estimated by the relative frequencies of attribute values in the sets of examples that fall into the child nodes.
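The backed-up error is a weighted sum of the children's errors, where each weight is the fraction of the node's examples that falls into that child. A small sketch follows; the function name `backed_up_error` and the `(count, error)` pair representation are assumptions made for illustration.

```python
def backed_up_error(children):
    """Weighted sum of child errors.

    children: list of (num_examples, error_estimate) pairs, one per child.
    Each child's weight P_i is its share of the parent's examples.
    """
    total = sum(count for count, _ in children)
    return sum(count / total * error for count, error in children)


# Two children holding 5 and 1 examples, with errors 3/7 and 1/3.
print(round(backed_up_error([(5, 3 / 7), (1, 1 / 3)]), 3))  # 0.413
```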

Pruning

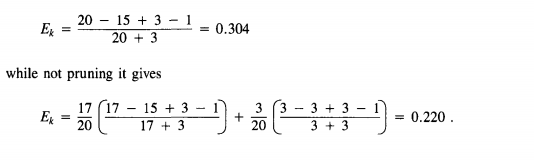

Error Calculation

Left child of b has class frequencies [3, 2], so its error is E = (5 - 3 + 2 - 1) / (5 + 2) = 0.429

Right child has error of 0.333, calculated in the same way

Static error estimate E(b) is 0.375, again calculated using the Laplace error estimate formula, with N=6, n=4, and k=2.

Backed-up error is:

BackedUpError(b) = (5/6) × 0.429 + (1/6) × 0.333 = 0.413

(5/6 and 1/6 because there are 4+2=6 examples handled by node b, of which 3+2=5 go to the left subtree and 1 to the right subtree.)

Since the backed-up estimate of 0.413 is greater than the static estimate of 0.375, we prune the tree and use the static error of 0.375.
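The whole calculation for node b can be reproduced with a short bottom-up pruning sketch. The `Node` class and the `static_error` and `prune` functions are hypothetical names introduced here, not code from the cited papers. The right child's class frequencies [1, 0] are inferred from the totals (node b holds [4, 2] and the left child [3, 2]); the figure itself is not reproduced in the text.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    counts: list                      # class frequencies of examples at this node
    children: list = field(default_factory=list)


def static_error(counts):
    """Laplace error estimate if the node were a leaf."""
    N, n, k = sum(counts), max(counts), len(counts)
    return (N - n + k - 1) / (N + k)


def prune(node):
    """Prune the subtree bottom-up and return the error kept for this node."""
    static = static_error(node.counts)
    if not node.children:
        return static
    N = sum(node.counts)
    # Weight each child's (already pruned) error by its share of the examples.
    backed_up = sum(sum(c.counts) / N * prune(c) for c in node.children)
    if static < backed_up:            # prune when the leaf estimate is lower
        node.children = []
        return static
    return backed_up


b = Node([4, 2], [Node([3, 2]), Node([1, 0])])
error = prune(b)
print(round(error, 3), b.children)  # 0.375 []
```

Because `prune` recurses before comparing, each child is already reduced to its best estimate by the time its parent is evaluated.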

The MEP pruning algorithm was introduced in

<Learning decision rules in noisy domains>

Niblett, T., Bratko, I. - Conference on Expert Systems - 1986

There are two versions of MEP; the one above is the earlier one.

The other one is described in

<On estimating probabilities in tree pruning>

The second example

Reference:

<An Empirical Comparison of Pruning Methods for Decision Tree Induction>