spark學習13之RDD的partitions數目獲取

阿新 • • 發佈:2019-01-07

1解釋

獲取RDD的partitions數目和index資訊

疑問:為什麼純文字的partitions數目與HDFS的block數目一樣,但是.gz的壓縮檔案的partitions數目卻為1?

2.程式碼:

sc.textFile("/xubo/GRCH38Sub/GRCH38L12566578.fna").partitions.lengthsc.textFile("/xubo/GRCH38Sub/GRCH38L12566578.fna.bwt").partitions.foreach(each=>println(each.index))spark1.6中可以直接獲取:

@Since("1.6.0") 3.結果:

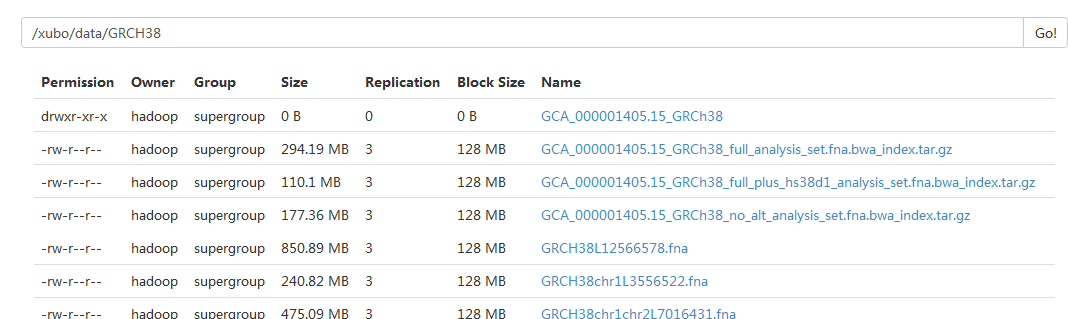

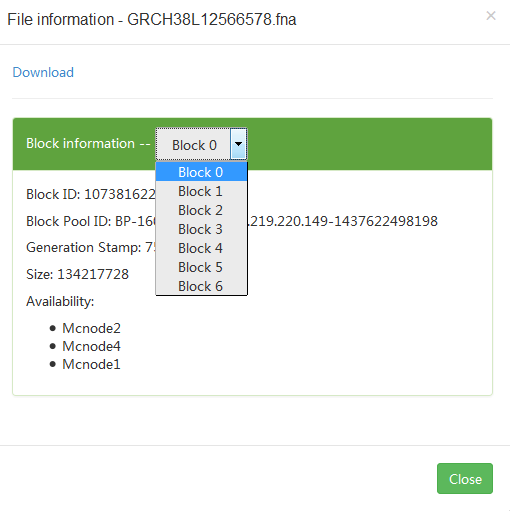

(1)第一個檔案

partitions數:

scala> sc.textFile("/xubo/GRCH38Sub/GRCH38L12566578.fna").partitions.length

res2: Int = 7

詳細資訊:

scala> sc.textFile("/xubo/GRCH38Sub/GRCH38L12566578.fna.bwt").partitions.foreach(each=>println(each.index))

0

1

2 (2)第二個檔案:

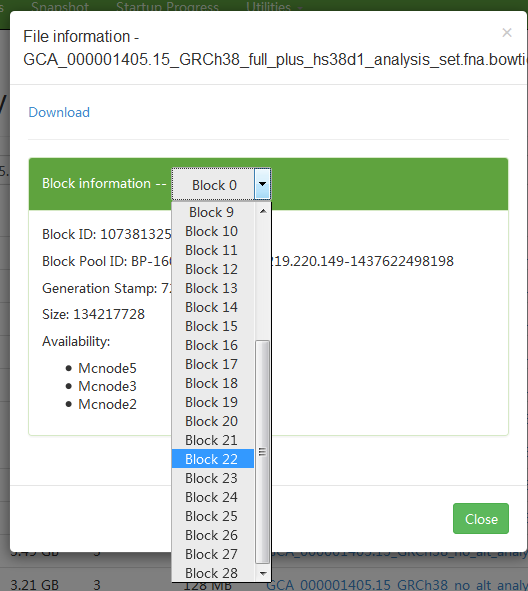

scala> sc.textFile(file).partitions.foreach(each=>println(each.index))

0

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

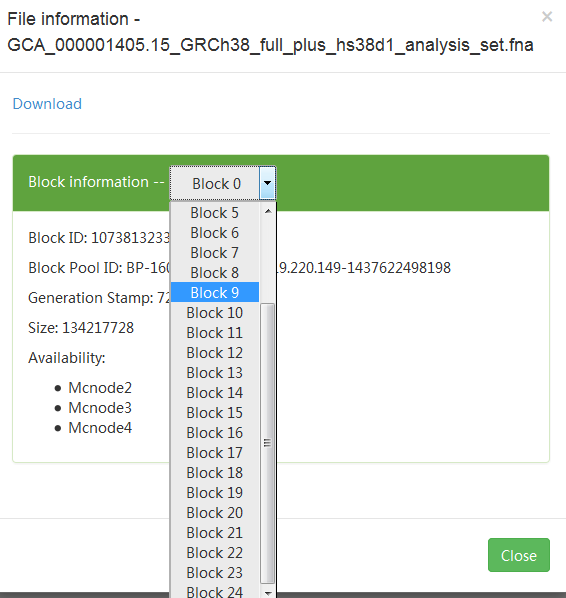

(3)第三個檔案:

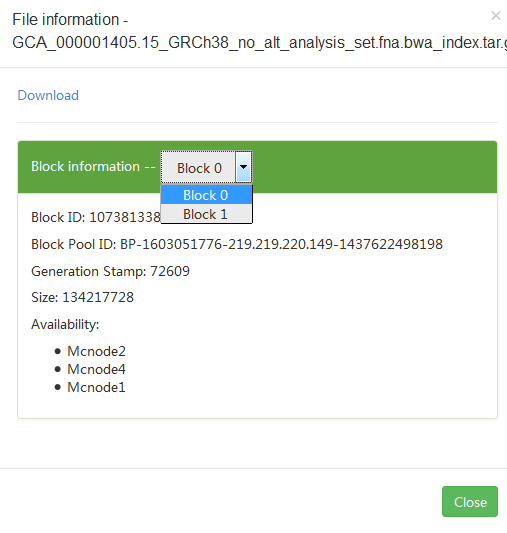

但是gz檔案: 大小差不多,但是partition卻為1

scala> sc.textFile("/xubo/data/GRCH38/GCA_000001405.15_GRCh38_full_analysis_set.fna.bwa_index.tar.gz").partitions index:

scala> sc.textFile("/xubo/data/GRCH38/GCA_000001405.15_GRCh38_full_analysis_set.fna.bwa_index.tar.gz").partitions.foreach(each=>println(each.index))

0

(4)大檔案(3G),同樣的:

scala> val file="/xubo/data/GRCH38/GCA_000001405.15_GRCh38/seqs_for_alignment_pipelines.ucsc_ids/GCA_000001405.15_GRCh38_full_plus_hs38d1_analysis_set.fna.bowtie_index.tar.gz"

file: String = /xubo/data/GRCH38/GCA_000001405.15_GRCh38/seqs_for_alignment_pipelines.ucsc_ids/GCA_000001405.15_GRCh38_full_plus_hs38d1_analysis_set.fna.bowtie_index.tar.gz

scala> sc.textFile(file).partitions.foreach(each=>println(each.index))

0

4.本來想在RDD加一個獲取partitions數量的函式或者屬性,但是已看程式碼,1.6中有人加了:

/**

* Returns the number of partitions of this RDD.

*/

@Since("1.6.0")

final def getNumPartitions: Int = partitions.length

目前不確定為什麼blocks數一樣,生成的partitions數不一樣的原因,所以有待學習