Spark from Getting Started to Giving Up: Running a JAR in Distributed Mode

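The Scala code is shown below.

Note: the build path needs more than spark-assembly-1.5.0-cdh5.5.4-hadoop2.6.0-cdh5.5.4.jar from Spark's lib folder. The JARs under Hadoop's share/hadoop directory have to be added as well. I am not sure exactly which of them are required, so I simply added all of them.

    import org.apache.spark.SparkConf
    import org.apache.spark.SparkContext
    import org.apache.spark.SparkContext._

    /**
     * Counts how many times each word occurs in a text file.
     */
    object WordCount {
      def main(args: Array[String]): Unit = {
        if (args.length < 1) {
          System.err.println("Usage: <file>")
          System.exit(1)
        }

        // Master URL and app name are not set here; they come from spark-submit.
        val conf = new SparkConf()
        val sc = new SparkContext(conf)
        val line = sc.textFile(args(0))

        // Split each line into words, pair every word with 1, then sum per word.
        line.flatMap(_.split(" ")).map((_, 1)).reduceByKey(_ + _).collect().foreach(println)

        sc.stop()
      }
    }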

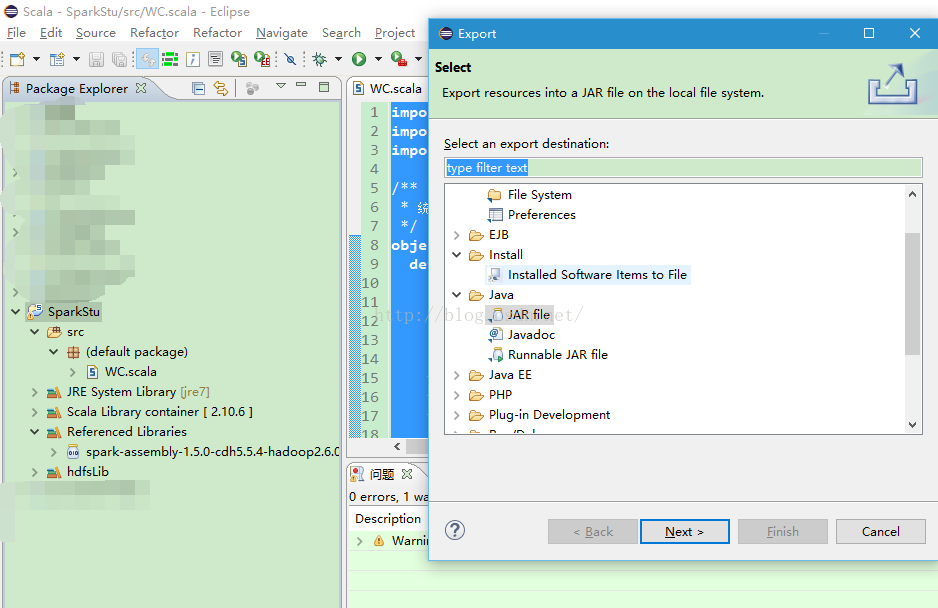

Use Eclipse to package the project as a JAR.

Note: unlike in Java, the name of a Scala object does not have to match the file name. For example, my object is named WordCount, but the file is WC.scala.
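As a small illustration of the note above, here is a sketch of a source file whose name differs from the object it defines. The names `Hello.scala` and `Greeter` are made up for this example and are not part of the original project.

```scala
// Saved as Hello.scala (hypothetical file name). The object inside is called
// Greeter, and the Scala compiler accepts the mismatch.
object Greeter {
  // Hypothetical helper used only for this illustration.
  def greet(name: String): String = s"Hello, $name"

  def main(args: Array[String]): Unit =
    println(greet("Spark"))
}
```

The compiled class is named after the object, not the file, so the object name is what gets passed to `--class` when the JAR is submitted.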

Upload the JAR to the server.

Check the contents of the test file on the server:

    -bash-4.1$ hadoop fs -cat /user/hdfs/test.txt
    張三 張四 張三 張五 李三 李三 李四 李四 李四 王二 老王 老王
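To get a feel for what the job should report for this file, the same split, pair, and sum steps can be mimicked on plain Scala collections, with no cluster involved. This is only a rough sketch: `LocalWordCount` and `countWords` are hypothetical names, `groupBy` stands in for Spark's `reduceByKey`, and the way the words are divided into lines is an assumption, since the listing above shows only the words.

```scala
// Local sketch of the word-count steps on plain Scala collections (no Spark needed).
object LocalWordCount {
  // Hypothetical helper: split into words, pair each with 1, sum the 1s per word.
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))   // every line becomes a sequence of words
      .map(word => (word, 1))  // each word is paired with a count of 1
      .groupBy(_._1)           // pairs that share a word are gathered together
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) }

  def main(args: Array[String]): Unit = {
    // Assumed line layout; only the words themselves come from test.txt.
    val lines = Seq(
      "張三 張四 張三 張五",
      "李三 李三 李四 李四 李四",
      "王二 老王 老王"
    )
    countWords(lines).foreach(println)
  }
}
```

For these twelve words the counts come out as 3 for 李四, 2 each for 張三, 李三 and 老王, and 1 each for 張四, 張五 and 王二. The order in which the pairs are printed is not guaranteed.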

Run the spark-submit command to submit the JAR:

    -bash-4.1$ spark-submit --class "WordCount" wc.jar /user/hdfs/test.txt
    16/08/22 15:54:17 INFO SparkContext: Running Spark version 1.5.0-cdh5.5.4
    16/08/22 15:54:18 INFO SecurityManager: Changing view acls to: hdfs
    16/08/22 15:54:18 INFO SecurityManager: Changing modify acls to: hdfs
    16/08/22 15:54:18 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(hdfs); users with modify permissions: Set(hdfs)
    16/08/22 15:54:19 INFO Slf4jLogger: Slf4jLogger started
    16/08/22 15:54:19 INFO Remoting: Starting remoting
    16/08/22 15:54:19 INFO Remoting: Remoting started; listening on addresses :[akka.tcp://

The job ran successfully.
