Classifying the Beijing PM2.5 Data Set with a TensorFlow DNN

阿新 • Published: 2019-01-29

This was a course assignment.

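The classification results are fairly poor. The problem is probably in the cross-entropy part; I will keep working on it, but the assignment gets handed in first.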
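One thing worth checking with a softmax cross-entropy loss is whether the values passed in as logits are raw, unclipped scores. The sketch below uses plain Python and made-up scores (nothing here comes from the actual model) to show what can happen when ReLU is applied to the scores first: if every score is negative, clipping turns them all into zeros, the softmax becomes uniform, and the loss is stuck at ln 4 for four classes.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(one_hot_label, scores):
    # Cross entropy between a one-hot label and softmax(scores).
    probs = softmax(scores)
    return -sum(y * math.log(p) for y, p in zip(one_hot_label, probs))

def relu(scores):
    return [max(0.0, s) for s in scores]

label = [0, 0, 1, 0]                    # true class: level 3
raw_scores = [-3.0, -2.0, -0.5, -4.0]   # made-up scores, all negative

loss_raw = cross_entropy(label, raw_scores)
loss_clipped = cross_entropy(label, relu(raw_scores))

print("loss on raw scores:    ", loss_raw)
print("loss on clipped scores:", loss_clipped)
```

With the raw scores the loss reflects that class 3 has the highest score; after clipping, that information is gone.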

A commenter reminded me that the hidden layers need activation functions!!
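Why the activation matters can be seen in a tiny example. The sketch below uses plain Python lists and made-up weights, and leaves out biases for brevity (all of these are assumptions for illustration). Two stacked linear layers without an activation give exactly the same output as a single layer whose weights are the product of the two, so the extra layer adds no expressive power. Putting a ReLU in between breaks that equivalence.

```python
def matmul(a, b):
    # Plain-Python matrix product of two lists of rows.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def relu(m):
    return [[max(0.0, v) for v in row] for row in m]

x = [[1.0, -2.0]]                  # one sample with two features
w1 = [[1.0, -1.0], [0.5, 2.0]]     # first layer weights (made up)
w2 = [[2.0], [-1.0]]               # second layer weights (made up)

# Two linear layers applied one after the other.
stacked = matmul(matmul(x, w1), w2)
# A single linear layer with the pre-multiplied weights.
collapsed = matmul(x, matmul(w1, w2))
# The same two layers with a ReLU between them.
with_relu = matmul(relu(matmul(x, w1)), w2)

print(stacked, collapsed, with_relu)
```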

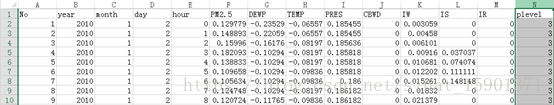

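The data source and related details were covered in the previous post on LSTM. The data had already been standardized when I built the LSTM model.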
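The earlier post is not reproduced here, so the exact scheme is an assumption. As a reminder of what standardization usually means, here is a minimal z-score sketch in plain Python: each column is shifted to zero mean and scaled to unit standard deviation. The function name `standardize` and the sample values are made up for illustration.

```python
import statistics

def standardize(column):
    # Z-score: subtract the mean, divide by the population standard deviation.
    mean = statistics.mean(column)
    std = statistics.pstdev(column)
    if std == 0:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in column]
    return [(v - mean) / std for v in column]

temps = [-4.0, 0.0, 4.0, 8.0]   # made-up temperature readings
scaled = standardize(temps)
print(scaled)
```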

For this post I modified the data set: the PM2.5 concentration is used to sort each record into a pollution level from 1 to 4.
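The post does not say which concentration thresholds separate the four levels, so the cut-offs below are purely hypothetical placeholders. With that caveat, the binning step and the one-hot encoding the network expects could be sketched like this (the names `LEVEL_BOUNDS`, `pollution_level` and `one_hot` are also made up for illustration):

```python
from bisect import bisect_left

# HYPOTHETICAL upper bounds for levels 1 to 3; anything above is level 4.
# The real thresholds used for the data set are not given in the post.
LEVEL_BOUNDS = [35.0, 75.0, 150.0]

def pollution_level(pm25):
    # bisect_left keeps a reading equal to a bound in the lower level.
    return bisect_left(LEVEL_BOUNDS, pm25) + 1

def one_hot(level, num_classes=4):
    # Level 1 -> [1, 0, 0, 0], level 2 -> [0, 1, 0, 0], and so on.
    row = [0] * num_classes
    row[level - 1] = 1
    return row

readings = [10.0, 35.0, 80.0, 500.0]   # made-up PM2.5 readings
levels = [pollution_level(r) for r in readings]
labels = [one_hot(lv) for lv in levels]
print(levels)
print(labels)
```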

Straight to the code. It certainly borrows from other people's work again, but this time I changed enough that you can hardly tell.

    # -*- coding: utf-8 -*-
    """
    Created on Mon Jun 11 19:57:29 2018

    @author: Administrator
    """
    import tensorflow as tf
    import pandas as pd
    import numpy as np

    # tf.placeholder and tf.Session are TensorFlow 1.x APIs.
    tf.reset_default_graph()

    # Two hidden layers are used here.
    hidden_unit_1 = 30
    hidden_unit_2 = 15
    batch_size = 72
    input_size = 7
    output_size = 4
    lr = 0.006

    # Read the CSV file.
    df = pd.read_csv('C:\\Users\\Administrator\\Desktop\\BJair\\BjAirDat5.csv',
                     encoding='UTF-8')

    # Use the data from 2010 to 2013 for training and the 2014 data for testing.
    weatherdata = df.iloc[0:39312, 6:13]      # 7 weather features (no PM2.5), training
    pollutiondata = df.iloc[0:39312, 13:14]   # pollution level, training
    weathertest = df.iloc[39312:, 6:13]       # 7 weather features (no PM2.5), testing
    pollutiontest = df.iloc[39312:, 13:14]    # pollution level, testing

    train_x = weatherdata.values
    test_x = weathertest.values


    def one_hot(levels):
        # Turn pollution levels 1-4 into one-hot rows, e.g. 2 -> [0, 1, 0, 0].
        idx = levels.values.ravel().astype(int) - 1
        return np.eye(output_size, dtype=np.float32)[idx]


    # Rebuild the labels for training and testing.
    train_y = one_hot(pollutiondata)
    # The test labels must come from pollutiontest, not pollutiondata.
    test_y = one_hot(pollutiontest)

    # Placeholders
    X = tf.placeholder(tf.float32, [None, input_size])    # input tensor
    Y = tf.placeholder(tf.float32, [None, output_size])   # one-hot label


    def layer(input_tensor, layer_input_size, layer_output_size, activation=None):
        # One fully connected layer: output = activation(input * w + b).
        # The dimensions are given by dnn(X); w and b are initialized randomly.
        w = tf.Variable(tf.random_normal([layer_input_size, layer_output_size]))
        b = tf.Variable(tf.random_normal([layer_output_size]))
        input_ = tf.reshape(input_tensor, [-1, layer_input_size])
        output = tf.matmul(input_, w) + b
        return activation(output) if activation is not None else output


    def dnn(X):
        # Two hidden layers with ReLU activations and one linear output layer.
        hidden_1 = layer(X, input_size, hidden_unit_1, tf.nn.relu)
        hidden_2 = layer(hidden_1, hidden_unit_1, hidden_unit_2, tf.nn.relu)
        # Raw scores: the loss applies softmax itself, so no activation here.
        logits = layer(hidden_2, hidden_unit_2, output_size)
        # Class probabilities, used for prediction.
        output_dnn = tf.nn.softmax(logits)
        return output_dnn, logits


    def train_dnn():
        print('start train dnn')
        _, logit = dnn(X)
        # Use cross entropy as the loss function.
        # Y is fed with train_y above, and logit comes from dnn(X).
        cross_entropy = tf.nn.softmax_cross_entropy_with_logits(labels=Y, logits=logit)
        loss = tf.reduce_mean(cross_entropy)
        # Use plain gradient descent.
        train_op = tf.train.GradientDescentOptimizer(lr).minimize(loss)
        saver = tf.train.Saver(tf.global_variables())
        with tf.Session() as sess:
            sess.run(tf.global_variables_initializer())
            for i in range(1000):
                step = 0
                start = 0
                end = start + batch_size
                # "<=" so that the last full batch is not skipped.
                while end <= len(train_x):
                    _, loss_ = sess.run([train_op, loss],
                                        feed_dict={X: train_x[start:end],
                                                   Y: train_y[start:end]})
                    start += batch_size
                    end += batch_size
                    if step % 200 == 0:
                        print('round: ', i, ' step: ', step, ' loss : ', loss_)
                    if step % 5000 == 0:
                        saver.save(sess, "C:\\Users\\Administrator\\Desktop\\moxing\\model.ckpt")
                        print('save model')
                    step += 1


    def prediction():
        print('start predict')
        predict = []
        pred, _ = dnn(X)
        correct_num = 0
        total_num = 0
        saver = tf.train.Saver(tf.global_variables())
        with tf.Session() as sess:
            saver.restore(sess, "C:\\Users\\Administrator\\Desktop\\moxing\\model.ckpt")
            start = 0
            end = start + batch_size
            while end <= len(test_x):
                # Get the predicted class probabilities from dnn(X).
                pred_prob = sess.run(pred, feed_dict={X: test_x[start:end]})
                # Convert the probabilities to an array of class indices.
                pred_class = np.argmax(pred_prob, 1)
                predict.append(pred_class)
                # Convert the true one-hot labels to class indices.
                accurate_class = np.argmax(test_y[start:end], 1)
                # Count the correct predictions.
                correct_num += int(np.sum(accurate_class == pred_class))
                total_num += len(accurate_class)
                start += batch_size
                end += batch_size
        print('the number of correct classify: ', correct_num, ' in total : ', total_num)
        print('correct rate : ', correct_num / total_num)
        return predict


    # Run train_dnn() first in a separate run so that the variable names in the
    # checkpoint match, then comment it out and run prediction().
    # train_dnn()
    prediction()
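The training and prediction loops above walk through the data in fixed-size slices by updating `start` and `end` by hand. As a possible alternative, a small generator can produce the slice bounds and also cover a shorter final batch when the row count is not a multiple of the batch size. This is only a sketch; `batch_ranges` is a hypothetical helper name, not something from the original code.

```python
def batch_ranges(num_rows, batch_size):
    # Yield (start, end) index pairs that cover every row exactly once.
    # The last pair may describe a shorter batch.
    for start in range(0, num_rows, batch_size):
        yield start, min(start + batch_size, num_rows)

# Small example: 10 rows in batches of 4.
ranges = list(batch_ranges(10, 4))
print(ranges)

# With the training set size and batch size used in this post,
# the rows divide evenly into full batches.
train_ranges = list(batch_ranges(39312, 72))
print(len(train_ranges), train_ranges[-1])
```

Each pair can then be used as `train_x[start:end]` and `train_y[start:end]` in the `feed_dict`.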