TensorFlow Official Tutorial Study Notes, Part 2: The MNIST Dataset for Machine Learning Beginners (MNIST For ML Beginners)

阿新 • Published: 2019-02-01

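1. Dataset

MNIST is an entry-level dataset for computer vision: 28x28 grayscale images of handwritten digits (0 to 9), each paired with its label.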
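To make the data layout concrete, here is a minimal NumPy sketch of how a batch of 28x28 images can be flattened into 784-element vectors and how digit labels can be one-hot encoded. The helper names `flatten_images` and `one_hot` are made up for illustration, and the arrays are random placeholder values rather than real MNIST digits.

```python
import numpy as np

def flatten_images(images):
    """Reshape a batch of 28x28 images into rows of 784 pixel values."""
    return images.reshape(images.shape[0], -1)

def one_hot(labels, num_classes=10):
    """Turn integer digit labels into one-hot rows."""
    encoded = np.zeros((labels.shape[0], num_classes), dtype=np.float32)
    encoded[np.arange(labels.shape[0]), labels] = 1.0
    return encoded

# Placeholder data with MNIST-like shapes (random values, not real digits).
rng = np.random.default_rng(0)
fake_images = rng.random((5, 28, 28), dtype=np.float32)
fake_labels = np.array([3, 0, 9, 1, 3])

x = flatten_images(fake_images)
y = one_hot(fake_labels)
print(x.shape, y.shape)  # prints (5, 784) (5, 10)
```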

2. Algorithm

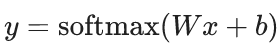

1) Core

Regression algorithm (the tutorial uses softmax regression):

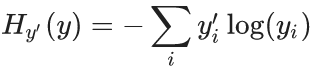
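As a rough illustration of the regression step, the sketch below computes softmax(xW + b) in plain NumPy rather than through the TensorFlow API. The shapes (784 inputs, 10 classes) follow the MNIST layout; the function names and the all-zero parameters are assumptions chosen only to keep the example small.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def predict(x, W, b):
    """Softmax regression: class probabilities for each row of x."""
    return softmax(x @ W + b)

# Two dummy inputs and zero-initialized parameters (illustrative only).
x = np.ones((2, 784))
W = np.zeros((784, 10))
b = np.zeros(10)

probs = predict(x, W, b)
print(probs.shape)  # prints (2, 10)
```

With all-zero weights every class receives the same probability, which is the starting point before any training has happened.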

2) Cost function

Cross-entropy:
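For a one-hot label y' and a predicted distribution y, cross-entropy is H(y', y) = -sum_i y'_i * log(y_i). A minimal NumPy sketch of that formula follows; the function name, the `eps` clipping value, and the three-class example are assumptions for illustration, not taken from the tutorial code.

```python
import numpy as np

def cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean over the batch of -sum_i y_true_i * log(y_pred_i)."""
    clipped = np.clip(y_pred, eps, 1.0)  # keep log() away from zero
    per_example = -np.sum(y_true * np.log(clipped), axis=1)
    return float(np.mean(per_example))

# One example whose true class (index 1) is predicted with probability 0.5.
y_true = np.array([[0.0, 1.0, 0.0]])
y_pred = np.array([[0.25, 0.5, 0.25]])

loss = cross_entropy(y_true, y_pred)
print(loss)  # -log(0.5), roughly 0.693
```

Only the probability assigned to the true class contributes, so the loss shrinks toward zero as that probability approaches 1.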

3) Optimization

Gradient descent
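The sketch below shows how gradient descent can minimize the cross-entropy of a softmax regression model. It is written in plain NumPy on a tiny made-up dataset, not on MNIST and not with TensorFlow's optimizer; the `train` function, the step count, and the toy points are all assumptions for illustration.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax with the usual max-subtraction for stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def mean_cross_entropy(y_true, probs):
    """Average cross-entropy over the batch."""
    return float(-np.mean(np.sum(y_true * np.log(probs + 1e-12), axis=1)))

def train(x, y_true, learning_rate=0.5, steps=200):
    """Fit W and b by full-batch gradient descent; returns the loss history."""
    n, d = x.shape
    k = y_true.shape[1]
    W = np.zeros((d, k))
    b = np.zeros(k)
    losses = []
    for _ in range(steps):
        probs = softmax(x @ W + b)
        losses.append(mean_cross_entropy(y_true, probs))
        # Gradient of the mean cross-entropy with respect to the logits.
        grad_logits = (probs - y_true) / n
        W -= learning_rate * (x.T @ grad_logits)
        b -= learning_rate * grad_logits.sum(axis=0)
    return W, b, losses

# Toy data: the class is decided by the first coordinate.
toy_x = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
toy_y = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])

W, b, losses = train(toy_x, toy_y)
predicted = softmax(toy_x @ W + b).argmax(axis=1)
print(losses[0], losses[-1], predicted)
```

Each step moves the parameters a small distance against the gradient, so the recorded loss falls from its initial value of log(2) as training proceeds.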

3. Code

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.