Caffe Code Walkthrough: The Concat Layer

阿新 • Published: 2019-02-08

Today we look at Caffe's concat layer, which joins the outputs of two or more layers into one.

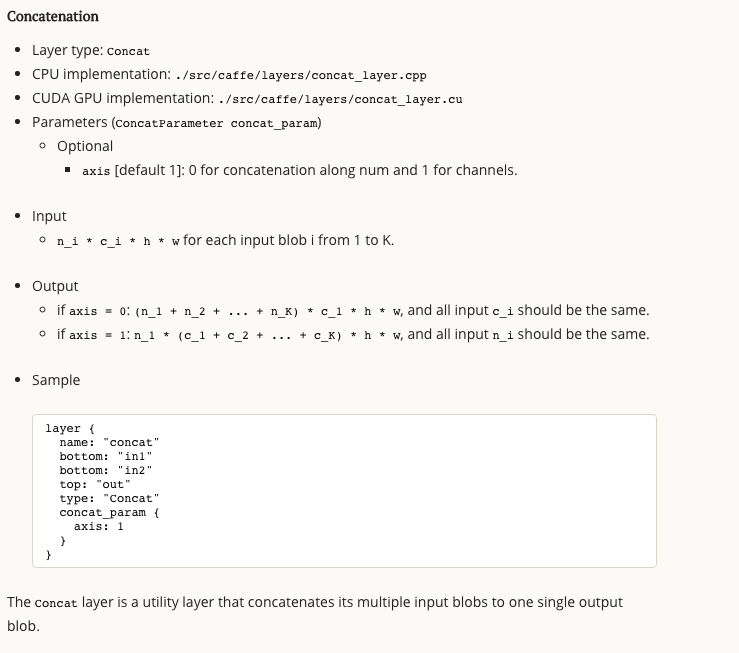

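First, let's look at the official Caffe documentation.

Like other layers, the implementation is split into setup (`LayerSetUp`), `Reshape`, `Forward_cpu`, and `Backward_cpu`.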

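// concat_layer joins two or more blobs: multiple inputs, one output.

// It supports concatenation along the num axis (concat_dim = 0: (n1+n2+...+nk)∗c∗h∗w)

// and along the channel axis (concat_dim = 1: n∗(c1+c2+...+ck)∗h∗w).

// axis / concat_dim: 0 concatenates along num, 1 concatenates along channel.

1. One point needs explaining. As you can see, bottom is a vector of Blob pointers, and bottom[0] alone is enough to initialize the output shape: every bottom must agree on all axes except the concat axis, so only that one axis has to be summed over the remaining bottoms. bottom itself is simply a vector of Blobs.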

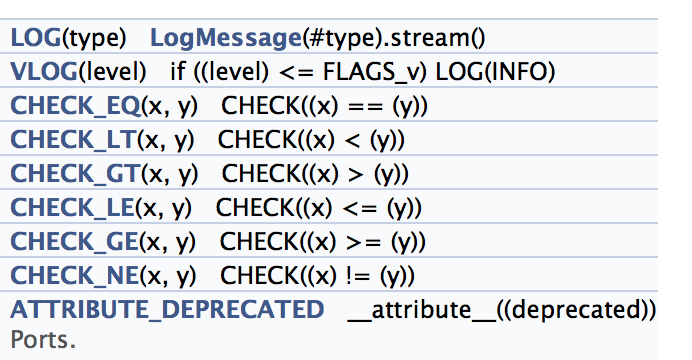

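2. The CHECK_* macros. For newcomers: each one asserts that a comparison between its two arguments holds (equal, less than, greater than, and so on) and aborts if it does not.

3. count. You will see many calls to count functions here. They are implemented in the Blob class, as follows: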

    inline int count() const { return count_; }
    /**
     * @brief Compute the volume of a slice; i.e., the product of dimensions
     *        among a range of axes.
     *
     * @param start_axis The first axis to include in the slice.
     *
     * @param end_axis The first axis to exclude from the slice.
     */
    inline int count(int start_axis, int end_axis) const {
      CHECK_LE(start_axis, end_axis);
      CHECK_GE(start_axis, 0);
      CHECK_GE(end_axis, 0);
      CHECK_LE(start_axis, num_axes());
      CHECK_LE(end_axis, num_axes());
      int count = 1;
      for (int i = start_axis; i < end_axis; ++i) {
        count *= shape(i);
      }
      return count;
    }
    /**
     * @brief Compute the volume of a slice spanning from a particular first
     *        axis to the final axis.
     *
     * @param start_axis The first axis to include in the slice.
     */
    inline int count(int start_axis) const {
      return count(start_axis, num_axes());
    }
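To see why these overloads matter for the concat layer, here is a minimal sketch that reimplements the ranged count on a plain `std::vector<int>` shape and derives the two quantities the forward and backward passes rely on. To my understanding, `ConcatLayer::Reshape` sets `num_concats_` to `count(0, concat_axis_)` and `concat_input_size_` to `count(concat_axis_ + 1)`. The names `CountRange`, `NumConcats`, and `ConcatInputSize` are hypothetical helpers invented for this illustration, not part of Caffe.

```cpp
#include <cassert>
#include <vector>

// Hypothetical stand-in for Blob::count(start_axis, end_axis): the product
// of shape[start_axis .. end_axis - 1]. An empty range yields 1.
int CountRange(const std::vector<int>& shape, int start_axis, int end_axis) {
  const int num_axes = static_cast<int>(shape.size());
  assert(0 <= start_axis && start_axis <= end_axis && end_axis <= num_axes);
  int count = 1;
  for (int i = start_axis; i < end_axis; ++i) {
    count *= shape[i];
  }
  return count;
}

// Number of outer slices: product of the axes before the concat axis.
// Assumed to correspond to num_concats_ in the layer.
int NumConcats(const std::vector<int>& shape, int concat_axis) {
  return CountRange(shape, 0, concat_axis);
}

// Size of one inner slice: product of the axes after the concat axis.
// Assumed to correspond to concat_input_size_ in the layer.
int ConcatInputSize(const std::vector<int>& shape, int concat_axis) {
  return CountRange(shape, concat_axis + 1, static_cast<int>(shape.size()));
}
```

For a blob of shape 2 x 3 x 4 x 5 concatenated along the channel axis (axis 1), `NumConcats` gives 2 and `ConcatInputSize` gives 4 x 5 = 20. Along the num axis (axis 0), the range before the axis is empty, so `NumConcats` is 1 and `ConcatInputSize` is 3 x 4 x 5 = 60.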

The forward pass is simply the concatenation itself: each bottom's data is copied into its slot in the top blob.

    template <typename Dtype>
    void ConcatLayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom,
        const vector<Blob<Dtype>*>& top) {
      Dtype* top_data = top[0]->mutable_cpu_data();
      int offset_concat_axis = 0;
      const int top_concat_axis = top[0]->shape(concat_axis_);
      // Iterate over all input bottoms.
      for (int i = 0; i < bottom.size(); ++i) {
        const Dtype* bottom_data = bottom[i]->cpu_data();
        const int bottom_concat_axis = bottom[i]->shape(concat_axis_);
        // Copy each bottom's data to its position in the output top data.
        for (int n = 0; n < num_concats_; ++n) {
          // Elements copied, case 0: num x channel x h x w; case 1: channel x h x w
          // Source offset, case 0: bottom_data + n x num x channel x h x w
          // Source offset, case 1: bottom_data + n x channel x h x w
          caffe_copy(bottom_concat_axis * concat_input_size_,
              bottom_data + n * bottom_concat_axis * concat_input_size_,
              top_data + (n * top_concat_axis + offset_concat_axis)
                  * concat_input_size_);
        }
        offset_concat_axis += bottom_concat_axis;
      }
    }

The backward pass routes the diff back through the layer, mirroring the way the forward pass routed the data.

    // The backward pass applies to each bottom's diff the same slicing
    // that the forward pass applied to its data.
    template <typename Dtype>
    void ConcatLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,
        const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) {
      const Dtype* top_diff = top[0]->cpu_diff();
      int offset_concat_axis = 0;
      const int top_concat_axis = top[0]->shape(concat_axis_);
      for (int i = 0; i < bottom.size(); ++i) {
        const int bottom_concat_axis = bottom[i]->shape(concat_axis_);
        // Only bottoms that need a gradient receive a copy.
        if (propagate_down[i]) {
          Dtype* bottom_diff = bottom[i]->mutable_cpu_diff();
          for (int n = 0; n < num_concats_; ++n) {
            caffe_copy(bottom_concat_axis * concat_input_size_, top_diff +
                (n * top_concat_axis + offset_concat_axis) * concat_input_size_,
                bottom_diff + n * bottom_concat_axis * concat_input_size_);
          }
        }
        // Advance the offset even for skipped bottoms, so that later
        // bottoms still read the correct slice of top_diff.
        offset_concat_axis += bottom_concat_axis;
      }
    }
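The index arithmetic in both passes is easier to follow on a small standalone example. The sketch below mirrors the two loops on flat `std::vector<float>` buffers, assuming row-major storage. `Tensor`, `Volume`, `ConcatForward`, and `ConcatBackward` are hypothetical names made up for this illustration, and `std::copy_n` stands in for `caffe_copy`. It is not Caffe's implementation, only the same copy pattern under those assumptions.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a Blob: a shape plus flat row-major storage.
struct Tensor {
  std::vector<int> shape;
  std::vector<float> values;
};

// Product of shape[begin .. end - 1]; 1 for an empty range.
static int Volume(const std::vector<int>& shape, int begin, int end) {
  int v = 1;
  for (int i = begin; i < end; ++i) v *= shape[i];
  return v;
}

// Forward: copy every input into its slot of the output along `axis`.
Tensor ConcatForward(const std::vector<Tensor>& bottoms, int axis) {
  assert(!bottoms.empty());
  const int num_axes = static_cast<int>(bottoms[0].shape.size());
  assert(axis >= 0 && axis < num_axes);
  const int num_concats = Volume(bottoms[0].shape, 0, axis);
  const int inner = Volume(bottoms[0].shape, axis + 1, num_axes);

  // The output shape starts from bottoms[0]; only the concat axis is summed.
  Tensor top;
  top.shape = bottoms[0].shape;
  top.shape[axis] = 0;
  for (const Tensor& b : bottoms) top.shape[axis] += b.shape[axis];
  const int top_axis = top.shape[axis];
  top.values.resize(static_cast<std::size_t>(num_concats) * top_axis * inner);

  int offset = 0;  // position along the concat axis already filled
  for (const Tensor& b : bottoms) {
    const int b_axis = b.shape[axis];
    for (int n = 0; n < num_concats; ++n) {
      std::copy_n(b.values.begin() + n * b_axis * inner, b_axis * inner,
                  top.values.begin() + (n * top_axis + offset) * inner);
    }
    offset += b_axis;
  }
  return top;
}

// Backward: slice the output gradient back into one gradient per input.
std::vector<Tensor> ConcatBackward(
    const Tensor& top_diff,
    const std::vector<std::vector<int>>& bottom_shapes, int axis) {
  const int num_axes = static_cast<int>(top_diff.shape.size());
  const int num_concats = Volume(top_diff.shape, 0, axis);
  const int inner = Volume(top_diff.shape, axis + 1, num_axes);
  const int top_axis = top_diff.shape[axis];

  std::vector<Tensor> bottom_diffs;
  int offset = 0;
  for (const std::vector<int>& shape : bottom_shapes) {
    const int b_axis = shape[axis];
    Tensor diff;
    diff.shape = shape;
    diff.values.resize(static_cast<std::size_t>(num_concats) * b_axis * inner);
    for (int n = 0; n < num_concats; ++n) {
      std::copy_n(top_diff.values.begin() + (n * top_axis + offset) * inner,
                  b_axis * inner,
                  diff.values.begin() + n * b_axis * inner);
    }
    bottom_diffs.push_back(diff);
    offset += b_axis;
  }
  return bottom_diffs;
}
```

Take two inputs of shape 2 x 1 x 2 and 2 x 2 x 2 joined along axis 1. The output has shape 2 x 3 x 2, and each outer slice holds the first input's 2 values followed by the second input's 4 values. Passing that output through `ConcatBackward` as if it were the gradient returns the two original buffers, which shows that the backward pass is the exact inverse copy of the forward pass.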