[RAC] Building an 11g RAC (VMware + raw devices)

安裝環境與網路規劃

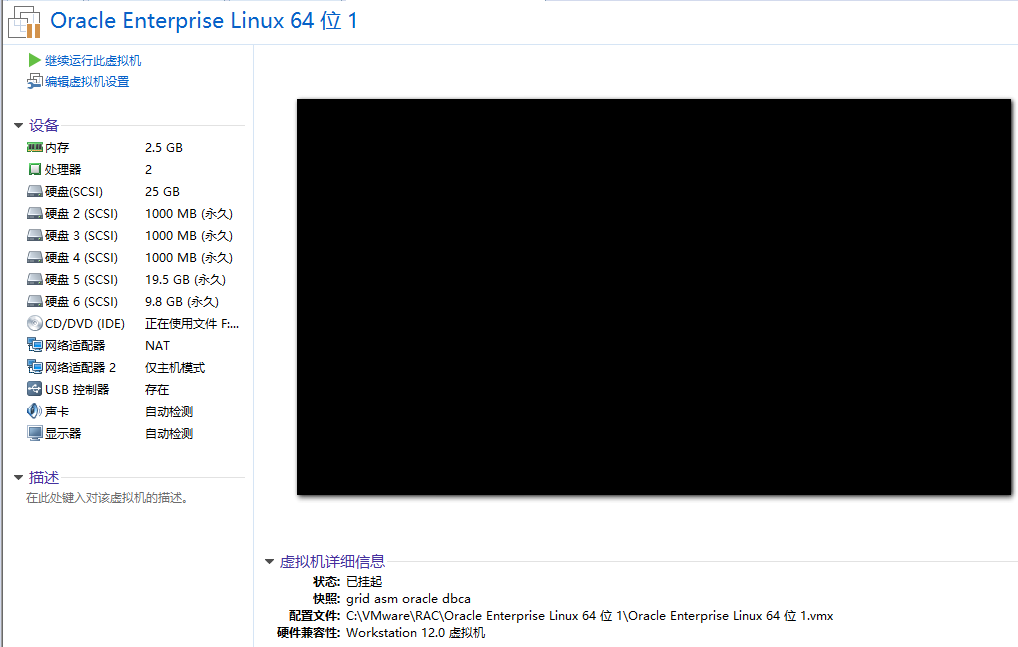

安裝環境

Host operating system: Windows 7

Virtual machines: two Oracle Linux R6 U5 x86_64 guests on VMware 12

Oracle Database software: Oracle11gR2

Cluster software: Oracle grid infrastructure 11gR2(11.2.0.4.0)

Shared storage: ASM

Note: version 11.2.0.1.0 has a bug when installing Grid Infrastructure. Running the script /u01/app/11.2.0/grid/root.sh fails with:

Adding daemon to inittab

CRS-4124: Oracle High Availability Services startup failed.

CRS-4000: Command Start failed, or completed with errors.

ohasd failed to start: Inappropriate ioctl for device

ohasd failed to start at /u01/app/11.2.0/grid/crs/install/rootcrs.pl line 443.

[root@rac1 ~]# lsb_release -a

LSB Version: :base-4.0-amd64:base-4.0-noarch:core-4.0-amd64:core-4.0-noarch:graphics-4.0-amd64:graphics-4.0-noarch:printing-4.0-amd64:printing-4.0-noarch

Distributor ID: OracleServer

Description: Oracle Linux Server release 6.5

Release: 6.5

Codename: n/a

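Details:

1. Give each host at least 2.5 GB of memory and at least 2.5 GB of swap. Disk layout: /boot gets 300 MB. LVM manages the remaining space, with 2.5 GB used for swap and the rest for /.

Name the two Oracle Linux hosts rac1 and rac2.

If possible, put the two guest operating systems on different physical disks. Otherwise I/O becomes a bottleneck.

2. The shared storage is ASM, and the cluster needs shared space for the Oracle Cluster Registry (OCR) and the voting disk. Create the shared disks in VMware as follows.

Open a cmd window and change to the VMware installation directory:

C:\Program Files (x86)> d:

D:\> cd VMware

vmware-vdiskmanager.exe -c -s 1000Mb -a lsilogic -t 2 F:\VMware\Sharedisk\ocr.vmdk

vmware-vdiskmanager.exe -c -s 1000Mb -a lsilogic -t 2 F:\VMware\Sharedisk\ocr2.vmdk

vmware-vdiskmanager.exe -c -s 1000Mb -a lsilogic -t 2 F:\VMware\Sharedisk\votingdisk.vmdk

vmware-vdiskmanager.exe -c -s 20000Mb -a lsilogic -t 2 F:\VMware\Sharedisk\data.vmdk

This creates two 1 GB OCR disks, one 1 GB voting disk and one 20 GB data disk. Each is a single preallocated file (-t 2) on an LSI Logic adapter (-a lsilogic).

Edit the .vmx configuration file in the RAC1 virtual machine directory and add: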

scsi1.present = "TRUE"

scsi1.virtualDev = "lsilogic"

scsi1.sharedBus = "virtual"

scsi1:1.present = "TRUE"

scsi1:1.mode = "independent-persistent"

scsi1:1.filename = "ocr.vmdk"

scsi1:2.present = "TRUE"

scsi1:2.mode = "independent-persistent"

scsi1:2.filename = "votingdisk.vmdk"

scsi1:3.present = "TRUE"

scsi1:3.mode = "independent-persistent"

scsi1:3.filename = "data.vmdk"

scsi1:4.present = "TRUE"

scsi1:4.mode = "independent-persistent"

scsi1:4.filename = "ocr2.vmdk"

disk.locking = "false"

diskLib.dataCacheMaxSize = "0"

diskLib.dataCacheMaxReadAheadSize = "0"

diskLib.DataCacheMinReadAheadSize = "0"

diskLib.dataCachePageSize = "4096"

diskLib.maxUnsyncedWrites = "0"

Edit the .vmx configuration file of RAC2 and add:

scsi1.sharedBus = "virtual"

disk.locking = "false"

diskLib.dataCacheMaxSize = "0"

diskLib.dataCacheMaxReadAheadSize = "0"

diskLib.DataCacheMinReadAheadSize = "0"

diskLib.dataCachePageSize = "4096"

diskLib.maxUnsyncedWrites = "0"

gui.lastPoweredViewMode = "fullscreen"

checkpoint.vmState = ""

usb:0.present = "TRUE"

usb:0.deviceType = "hid"

usb:0.port = "0"

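usb:0.parent = "-1"

RAC1 and RAC2 each need their own network adapters (see the hardware requirements below).

Then open RAC2's virtual machine settings. Manually add the five virtual disks created earlier, each in independent-persistent mode.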

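Network planning

Hardware requirements:

- Each server node needs at least two network adapters: one for the public network and one for the private network (the heartbeat).

- If you install Oracle Clusterware with OUI, every node must use the same interface (adapter) name for the public network and for the private network. For example, if node1 uses eth0 as its public interface, node2 cannot use eth1 as its public interface.

IP requirements:

DHCP is not used here. Instead, a static SCAN IP is assigned. The SCAN IP balances load across the cluster, and Clusterware places it on one of the nodes as needed.

Each node gets one public IP, one virtual IP (VIP) and one private IP.

The public IPs, the VIPs and the SCAN IP must be in the same subnet.

Example of a manual IP configuration without GNS: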

| Identity | Home Node | Host Node | Given Name | Type | Address |
|---|---|---|---|---|---|
| RAC1 Public | RAC1 | RAC1 | rac1 | Public | 192.168.248.101 |
| RAC1 VIP | RAC1 | RAC1 | rac1-vip | Public | 192.168.248.201 |
| RAC1 Private | RAC1 | RAC1 | rac1-priv | Private | 192.168.109.101 |
| RAC2 Public | RAC2 | RAC2 | rac2 | Public | 192.168.248.102 |
| RAC2 VIP | RAC2 | RAC2 | rac2-vip | Public | 192.168.248.202 |
| RAC2 Private | RAC2 | RAC2 | rac2-priv | Private | 192.168.109.102 |
| SCAN IP | none | Selected by Oracle Clusterware | scan-ip | virtual | 192.168.248.110 |
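The same-subnet rule can be checked mechanically. Below is a minimal sketch. It assumes the /24 prefix used in the ifcfg files of this setup (PREFIX=24). Under that prefix, two addresses share a subnet exactly when their first three octets match. The names net24 and same_net24 are hypothetical helpers, not part of any Oracle tool.

```bash
#!/bin/bash
# Network part of an IPv4 address under a /24 prefix:
# 192.168.248.101 -> 192.168.248
net24() {
    echo "${1%.*}"          # strip the last octet
}

# Return 0 if every given address is in the same /24 network as the first one.
same_net24() {
    local base ip
    base=$(net24 "$1")
    for ip in "$@"; do
        [ "$(net24 "$ip")" = "$base" ] || return 1
    done
    return 0
}
```

For the plan above, `same_net24 192.168.248.101 192.168.248.201 192.168.248.102 192.168.248.202 192.168.248.110` succeeds. The private addresses (192.168.109.x) deliberately sit in a different subnet. For prefixes other than /24, this string comparison is not enough and a real netmask calculation would be needed.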

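Environment configuration

Perform the following steps on every node unless stated otherwise. Passwords are set to oracle except where noted.

1. Open command-line sessions with SecureCRT

In sqlplus, Backspace shows up as a garbled ^H. To fix it:

Options->Session Options->Terminal->Emulation->Mapped Keys->Other mappings

Check "Backspace sends delete".

In vi, the Delete and Home keys do not work. To fix it:

Options->Session Options->Terminal->Emulation

Set Terminal to Linux.

Check "Select an alternate keyboard emulation" and set it to Linux.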

2. Disable the firewall and SELinux

[root@rac1 ~]# setenforce 0

setenforce: SELinux is disabled

[root@rac1 ~]# vi /etc/sysconfig/selinux

SELINUX=disabled

[root@rac1 ~]# service iptables stop

[root@rac1 ~]# chkconfig iptables off

3. Create the required users, groups and directories, and set permissions

/usr/sbin/groupadd -g 1000 oinstall

/usr/sbin/groupadd -g 1020 asmadmin

/usr/sbin/groupadd -g 1021 asmdba

/usr/sbin/groupadd -g 1022 asmoper

/usr/sbin/groupadd -g 1031 dba

/usr/sbin/groupadd -g 1032 oper

useradd -u 1100 -g oinstall -G asmadmin,asmdba,asmoper,oper,dba grid

useradd -u 1101 -g oinstall -G dba,asmdba,oper oracle

mkdir -p /u01/app/11.2.0/grid

mkdir -p /u01/app/grid

mkdir /u01/app/oracle

chown -R grid:oinstall /u01

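chown oracle:oinstall /u01/app/oracle

chmod -R 775 /u01/

passwd oracle

New password: oracle

Retype new password: oracle

passwd grid

New password: grid

Retype new password: grid

This follows the official documentation. Grid Infrastructure (GI) and the database are installed separately, under different owners with separate privileges. This separation helps when managing multiple instances.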
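After creating the users, it is worth confirming that each owner ended up in the groups given to useradd above. Below is a minimal sketch. It assumes bash and the standard id utility. check_groups is a hypothetical helper name used only for illustration.

```bash
#!/bin/bash
# Check that a user belongs to each listed group (primary or supplementary).
# Prints one line per missing group and returns non-zero if any is missing.
check_groups() {
    local user=$1
    shift
    local have g rc=0
    # id -nG prints all of the user's group names separated by spaces;
    # pad with spaces so each name is matched as a whole word.
    have=" $(id -nG "$user") "
    for g in "$@"; do
        case "$have" in
            *" $g "*) ;;                                  # group present
            *) echo "$user is not in group $g"; rc=1 ;;
        esac
    done
    return "$rc"
}
```

Run these two commands on each node:

- `check_groups grid oinstall asmadmin asmdba asmoper oper dba`
- `check_groups oracle oinstall dba asmdba oper`

Both should print nothing and return 0. Any missing membership is listed by name.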

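4. Check the node configuration

Memory: at least 2.5 GB.

Swap: if memory is between 2.5 GB and 16 GB, swap must be at least as large as memory. If memory exceeds 16 GB, 16 GB of swap is enough.

Both values can be read from /proc/meminfo on each node.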
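The swap rule above can be written as a small check. This sketch covers only the stated rule and does not reproduce every check CVU performs. required_swap_kb and check_swap are hypothetical helper names. All sizes are in kB, as /proc/meminfo reports them.

```bash
#!/bin/bash
# Swap rule from the text (sizes in kB):
#   RAM up to 16 GB -> swap must be >= RAM
#   RAM above 16 GB -> 16 GB of swap is enough
required_swap_kb() {
    local mem_kb=$1
    local cap_kb=$((16 * 1024 * 1024))   # 16 GB in kB
    if [ "$mem_kb" -gt "$cap_kb" ]; then
        echo "$cap_kb"
    else
        echo "$mem_kb"
    fi
}

# Compare SwapTotal with the required size, reading a meminfo-style file
# (defaults to /proc/meminfo). Returns 0 if swap is large enough.
check_swap() {
    local meminfo=${1:-/proc/meminfo}
    local mem_kb swap_kb need_kb
    mem_kb=$(awk '/^MemTotal:/ {print $2}' "$meminfo")
    swap_kb=$(awk '/^SwapTotal:/ {print $2}' "$meminfo")
    need_kb=$(required_swap_kb "$mem_kb")
    if [ "$swap_kb" -ge "$need_kb" ]; then
        echo "OK: SwapTotal ${swap_kb} kB >= ${need_kb} kB"
    else
        echo "FAIL: SwapTotal ${swap_kb} kB < ${need_kb} kB"
        return 1
    fi
}
```

The output below shows rac1 with MemTotal 2552560 kB and SwapTotal 2621436 kB. For those values, check_swap prints OK, because swap is slightly larger than memory.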

[root@rac1 ~]# grep MemTotal /proc/meminfo

MemTotal: 2552560 kB

[root@rac1 ~]# grep SwapTotal /proc/meminfo

SwapTotal: 2621436 kB

5. System file settings

(1) Kernel parameters:

[root@rac1 ~]# vi /etc/sysctl.conf

kernel.msgmnb = 65536

kernel.msgmax = 65536

kernel.shmmax = 68719476736

kernel.shmall = 4294967296

fs.aio-max-nr = 1048576

fs.file-max = 6815744

kernel.shmall = 2097152

kernel.shmmax = 1306910720

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048586

net.ipv4.tcp_wmem = 262144 262144 262144

net.ipv4.tcp_rmem = 4194304 4194304 4194304

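kernel.shmmax and kernel.shmall each appear twice above. sysctl -p applies the lines in order, so the later values (kernel.shmall = 2097152, kernel.shmmax = 1306910720) take effect. The shmmax value had to be changed after the later prerequisite check, to:

kernel.shmmax = 68719476736

Apply the kernel settings:

[root@rac1 ~]# sysctl -p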
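The 1306910720 value for kernel.shmmax above is exactly half of the MemTotal reported earlier (2552560 kB × 1024 / 2 bytes). The sketch below only reproduces that arithmetic.

Two parts of it are assumptions read off the values in this file, not an official formula:
- shmmax is half of RAM.
- shmall, a page count, is kept at no less than 2097152.

As noted above, the prerequisite check later required a larger shmmax anyway. shm_params is a hypothetical helper name.

```bash
#!/bin/bash
# Reproduce the arithmetic behind the shared-memory values above.
# Assumptions (not an Oracle formula): shmmax = half of physical memory in
# bytes; shmall (counted in pages) is never set below 2097152.
shm_params() {
    local mem_kb=$1                      # MemTotal from /proc/meminfo, in kB
    local page_size=${2:-4096}           # bytes per page (see getconf PAGE_SIZE)
    local shmmax=$(( mem_kb * 1024 / 2 ))
    # shmall is a page count: round shmmax up to whole pages
    local shmall=$(( (shmmax + page_size - 1) / page_size ))
    if [ "$shmall" -lt 2097152 ]; then
        shmall=2097152
    fi
    echo "kernel.shmmax = $shmmax"
    echo "kernel.shmall = $shmall"
}
```

On a live node, feed it from the system:

`shm_params "$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)" "$(getconf PAGE_SIZE)"`

For MemTotal 2552560 kB and 4096-byte pages, it prints kernel.shmmax = 1306910720 and kernel.shmall = 2097152. These are the second pair of values in the file above.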

-------------------------------------------------------------------------------------------

Alternatively, you can adjust the parameters with the preinstall package from the Oracle Linux DVD:

[root@rac1 Packages]# pwd

/mnt/cdrom/Packages

[root@rac1 Packages]# ll | grep preinstall

-rw-r--r-- 1 root root 15524 Dec 25 2012 oracle-rdbms-server-11gR2-preinstall-1.0-7.el6.x86_64.rpm

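This RPM has dependencies. Install them first:

yum install -y libaio-devel 'compat-libstdc*' 'compat-libcap*'

Then:

rpm -ivh oracle-rdbms-server-11gR2-preinstall-1.0-7.el6.x86_64.rpm

The package changes the last few lines of /etc/sysctl.conf. After installing it, check those lines and adjust them to values suitable for 11g.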

-------------------------------------------------------------------------------------------

(2) Configure shell limits for the oracle and grid users

[root@rac1 ~]# vi /etc/security/limits.conf

grid soft nproc 2047

grid hard nproc 16384

grid soft nofile 1024

grid hard nofile 65536

oracle soft nproc 2047

oracle hard nproc 16384

oracle soft nofile 1024

oracle hard nofile 65536
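The same four limits repeat for each software owner, so they can also be generated. Below is a minimal sketch, assuming bash. limits_for_user is a hypothetical helper, and the numbers are simply the ones listed above.

```bash
#!/bin/bash
# Print the four limits.conf entries used above for one software owner.
limits_for_user() {
    local user=$1
    printf '%s soft nproc 2047\n'   "$user"
    printf '%s hard nproc 16384\n'  "$user"
    printf '%s soft nofile 1024\n'  "$user"
    printf '%s hard nofile 65536\n' "$user"
}
```

For example, `limits_for_user grid >> /etc/security/limits.conf` appends the grid entries (run as root, once per node).

After pam_limits is enabled in step (3), you can verify the result with:

`su - grid -c 'ulimit -Su; ulimit -Hu; ulimit -Sn; ulimit -Hn'`

It should report 2047, 16384, 1024 and 65536.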

-------------------------------------------------------------------------------------------

If you installed the preinstall RPM above, you only need to add the grid entries.

-------------------------------------------------------------------------------------------

(3) Configure login

[root@rac1 ~]# vi /etc/pam.d/login

session required pam_limits.so

Install the required packages:

binutils-2.20.51.0.2-5.11.el6 (x86_64)

compat-libcap1-1.10-1 (x86_64)

compat-libstdc++-33-3.2.3-69.el6 (x86_64)

compat-libstdc++-33-3.2.3-69.el6.i686

gcc-4.4.4-13.el6 (x86_64)

gcc-c++-4.4.4-13.el6 (x86_64)

glibc-2.12-1.7.el6 (i686)

glibc-2.12-1.7.el6 (x86_64)

glibc-devel-2.12-1.7.el6 (x86_64)

glibc-devel-2.12-1.7.el6.i686

ksh

libgcc-4.4.4-13.el6 (i686)

libgcc-4.4.4-13.el6 (x86_64)

libstdc++-4.4.4-13.el6 (x86_64)

libstdc++-4.4.4-13.el6.i686

libstdc++-devel-4.4.4-13.el6 (x86_64)

libstdc++-devel-4.4.4-13.el6.i686

libaio-0.3.107-10.el6 (x86_64)

libaio-0.3.107-10.el6.i686

libaio-devel-0.3.107-10.el6 (x86_64)

libaio-devel-0.3.107-10.el6.i686

make-3.81-19.el6

sysstat-9.0.4-11.el6 (x86_64)

This setup uses a local yum repository. Configure it first:

[root@rac1 ~]# mount /dev/cdrom /mnt/cdrom/

[root@rac1 ~]# vi /etc/yum.repos.d/dvd.repo

[dvd]

name=dvd

baseurl=file:///mnt/cdrom

gpgcheck=0

enabled=1

[root@rac1 ~]# yum clean all

[root@rac1 ~]# yum makecache

[root@rac1 ~]# yum install gcc gcc-c++ glibc* glibc-devel* ksh libgcc* libstdc++* libstdc++-devel* make sysstat
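The yum command above installs most packages by wildcard. It does not name compat-libcap1, compat-libstdc++-33 or the libaio packages, which come from the preinstall step earlier. It is therefore worth checking the full list, including the 32-bit (i686) libraries. Below is a minimal sketch. It assumes rpm accepts name.arch queries such as glibc.i686. missing_pkgs and REQUIRED_PKGS are hypothetical names.

```bash
#!/bin/bash
# Packages from the prerequisite list above, in rpm's name.arch form so the
# i686 libraries are checked separately from the x86_64 ones.
REQUIRED_PKGS=(
    binutils.x86_64 compat-libcap1.x86_64
    compat-libstdc++-33.x86_64 compat-libstdc++-33.i686
    gcc.x86_64 gcc-c++.x86_64
    glibc.i686 glibc.x86_64 glibc-devel.x86_64 glibc-devel.i686
    ksh
    libgcc.i686 libgcc.x86_64
    libstdc++.x86_64 libstdc++.i686
    libstdc++-devel.x86_64 libstdc++-devel.i686
    libaio.x86_64 libaio.i686 libaio-devel.x86_64 libaio-devel.i686
    make sysstat.x86_64
)

# Print every package that rpm reports as not installed.
missing_pkgs() {
    local p
    for p in "$@"; do
        rpm -q --quiet "$p" || echo "$p"
    done
}
```

`missing_pkgs "${REQUIRED_PKGS[@]}"` prints nothing when everything is present. Otherwise, pass its output to yum install -y. Versions are deliberately not compared; the minimum versions are the ones in the list above.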

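6. Configure the IP addresses, hosts file and hostname

(1) Configure the IP addresses

// The VMware network settings determine the gateway. eth0 connects to the public network and eth1 carries the private heartbeat.

// On rac1:

[root@rac1 ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth0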

IPADDR=192.168.248.101

PREFIX=24

GATEWAY=192.168.248.2

DNS1=114.114.114.114

[root@rac1 ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth1

IPADDR=192.168.109.101

PREFIX=24

// On rac2:

[root@rac2 ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth0

IPADDR=192.168.248.102

PREFIX=24

GATEWAY=192.168.248.2

DNS1=114.114.114.114

[root@rac2 ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth1

IPADDR=192.168.109.102

PREFIX=24

(2) Configure the hostname

// On rac1:

[root@rac1 ~]# vi /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=rac1

GATEWAY=192.168.248.2

NOZEROCONF=yes

// On rac2:

[root@rac2 ~]# vi /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=rac2

GATEWAY=192.168.248.2

NOZEROCONF=yes

(3) Configure /etc/hosts

Add the following on both rac1 and rac2:

[root@rac1 ~]# vi /etc/hosts

192.168.248.101 rac1

192.168.248.201 rac1-vip

192.168.109.101 rac1-priv

192.168.248.102 rac2

192.168.248.202 rac2-vip

192.168.109.102 rac2-priv

192.168.248.110 scan-ip

7. Configure environment variables for the grid and oracle users

Set ORACLE_SID differently on each node.

[root@rac1 ~]# su - grid

[grid@rac1 ~]$ vi .bash_profile

export TMP=/tmp

export TMPDIR=$TMP

export ORACLE_SID=+ASM1 # RAC1

#export ORACLE_SID=+ASM2 # RAC2

export ORACLE_BASE=/u01/app/grid

export ORACLE_HOME=/u01/app/11.2.0/grid

export PATH=/usr/sbin:$PATH

export PATH=$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib

umask 022

ORACLE_UNQNAME is the database name. When the database is created on several nodes, each node gets its own instance. ORACLE_SID is the name of the local instance.

[root@rac1 ~]# su - oracle

[oracle@rac1 ~]$ vi .bash_profile

export TMP=/tmp

export TMPDIR=$TMP

export ORACLE_SID=orcl1 # RAC1

#export ORACLE_SID=orcl2 # RAC2

export ORACLE_UNQNAME=orcl

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=$ORACLE_BASE/product/11.2.0/db_1

export TNS_ADMIN=$ORACLE_HOME/network/admin

export PATH=/usr/sbin:$PATH

export PATH=$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

Make the settings take effect:

$ source .bash_profile
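Instead of commenting and uncommenting ORACLE_SID on each node, the suffix can be derived from the host name. This sketch rests on an assumption specific to this setup: the hosts are named racN, so the number after "rac" matches the instance number. sid_for_host is a hypothetical helper.

```bash
#!/bin/bash
# Build the per-node SID from a host name of the form racN (an optional
# domain part is ignored), e.g. rac2 -> +ASM2 or orcl2.
sid_for_host() {
    local prefix=$1
    local host=${2%%.*}          # drop any domain part
    local n=${host#rac}          # strip the "rac" prefix, leaving N
    echo "${prefix}${n}"
}

# Possible use in .bash_profile, with the function defined above it:
#   grid:   export ORACLE_SID=$(sid_for_host +ASM "$(hostname -s)")
#   oracle: export ORACLE_SID=$(sid_for_host orcl "$(hostname -s)")
```

With this in place, the same .bash_profile can be copied to both nodes. If the host names do not follow the racN pattern, keep the hand-edited ORACLE_SID lines shown above.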

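8. Configure SSH user equivalence for the oracle user

This step is critical. The official documentation says OUI configures SSH automatically when installing GI and RAC. Even so, configuring equivalence manually is better, because CVU needs it to check the configuration before installation. Generate the keys on both nodes before copying authorized_keys, so that ~/.ssh already exists on the receiving node.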

RAC1:

ssh-keygen -t rsa

ssh-keygen -t dsa

[oracle@rac1 ~]$ cd .ssh

[oracle@rac1 .ssh]$ cat *.pub >> authorized_keys

[oracle@rac1 .ssh]$ scp authorized_keys rac2:/home/oracle/.ssh/

RAC2:

ssh-keygen -t rsa

ssh-keygen -t dsa

[oracle@rac2 ~]$ cd .ssh

[oracle@rac2 .ssh]$ cat *.pub >> authorized_keys.bak

[oracle@rac2 .ssh]$ cat authorized_keys.bak >> authorized_keys

[oracle@rac2 .ssh]$ scp authorized_keys rac1:/home/oracle/.ssh/

ssh rac1 date

ssh rac2 date

ssh rac1-priv date

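ssh rac2-priv date

Keep the following in mind:
- Leave the passphrase empty when generating the keys.
- Set the authorized_keys file permissions to 600.
- Have each node ssh to the other once, so that later connections do not prompt.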
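The checks above can be scripted. Below is a minimal sketch, assuming bash and OpenSSH.
- fix_ssh_perms applies the 600 permission from the note. It also sets 700 on ~/.ssh, the usual companion setting.
- check_ssh_equiv uses BatchMode=yes, which makes ssh fail instead of prompting for a password or host-key confirmation.

Both function names are hypothetical.

```bash
#!/bin/bash
# Set the permissions sshd expects on the key files of the current user.
fix_ssh_perms() {
    chmod 700 "$HOME/.ssh"
    chmod 600 "$HOME/.ssh/authorized_keys"
}

# Try a non-interactive "date" on every given host name; report OK/FAIL per
# host and return non-zero if any connection would have needed a prompt.
check_ssh_equiv() {
    local h rc=0
    for h in "$@"; do
        if ssh -o BatchMode=yes "$h" date >/dev/null 2>&1; then
            echo "OK   $h"
        else
            echo "FAIL $h"
            rc=1
        fi
    done
    return "$rc"
}
```

Run it as oracle (and later as grid) on both nodes:

`check_ssh_equiv rac1 rac2 rac1-priv rac2-priv`

BatchMode also refuses unknown host keys. A FAIL right after setup therefore usually means that name has not yet been accepted interactively once.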

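9. Configure the raw disks (the three oracleasm-related packages are not installed here)

ASM needs raw disks, and the shared disks were attached to both hosts earlier. There are three ways to set them up:

(1) with oracleasm;

(2) with bindings in /etc/udev/rules.d/60-raw.rules, where udev binds character raw devices;

(3) with a script, where udev binds block devices. This is faster than the character-device method, is the newest approach, and is the recommended one.

Before configuring the raw disks, partition each disk: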

fdisk /dev/sdb

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

Finally, save the changes with the w command. Repeat these steps for the other disks to get the following partitions:

[root@rac1 ~]# ls /dev/sd*

/dev/sda /dev/sda2 /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1 /dev/sdf1 /dev/sda1 /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf
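The ENV{MINOR} values in the raw rules that follow come from these device names. SCSI disks on major 8 each own a block of 16 minor numbers (sda = 0, sdb = 16, sdc = 32, ...), and partition N adds N. Below is a minimal sketch of that arithmetic. It is valid only for single-letter names sda through sdp. sd_minor and raw_rule are hypothetical helpers.

```bash
#!/bin/bash
# Minor number of an sd device on major 8: 16 minors per disk,
# plus the partition number (none for a whole disk).
sd_minor() {
    local name=$1                      # e.g. sdb1
    local letter=${name:2:1}           # disk letter, e.g. b
    local part=${name:3}               # partition number, empty for a whole disk
    local index=$(( $(printf '%d' "'$letter") - $(printf '%d' "'a") ))
    echo $(( index * 16 + ${part:-0} ))
}

# Print a 60-raw.rules line binding /dev/raw/rawN to that major/minor pair,
# written without spaces around the commas, like the rules in this article.
raw_rule() {
    local n=$1 dev=$2
    printf 'ACTION=="add",ENV{MAJOR}=="8",ENV{MINOR}=="%s",RUN+="/bin/raw /dev/raw/raw%s %%M %%m"\n' \
        "$(sd_minor "$dev")" "$n"
}
```

For example, `n=1; for d in b c d e; do raw_rule "$n" "sd${d}1"; n=$((n + 1)); done` prints the four ENV{MAJOR}/ENV{MINOR} lines (minors 17, 33, 49 and 65). To cross-check, `ls -l /dev/sdb1` lists the device as 8, 17.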

Add the raw device bindings. Each partition below is bound twice: once by kernel name and once by major/minor number. Either form on its own is sufficient.

[root@rac1 ~]# vi /etc/udev/rules.d/60-raw.rules

ACTION=="add",KERNEL=="sdb1",RUN+="/bin/raw /dev/raw/raw1 %N"

ACTION=="add",ENV{MAJOR}=="8",ENV{MINOR}=="17",RUN+="/bin/raw /dev/raw/raw1 %M %m"

ACTION=="add",KERNEL=="sdc1",RUN+="/bin/raw /dev/raw/raw2 %N"

ACTION=="add",ENV{MAJOR}=="8",ENV{MINOR}=="33",RUN+="/bin/raw /dev/raw/raw2 %M %m"

ACTION=="add",KERNEL=="sdd1",RUN+="/bin/raw /dev/raw/raw3 %N"

ACTION=="add",ENV{MAJOR}=="8",ENV{MINOR}=="49",RUN+="/bin/raw /dev/raw/raw3 %M %m"

ACTION=="add",KERNEL=="sde1",RUN+="/bin/raw /dev/raw/raw4 %N"

ACTION=="add",ENV{MAJOR}=="8",ENV{MINOR}=="65",RUN+="/bin/raw /dev/raw/raw4 %M %m"

KERNEL=="raw[1-4]",OWNER="grid",GROUP="asmadmin",MODE="660"

[root@rac1 ~]# start_udev

Starting udev: [ OK ]

[root@rac1 ~]# ll /dev/raw/

total 0

crw-rw---- 1 grid asmadmin 162, 1 Apr 13 13:51 raw1

crw-rw---- 1 grid asmadmin 162, 2 Apr 13 13:51 raw2

crw-rw---- 1 grid asmadmin 162, 3 Apr 13 13:51 raw3

crw-rw---- 1 grid asmadmin 162, 4 Apr 13 13:51 raw4

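crw-rw---- 1 root disk 162, 0 Apr 13 13:51 rawctl

Note: in this setup, spaces before or after the commas in these rules caused errors, so the rules are written without them. The raw devices must end up owned by grid:asmadmin.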
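The ownership requirement can be checked for every device in one go. Below is a minimal sketch, assuming GNU stat as shipped with Oracle Linux. check_dev_perms is a hypothetical helper.

```bash
#!/bin/bash
# Check that each device (symlinks are followed) is owned by the expected
# user:group and has the expected octal mode, e.g. grid:asmadmin 660.
check_dev_perms() {
    local want_owner=$1 want_mode=$2
    shift 2
    local dev got rc=0
    for dev in "$@"; do
        got=$(stat -L -c '%U:%G %a' "$dev" 2>/dev/null) || {
            echo "FAIL $dev (cannot stat)"; rc=1; continue; }
        if [ "$got" = "$want_owner $want_mode" ]; then
            echo "OK   $dev ($got)"
        else
            echo "FAIL $dev ($got, want $want_owner $want_mode)"
            rc=1
        fi
    done
    return "$rc"
}
```

`check_dev_perms grid:asmadmin 660 /dev/raw/raw1 /dev/raw/raw2 /dev/raw/raw3 /dev/raw/raw4` should print four OK lines. The same call works for the /dev/asm-disk* names created by method (3).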

Method (3):

[root@rac1 ~]# for i in b c d e f ;

do

echo "KERNEL==\"sd*\", BUS==\"scsi\", PROGRAM==\"/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/\$name\", RESULT==\"`/sbin/scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i`\", NAME=\"asm-disk$i\", OWNER=\"grid\", GROUP=\"asmadmin\", MODE=\"0660\"">> /etc/udev/rules.d/99-oracle-asmdevices.rules

done

Possible problem: in VMware, scsi_id on RHEL does not return a WWID for the virtual disks.

Fix: add the following to the .vmx file:

disk.EnableUUID = "TRUE"

[root@rac1 ~]# start_udev

Starting udev: [ OK ]

[root@rac1 ~]# ll /dev/*asm*

brw-rw---- 1 grid asmadmin 8, 16 Apr 27 18:52 /dev/asm-diskb

brw-rw---- 1 grid asmadmin 8, 32 Apr 27 18:52 /dev/asm-diskc

brw-rw---- 1 grid asmadmin 8, 48 Apr 27 18:52 /dev/asm-diskd

brw-rw---- 1 grid asmadmin 8, 64 Apr 27 18:52 /dev/asm-diske

With disks added this way, you must later set Change Discovery Path to /dev/*asm* when creating the ASM disk group.

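10. Configure SSH user equivalence for the grid user

The grid user needs the same equivalence. The procedure is exactly the same as for oracle; just note that the grid and oracle passwords are different.

11. Mount the folder containing the installation software

Share the folder from the Windows host, then mount it in the virtual machines: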

mkdir -p /home/grid/db

mount -t cifs -o username=share,password=123456 //192.168.248.1/DB /home/grid/db

mkdir -p /home/oracle/db

mount -t cifs -o username=share,password=123456 //192.168.248.1/DB /home/oracle/db

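12. Install cvuqdisk for Linux

Install cvuqdisk on both Oracle RAC nodes. Without it, the Cluster Verification Utility cannot discover the shared disks. CVU then reports "Package cvuqdisk not installed" whenever it runs, whether manually or automatically at the end of the Oracle Grid Infrastructure installation.

Use the cvuqdisk RPM that matches your hardware architecture (x86_64 or i386).

The cvuqdisk RPM is in the rpm directory of the grid installation media.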
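Below is a sketch of the installation itself, to be run as root on each node.
- CVUQDISK_GRP is the environment variable that, per the Oracle installation guide, names the group owning cvuqdisk. oinstall is the group created earlier.
- The RPM path follows the share mounted at /home/grid/db in step 11.
- install_cvuqdisk is a hypothetical helper.

```bash
#!/bin/bash
# Install cvuqdisk from the rpm/ directory of the grid media (run as root).
# The file name is matched with a glob because its version depends on the media.
install_cvuqdisk() {
    local rpm_dir=$1
    local pkgs=( "$rpm_dir"/cvuqdisk-*.rpm )
    if [ ! -e "${pkgs[0]}" ]; then
        echo "no cvuqdisk RPM found in $rpm_dir" >&2
        return 1
    fi
    # CVUQDISK_GRP is read by the package when it is installed
    CVUQDISK_GRP=oinstall rpm -iv "${pkgs[0]}"
}
```

`install_cvuqdisk /home/grid/db/grid/rpm` installs the package on the current node. Afterwards, `rpm -q cvuqdisk` confirms it is present.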

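13. Manually run the Cluster Verification Utility (CVU) to verify the Oracle Clusterware requirements (for all nodes)

On rac1, run runcluvfy.sh from the grid software directory.

The following problem may occur here:

wait ...[grid@rac1 grid]$ Exception in thread "main" java.lang.NoClassDefFoundError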

at java.lang.Class.forName0(Native Method)

at java.lang.Class.forName(Class.java:164)

at java.awt.Toolkit$2.run(Toolkit.java:821)

at java.security.AccessController.doPrivileged(Native Method)

at java.awt.Toolkit.getDefaultToolkit(Toolkit.java:804)

at com.jgoodies.looks.LookUtils.isLowResolution(Unknown Source)

at com.jgoodies.looks.LookUtils.<clinit>(Unknown Source)

To avoid it, log in directly as the grid user rather than switching with su -. The check itself is run as follows:

[root@rac1 ~]# su - grid

[grid@rac1 ~]$ cd db/grid/

[grid@rac1 grid]$ ls

doc readme.html rpm runInstaller stage

install response runcluvfy.sh sshsetup welcome.html

[grid@rac1 grid]$ ./runcluvfy.sh stage -pre crsinst -n rac1,rac2 -fixup -verbose

Review the CVU report and fix the errors it lists.

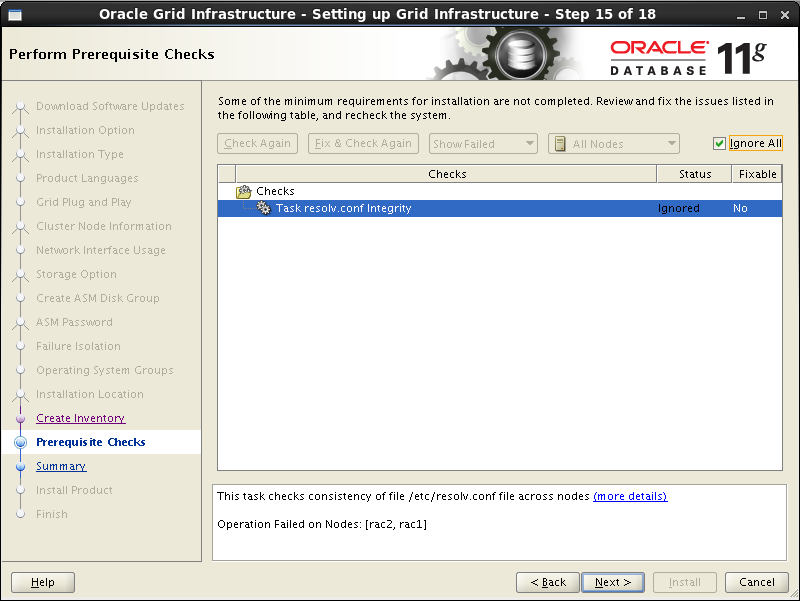

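Here every other CVU check returned "passed". Only the following errors appeared:

Checking DNS response time for an unreachable node

Node Name Status

rac2 failed

rac1 failed

PRVF-5637 : DNS response time could not be checked on following nodes: rac2,rac1

File "/etc/resolv.conf" is not consistent across nodes

These errors occur because DNS is not configured. They do not affect the installation, although the installer reports a resolv.conf error again later. Since a static SCAN IP is used, they can be ignored.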

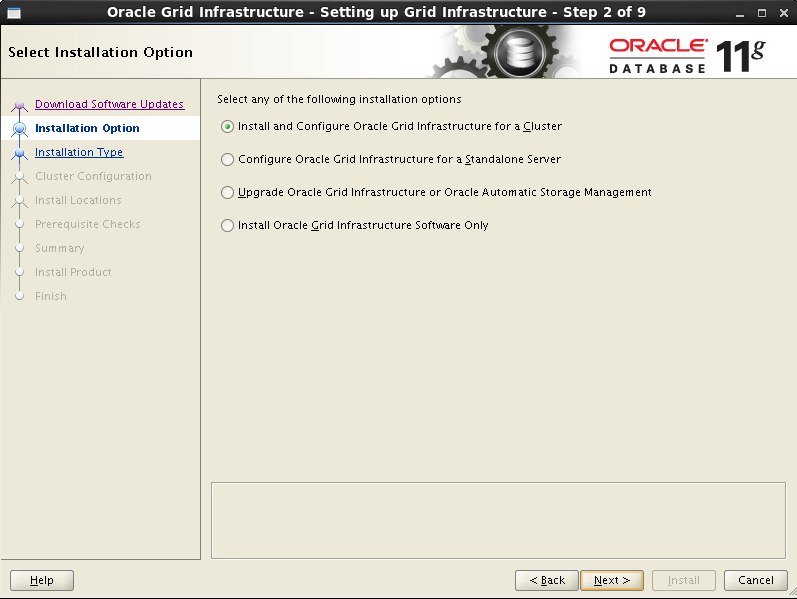

安裝Grid Infrastructure

1.安裝流程

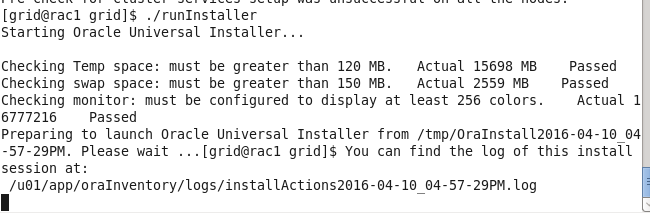

只需要在一個節點上安裝即可,會自動複製到其他節點中,這裡在rac1中安裝。

進入圖形化介面,在grid使用者下進行安裝

[root@rac1 ~]# su - grid

[grid@rac1 ~]$ cd db/grid/

doc/ readme.html rpm/ runInstaller stage/

install/ response/ runcluvfy.sh sshsetup/ welcome.html

[grid@rac1 ~]$ cd db/grid/

[grid@rac1 grid]$ ./runInstaller

Skip software updates.

選擇安裝叢集

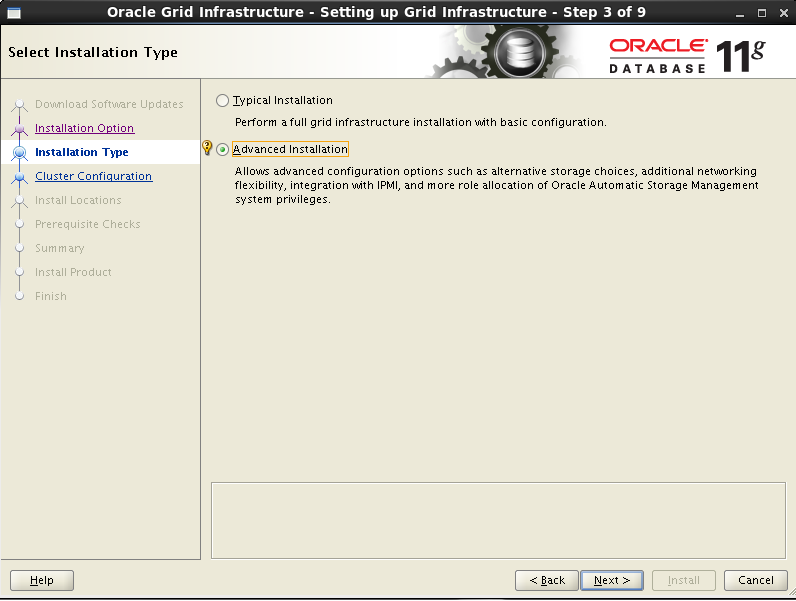

選擇自定義安裝

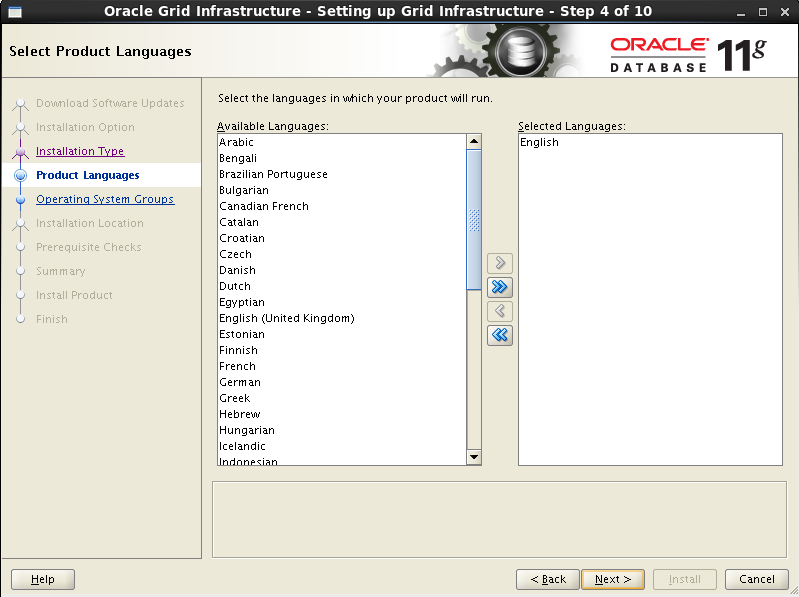

選擇語言為English

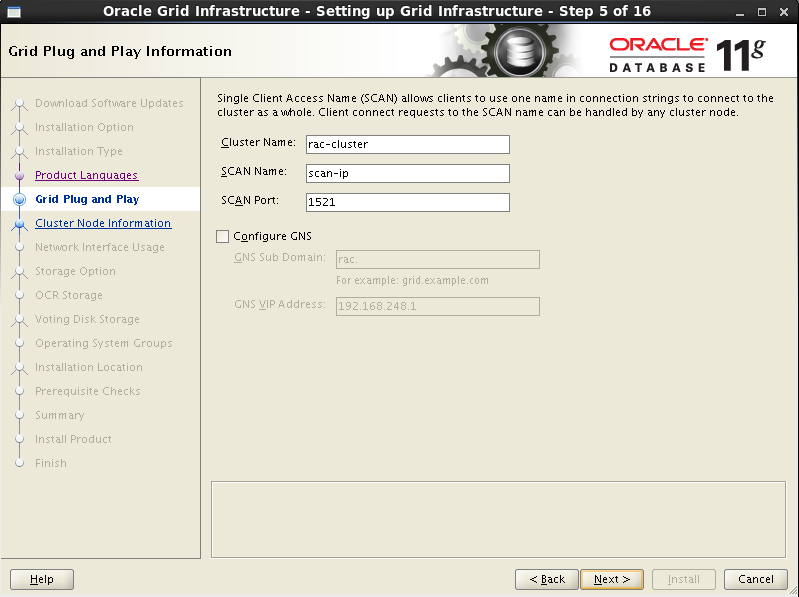

定義叢集名字,SCAN Name 為hosts中定義的scan-ip,取消GNS

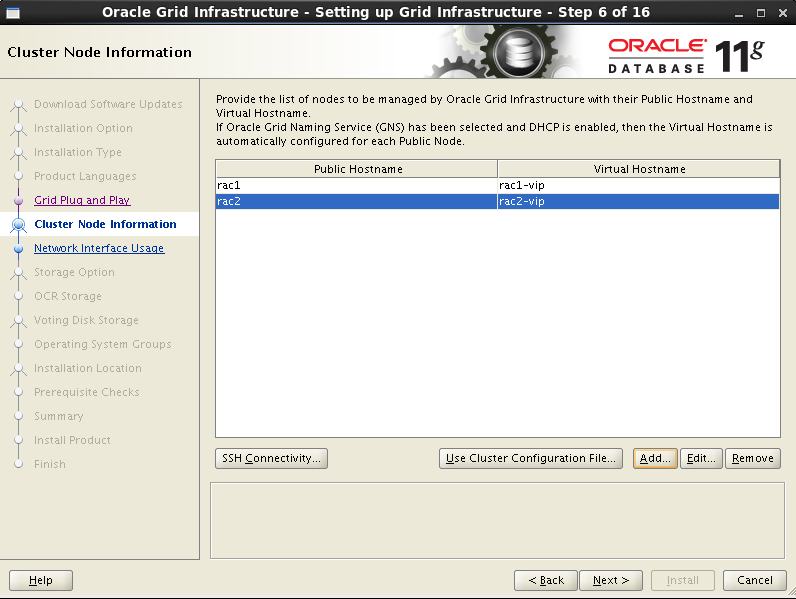

介面只有第一個節點rac1,點選“Add”把第二個節點rac2加上

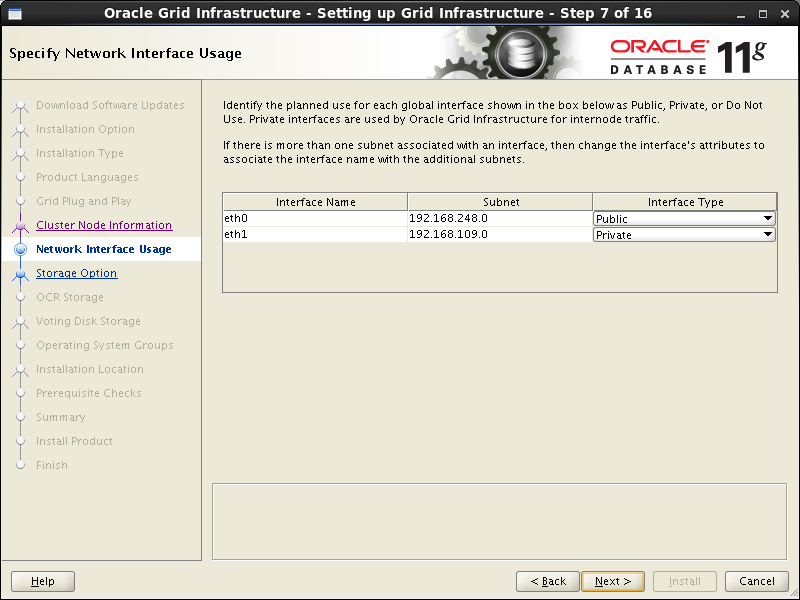

選擇網絡卡

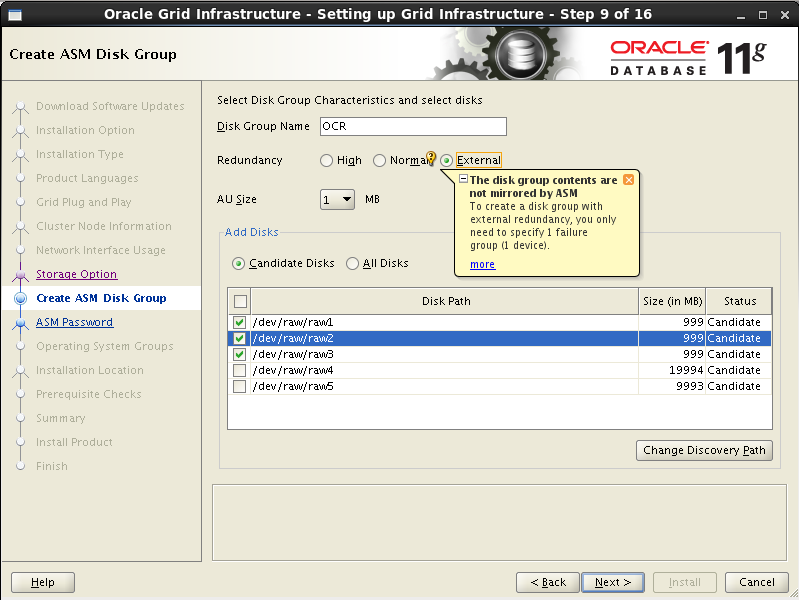

配置ASM,這裡選擇前面配置的裸盤raw1,raw2,raw3,冗餘方式為External即不冗餘。因為是不用於,所以也可以只選一個裝置。這裡的裝置是用來做OCR註冊盤和votingdisk投票盤的。 這裡只選擇OCR硬碟,安裝完集群后,會把其他的硬碟加上

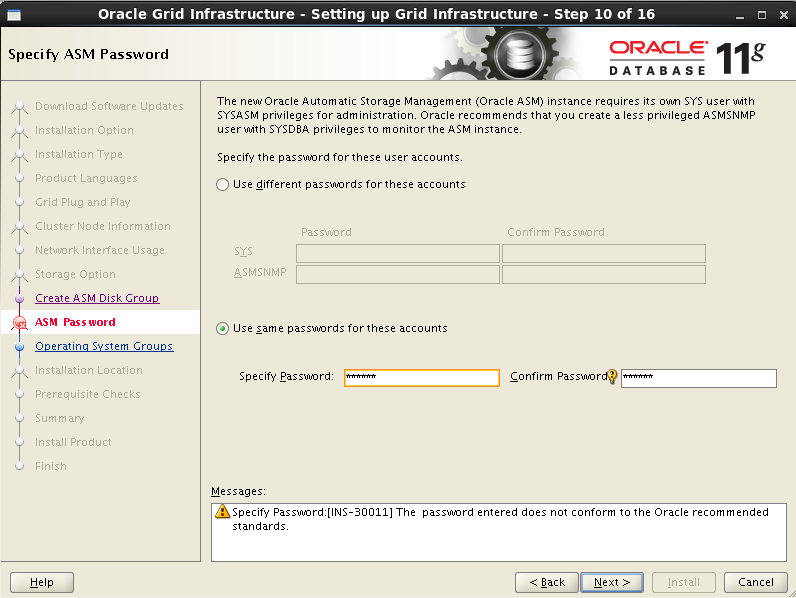

配置ASM例項需要為具有sysasm許可權的sys使用者,具有sysdba許可權的asmsnmp使用者設定密碼,這裡設定統一密碼為oracle,會提示密碼不符合標準,點選OK即可

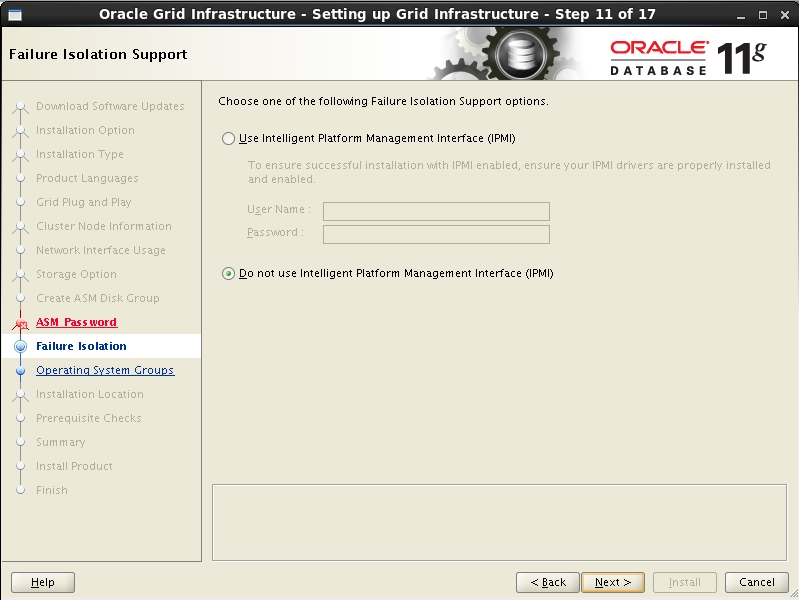

不選擇智慧管理

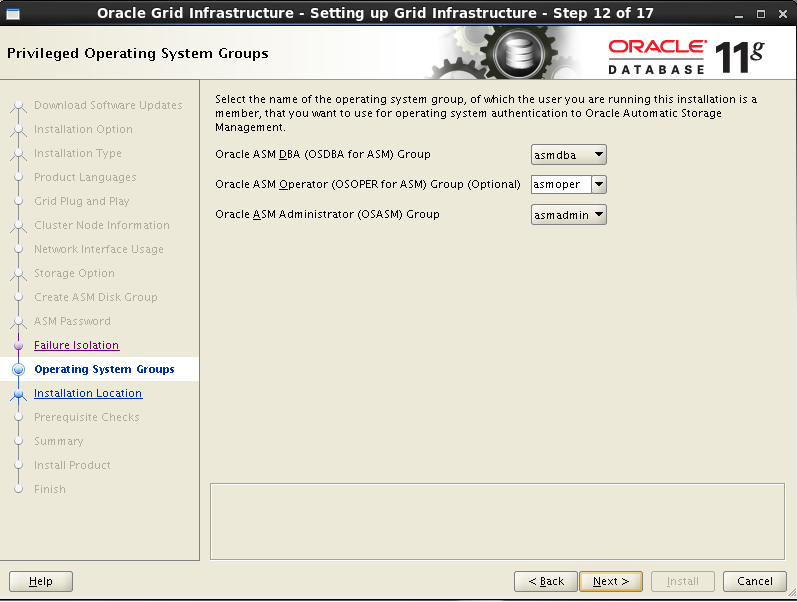

檢查ASM例項許可權分組情況

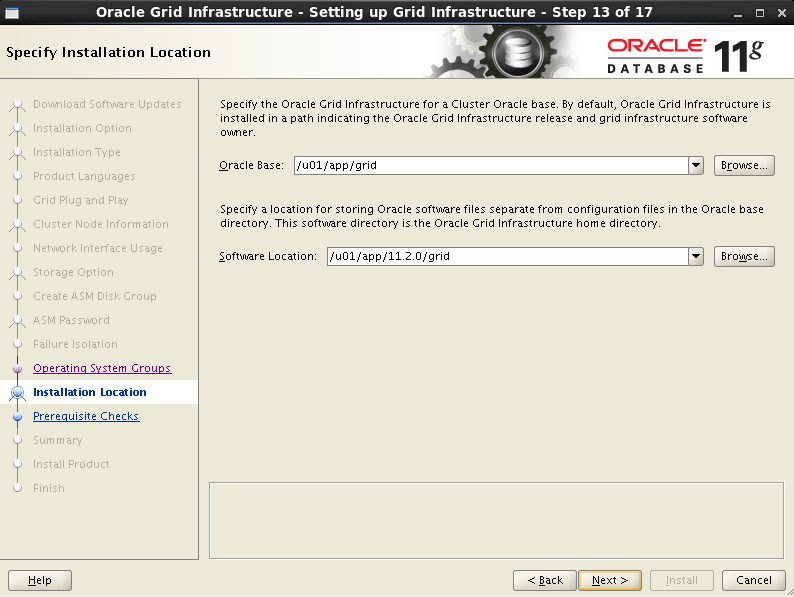

選擇grid軟體安裝路徑和base目錄

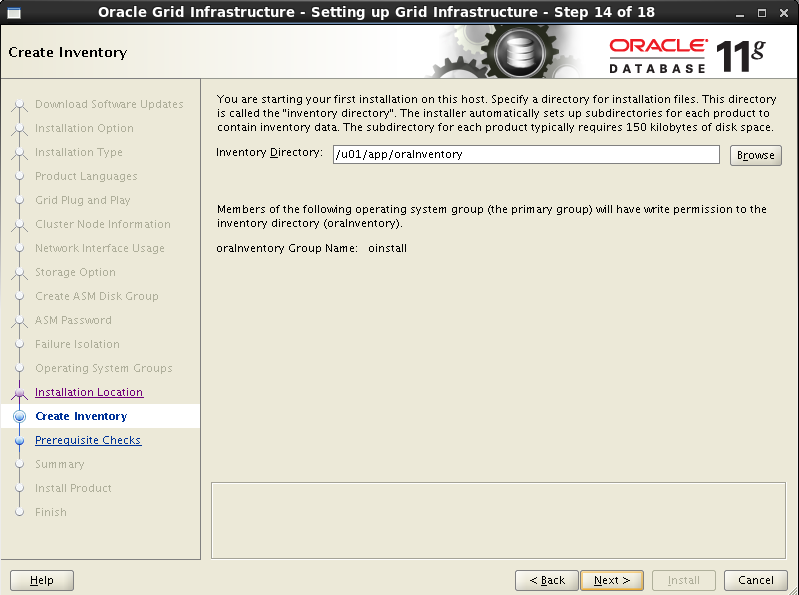

選擇grid安裝清單目錄

環境檢測出現resolv.conf錯誤,是因為沒有配置DNS,可以忽略

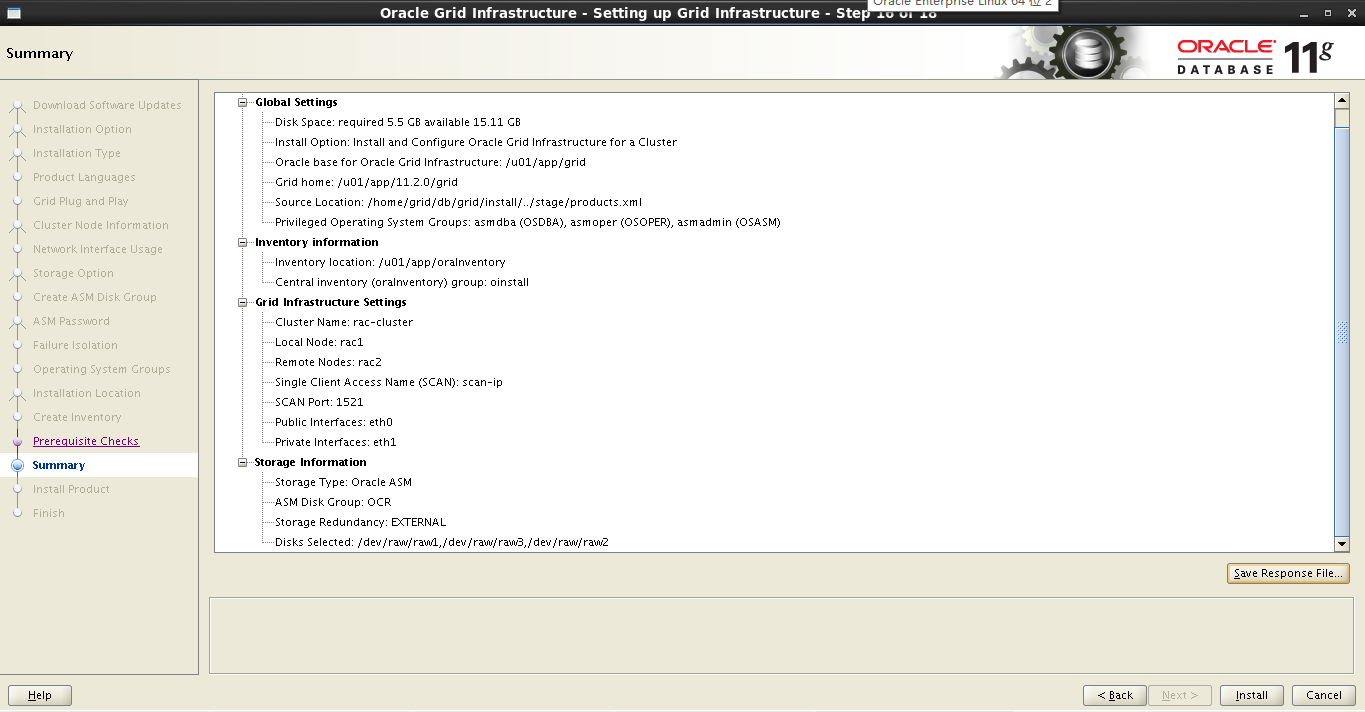

安裝grid概要

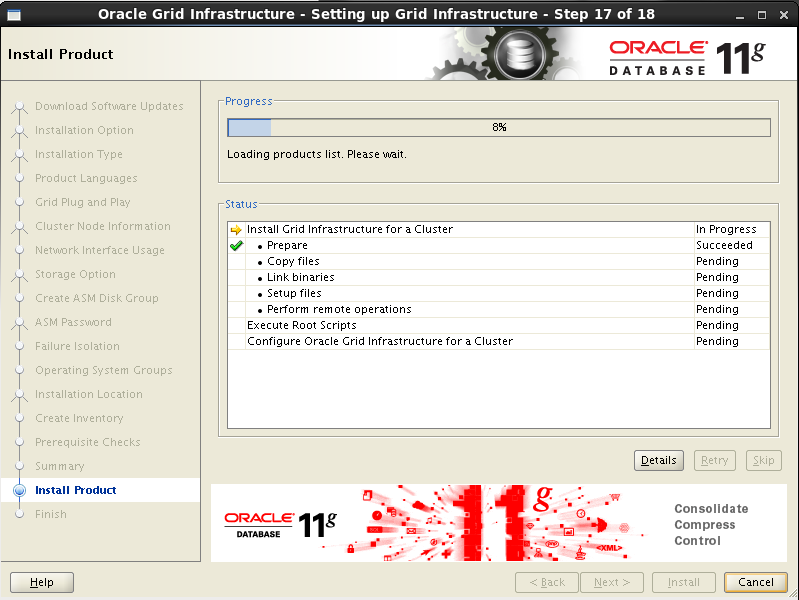

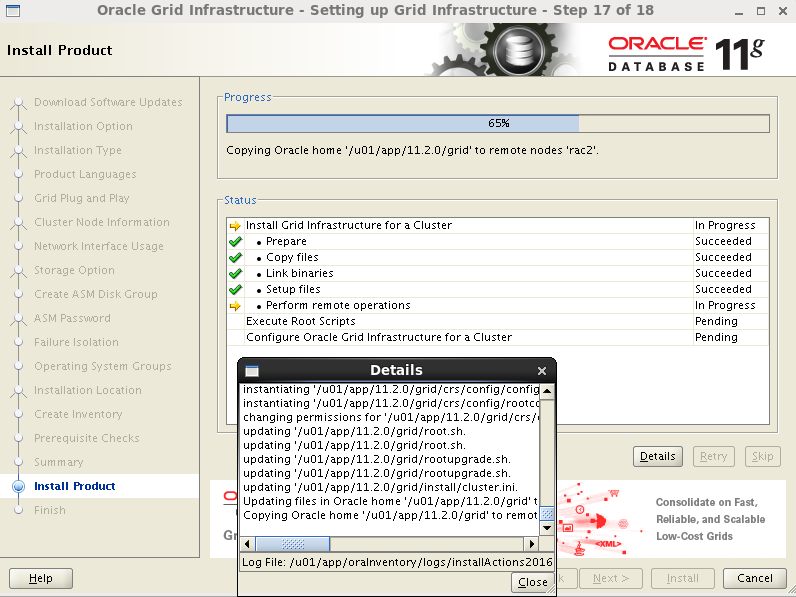

開始安裝

複製安裝到其他節點

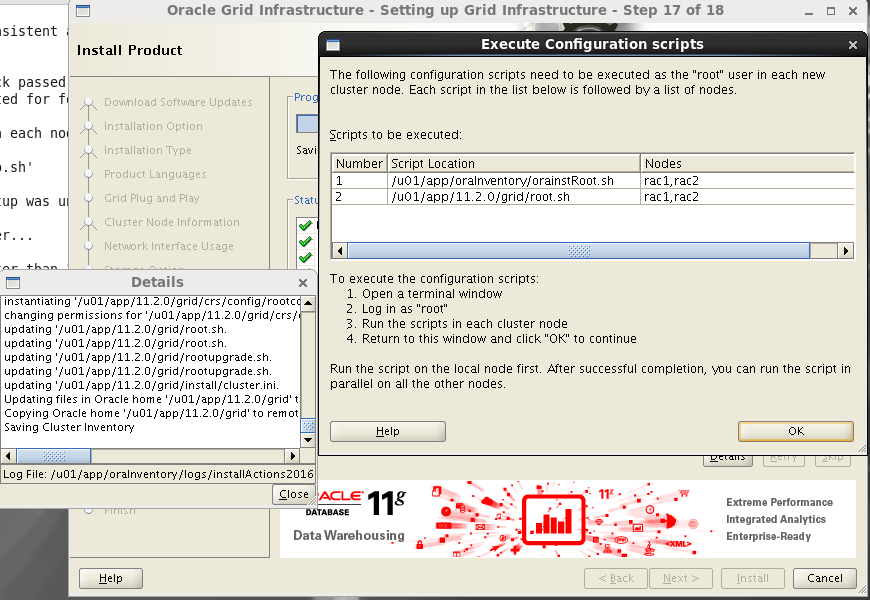

安裝grid完成,提示需要root使用者依次執行指令碼orainstRoot.sh ,root.sh (一定要先在rac1執行完指令碼後,才能在其他節點執行)

在rac1中執行指令碼

[[email protected] rpm]# /u01/app/oraInventory/orainstRoot.sh

Changing permissions of /u01/app/oraInventory.

Adding read,write permissions for group.

Removing read,write,execute permissions for world.

Changing groupname of /u01/app/oraInventory to oinstall.

The execution of the script is complete.

[root@rac1 rpm]# /u01/app/

11.2.0/ grid/ oracle/ oraInventory/

[root@rac1 rpm]# /u01/app/11.2.0/grid/root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u01/app/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /u01/app/11.2.0/grid/crs/install/crsconfig_params

Creating trace directory

User ignored Prerequisites during installation

OLR initialization - successful

root wallet

root wallet cert

root cert export

peer wallet

profile reader wallet

pa wallet

peer wallet keys

pa wallet keys

peer cert request

pa cert request

peer cert

pa cert

peer root cert TP

profile reader root cert TP

pa root cert TP

peer pa cert TP

pa peer cert TP

profile reader pa cert TP

profile reader peer cert TP

peer user cert

pa user cert

Adding Clusterware entries to upstart

CRS-2672: Attempting to start 'ora.mdnsd' on 'rac1'

CRS-2676: Start of 'ora.mdnsd' on 'rac1' succeeded

CRS-2672: Attempting to start 'ora.gpnpd' on 'rac1'

CRS-2676: Start of 'ora.gpnpd' on 'rac1' succeeded

CRS-2672: Attempting to start 'ora.cssdmonitor' on 'rac1'

CRS-2672: Attempting to start 'ora.gipcd' on 'rac1'

CRS-2676: Start of 'ora.cssdmonitor' on 'rac1' succeeded

CRS-2676: Start of 'ora.gipcd' on 'rac1' succeeded

CRS-2672: Attempting to start 'ora.cssd' on 'rac1'

CRS-2672: Attempting to start 'ora.diskmon' on 'rac1'

CRS-2676: Start of 'ora.diskmon' on 'rac1' succeeded

CRS-2676: Start of 'ora.cssd' on 'rac1' succeeded

ASM created and started successfully.

Disk Group OCR created successfully.

clscfg: -install mode specified

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

CRS-4256: Updating the profile

Successful addition of voting disk 496abcfc4e214fc9bf85cf755e0cc8e2.

Successfully replaced voting disk group with +OCR.

CRS-4256: Updating the profile

CRS-4266: Voting file(s) successfully replaced

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 496abcfc4e214fc9bf85cf755e0cc8e2 (/dev/raw/raw1) [OCR]

Located 1 voting disk(s).

CRS-2672: Attempting to start 'ora.asm' on 'rac1'

CRS-2676: Start of 'ora.asm' on 'rac1' succeeded

CRS-2672: Attempting to start 'ora.OCR.dg' on 'rac1'

CRS-2676: Start of 'ora.OCR.dg' on 'rac1' succeeded

Configure Oracle Grid Infrastructure for a Cluster ... succeeded

Run the scripts on rac2:

[root@rac2 grid]# /u01/app/oraInventory/orainstRoot.sh

Changing permissions of /u01/app/oraInventory.

Adding read,write permissions for group.

Removing read,write,execute permissions for world.

Changing groupname of /u01/app/oraInventory to oinstall.

The execution of the script is complete.

[root@rac2 grid]# /u01/app/11.2.0/grid/root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u01/app/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /u01/app/11.2.0/grid/crs/install/crsconfig_params

Creating trace directory

User ignored Prerequisites during installation

OLR initialization - successful

Adding Clusterware entries to upstart

CRS-4402: The CSS daemon was started in exclusive mode but found an active CSS daemon on node rac1, number 1, and is terminating

An active cluster was found during exclusive startup, restarting to join the cluster

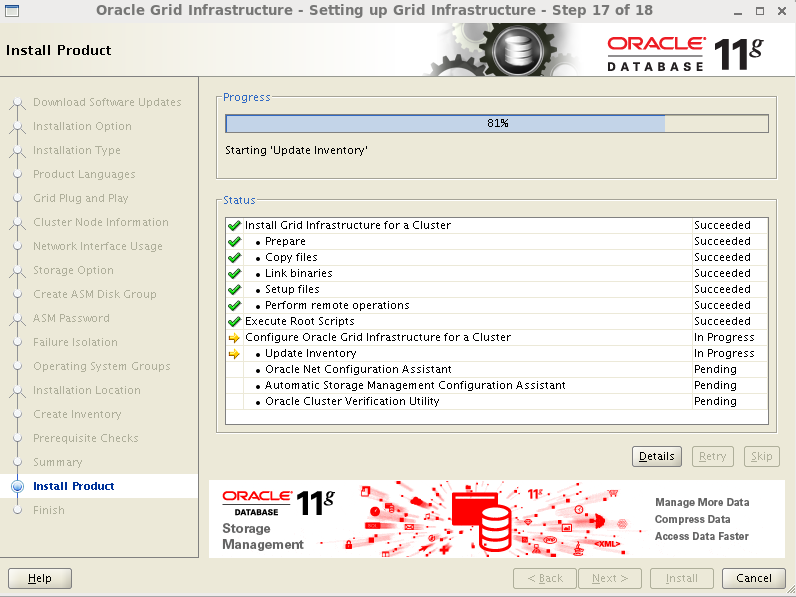

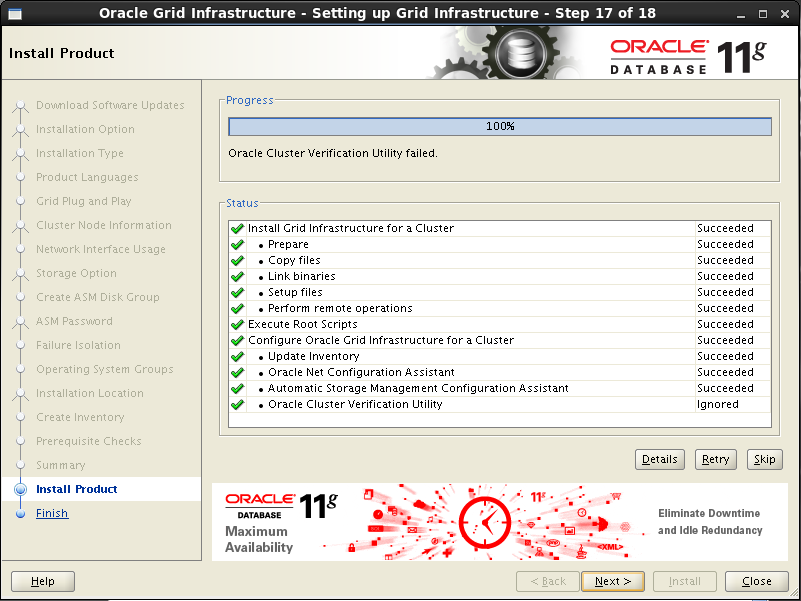

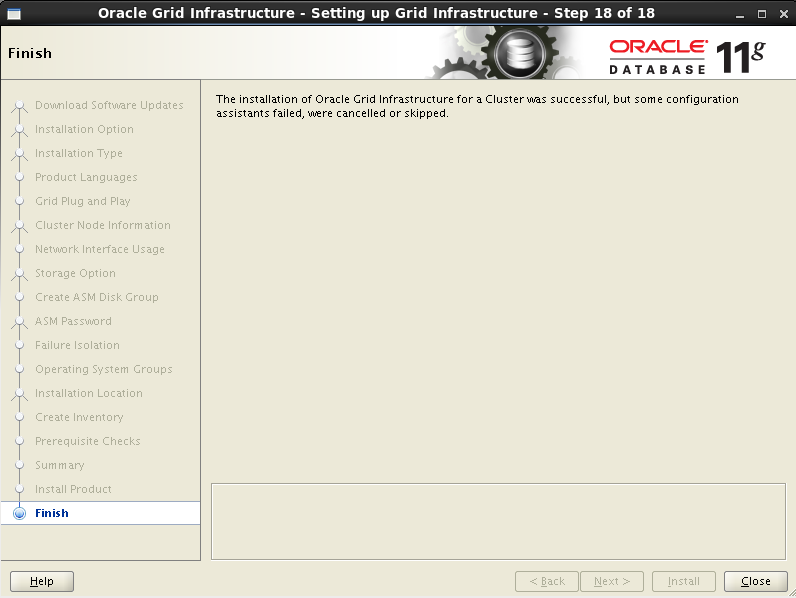

Configure Oracle Grid Infrastructure for a Cluster ... succeeded

When the scripts have finished, click OK, then Next.

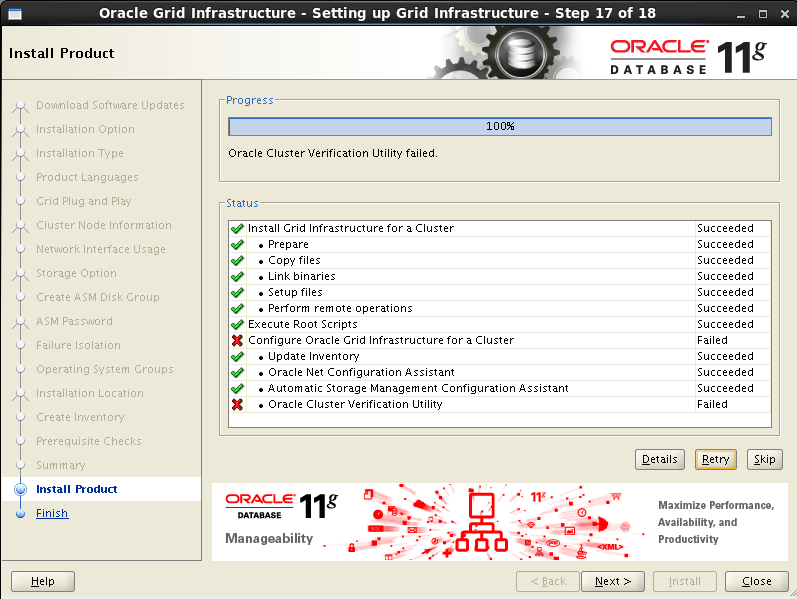

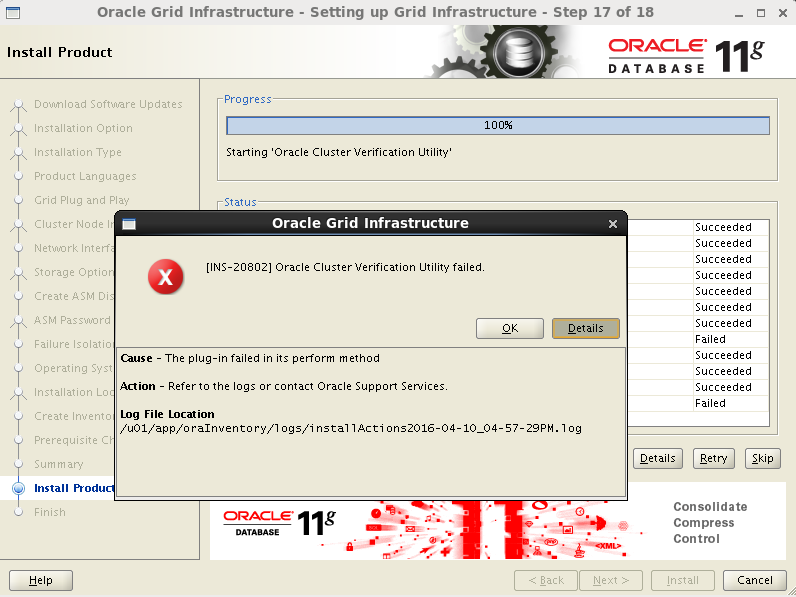

An error appears at this point.

Check the log named in the message:

[grid@rac1 grid]$ vi /u01/app/oraInventory/logs/installActions2016-04-10_04-57-29PM.log

In vi command mode, search for errors with /ERROR:

WARNING:

INFO: Completed Plugin named: Oracle Cluster Verification Utility

INFO: Checking name resolution setup for "scan-ip"...

INFO: ERROR:

INFO: PRVG-1101 : SCAN name "scan-ip" failed to resolve

INFO: ERROR:

INFO: PRVF-4657 : Name resolution setup check for "scan-ip" (IP address: 192.168.248.110) failed

INFO: ERROR:

INFO: PRVF-4664 : Found inconsistent name resolution entries for SCAN name "scan-ip"

INFO: Verification of SCAN VIP and Listener setup failed

The log shows that the error comes from resolv.conf not being configured, so it can be ignored. If you do not want to see this error later, rename /etc/resolv.conf to resolv.conf.bak.

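Installation complete.

Grid inventory location

This completes the installation of the grid cluster software.

2. Resource checks after installing grid

Run the following commands as the grid user.

[root@rac1 ~]# su - grid

Check the CRS status: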

[grid@rac1 ~]$ crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

Check the Clusterware resources:

[grid@rac1 ~]$ crs_stat -t -v

Name Type R/RA F/FT Target State Host

----------------------------------------------------------------------

ora....ER.lsnr ora....er.type 0/5 0/ ONLINE ONLINE rac1

ora....N1.lsnr ora....er.type 0/5 0/0 ONLINE ONLINE rac1

ora.OCR.dg ora....up.type 0/5 0/ ONLINE ONLINE rac1

ora.asm ora.asm.type 0/5 0/ ONLINE ONLINE rac1

ora.cvu ora.cvu.type 0/5 0/0 ONLINE ONLINE rac1

ora.gsd ora.gsd.type 0/5 0/ OFFLINE OFFLINE

ora....network ora....rk.type 0/5 0/ ONLINE ONLINE rac1

ora.oc4j ora.oc4j.type 0/1 0/2 ONLINE ONLINE rac1

ora.ons ora.ons.type 0/3 0/ ONLINE ONLINE rac1

ora....SM1.asm application 0/5 0/0 ONLINE ONLINE rac1

ora....C1.lsnr application 0/5 0/0 ONLINE ONLINE rac1

ora.rac1.gsd application 0/5 0/0 OFFLINE OFFLINE

ora.rac1.ons application 0/3