Fitting a normal distribution curve with the PyTorch Adam algorithm

阿新 • Published: 2019-04-23

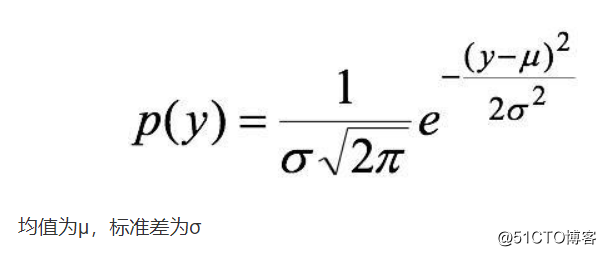

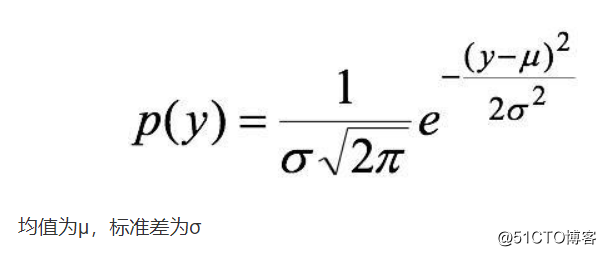

We have transaction-volume data whose distribution looks approximately normal. To size machine capacity sensibly, we need to analyze this data and find the specific parameters of the distribution.

Written in Python, the normal probability density function is:

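```python
import numpy as np

# Normal probability density function with mean mu and standard deviation sigma
def normfun(x, mu, sigma):
    return np.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * np.sqrt(2 * np.pi))
```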
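Here is a quick numerical sanity check of `normfun`. It is a sketch: the values mu=143 and sigma=81 are arbitrary, chosen to be near the article's result. A density should enclose an area of about 1 and should peak at x = mu with height 1/(sigma·√(2π)).

```python
import numpy as np

def normfun(x, mu, sigma):
    # Normal probability density function with mean mu and standard deviation sigma
    return np.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * np.sqrt(2 * np.pi))

# Illustrative parameters (arbitrary), evaluated on a fine grid covering mu +/- 10 sigma
mu, sigma = 143.0, 81.0
xs = np.linspace(mu - 10 * sigma, mu + 10 * sigma, 200001)
ys = normfun(xs, mu, sigma)

# Trapezoidal rule: the area under a probability density should be close to 1
area = np.sum((ys[1:] + ys[:-1]) / 2 * np.diff(xs))
# The curve should peak at x = mu with height 1 / (sigma * sqrt(2*pi))
peak_x = xs[np.argmax(ys)]
peak_y = ys.max()
print(area, peak_x, peak_y)
```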

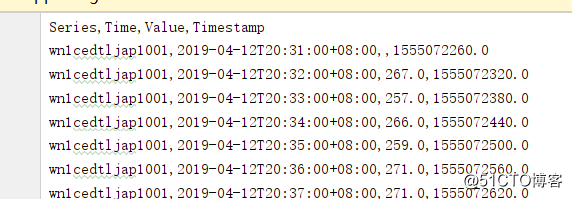

The data to be fitted has the following format:

`Value` is the transaction volume and `Timestamp` is the timestamp.

We take the data from one day's morning peak:

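```python
import numpy as np
import pandas as pd

df = pd.read_csv('trans_grafana_data_export.csv')
df = df.fillna(0)  # treat missing samples as zero
cpu = np.array(df['Value'].tolist()[3118:4279])  # rows 3118-4278: the morning-peak window
```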
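The original `trans_grafana_data_export.csv` is not published. The sketch below builds a hypothetical stand-in with the same `Timestamp`/`Value` columns, so the loading steps can be tried. The sample rate (one sample per minute), the peak shape and the 07:00-12:00 window are all made-up assumptions. The sketch also shows that slicing by row position, which is what the article does, can be replaced by selecting the morning peak by clock time.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for trans_grafana_data_export.csv: one sample per minute
# for a day, with a made-up bell-shaped morning peak plus noise.
rng = np.random.default_rng(0)
n = 1440  # minutes in one day
t = np.arange(n)
peak = 3000 * np.exp(-((t - 540) ** 2) / (2 * 80 ** 2))  # centred on minute 540 = 09:00
values = np.clip(peak + rng.normal(0, 50, n), 0, None)
df = pd.DataFrame({
    "Timestamp": pd.date_range("2019-04-22", periods=n, freq="min"),
    "Value": values,
})
df.loc[100, "Value"] = np.nan  # simulate a gap in the export

# Same cleaning as the article: missing samples become 0
df = df.fillna(0)

# Option 1: slice by row position, equivalent to df['Value'].tolist()[start:stop]
start, stop = 420, 720
cpu_by_position = df["Value"].iloc[start:stop].to_numpy()

# Option 2: select the morning peak by clock time (07:00-11:59) instead of row number
hours = df["Timestamp"].dt.hour
cpu_by_time = df.loc[(hours >= 7) & (hours < 12), "Value"].to_numpy()

print(len(cpu_by_position), len(cpu_by_time))
```

Selecting by time keeps the window correct even if the export starts at a different moment. Selecting by row offsets depends on the exact row layout of the file.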

Next, build the model and start training:

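```python
import numpy as np
import torch

# The three parameters are mu, sigma and lambda (the amplitude scale), stored as x[0], x[1], x[2]
loss_fn = torch.nn.MSELoss()

def minnormfun(x, cpuindex, cpu):
    # Normal pdf at every sample index, using the current mu = x[0] and sigma = x[1]
    pdf = torch.exp(-((cpuindex - x[0]) ** 2) / (2 * x[1] ** 2)) / (x[1] * np.sqrt(2 * np.pi))
    # Mean squared error between the scaled curve lambda * pdf and the observed values
    return loss_fn(pdf * x[2], cpu)
```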
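In formula form, `minnormfun` minimizes the loss below over $\mu$ = `x[0]`, $\sigma$ = `x[1]` and $\lambda$ = `x[2]`. Here $t_i$ is the sample index (`cpuindex`) and $y_i$ is the observed value (`cpu`). This assumes the default `reduction='mean'` of `torch.nn.MSELoss`, which the code does not override:

$$
\hat{y}_i = \lambda \cdot \frac{1}{\sigma\sqrt{2\pi}} \exp\left(-\frac{(t_i-\mu)^2}{2\sigma^2}\right),
\qquad
L(\mu,\sigma,\lambda) = \frac{1}{N}\sum_{i=0}^{N-1}\left(\hat{y}_i - y_i\right)^2
$$

The density factor integrates to 1, so $\lambda$ is the area under the fitted curve. When most of the bell lies inside the window and samples are one index apart, $\lambda \approx \sum_i y_i$. This gives a rough sanity check on the fitted value.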

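```python
import numpy as np
import torch

# Use gradient descent (Adam) to find the three parameters.
# Training code. The original ran this inside `with torch.cuda.device(0):`, which only selects
# the current GPU; the tensors here are created on the CPU, so that wrapper is omitted.
# Assumes df and minnormfun from the previous snippets.
x = torch.tensor([100., 100., 100.], dtype=torch.float64, requires_grad=True)  # initial [mu, sigma, lambda]
cpu = np.array(df['Value'].tolist()[3586:3860]) / 300.0  # training window, scaled down by 300
cpuindex = np.arange(0., len(cpu))
cpu = torch.from_numpy(cpu)
cpuindex = torch.from_numpy(cpuindex)
optimizer = torch.optim.Adam([x], lr=1e-3)

for step in range(200000):
    pred = minnormfun(x, cpuindex, cpu)
    optimizer.zero_grad()
    pred.backward()
    optimizer.step()
    if step % 2000 == 0:
        print('step{}: x={}, f(x)={}'.format(step, x.tolist(), pred.item()))
```

The three parameters obtained are x = [142.95024248449738, 81.2642226162778, 189.98641718925109].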
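To try the fitting loop without the original CSV, here is a self-contained sketch on hypothetical, noise-free data. The data is generated from known parameters (140, 80, 190), chosen to be roughly the size of the article's result. The sketch keeps the article's model, MSE loss, Adam optimizer and [100, 100, 100] starting point. It assumes a larger learning rate (0.1) and fewer steps (20000) than the article's 1e-3 and 200000; those two settings belong to this sketch, not to the author.

```python
import math
import torch

# Same model and loss as the article: lambda * N(t; mu, sigma), mean squared error
loss_fn = torch.nn.MSELoss()

def minnormfun(x, cpuindex, cpu):
    pdf = torch.exp(-((cpuindex - x[0]) ** 2) / (2 * x[1] ** 2)) / (x[1] * math.sqrt(2 * math.pi))
    return loss_fn(pdf * x[2], cpu)

# Hypothetical noise-free target; 274 samples, the length of the article's 3586:3860 window
true_mu, true_sigma, true_lam = 140.0, 80.0, 190.0
cpuindex = torch.arange(0.0, 274.0, dtype=torch.float64)
cpu = true_lam * torch.exp(-((cpuindex - true_mu) ** 2) / (2 * true_sigma ** 2)) / (
    true_sigma * math.sqrt(2 * math.pi)
)

# Start from the article's initial guess; lr and step count are assumptions of this sketch
x = torch.tensor([100.0, 100.0, 100.0], dtype=torch.float64, requires_grad=True)
optimizer = torch.optim.Adam([x], lr=0.1)

for step in range(20000):
    loss = minnormfun(x, cpuindex, cpu)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    if step % 5000 == 0:
        print(f"step {step}: x={x.tolist()}, loss={loss.item():.3e}")

mu, sigma, lam = x.tolist()
print(mu, sigma, lam)
```

Adam rescales each coordinate's step, so with parameters on the order of 100 a learning rate near 0.1 moves them noticeably per step. With real, noisy data the loss will not reach zero, and the learning rate and step count may need tuning.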

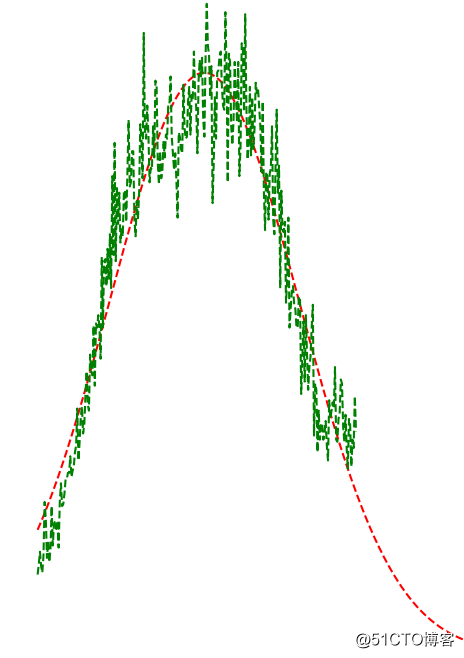

Verifying the fit

The complete code:

```python
import numpy as np
import matplotlib.pyplot as plt
import pandas as pd
import torch

# Normal probability density function: think of it as a function of x parameterized by mu (mean) and sigma (standard deviation)
def normfun(x, mu, sigma):
    return np.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * np.sqrt(2 * np.pi))

df = pd.read_csv('trans_grafana_data_export.csv')
df = df.fillna(0)
cpu = np.array(df['Value'].tolist()[3118:4279])
x = np.arange(0., len(cpu))
# plt.plot(x, cpu, 'r--')
# plt.show()

# The three parameters are mu, sigma and lambda (the amplitude scale)
loss_fn = torch.nn.MSELoss()

def minnormfun(x, cpuindex, cpu):
    pdf = torch.exp(-((cpuindex - x[0]) ** 2) / (2 * x[1] ** 2)) / (x[1] * np.sqrt(2 * np.pi))
    return loss_fn(pdf * x[2], cpu)

# Use gradient descent (Adam) to find the three parameters.
# Training code (run once, then commented out; its result is hard-coded below):
# x = torch.tensor([100., 100., 100.], dtype=torch.float64, requires_grad=True)
# cpu = np.array(df['Value'].tolist()[3586:3860]) / 300.0
# cpuindex = torch.from_numpy(np.arange(0., len(cpu)))
# cpu = torch.from_numpy(cpu)
# optimizer = torch.optim.Adam([x], lr=1e-3)
# for step in range(200000):
#     pred = minnormfun(x, cpuindex, cpu)
#     optimizer.zero_grad()
#     pred.backward()
#     optimizer.step()
#     if step % 2000 == 0:
#         print('step{}: x={}, f(x)={}'.format(step, x.tolist(), pred.item()))
# Result: x = [142.95024248449738, 81.2642226162778, 189.98641718925109]

mu, sigma, lam = 142.95024248449738, 81.2642226162778, 189.98641718925109
# Fitted curve (red): lambda * pdf, multiplied back by 300 because the training data was divided by 300
cpuindex = np.arange(0., len(cpu))
cpu = normfun(cpuindex, mu, sigma) * lam * 300
plt.plot(cpuindex, cpu, 'r--')
# Actual data (green): the 3586:3860 window used for training
cpu = np.array(df['Value'].tolist()[3586:3860])
cpuindex = np.arange(0., len(cpu))
plt.plot(cpuindex, cpu, 'g--')
plt.show()
```
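The fit above is judged by eye from the plot. A numeric measure can complement that, and the sketch below computes the RMSE and the coefficient of determination R² through a hypothetical helper, `fit_metrics`. The original CSV is not available, so the "observed" series here is also hypothetical: it is the article's fitted curve plus Gaussian noise with standard deviation 10, so the RMSE should come out near 10. With real data, pass the actual training window (the 3586:3860 slice) as `observed`.

```python
import numpy as np

def normfun(x, mu, sigma):
    # Normal probability density function with mean mu and standard deviation sigma
    return np.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * np.sqrt(2 * np.pi))

def fit_metrics(observed, fitted):
    # Hypothetical helper: RMSE (same units as the data) and R^2 = 1 - SS_res / SS_tot
    residual = observed - fitted
    rmse = float(np.sqrt(np.mean(residual ** 2)))
    ss_res = float(np.sum(residual ** 2))
    ss_tot = float(np.sum((observed - observed.mean()) ** 2))
    return rmse, 1.0 - ss_res / ss_tot

# Fitted curve from the article's parameters, rescaled by 300 as in the plotting code
mu, sigma, lam = 142.95024248449738, 81.2642226162778, 189.98641718925109
cpuindex = np.arange(0.0, 274.0)
fitted = normfun(cpuindex, mu, sigma) * lam * 300

# Hypothetical observations: the fitted curve plus Gaussian noise (the real CSV is not available)
rng = np.random.default_rng(42)
observed = fitted + rng.normal(0.0, 10.0, size=fitted.shape)

rmse, r2 = fit_metrics(observed, fitted)
print(f"RMSE={rmse:.2f}, R^2={r2:.4f}")
```

An R² close to 1 means the normal curve explains most of the variation in the window. A clearly lower value suggests the data is not well described by a single bell shape.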
