Hadoop Cluster Setup - 04: Installing and Configuring HDFS

HDFS is the distributed file system used with Hadoop. Its nodes are divided into:

namenode: nn1.hadoop nn2.hadoop

datanode: s1.hadoop s2.hadoop s3.hadoop

(If you are not sure what these five virtual machines are, see the earlier part, 01 Preparation.)

Extract the configuration files

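# Back up the original configuration directory on every node

[hadoop@nn1 hadoop_base_op]$ ./ssh_all.sh mv /usr/local/hadoop/etc/hadoop /usr/local/hadoop/etc/hadoop_back

# Copy the custom configuration archive to /tmp on every node

[hadoop@nn1 hadoop_base_op]$ ./scp_all.sh ../up/hadoop.tar.gz /tmp/

[hadoop@nn1 hadoop_base_op]$ # Batch-extract the custom configuration archive into /usr/local/hadoop/etc/

# Batch-check that the configuration was extracted correctly

[hadoop@nn1 hadoop_base_op]$ ./ssh_all.sh head /usr/local/hadoop/etc/hadoop/hadoop-env.sh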
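The extraction step above is only described in a comment. Here is a minimal single-host sketch of it, assuming the archive holds a top-level `hadoop/` directory (which is what the `head .../etc/hadoop/hadoop-env.sh` check implies). `extract_hadoop_conf` is a hypothetical helper, not one of the article's scripts.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the custom config archive on ONE host.
# Assumption: the archive contains a top-level hadoop/ directory, so
# extracting it into .../etc/ recreates .../etc/hadoop/.
extract_hadoop_conf() {
  local archive="$1"                          # e.g. /tmp/hadoop.tar.gz
  local etc_dir="${2:-/usr/local/hadoop/etc}"
  tar -xzf "$archive" -C "$etc_dir" || return 1
  # Same sanity check as the article: hadoop-env.sh must now exist.
  if [ -f "$etc_dir/hadoop/hadoop-env.sh" ]; then
    echo "ok: $etc_dir/hadoop/hadoop-env.sh"
  else
    echo "missing: $etc_dir/hadoop/hadoop-env.sh" >&2
    return 1
  fi
}
```

Across all nodes, the equivalent would presumably be `./ssh_all.sh tar -xzf /tmp/hadoop.tar.gz -C /usr/local/hadoop/etc/`, assuming ssh_all.sh forwards its arguments as one remote command, the way it does for `mv` and `head` above.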

[hadoop@nn1 hadoop_base_op]$ ./ssh_root.sh chown -R hadoop:hadoop /usr/local/hadoop/etc/hadoop

[hadoop@nn1 hadoop_base_op]$ ./ssh_root.sh chmod -R 770 /usr/local/hadoop/etc/hadoop

Initialize HDFS

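Procedure:

- Start ZooKeeper

- Start the JournalNodes

- Initialize the HA information in ZooKeeper (and check it with the ZooKeeper client)

- Format the NameNode on nn1

- Start the NameNode on nn1

- On nn2, sync the standby NameNode from nn1

- Start the NameNode on nn2

- Start ZKFC

- Start the DataNodes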
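The procedure above can be summarized as a dry-run script that only prints which command runs on which host, in order; it executes nothing. The function name and the first-run flag are illustrative; the commands themselves are the ones used in the steps of this article.

```shell
#!/usr/bin/env bash
# Dry-run sketch: prints the HDFS HA startup order as "host: command".
# Nothing is executed. Pass 1 to include the one-time initialization steps.
print_hdfs_startup_plan() {
  local first_run="${1:-0}"
  echo "nn1.hadoop: ./zk_ssh_all.sh /usr/local/zookeeper/bin/zkServer.sh start"
  echo "nn1.hadoop: hadoop-daemon.sh start journalnode"
  echo "nn2.hadoop: hadoop-daemon.sh start journalnode"
  if [ "$first_run" = "1" ]; then
    echo "nn1.hadoop: hdfs zkfc -formatZK"            # step 3, first run only
    echo "nn1.hadoop: hadoop namenode -format"        # step 4, first run only
  fi
  echo "nn1.hadoop: hadoop-daemon.sh start namenode"
  if [ "$first_run" = "1" ]; then
    echo "nn2.hadoop: hadoop namenode -bootstrapStandby"  # step 6, first run only
  fi
  echo "nn2.hadoop: hadoop-daemon.sh start namenode"
  echo "nn1.hadoop: hadoop-daemon.sh start zkfc"
  echo "nn2.hadoop: hadoop-daemon.sh start zkfc"
  echo "active namenode: hadoop-daemons.sh start datanode"
}
```

`print_hdfs_startup_plan 1` lists the full first-time sequence; `print_hdfs_startup_plan 0` leaves out steps 3, 4 and 6, which the article marks as first-run only.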

1. Check the ZooKeeper status

[hadoop@nn1 zk_op]$ ./zk_ssh_all.sh /usr/local/zookeeper/bin/zkServer.sh status

ssh hadoop@"nn1.hadoop" "/usr/local/zookeeper/bin/zkServer.sh status"

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: follower

OK!

ssh hadoop@"nn2.hadoop" "/usr/local/zookeeper/bin/zkServer.sh status"

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: leader

OK!

ssh hadoop@"s1.hadoop" "/usr/local/zookeeper/bin/zkServer.sh status"

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Mode: follower

OK!
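Reading this output by eye works for three servers; a small function can do the same check on the combined output. A minimal sketch, assuming the format shown above (one `Mode: ...` line per server); `check_zk_quorum` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Reads combined `zkServer.sh status` output on stdin and succeeds only if
# exactly one server reports "Mode: leader" and all others "Mode: follower".
check_zk_quorum() {
  local input leaders followers total
  input=$(cat)
  # grep -c prints 0 (and exits 1) when nothing matches; `|| true` keeps the 0.
  total=$(grep -c '^Mode: ' <<< "$input" || true)
  leaders=$(grep -c '^Mode: leader' <<< "$input" || true)
  followers=$(grep -c '^Mode: follower' <<< "$input" || true)
  echo "servers=$total leaders=$leaders followers=$followers"
  [ "$total" -ge 1 ] && [ "$leaders" -eq 1 ] && [ "$followers" -eq $((total - 1)) ]
}

# Usage (on nn1, in the zk_op directory):
#   ./zk_ssh_all.sh /usr/local/zookeeper/bin/zkServer.sh status 2>&1 | check_zk_quorum
```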

Two followers and one leader means ZooKeeper is running normally. If not, start it with the command below:

[hadoop@nn1 zk_op]$ ./zk_ssh_all.sh /usr/local/zookeeper/bin/zkServer.sh start

2. Start the JournalNodes

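The JournalNodes are what keep the two NameNodes in sync: the active NameNode writes its edit log to them, and the standby NameNode reads the edits back from them.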

# Start the JournalNode on nn1

[hadoop@nn1 zk_op]$ hadoop-daemon.sh start journalnode

# Start the JournalNode on nn2

[hadoop@nn2 zk_op]$ hadoop-daemon.sh start journalnode
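The two start commands differ only in the host they run on, so they can be driven from one machine. A minimal sketch, assuming passwordless ssh for the hadoop user (which ssh_all.sh and zk_ssh_all.sh already rely on) and that hadoop-daemon.sh is on the PATH of a non-interactive remote shell; `start_journalnodes` and `DRY_RUN` are hypothetical names.

```shell
#!/usr/bin/env bash
# Starts the JournalNode on each given host over ssh.
# With DRY_RUN=1 it only prints the ssh commands instead of running them.
start_journalnodes() {
  local host
  for host in "$@"; do
    local cmd=(ssh "hadoop@$host" "hadoop-daemon.sh start journalnode")
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "${cmd[*]}"
    else
      "${cmd[@]}" || { echo "failed on $host" >&2; return 1; }
    fi
  done
}

# Usage:
#   start_journalnodes nn1.hadoop nn2.hadoop
```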

# Check each node's log to confirm that the JournalNode started

[hadoop@nn1 zk_op]$ tail /usr/local/hadoop-2.7.3/logs/hadoop-hadoop-journalnode-nn1.hadoop.log

2019-07-22 17:15:54,164 INFO org.apache.hadoop.ipc.Server: Starting Socket Reader #1 for port 8485

2019-07-22 17:15:54,190 INFO org.apache.hadoop.ipc.Server: IPC Server Responder: starting

2019-07-22 17:15:54,191 INFO org.apache.hadoop.ipc.Server: IPC Server listener on 8485: starting

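# The IPC server is up: the JournalNode process is listening on port 8485.

3. Initialize the HA information in ZooKeeper (first run only; not needed afterwards)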

[hadoop@nn1 zk_op]$ hdfs zkfc -formatZK
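`hdfs zkfc -formatZK` should create a `hadoop-ha` znode at the ZooKeeper root. Besides checking it interactively with zkCli.sh, the listing can be checked in a script. A minimal sketch; it assumes zkCli.sh runs a command given after `-server host:port` non-interactively (check this against your ZooKeeper version), and `has_ha_znode` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Succeeds if a ZooKeeper child listing on stdin, such as "[zookeeper, hadoop-ha]",
# contains the hadoop-ha znode created by `hdfs zkfc -formatZK`.
has_ha_znode() {
  grep -Eq '^\[(.*, )?hadoop-ha(, .*)?]$'
}

# Usage (assumption: zkCli.sh executes the trailing command and exits):
#   /usr/local/zookeeper/bin/zkCli.sh -server localhost:2181 ls / 2>/dev/null | has_ha_znode \
#     && echo "hadoop-ha znode present"
```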

[hadoop@nn1 zk_op]$ /usr/local/zookeeper/bin/zkCli.sh

[zk: localhost:2181(CONNECTED) 0] ls /

[zookeeper, hadoop-ha]

[zk: localhost:2181(CONNECTED) 1] quit

Quitting...

4. Format the NameNode on nn1 (first run only; not needed afterwards)

[hadoop@nn1 zk_op]$ hadoop namenode -format

# The following line indicates that formatting succeeded:

#19/07/22 17:23:09 INFO common.Storage: Storage directory /data/dfsname has been successfully formatted.
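When the format is scripted, the success line can be checked instead of read by eye. A sketch; `format_succeeded` is a hypothetical helper, and /data/dfsname is this article's NameNode storage directory. Hadoop's command-line tools normally log to stderr, hence the `2>&1` in the usage lines.

```shell
#!/usr/bin/env bash
# Succeeds if `hadoop namenode -format` output on stdin reports that the given
# storage directory (default /data/dfsname, as in this article) was formatted.
format_succeeded() {
  local dir="${1:-/data/dfsname}"
  grep -Fq "Storage directory ${dir} has been successfully formatted."
}

# Usage (first run only: formatting wipes the NameNode metadata in that directory):
#   hadoop namenode -format 2>&1 | tee /tmp/nn-format.log
#   format_succeeded /data/dfsname < /tmp/nn-format.log && echo "format ok"
```

The output is saved to a file first and checked afterwards, so that `grep -q` exiting early cannot close the pipe while the format command is still writing.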

5. Start the NameNode on nn1

[hadoop@nn1 zk_op]$ hadoop-daemon.sh start namenode

[hadoop@nn1 zk_op]$ tail /usr/local/hadoop/logs/hadoop-hadoop-namenode-nn1.hadoop.log

#

#2019-07-22 17:24:57,321 INFO org.apache.hadoop.ipc.Server: IPC Server Responder: starting

#2019-07-22 17:24:57,322 INFO org.apache.hadoop.ipc.Server: IPC Server listener on 9000: starting

#2019-07-22 17:24:57,385 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: NameNode RPC up at: nn1.hadoop/192.168.10.6:9000

#2019-07-22 17:24:57,385 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Starting services required for standby state

#2019-07-22 17:24:57,388 INFO org.apache.hadoop.hdfs.server.namenode.ha.EditLogTailer: Will roll logs on active node at nn2.hadoop/192.168.10.7:9000 every 120 seconds.

#2019-07-22 17:24:57,394 INFO org.apache.hadoop.hdfs.server.namenode.ha.StandbyCheckpointer: Starting standby checkpoint thread...

#Checkpointing active NN at http://nn2.hadoop:50070

#Serving checkpoints at http://nn1.hadoop:50070
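The "IPC Server listener on ... starting" line is the same one the JournalNode log showed for port 8485, so one small log check covers both daemons. A minimal sketch; `ipc_listener_started` is a hypothetical helper, and the log path has to be adjusted per host.

```shell
#!/usr/bin/env bash
# Succeeds if a Hadoop daemon log contains "IPC Server listener on <port>: starting"
# (9000 for this NameNode, 8485 for the JournalNode).
ipc_listener_started() {
  local log_file="$1" port="$2"
  grep -Fq "IPC Server listener on ${port}: starting" "$log_file"
}

# Usage on nn1:
#   ipc_listener_started /usr/local/hadoop/logs/hadoop-hadoop-namenode-nn1.hadoop.log 9000 \
#     && echo "NameNode RPC is listening"
```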

6. On nn2, sync the NameNode state from nn1 (first run only; not needed afterwards)

Switch to the nn2 console.

########### This MUST be run on nn2! ############

[hadoop@nn2 ~]$ hadoop namenode -bootstrapStandby

=====================================================

About to bootstrap Standby ID nn2 from:

Nameservice ID: ns1

Other Namenode ID: nn1

Other NN's HTTP address: http://nn1.hadoop:50070

Other NN's IPC address: nn1.hadoop/192.168.10.6:9000

Namespace ID: 1728347664

Block pool ID: BP-581543280-192.168.10.6-1563787389190

Cluster ID: CID-42d2124d-9f54-4902-aa31-948fb0233943

Layout version: -63

isUpgradeFinalized: true

=====================================================

19/07/22 17:30:24 INFO common.Storage: Storage directory /data/dfsname has been successfully formatted.
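After bootstrapStandby, both NameNodes should carry the same Cluster ID (CID-42d2124d-9f54-4902-aa31-948fb0233943 in the output above). That ID is also recorded in the `current/VERSION` file of the NameNode storage directory, /data/dfsname here, so the two machines can be compared. A sketch; `nn_cluster_id` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Prints the clusterID stored in a NameNode storage directory's current/VERSION
# file. The default /data/dfsname is this article's NameNode directory.
nn_cluster_id() {
  local name_dir="${1:-/data/dfsname}"
  sed -n 's/^clusterID=//p' "$name_dir/current/VERSION"
}

# Usage on nn2, comparing against nn1 over ssh:
#   [ "$(nn_cluster_id)" = "$(ssh hadoop@nn1.hadoop "sed -n 's/^clusterID=//p' /data/dfsname/current/VERSION")" ] \
#     && echo "cluster IDs match"
```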

7. Start the NameNode on nn2

Still on the nn2 console:

[hadoop@nn2 ~]$ hadoop-daemon.sh start namenode

# Check the log to see whether it started successfully

[hadoop@nn2 ~]$ tail /usr/local/hadoop-2.7.3/logs/hadoop-hadoop-namenode-nn2.hadoop.log

8. Start ZKFC

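Now start ZKFC on nn1 and on nn2. Once ZKFC is running, one of the two NameNodes becomes active and the other becomes standby: ZKFC (the ZooKeeper Failover Controller) is what provides automatic HA failover.

############# Run on nn1 #################

[hadoop@nn1 zk_op]$ hadoop-daemon.sh start zkfc

############# Run on nn2 ####################

[hadoop@nn2 zk_op]$ hadoop-daemon.sh start zkfc

Now open each machine's Hadoop web UI in a browser: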

http://192.168.10.6:50070/dfshealth.html#tab-overview

http://192.168.10.7:50070/dfshealth.html#tab-overview

One of the two pages shows the active state and the other shows standby.
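The same check can be done from the command line with `hdfs haadmin -getServiceState`, which prints `active` or `standby` for a NameNode ID; nn1 and nn2 are the IDs shown in the bootstrapStandby output. A sketch that checks exactly one of them is active; `check_ha_pair` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Given the states of the two NameNodes, succeed only when exactly one is
# active and the other is standby.
check_ha_pair() {
  local s1="$1" s2="$2"
  case "$s1/$s2" in
    active/standby|standby/active) echo "ok: $s1/$s2" ;;
    *) echo "unexpected: $s1/$s2" >&2; return 1 ;;
  esac
}

# Usage on either NameNode host:
#   check_ha_pair "$(hdfs haadmin -getServiceState nn1)" "$(hdfs haadmin -getServiceState nn2)"
```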

9. Start the DataNodes (the last three machines)

######## First make sure the slaves file lists the hosts that should run as DataNodes

[hadoop@nn1 hadoop]$ cat slaves

s1.hadoop

s2.hadoop

s3.hadoop
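hadoop-daemons.sh uses this slaves file: roughly, it calls slaves.sh, which strips comments and blank lines from the file and runs hadoop-daemon.sh on each listed host over ssh. The loop below is a simplified illustration of that behaviour, not a replacement for the script; `start_datanodes_from_slaves` and `DRY_RUN` are hypothetical names, and passwordless ssh as the hadoop user is assumed.

```shell
#!/usr/bin/env bash
# Simplified sketch of what hadoop-daemons.sh does with the slaves file:
# for each listed host (blank lines and # comments skipped), run
# hadoop-daemon.sh over ssh. With DRY_RUN=1 it only prints the commands.
start_datanodes_from_slaves() {
  local slaves_file="$1" host
  # `|| [ -n "$host" ]` also handles a last line without a trailing newline.
  while IFS= read -r host || [ -n "$host" ]; do
    host="${host%%#*}"             # drop comments
    host="${host//[[:space:]]/}"   # drop whitespace
    [ -z "$host" ] && continue
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "ssh hadoop@$host hadoop-daemon.sh start datanode"
    else
      # -n keeps ssh from swallowing the rest of the slaves file on stdin.
      ssh -n "hadoop@$host" "hadoop-daemon.sh start datanode"
    fi
  done < "$slaves_file"
}

# Usage (in the Hadoop config directory, as with `cat slaves` above):
#   DRY_RUN=1 start_datanodes_from_slaves slaves
```

The `ssh -n` matters: without it, ssh reads from the loop's stdin and the loop would stop after the first host.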

########### Run on the machine shown as active ##############

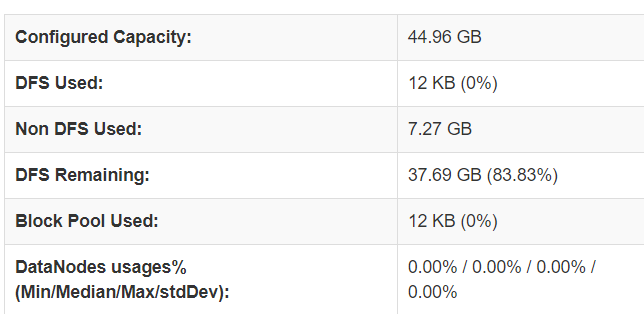

[hadoop@nn1 zk_op]$ hadoop-daemons.sh start datanode

10. Check the disk capacity

Open the Hadoop web page again and check whether the HDFS storage has been set up.

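Here HDFS reserves 2 GB of each physical machine's disk (configurable in hdfs-site.xml), and the remaining 15 GB on each machine is used for HDFS, so the three DataNodes provide 45 GB in total.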
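The arithmetic here is simply DataNodes × usable space per node: 3 × 15 GB = 45 GB. The reservation itself is set by `dfs.datanode.du.reserved` in hdfs-site.xml, whose value is in bytes and applies per data volume (per machine, if each machine has one data directory), so 2 GB has to be written as a byte count. A tiny sketch; the function names are hypothetical and GB is taken as 1024³ bytes.

```shell
#!/usr/bin/env bash
# Convert GB (1024^3 bytes) to the byte count dfs.datanode.du.reserved expects.
gb_to_bytes() {
  echo $(( $1 * 1024 * 1024 * 1024 ))
}

# Total HDFS capacity = number of DataNodes x usable GB per node.
total_hdfs_gb() {
  local datanodes="$1" usable_gb_per_node="$2"
  echo $(( datanodes * usable_gb_per_node ))
}

# Usage:
#   total_hdfs_gb 3 15   -> 45          (three DataNodes x 15 GB)
#   gb_to_bytes 2        -> 2147483648  (value for a 2 GB reservation)
```

`hdfs dfsadmin -report` shows the configured and remaining capacity from the command line, as an alternative to the web page.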

As the figure shows, HDFS is now configured successfully.

The earlier articles in this series are here: