Monitoring Kubernetes with Prometheus Operator

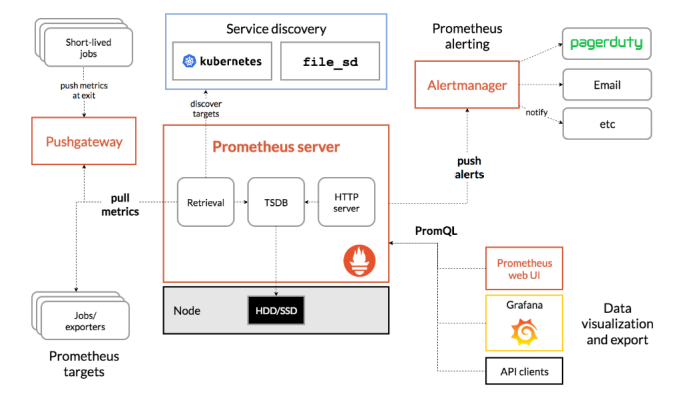

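1. Basic architecture of Prometheus

Prometheus is an open-source, complete monitoring solution that covers the whole monitoring pipeline: data collection, querying, alerting, and visualization. Its architecture is shown in the accompanying diagram.

Official documentation: https://prometheus.io/docs/introduction/overview/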

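2. Components

The Prometheus ecosystem consists of multiple components, many of which are optional.

- Prometheus server

Required. At its core it is a time-series database, responsible for pulling, storing, and analyzing data, and it provides the PromQL query language.

- Pushgateway

Optional. An intermediary gateway that lets short-lived jobs push their metrics.

- exporters

Agents deployed on the monitored side, such as node_exporter and mysqld_exporter.

An HTTP endpoint that exposes information about a monitored component is called an exporter. Exporters are already available for most components commonly used by internet companies, such as Varnish, HAProxy, Nginx, MySQL, and Linux system information (disk, memory, CPU, network, and so on). See: https://prometheus.io/docs/instrumenting/exporters/

- Alertmanager

Handles alerting. After evaluating its rules, the Prometheus server sends the alerts that fire to Alertmanager, which applies its own routing rules and sends out notifications (by email or webhook, for example).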

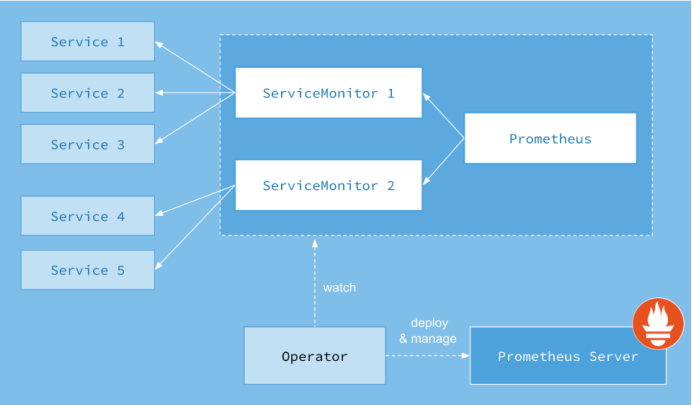

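3. Prometheus-Operator

Architecture of Prometheus-Operator:

The diagram above is the architecture diagram provided by the Prometheus-Operator project. The Operator is the core part: as a controller, it creates four CRD resource types (Prometheus, ServiceMonitor, Alertmanager, and PrometheusRule) and then continuously watches and maintains the state of these resources.

The Prometheus resource represents a Prometheus server, while a ServiceMonitor is an abstraction over exporters. As covered earlier, an exporter is a tool that exposes a metrics endpoint, and Prometheus pulls data from the metrics endpoints described by ServiceMonitors. Likewise, the Alertmanager resource is the abstraction for Alertmanager, and a PrometheusRule holds the alerting rules used by Prometheus instances.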
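To make the PrometheusRule resource more concrete, here is a minimal sketch of what such an object can look like. The object name, the expression, the 5m duration, and the severity are assumptions chosen for illustration. The `release: prometheus-operator` label assumes that the Prometheus instance selects rules by that label, in the same way the ServiceMonitor in section 4.6 is labeled.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: example-rules              # hypothetical name
  namespace: monitoring
  labels:
    release: prometheus-operator   # assumed to match the Prometheus ruleSelector
spec:
  groups:
  - name: example.rules
    rules:
    - alert: TargetDown
      # `up` is 0 when the most recent scrape of a target failed
      expr: up == 0
      for: 5m
      labels:
        severity: warning
      annotations:
        summary: "Target {{ $labels.instance }} has been unreachable for 5 minutes"
```

The Operator turns objects like this into rule files and loads them into the matching Prometheus instances, so no Prometheus configuration file has to be edited by hand.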

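Deciding what to monitor in the cluster thus becomes a matter of manipulating Kubernetes resource objects directly, which is far more convenient. The Service and ServiceMonitor in the diagram above are both Kubernetes resources: a ServiceMonitor uses a labelSelector to match a class of Services, and Prometheus likewise uses a labelSelector to match multiple ServiceMonitors.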
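The selector relationship between Prometheus and ServiceMonitor can be sketched with a Prometheus object like the one below. When installing through the Helm chart this object is generated for you, so the snippet is only an illustration; the name `example` and the label value are assumptions.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: Prometheus
metadata:
  name: example                    # hypothetical name
  namespace: monitoring
spec:
  replicas: 1
  # Pick up every ServiceMonitor that carries this label
  serviceMonitorSelector:
    matchLabels:
      release: prometheus-operator
  # An empty selector matches ServiceMonitors in all namespaces
  serviceMonitorNamespaceSelector: {}
```

A ServiceMonitor without the selected label is simply ignored by this Prometheus instance, which is a common reason for targets not showing up.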

4. Deploying Prometheus-Operator

Official chart: https://github.com/helm/charts/tree/master/stable/prometheus-operator

Search for the latest chart and download it locally

# Search

helm search prometheus-operator
NAME                         CHART VERSION   APP VERSION   DESCRIPTION
stable/prometheus-operator   6.4.0           0.31.0        Provides easy monitoring definitions for Kubernetes servi...

# Fetch the chart and unpack it into ./prometheus-operator

helm fetch stable/prometheus-operator --untar

Install

# Create a namespace named monitoring

kubectl create ns monitoring

# Install

helm install -f ./prometheus-operator/values.yaml --name prometheus-operator --namespace=monitoring ./prometheus-operator

# Upgrade

helm upgrade -f prometheus-operator/values.yaml prometheus-operator ./prometheus-operator

Uninstall prometheus-operator

helm delete prometheus-operator --purge

# Delete the CRDs

kubectl delete customresourcedefinitions prometheuses.monitoring.coreos.com prometheusrules.monitoring.coreos.com servicemonitors.monitoring.coreos.com

kubectl delete customresourcedefinitions alertmanagers.monitoring.coreos.com

kubectl delete customresourcedefinitions podmonitors.monitoring.coreos.com

Edit the configuration file values.yaml

4.1. Email alerts

config:
  global:
    resolve_timeout: 5m
    smtp_smarthost: 'smtp.qq.com:465'
    smtp_from: '[email protected]'
    smtp_auth_username: '[email protected]'
    smtp_auth_password: 'xreqcqffrxtnieff'
    smtp_hello: '163.com'
    smtp_require_tls: false
  route:
    group_by: ['job','severity']
    group_wait: 30s
    group_interval: 1m
    repeat_interval: 12h
    receiver: default
    routes:
    - receiver: webhook
      match:
        alertname: TargetDown
  receivers:
  - name: default
    email_configs:
    - to: '[email protected]'
      send_resolved: true
  - name: webhook
    email_configs:
    - to: '[email protected]'
      send_resolved: true

There is a pitfall here; see: https://www.cnblogs.com/Dev0ps/p/11320177.html
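Note that the receiver named `webhook` in the configuration above still delivers by email. If delivery to an HTTP endpoint is what is wanted, Alertmanager's `webhook_configs` could be used instead. The following is a minimal sketch; the URL is a hypothetical placeholder, not something taken from the original setup.

```yaml
receivers:
- name: webhook
  webhook_configs:
  # Hypothetical endpoint; replace with the service that should receive alerts
  - url: 'http://alert-receiver.example.internal:8080/hook'
    send_resolved: true
```

Alertmanager then sends the grouped alerts to that URL as an HTTP POST with a JSON body, so the receiving service has to accept that format.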

4.2. Persistent storage for Prometheus

storage:
  volumeClaimTemplate:
    spec:
      storageClassName: nfs-client
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 50Gi

4.3. Persistent storage for Grafana

Path: prometheus-operator/charts/grafana/values.yaml

persistence:
  enabled: true
  storageClassName: "nfs-client"
  accessModes:
  - ReadWriteOnce
  size: 10Gi

4.4. Automatic Service discovery

- job_name: 'kubernetes-service-endpoints'
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
    action: keep
    regex: true
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
    action: replace
    target_label: __scheme__
    regex: (https?)
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
    action: replace
    target_label: __metrics_path__
    regex: (.+)
  - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
    action: replace
    target_label: __address__
    regex: ([^:]+)(?::\d+)?;(\d+)
    replacement: $1:$2
  - action: labelmap
    regex: __meta_kubernetes_service_label_(.+)
  - source_labels: [__meta_kubernetes_namespace]
    action: replace
    target_label: kubernetes_namespace
  - source_labels: [__meta_kubernetes_service_name]
    action: replace
    target_label: kubernetes_name

- job_name: 'kubernetes-pod'
  kubernetes_sd_configs:
  - role: pod
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
    action: keep
    regex: true
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
    action: replace
    target_label: __metrics_path__
    regex: (.+)
  - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
    action: replace
    regex: ([^:]+)(?::\d+)?;(\d+)
    replacement: $1:$2
    target_label: __address__
  - action: labelmap
    regex: __meta_kubernetes_pod_label_(.+)
  - source_labels: [__meta_kubernetes_namespace]
    action: replace
    target_label: kubernetes_namespace
  - source_labels: [__meta_kubernetes_pod_name]
    action: replace
    target_label: kubernetes_pod_name

- job_name: istio-mesh
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: endpoints
    namespaces:
      names:
      - istio-system
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: istio-telemetry;prometheus
    replacement: $1
    action: keep

- job_name: envoy-stats
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /stats/prometheus
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: pod
    namespaces:
      names: []
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_container_port_name]
    separator: ;
    regex: .*-envoy-prom
    replacement: $1
    action: keep
  - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
    separator: ;
    regex: ([^:]+)(?::\d+)?;(\d+)
    target_label: __address__
    replacement: $1:15090
    action: replace
  - separator: ;
    regex: __meta_kubernetes_pod_label_(.+)
    replacement: $1
    action: labelmap
  - source_labels: [__meta_kubernetes_namespace]
    separator: ;
    regex: (.*)
    target_label: namespace
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_pod_name]
    separator: ;
    regex: (.*)
    target_label: pod_name
    replacement: $1
    action: replace
  metric_relabel_configs:
  - source_labels: [cluster_name]
    separator: ;
    regex: (outbound|inbound|prometheus_stats).*
    replacement: $1
    action: drop
  - source_labels: [tcp_prefix]
    separator: ;
    regex: (outbound|inbound|prometheus_stats).*
    replacement: $1
    action: drop
  - source_labels: [listener_address]
    separator: ;
    regex: (.+)
    replacement: $1
    action: drop
  - source_labels: [http_conn_manager_listener_prefix]
    separator: ;
    regex: (.+)
    replacement: $1
    action: drop
  - source_labels: [http_conn_manager_prefix]
    separator: ;
    regex: (.+)
    replacement: $1
    action: drop
  - source_labels: [__name__]
    separator: ;
    regex: envoy_tls.*
    replacement: $1
    action: drop
  - source_labels: [__name__]
    separator: ;
    regex: envoy_tcp_downstream.*
    replacement: $1
    action: drop
  - source_labels: [__name__]
    separator: ;
    regex: envoy_http_(stats|admin).*
    replacement: $1
    action: drop
  - source_labels: [__name__]
    separator: ;
    regex: envoy_cluster_(lb|retry|bind|internal|max|original).*
    replacement: $1
    action: drop

- job_name: istio-policy
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: endpoints
    namespaces:
      names:
      - istio-system
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: istio-policy;http-monitoring
    replacement: $1
    action: keep

- job_name: istio-telemetry
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: endpoints
    namespaces:
      names:
      - istio-system
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: istio-telemetry;http-monitoring
    replacement: $1
    action: keep

- job_name: pilot
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: endpoints
    namespaces:
      names:
      - istio-system
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: istio-pilot;http-monitoring
    replacement: $1
    action: keep

- job_name: galley
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: endpoints
    namespaces:
      names:
      - istio-system
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: istio-galley;http-monitoring
    replacement: $1
    action: keep

- job_name: citadel
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  kubernetes_sd_configs:
  - api_server: null
    role: endpoints
    namespaces:
      names:
      - istio-system
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: istio-citadel;http-monitoring
    replacement: $1
    action: keep

- job_name: kubernetes-pods-istio-secure
  scrape_interval: 15s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: https
  kubernetes_sd_configs:
  - api_server: null
    role: pod
    namespaces:
      names: []
  tls_config:
    ca_file: /etc/istio-certs/root-cert.pem
    cert_file: /etc/istio-certs/cert-chain.pem
    key_file: /etc/istio-certs/key.pem
    insecure_skip_verify: true
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
    separator: ;
    regex: "true"
    replacement: $1
    action: keep
  - source_labels: [__meta_kubernetes_pod_annotation_sidecar_istio_io_status, __meta_kubernetes_pod_annotation_istio_mtls]
    separator: ;
    regex: (([^;]+);([^;]*))|(([^;]*);(true))
    replacement: $1
    action: keep
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
    separator: ;
    regex: (http)
    replacement: $1
    action: drop
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
    separator: ;
    regex: (.+)
    target_label: __metrics_path__
    replacement: $1
    action: replace
  - source_labels: [__address__]
    separator: ;
    regex: ([^:]+):(\d+)
    replacement: $1
    action: keep
  - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
    separator: ;
    regex: ([^:]+)(?::\d+)?;(\d+)
    target_label: __address__
    replacement: $1:$2
    action: replace
  - separator: ;
    regex: __meta_kubernetes_pod_label_(.+)
    replacement: $1
    action: labelmap
  - source_labels: [__meta_kubernetes_namespace]
    separator: ;
    regex: (.*)
    target_label: namespace
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_pod_name]
    separator: ;
    regex: (.*)
    target_label: pod_name
    replacement: $1
    action: replace
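To show how the annotation-based jobs above select their targets, here is a sketch of a Service that the `kubernetes-service-endpoints` job would keep. The Service name, namespace, labels, and port number are all hypothetical. Kubernetes service discovery exposes each annotation as a `__meta_kubernetes_service_annotation_*` label with unsupported characters replaced by underscores, which is why `prometheus.io/scrape` appears in the job as `prometheus_io_scrape`.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: demo-app                     # hypothetical name
  namespace: default
  annotations:
    prometheus.io/scrape: "true"     # matched by the `keep` rule
    prometheus.io/scheme: "http"     # copied into __scheme__
    prometheus.io/path: "/metrics"   # copied into __metrics_path__
    prometheus.io/port: "8080"       # replaces the port in __address__
spec:
  selector:
    app: demo-app                    # assumed Pod label
  ports:
  - name: http
    port: 8080
    targetPort: 8080
```

As a worked example of the address rule: for an endpoint discovered at `10.0.0.5:9090`, the two source labels are joined with `;` into `10.0.0.5:9090;8080`. The regex `([^:]+)(?::\d+)?;(\d+)` captures `10.0.0.5` and `8080`, so the replacement `$1:$2` sets `__address__` to `10.0.0.5:8080`.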

4.5. etcd

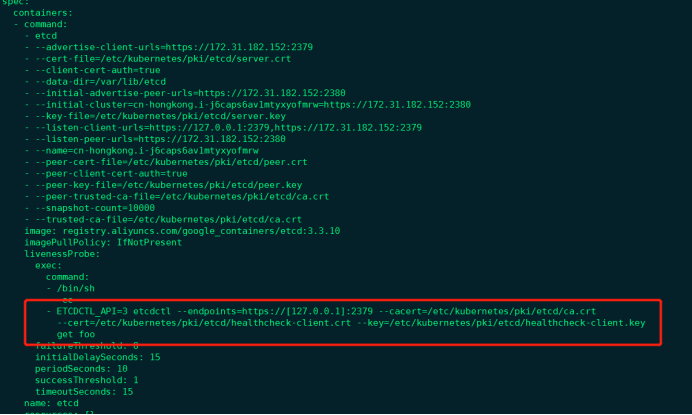

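etcd clusters usually enable HTTPS certificate authentication for security, so for Prometheus to access etcd's monitoring data it must be given the corresponding certificates.

Because this demo environment is a cluster built with kubeadm, we can use kubectl to find the certificate paths that etcd was started with: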

[root@cn-hongkong ~]# kubectl get pod etcd-cn-hongkong.i-j6caps6av1mtyxyofmrw -n kube-system -o yaml

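We can see that the certificates etcd uses are all under /etc/kubernetes/pki/etcd on the node, so we first store the required certificates in the cluster as a Secret object (run this on the node where etcd is running):

1) Fetch the etcd metrics manually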

curl --cacert /etc/kubernetes/pki/etcd/ca.crt --cert /etc/kubernetes/pki/etcd/healthcheck-client.crt --key /etc/kubernetes/pki/etcd/healthcheck-client.key https://172.31.182.152:2379/metrics

2) Scrape with Prometheus (first create the Secret holding the certificates)

kubectl -n monitoring create secret generic etcd-certs --from-file=/etc/kubernetes/pki/etcd/healthcheck-client.crt --from-file=/etc/kubernetes/pki/etcd/healthcheck-client.key --from-file=/etc/kubernetes/pki/etcd/ca.crt

3) Add the kubeEtcd configuration to values.yaml

## Component scraping etcd
##
kubeEtcd:
  enabled: true

  ## If your etcd is not deployed as a pod, specify IPs it can be found on
  ##
  endpoints: []

  ## Etcd service. If using kubeEtcd.endpoints only the port and targetPort are used
  ##
  service:
    port: 2379
    targetPort: 2379
    selector:
      component: etcd

  ## Configure secure access to the etcd cluster by loading a secret into prometheus and
  ## specifying security configuration below. For example, with a secret named etcd-client-cert
  ##
  serviceMonitor:
    scheme: https
    insecureSkipVerify: true
    serverName: localhost
    caFile: /etc/prometheus/secrets/etcd-certs/ca.crt
    certFile: /etc/prometheus/secrets/etcd-certs/healthcheck-client.crt
    keyFile: /etc/prometheus/secrets/etcd-certs/healthcheck-client.key

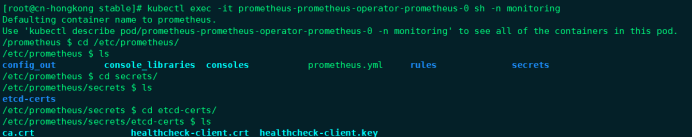

4) Mount the etcd-certs Secret created above into Prometheus (this step is essential)

## Secrets is a list of Secrets in the same namespace as the Prometheus object, which shall be mounted into the Prometheus Pods.
## The Secrets are mounted into /etc/prometheus/secrets/. Secrets changes after initial creation of a Prometheus object are not
## reflected in the running Pods. To change the secrets mounted into the Prometheus Pods, the object must be deleted and recreated
## with the new list of secrets.
##
secrets:
- etcd-certs

After installation, the certificates appear inside the Prometheus Pods under /etc/prometheus/secrets/etcd-certs/.
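For orientation, the `secrets` list is a field of the Prometheus spec. In the stable/prometheus-operator chart's values.yaml it sits, as far as I know, under `prometheus.prometheusSpec`; treat the nesting below as an assumption and check it against the values.yaml of the chart version you fetched.

```yaml
prometheus:
  prometheusSpec:
    # Secrets from the monitoring namespace to mount into the Prometheus Pods;
    # each one ends up in /etc/prometheus/secrets/<secret-name>/
    secrets:
    - etcd-certs
```

If the list is placed at the wrong level, the Secret is not mounted and the etcd target fails because the certificate files referenced by the kubeEtcd serviceMonitor settings do not exist.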

4.6. Scraping a custom Service

We need to create a ServiceMonitor. Under namespaceSelector, any: true means matching Services in all namespaces that carry the label app=sscp-transaction.

apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  labels:
    app: sscp-transaction
    release: prometheus-operator
  name: springboot
  namespace: monitoring
spec:
  endpoints:
  - interval: 15s
    path: /actuator/prometheus
    port: health
    scheme: http
  namespaceSelector:
    any: true
    # matchNames:
    # - sscp-dev
  selector:
    matchLabels:
      app: sscp-transaction
      # release: sscp
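For this ServiceMonitor to find anything, a Service with the matching label and a port named `health` has to exist. The sketch below shows what such a Service might look like; the Service name, the Pod selector, and the port number 8080 are assumptions, and the namespace is taken from the commented-out matchNames entry only as an example.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: sscp-transaction       # hypothetical name
  namespace: sscp-dev          # any namespace works because of any: true
  labels:
    app: sscp-transaction      # must match spec.selector.matchLabels
spec:
  selector:
    app: sscp-transaction      # assumed Pod label
  ports:
  - name: health               # referenced by `port: health` in the ServiceMonitor
    port: 8080                 # assumed port number
    targetPort: 8080
```

The `port` field of a ServiceMonitor endpoint refers to the Service port's name, not its number, so a Service with an unnamed port would not be scraped by the definition above.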

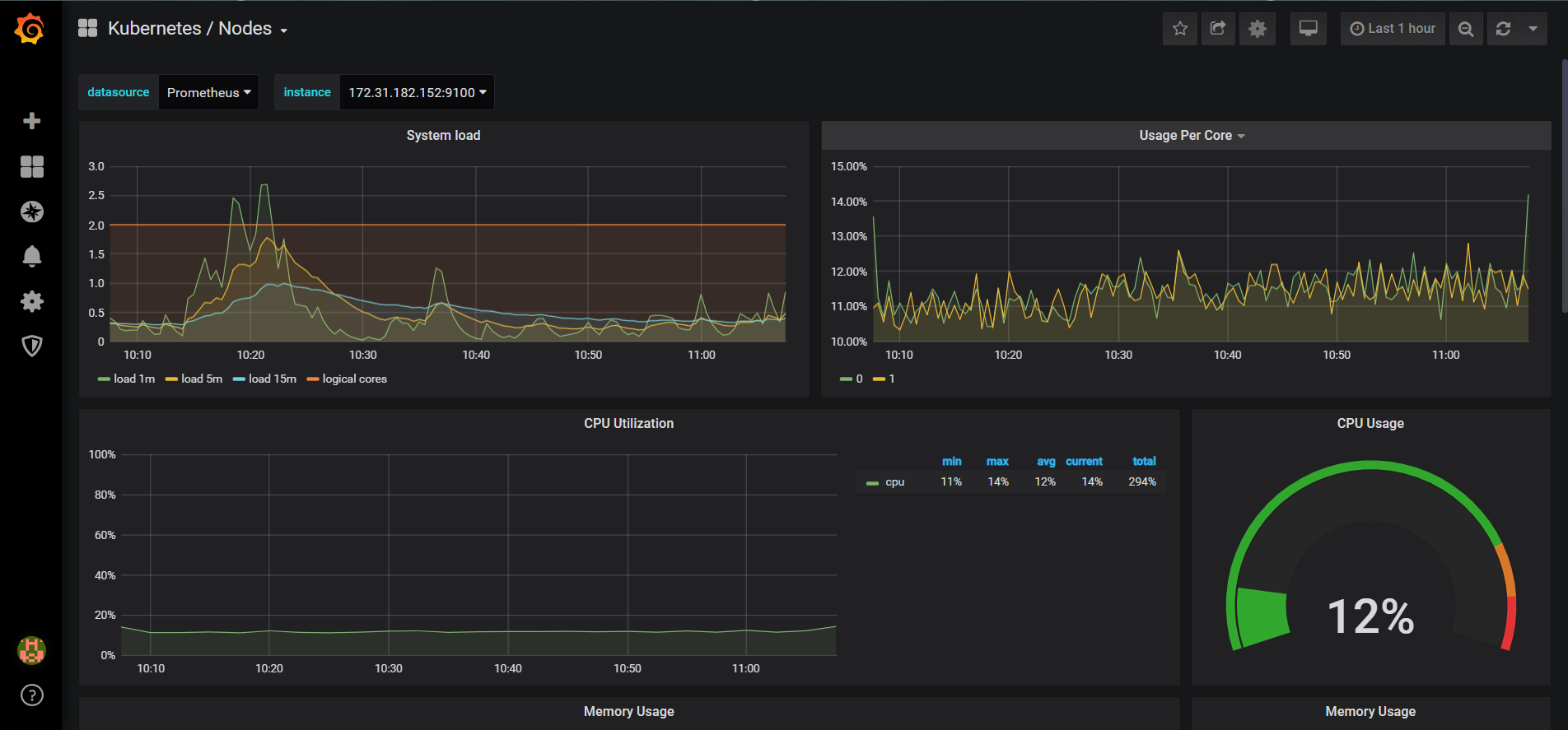

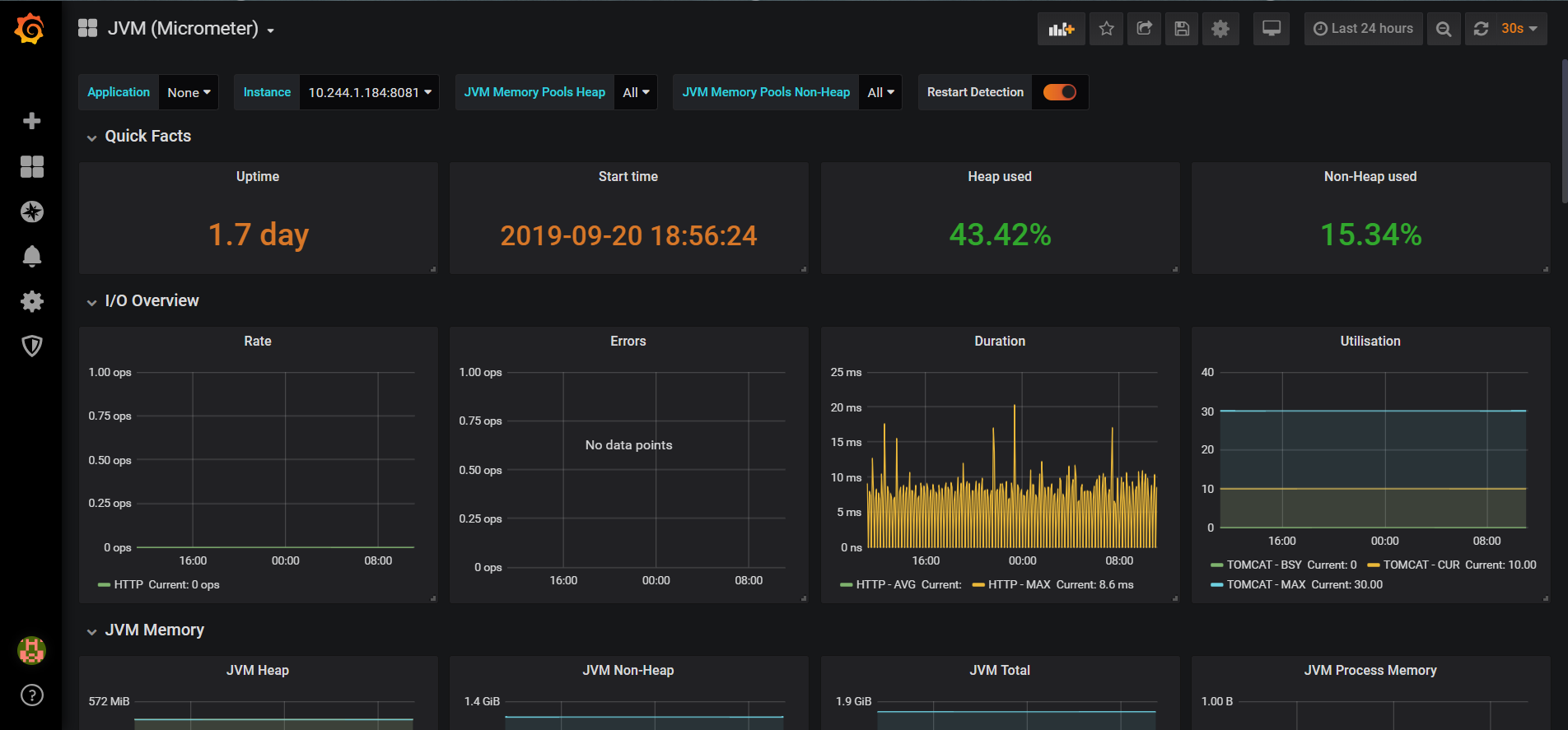

Result:
