Redis: Managing Cluster Nodes

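The previous post covered deploying and configuring Redis Cluster, setting up the Ruby environment that the redis-trib.rb tool needs, and using redis-trib.rb to create a cluster and inspect it; for a recap see https://www.cnblogs.com/qiuhom-1874/p/13442458.html. This post continues with redis-trib.rb and uses it to manage the nodes of a Redis 3/4 cluster.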

Adding nodes to an existing cluster

Environment

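A node that joins an existing cluster must run the same Redis version and use the same authentication password as the nodes already in it, and it should ideally have the same hardware configuration. We then start two Redis servers. To save machines in this demo, I start two extra instances directly on node03 instead of using separate servers. The environment looks like this: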

Directory layout

[root@node03 redis]# ll

total 12

drwxr-xr-x 5 root root 40 Aug 5 22:57 6379

drwxr-xr-x 5 root root 40 Aug 5 22:57 6380

drwxr-xr-x 2 root root 134 Aug 5 22:16 bin

-rw-r--r-- 1 root root 175 Aug 8 08:35 dump.rdb

-rw-r--r-- 1 root root 803 Aug 8 08:35 redis-cluster_6379.conf

-rw-r--r-- 1 root root 803 Aug 8 08:35 redis-cluster_6380.conf

[root@node03 redis]# mkdir {6381,6382}/{etc,logs,run} -p

[root@node03 redis]# tree

.

├── 6379

│ ├── etc

│ │ ├── redis.conf

│ │ └── sentinel.conf

│ ├── logs

│ │ └── redis_6379.log

│ └── run

├── 6380

│ ├── etc

│ │ ├── redis.conf

│ │ └── sentinel.conf

│ ├── logs

│ │ └── redis_6380.log

│ └── run

├── 6381

│ ├── etc

│ ├── logs

│ └── run

├── 6382

│ ├── etc

│ ├── logs

│ └── run

├── bin

│ ├── redis-benchmark

│ ├── redis-check-aof

│ ├── redis-check-rdb

│ ├── redis-cli

│ ├── redis-sentinel -> redis-server

│ └── redis-server

├── dump.rdb

├── redis-cluster_6379.conf

└── redis-cluster_6380.conf

17 directories, 15 files

[root@node03 redis]#

Copy the configuration file into the etc/ directory of each new instance

[root@node03 redis]# cp 6379/etc/redis.conf 6381/etc/

[root@node03 redis]# cp 6379/etc/redis.conf 6382/etc/

Change the port information in each configuration file

[root@node03 redis]# sed -ri 's@6379@6381@g' 6381/etc/redis.conf

[root@node03 redis]# sed -ri 's@6379@6382@g' 6382/etc/redis.conf

Confirm the configuration

[root@node03 redis]# grep -E "^(port|cluster|logfile)" 6381/etc/redis.conf

port 6381

logfile "/usr/local/redis/6381/logs/redis_6381.log"

cluster-enabled yes

cluster-config-file redis-cluster_6381.conf

[root@node03 redis]# grep -E "^(port|cluster|logfile)" 6382/etc/redis.conf

port 6382

logfile "/usr/local/redis/6382/logs/redis_6382.log"

cluster-enabled yes

cluster-config-file redis-cluster_6382.conf

[root@node03 redis]#

Note: if the configuration files in these directories look correct, the Redis services can be started right away.
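The copy, sed and grep steps above can be folded into one small helper. This is only a sketch, not part of the original setup: the function name `make_instance_conf` and its base-directory argument are made up for illustration, and it assumes the redis.conf of the 6379 instance is a valid template in which every occurrence of 6379 should become the new port.

```shell
# Hypothetical helper (not from the original post): derive the config of a
# new instance from the 6379 template under the same base directory.
make_instance_conf() (
  base_dir=$1
  port=$2
  # Same layout as the existing instances: etc, logs and run per port.
  mkdir -p "$base_dir/$port/etc" "$base_dir/$port/logs" "$base_dir/$port/run"
  # Replace every 6379 (port, log file, cluster config file name) with the new port.
  sed "s@6379@$port@g" "$base_dir/6379/etc/redis.conf" > "$base_dir/$port/etc/redis.conf"
  # Print the settings that must differ between instances, for a quick review.
  grep -E '^(port|cluster|logfile)' "$base_dir/$port/etc/redis.conf"
)

# Usage on node03 (paths as in this post):
#   make_instance_conf /usr/local/redis 6381
#   make_instance_conf /usr/local/redis 6382
```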

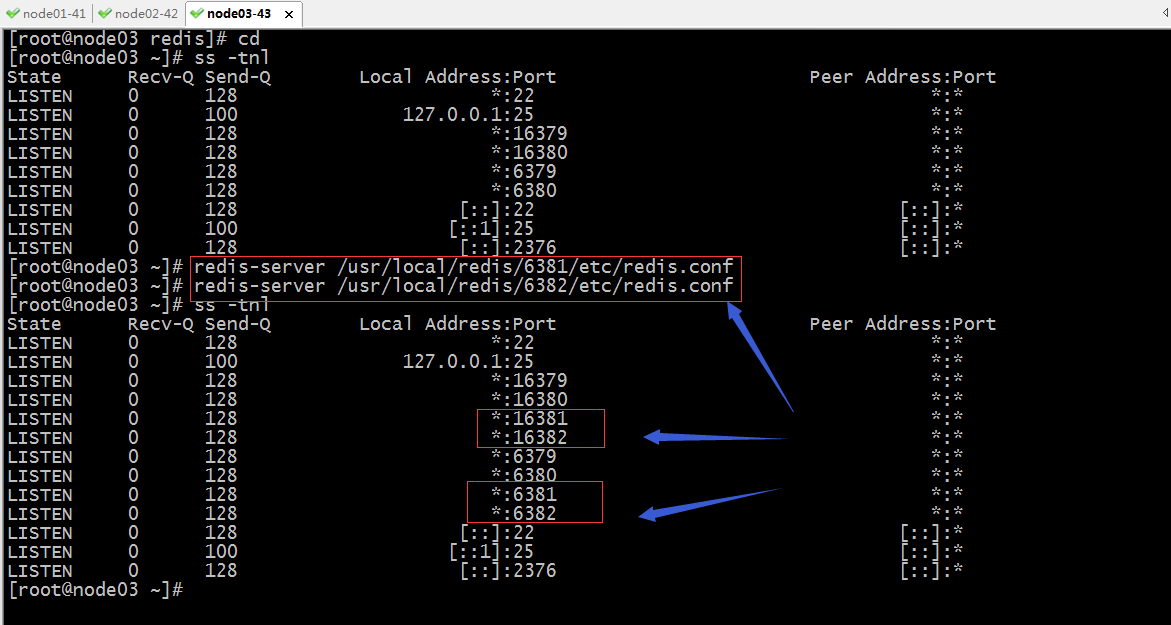

Start Redis

Note: the corresponding ports are now listening, so we can use redis-trib.rb to add the two nodes to the cluster.
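Checking the listening ports can also be scripted. The sketch below parses `ss -tnl` output and can be used for both the client port and the cluster bus port, which Redis Cluster opens at the client port plus 10000 (6381 and 16381 here). The function name `is_listening` is an assumption made for this example.

```shell
# Hypothetical helper: succeed if the `ss -tnl` output on stdin contains a
# listening socket whose local port equals $1.
is_listening() {
  awk -v port="$1" '
    NR > 1 {                      # skip the header row
      n = split($4, parts, ":")   # $4 is Local Address:Port, e.g. *:6381 or [::]:22
      if (parts[n] == port) found = 1
    }
    END { exit(found ? 0 : 1) }
  '
}

# Usage: check the client ports and the cluster bus ports (client port + 10000).
#   for p in 6381 16381 6382 16382; do
#     ss -tnl | is_listening "$p" && echo "port $p is listening"
#   done
```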

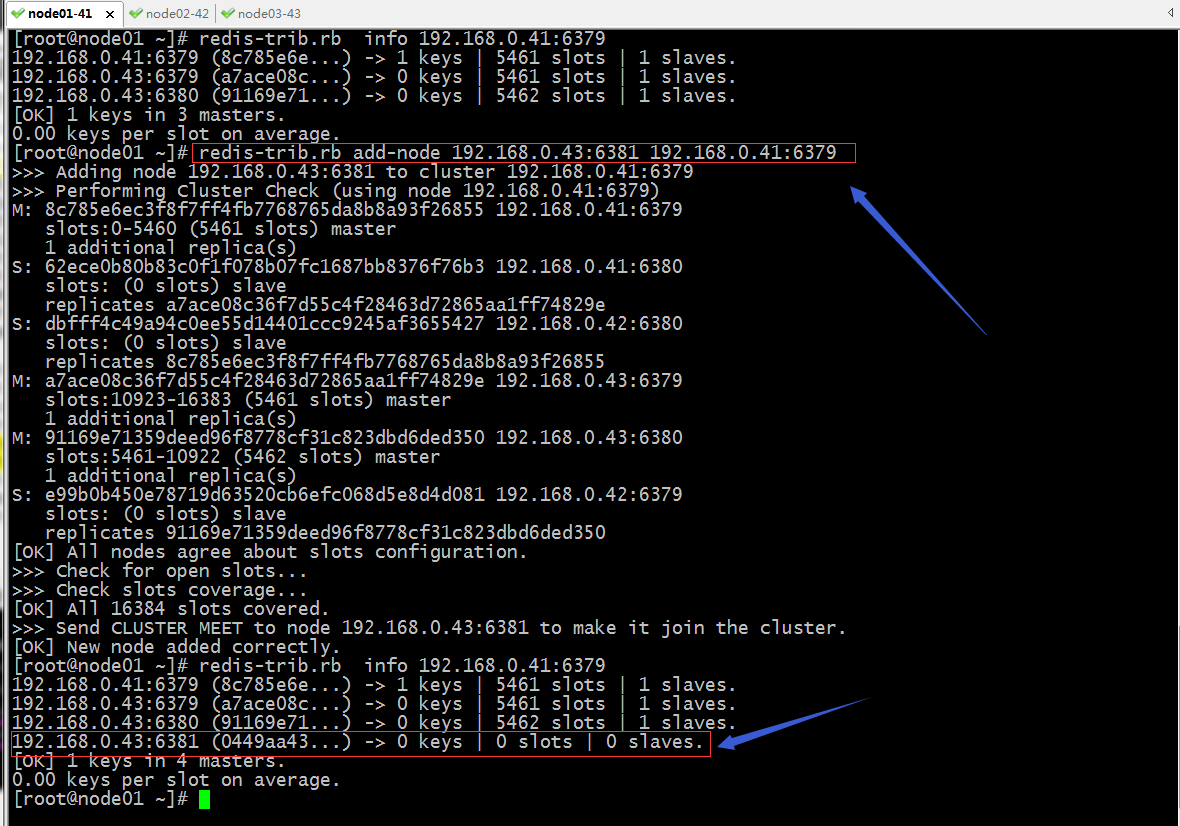

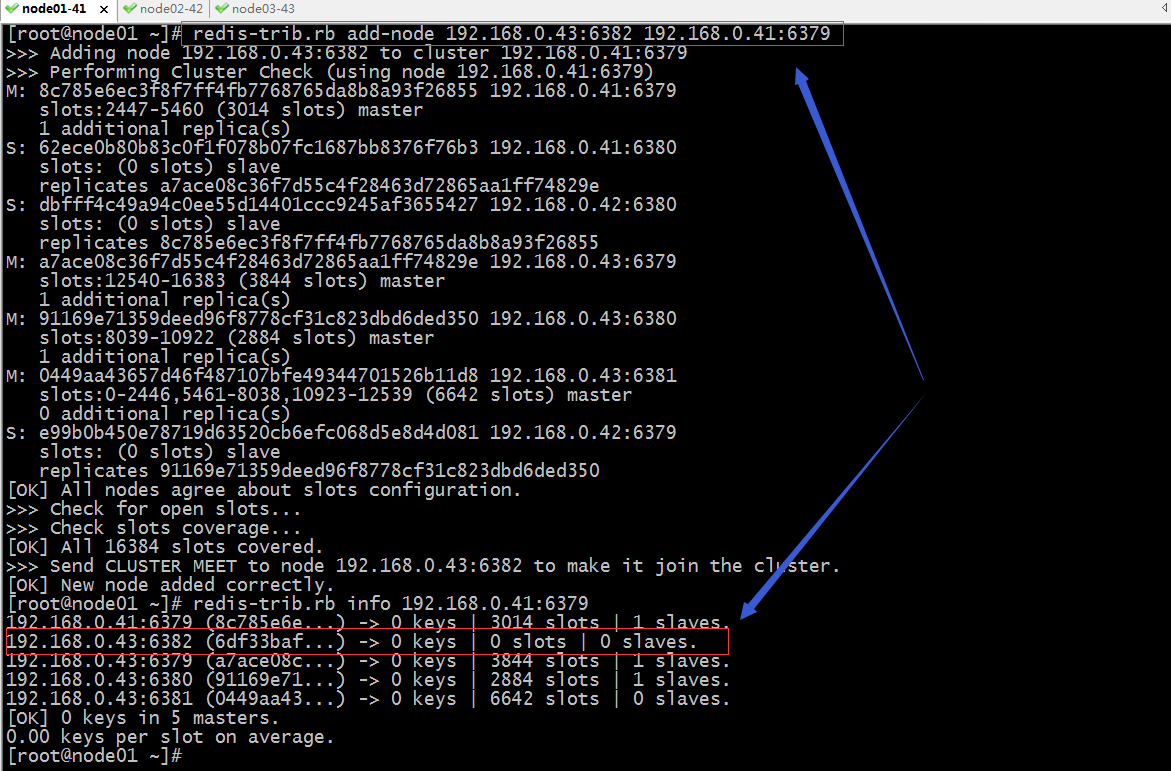

Add the new node to the cluster

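Note: add-node adds a node to the cluster. Give the IP address and port of the node to add first, followed by the IP address and port of any node that is already in the cluster. The output above shows that 192.168.0.43:6381 joined the cluster successfully, but it owns no slots and has no slave.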
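Since the command itself only appears in the screenshot, here is a sketch of the add-node call just described. The existing-member address 192.168.0.41:6379 is an assumption (any node already in the cluster should work), and the `add_node_cmd` wrapper is hypothetical; it only prints the command so it can be reviewed before it is run.

```shell
# Hypothetical wrapper: print the add-node command instead of running it.
# Argument order follows redis-trib.rb: the new node first, then any node
# that is already a member of the cluster.
add_node_cmd() {
  echo "redis-trib.rb add-node $1 $2"
}

# 192.168.0.41:6379 is assumed to be the existing member here.
add_node_cmd 192.168.0.43:6381 192.168.0.41:6379
add_node_cmd 192.168.0.43:6382 192.168.0.41:6379
```

Piping the printed line to `sh` runs it once it has been checked.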

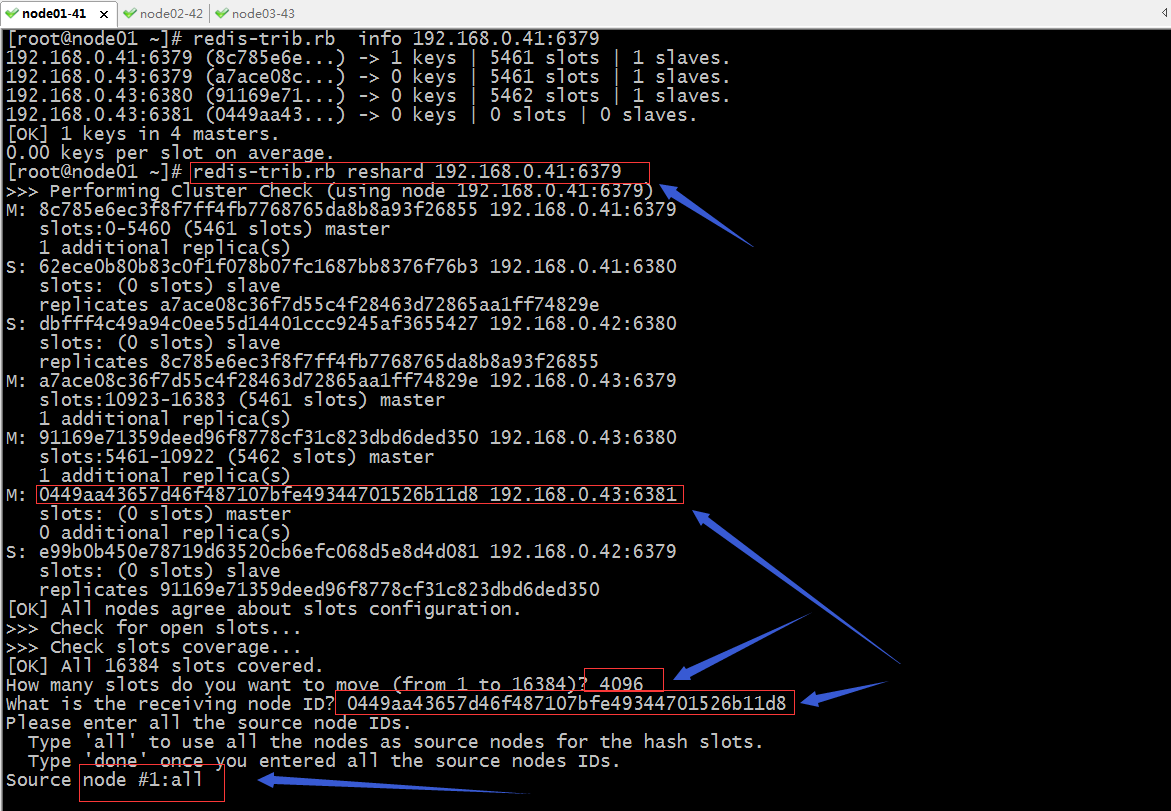

Assign slots to the new node

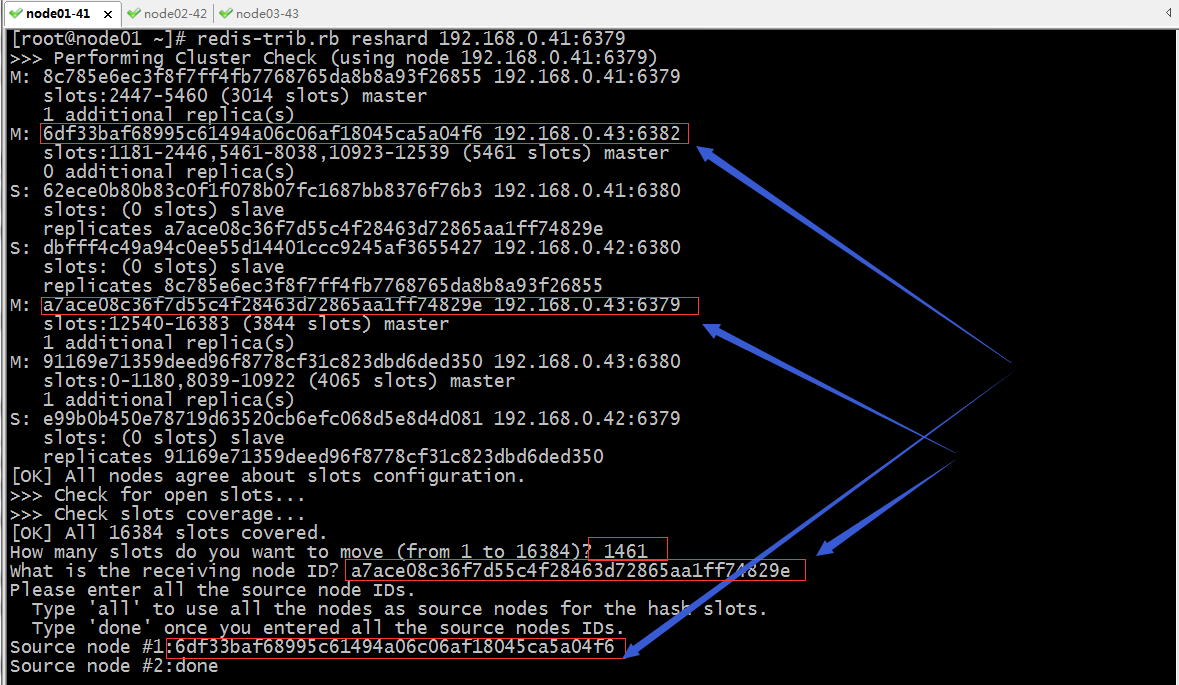

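Note: running reshard with the address and port of any cluster node starts a resharding operation. It asks how many slots to move, the ID of the node that receives them, and which nodes to take them from. Entering all takes the slots from every node that currently owns slots; to choose the sources by hand, enter their node IDs one at a time and finish with done. It then prints the proposed slot migration plan and asks for confirmation.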

Ready to move 4096 slots.

Source nodes:

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:0-5460 (5461 slots) master

1 additional replica(s)

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:10923-16383 (5461 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:5461-10922 (5462 slots) master

1 additional replica(s)

Destination node:

M: 0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381

slots: (0 slots) master

0 additional replica(s)

Resharding plan:

Moving slot 5461 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5462 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5463 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5464 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5465 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5466 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5467 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 5468 from 91169e71359deed96f8778cf31c823dbd6ded350

…… (part of the output omitted) ……

Moving slot 12281 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 12282 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 12283 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 12284 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 12285 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 12286 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 12287 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Do you want to proceed with the proposed reshard plan (yes/no)? yes

Note: entering yes accepts the plan above.

Moving slot 1177 from 192.168.0.41:6379 to 192.168.0.43:6381:

Moving slot 1178 from 192.168.0.41:6379 to 192.168.0.43:6381:

Moving slot 1179 from 192.168.0.41:6379 to 192.168.0.43:6381:

Moving slot 1180 from 192.168.0.41:6379 to 192.168.0.43:6381:

[ERR] Calling MIGRATE: ERR Syntax error, try CLIENT (LIST | KILL | GETNAME | SETNAME | PAUSE | REPLY)

[root@node01 ~]#

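Note: the error appears when the migration reaches slot 1180 on 192.168.0.41:6379, which has data bound to it; in this environment only slots without keys migrate cleanly. Redis Cluster itself is designed to move slots that hold keys while the cluster stays online, and this particular MIGRATE syntax error is commonly reported as an incompatibility between redis-trib.rb and newer versions of the Ruby redis gem, so pinning the gem to an older release may be a cleaner fix (not tested here). The workaround used in this post is to take the node out of service, copy its data to another server, clear the data, finish assigning the slots, and then load the data back in.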
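Before resharding, it can help to see which slots actually hold keys. The sketch below wraps the CLUSTER COUNTKEYSINSLOT and CLUSTER GETKEYSINSLOT commands; the helper names and the `REDIS_CLI` variable are assumptions made for this example, and the password admin matches the AUTH used in this post.

```shell
# Command used to reach Redis; overridable so the helpers can be dry-run.
REDIS_CLI=${REDIS_CLI:-redis-cli}

# Hypothetical helper: number of keys the given node holds in one slot.
# $1 = host, $2 = port, $3 = slot number
keys_in_slot() {
  $REDIS_CLI -h "$1" -p "$2" -a admin cluster countkeysinslot "$3"
}

# Hypothetical helper: list up to $4 key names stored in that slot.
slot_key_names() {
  $REDIS_CLI -h "$1" -p "$2" -a admin cluster getkeysinslot "$3" "$4"
}

# Usage: inspect slot 1180 on the node where the reshard stopped.
#   keys_in_slot   192.168.0.41 6379 1180
#   slot_key_names 192.168.0.41 6379 1180 10
```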

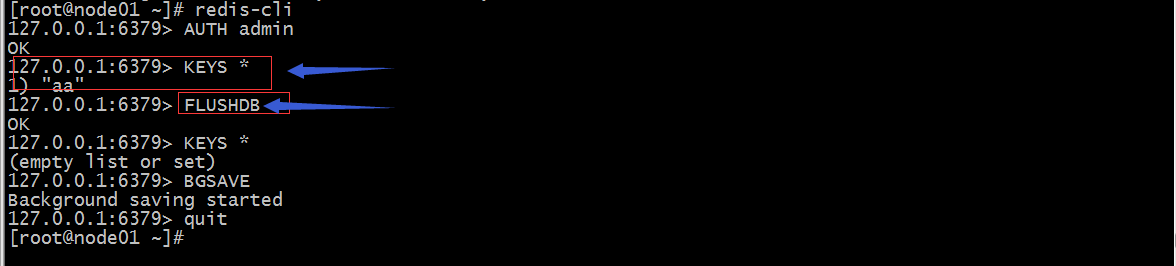

Clear the data

Fix the cluster

Assign the slots again

[root@node01 ~]# redis-trib.rb reshard 192.168.0.41:6379

>>> Performing Cluster Check (using node 192.168.0.41:6379)

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:1181-5460 (4280 slots) master

1 additional replica(s)

S: 62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380

slots: (0 slots) slave

replicates a7ace08c36f7d55c4f28463d72865aa1ff74829e

S: dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380

slots: (0 slots) slave

replicates 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:10923-16383 (5461 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:6827-10922 (4096 slots) master

1 additional replica(s)

M: 0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381

slots:0-1180,5461-6826 (2547 slots) master

0 additional replica(s)

S: e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379

slots: (0 slots) slave

replicates 91169e71359deed96f8778cf31c823dbd6ded350

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

How many slots do you want to move (from 1 to 16384)? 4096

What is the receiving node ID? 0449aa43657d46f487107bfe49344701526b11d8

Please enter all the source node IDs.

Type 'all' to use all the nodes as source nodes for the hash slots.

Type 'done' once you entered all the source nodes IDs.

Source node #1:all

Ready to move 4096 slots.

Source nodes:

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:1181-5460 (4280 slots) master

1 additional replica(s)

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:10923-16383 (5461 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:6827-10922 (4096 slots) master

1 additional replica(s)

Destination node:

M: 0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381

slots:0-1180,5461-6826 (2547 slots) master

0 additional replica(s)

Resharding plan:

Moving slot 10923 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 10924 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 10925 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 10926 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 10927 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 10928 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

Moving slot 10929 from a7ace08c36f7d55c4f28463d72865aa1ff74829e

…… (part of the output omitted) ……

Moving slot 8033 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 8034 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 8035 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 8036 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 8037 from 91169e71359deed96f8778cf31c823dbd6ded350

Moving slot 8038 from 91169e71359deed96f8778cf31c823dbd6ded350

Do you want to proceed with the proposed reshard plan (yes/no)? yes

Moving slot 10923 from 192.168.0.43:6379 to 192.168.0.43:6381:

Moving slot 10924 from 192.168.0.43:6379 to 192.168.0.43:6381:

Moving slot 10925 from 192.168.0.43:6379 to 192.168.0.43:6381:

Moving slot 10926 from 192.168.0.43:6379 to 192.168.0.43:6381:

Moving slot 10927 from 192.168.0.43:6379 to 192.168.0.43:6381:

…… (part of the output omitted) ……

Moving slot 8035 from 192.168.0.43:6380 to 192.168.0.43:6381:

Moving slot 8036 from 192.168.0.43:6380 to 192.168.0.43:6381:

Moving slot 8037 from 192.168.0.43:6380 to 192.168.0.43:6381:

Moving slot 8038 from 192.168.0.43:6380 to 192.168.0.43:6381:

[root@node01 ~]#

Note: if the second reshard finishes without an error, the slots have been reassigned.
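The answers typed at the reshard prompts can also be passed as options, which makes the operation repeatable. The sketch below computes an even share of the 16384 slots and prints (without running) the matching non-interactive command. `--from`, `--to`, `--slots` and `--yes` are redis-trib.rb reshard options and are placed before the node address; the helper names `even_share` and `reshard_cmd` are invented for this example.

```shell
TOTAL_SLOTS=16384   # fixed number of hash slots in a Redis cluster

# Slots each master would own if they were spread evenly (integer division).
even_share() {
  echo $(( TOTAL_SLOTS / $1 ))
}

# Hypothetical wrapper: print the reshard command for review instead of running it.
# $1 = source node IDs (comma separated) or "all", $2 = receiving node ID,
# $3 = number of slots, $4 = any node of the cluster as entry point.
reshard_cmd() {
  echo "redis-trib.rb reshard --from $1 --to $2 --slots $3 --yes $4"
}

# Four masters after adding 192.168.0.43:6381, so 4096 slots for the new node.
reshard_cmd all 0449aa43657d46f487107bfe49344701526b11d8 "$(even_share 4)" 192.168.0.41:6379
```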

Confirm the current slot distribution

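Note: the cluster information shows that the new node now owns 6642 slots rather than an even share. The first reshard had already moved 2547 slots before it failed, and slots that have been moved are not rolled back; requesting 4096 more slots on the second run therefore brought the new node to 6642 slots. The slots are assigned, but this master still has no slave.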
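The slot totals quoted here can be checked from the ranges that redis-trib.rb check or CLUSTER NODES print for a node. A small sketch, with the helper name `count_slots` chosen for this example (it does not handle the bracketed markers that CLUSTER NODES prints for slots that are still migrating):

```shell
# Hypothetical helper: sum the slots covered by a list of ranges such as
# "0-1180,5461-6826" (redis-trib.rb style) or "0-2446 5461-8038" (CLUSTER NODES style).
count_slots() (
  total=0
  for range in $(echo "$1" | tr ',' ' '); do
    first=${range%-*}
    last=${range#*-}     # a single slot like "42" yields first = last = 42
    total=$(( total + last - first + 1 ))
  done
  echo "$total"
)

count_slots "0-1180,5461-6826"              # after the failed first run: 2547
count_slots "0-2446 5461-8038 10923-12539"  # after the second run: 6642
```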

Configure a slave for the new node

Note: to give the new master a slave, first add the slave node to the cluster, then configure it as a slave of that master.

Make the newly added node 192.168.0.43:6382 a slave of the new master 192.168.0.43:6381

[root@node01 ~]# redis-trib.rb info 192.168.0.41:6379

192.168.0.41:6379 (8c785e6e...) -> 0 keys | 3014 slots | 1 slaves.

192.168.0.43:6382 (6df33baf...) -> 0 keys | 0 slots | 0 slaves.

192.168.0.43:6379 (a7ace08c...) -> 0 keys | 3844 slots | 1 slaves.

192.168.0.43:6380 (91169e71...) -> 0 keys | 2884 slots | 1 slaves.

192.168.0.43:6381 (0449aa43...) -> 0 keys | 6642 slots | 0 slaves.

[OK] 0 keys in 5 masters.

0.00 keys per slot on average.

[root@node01 ~]#

[root@node01 ~]# redis-cli -h 192.168.0.43 -p 6382

192.168.0.43:6382> AUTH admin

OK

192.168.0.43:6382> info replication

# Replication

role:master

connected_slaves:0

master_replid:69716e1d83cd44fba96d10e282a6534983b3ab8c

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:0

second_repl_offset:-1

repl_backlog_active:0

repl_backlog_size:1048576

repl_backlog_first_byte_offset:0

repl_backlog_histlen:0

192.168.0.43:6382> CLUSTER NODES

0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381@16381 master - 0 1596851725000 12 connected 0-2446 5461-8038 10923-12539

91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380@16380 master - 0 1596851725354 8 connected 8039-10922

8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379@16379 master - 0 1596851726377 11 connected 2447-5460

62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380@16380 slave a7ace08c36f7d55c4f28463d72865aa1ff74829e 0 1596851725762 3 connected

e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379@16379 slave 91169e71359deed96f8778cf31c823dbd6ded350 0 1596851724334 8 connected

6df33baf68995c61494a06c06af18045ca5a04f6 192.168.0.43:6382@16382 myself,master - 0 1596851723000 0 connected

dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380@16380 slave 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 0 1596851723000 11 connected

a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379@16379 master - 0 1596851723311 3 connected 12540-16383

192.168.0.43:6382> CLUSTER REPLICATE 0449aa43657d46f487107bfe49344701526b11d8

OK

192.168.0.43:6382> CLUSTER NODES

0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381@16381 master - 0 1596851781000 12 connected 0-2446 5461-8038 10923-12539

91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380@16380 master - 0 1596851784708 8 connected 8039-10922

8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379@16379 master - 0 1596851784000 11 connected 2447-5460

62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380@16380 slave a7ace08c36f7d55c4f28463d72865aa1ff74829e 0 1596851782000 3 connected

e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379@16379 slave 91169e71359deed96f8778cf31c823dbd6ded350 0 1596851781000 8 connected

6df33baf68995c61494a06c06af18045ca5a04f6 192.168.0.43:6382@16382 myself,slave 0449aa43657d46f487107bfe49344701526b11d8 0 1596851783000 0 connected

dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380@16380 slave 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 0 1596851783688 11 connected

a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379@16379 master - 0 1596851785730 3 connected 12540-16383

192.168.0.43:6382> quit

[root@node01 ~]# redis-trib.rb info 192.168.0.41:6379

192.168.0.41:6379 (8c785e6e...) -> 0 keys | 3014 slots | 1 slaves.

192.168.0.43:6379 (a7ace08c...) -> 0 keys | 3844 slots | 1 slaves.

192.168.0.43:6380 (91169e71...) -> 0 keys | 2884 slots | 1 slaves.

192.168.0.43:6381 (0449aa43...) -> 0 keys | 6642 slots | 1 slaves.

[OK] 0 keys in 4 masters.

0.00 keys per slot on average.

[root@node01 ~]#

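Note: to make a node in the cluster a slave of a particular master, connect to the node that will become the slave and run cluster replicate followed by the ID of that master. That completes adding new nodes to the cluster.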
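To find out which master still needs a slave, the CLUSTER NODES output can be filtered. The following sketch reads that output on stdin and prints every slot-owning master that no slave replicates; the function name `masters_without_slaves` is an assumption, and the field positions follow the CLUSTER NODES lines shown in this post.

```shell
# Hypothetical helper: read CLUSTER NODES output on stdin and print
# "<node id> <address>" for each slot-owning master that has no slave.
masters_without_slaves() {
  awk '
    $3 ~ /master/ && NF >= 9 { owner[$1] = $2 }      # field 9 onward lists slot ranges
    $3 ~ /slave/             { has_slave[$4] = 1 }   # field 4 is the master ID of a slave
    END { for (id in owner) if (!(id in has_slave)) print id, owner[id] }
  '
}

# Usage (password as used in this post):
#   redis-cli -h 192.168.0.41 -p 6379 -a admin cluster nodes | masters_without_slaves
```

redis-trib.rb can also add a node directly as a slave in one step, along the lines of `redis-trib.rb add-node --slave --master-id <master id> 192.168.0.43:6382 192.168.0.41:6379`; that variant was not used in this post, so treat it as an untested alternative.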

Verification: can data be written on the newly added node?

[root@node01 ~]# redis-cli -h 192.168.0.43 -p 6381

192.168.0.43:6381> AUTH admin

OK

192.168.0.43:6381> get aa

(nil)

192.168.0.43:6381> set aa a1

OK

192.168.0.43:6381> get aa

"a1"

192.168.0.43:6381> set bb b1

(error) MOVED 8620 192.168.0.43:6380

192.168.0.43:6381>

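Note: reading and writing data on the newly added master works. The MOVED reply for bb is not a failure; it only says that the slot of that key (8620) is served by 192.168.0.43:6380.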
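The MOVED reply follows from how Redis Cluster maps keys to slots: the CRC16 of the key modulo 16384. The sketch below reimplements that mapping in plain shell for ASCII keys without hash tags (the `{...}` hash-tag rule is deliberately left out, and the name `key_slot` is made up here); CLUSTER KEYSLOT on a live node remains the authoritative answer.

```shell
# Hypothetical helper: slot of an ASCII key, CRC16 (XMODEM variant, polynomial
# 0x1021, initial value 0) modulo 16384. Hash tags are NOT handled.
key_slot() (
  rest=$1
  crc=0
  while [ -n "$rest" ]; do
    char=${rest%"${rest#?}"}            # first character of the remaining key
    rest=${rest#?}
    byte=$(printf '%d' "'$char")        # character code of that character
    crc=$(( crc ^ (byte << 8) ))
    bit=0
    while [ "$bit" -lt 8 ]; do
      if [ $(( crc & 0x8000 )) -ne 0 ]; then
        crc=$(( ((crc << 1) ^ 0x1021) & 0xFFFF ))
      else
        crc=$(( (crc << 1) & 0xFFFF ))
      fi
      bit=$(( bit + 1 ))
    done
  done
  echo $(( crc % 16384 ))
)

key_slot aa   # prints 1180
key_slot bb   # prints 8620, the slot named in the MOVED reply
```

Slot 1180 is also the slot at which the failed reshard runs in this post stop.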

Verification: if the new master goes down, is its slave promoted to master?

[root@node01 ~]# redis-cli -h 192.168.0.43 -p 6381

192.168.0.43:6381> AUTH admin

OK

192.168.0.43:6381> info replication

# Replication

role:master

connected_slaves:1

slave0:ip=192.168.0.43,port=6382,state=online,offset=1032,lag=1

master_replid:d65b59178dd70a13e75c866d4de738c4f248c84c

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:1032

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:1032

192.168.0.43:6381> quit

[root@node01 ~]# redis-cli -h 192.168.0.43 -p 6382

192.168.0.43:6382> AUTH admin

OK

192.168.0.43:6382> info replication

# Replication

role:slave

master_host:192.168.0.43

master_port:6381

master_link_status:up

master_last_io_seconds_ago:8

master_sync_in_progress:0

slave_repl_offset:1046

slave_priority:100

slave_read_only:1

connected_slaves:0

master_replid:d65b59178dd70a13e75c866d4de738c4f248c84c

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:1046

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:1046

192.168.0.43:6382> quit

[root@node01 ~]# ssh node03

Last login: Sat Aug 8 10:07:15 2020 from node01

[root@node03 ~]# ps -ef |grep redis

root 1425 1 0 08:34 ? 00:00:18 redis-server 0.0.0.0:6379 [cluster]

root 1431 1 0 08:35 ? 00:00:18 redis-server 0.0.0.0:6380 [cluster]

root 1646 1 0 09:04 ? 00:00:14 redis-server 0.0.0.0:6381 [cluster]

root 1651 1 0 09:04 ? 00:00:07 redis-server 0.0.0.0:6382 [cluster]

root 5888 5868 0 10:08 pts/1 00:00:00 grep --color=auto redis

[root@node03 ~]# kill -9 1646

[root@node03 ~]# redis-cli -p 6382

127.0.0.1:6382> AUTH admin

OK

127.0.0.1:6382> info replication

# Replication

role:master

connected_slaves:0

master_replid:34d6ec0e58f12ffe9bc5fbcb0c16008b5054594f

master_replid2:d65b59178dd70a13e75c866d4de738c4f248c84c

master_repl_offset:1102

second_repl_offset:1103

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:1102

127.0.0.1:6382>

Note: once the master goes down, its slave is promoted to master.

Removing a node

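To remove a node from the cluster, first make sure that the node no longer holds any data, which means it must not own any slots.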

If the node is not empty, migrate its slots to other masters

[root@node01 ~]# redis-trib.rb reshard 192.168.0.41:6379

>>> Performing Cluster Check (using node 192.168.0.41:6379)

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:2447-5460 (3014 slots) master

1 additional replica(s)

M: 6df33baf68995c61494a06c06af18045ca5a04f6 192.168.0.43:6382

slots:0-2446,5461-8038,10923-12539 (6642 slots) master

0 additional replica(s)

S: 62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380

slots: (0 slots) slave

replicates a7ace08c36f7d55c4f28463d72865aa1ff74829e

S: dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380

slots: (0 slots) slave

replicates 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:12540-16383 (3844 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:8039-10922 (2884 slots) master

1 additional replica(s)

S: e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379

slots: (0 slots) slave

replicates 91169e71359deed96f8778cf31c823dbd6ded350

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

How many slots do you want to move (from 1 to 16384)? 6642

What is the receiving node ID? 91169e71359deed96f8778cf31c823dbd6ded350

Please enter all the source node IDs.

Type 'all' to use all the nodes as source nodes for the hash slots.

Type 'done' once you entered all the source nodes IDs.

Source node #1:6df33baf68995c61494a06c06af18045ca5a04f6

Source node #2:done

Ready to move 6642 slots.

Source nodes:

M: 6df33baf68995c61494a06c06af18045ca5a04f6 192.168.0.43:6382

slots:0-2446,5461-8038,10923-12539 (6642 slots) master

0 additional replica(s)

Destination node:

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:8039-10922 (2884 slots) master

1 additional replica(s)

Resharding plan:

Moving slot 0 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 1 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 2 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 3 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 4 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 5 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 6 from 6df33baf68995c61494a06c06af18045ca5a04f6

…… (part of the output omitted) ……

Moving slot 12536 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 12537 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 12538 from 6df33baf68995c61494a06c06af18045ca5a04f6

Moving slot 12539 from 6df33baf68995c61494a06c06af18045ca5a04f6

Do you want to proceed with the proposed reshard plan (yes/no)? yes

Moving slot 0 from 192.168.0.43:6382 to 192.168.0.43:6380:

Moving slot 1 from 192.168.0.43:6382 to 192.168.0.43:6380:

Moving slot 2 from 192.168.0.43:6382 to 192.168.0.43:6380:

Moving slot 3 from 192.168.0.43:6382 to 192.168.0.43:6380:

…… (part of the output omitted) ……

Moving slot 1178 from 192.168.0.43:6382 to 192.168.0.43:6380:

Moving slot 1179 from 192.168.0.43:6382 to 192.168.0.43:6380:

Moving slot 1180 from 192.168.0.43:6382 to 192.168.0.43:6380:

[ERR] Calling MIGRATE: ERR Syntax error, try CLIENT (LIST | KILL | GETNAME | SETNAME | PAUSE | REPLY)

[root@node01 ~]#

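Note: this is the same error as when the node was added; it is triggered by data bound to the slot. The fix is to copy the data off the node, clear the data, and then move the slots. To move the slots of one node to another master, specify how many slots to move and which node receives them (again by node ID); if there are several source nodes, enter each ID in turn and finish with done. In other words, this is the same operation as resharding.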

Clear the data

[root@node01 ~]# redis-cli -h 192.168.0.43 -p 6382

192.168.0.43:6382> AUTH admin

OK

192.168.0.43:6382> KEYS *

1) "aa"

192.168.0.43:6382> FLUSHDB

OK

192.168.0.43:6382> KEYS *

(empty list or set)

192.168.0.43:6382> BGSAVE

Background saving started

192.168.0.43:6382> quit

[root@node01 ~]#

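Move the slots to other nodes again

Note: before the slots can be moved again, the cluster has to be fixed first; only then can the slots be reassigned.

Fix the cluster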

[root@node01 ~]# redis-trib.rb fix 192.168.0.41:6379

>>> Performing Cluster Check (using node 192.168.0.41:6379)

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:2447-5460 (3014 slots) master

1 additional replica(s)

M: 6df33baf68995c61494a06c06af18045ca5a04f6 192.168.0.43:6382

slots:1180-2446,5461-8038,10923-12539 (5462 slots) master

0 additional replica(s)

S: 62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380

slots: (0 slots) slave

replicates a7ace08c36f7d55c4f28463d72865aa1ff74829e

S: dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380

slots: (0 slots) slave

replicates 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:12540-16383 (3844 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:0-1179,8039-10922 (4064 slots) master

1 additional replica(s)

S: e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379

slots: (0 slots) slave

replicates 91169e71359deed96f8778cf31c823dbd6ded350

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

[WARNING] Node 192.168.0.43:6382 has slots in migrating state (1180).

[WARNING] Node 192.168.0.43:6380 has slots in importing state (1180).

[WARNING] The following slots are open: 1180

>>> Fixing open slot 1180

Set as migrating in: 192.168.0.43:6382

Set as importing in: 192.168.0.43:6380

Moving slot 1180 from 192.168.0.43:6382 to 192.168.0.43:6380:

>>> Check slots coverage...

[OK] All 16384 slots covered.

[root@node01 ~]# redis-trib.rb info 192.168.0.41:6379

192.168.0.41:6379 (8c785e6e...) -> 0 keys | 3014 slots | 1 slaves.

192.168.0.43:6382 (6df33baf...) -> 0 keys | 5461 slots | 0 slaves.

192.168.0.43:6379 (a7ace08c...) -> 0 keys | 3844 slots | 1 slaves.

192.168.0.43:6380 (91169e71...) -> 0 keys | 4065 slots | 1 slaves.

[OK] 0 keys in 4 masters.

0.00 keys per slot on average.

[root@node01 ~]#

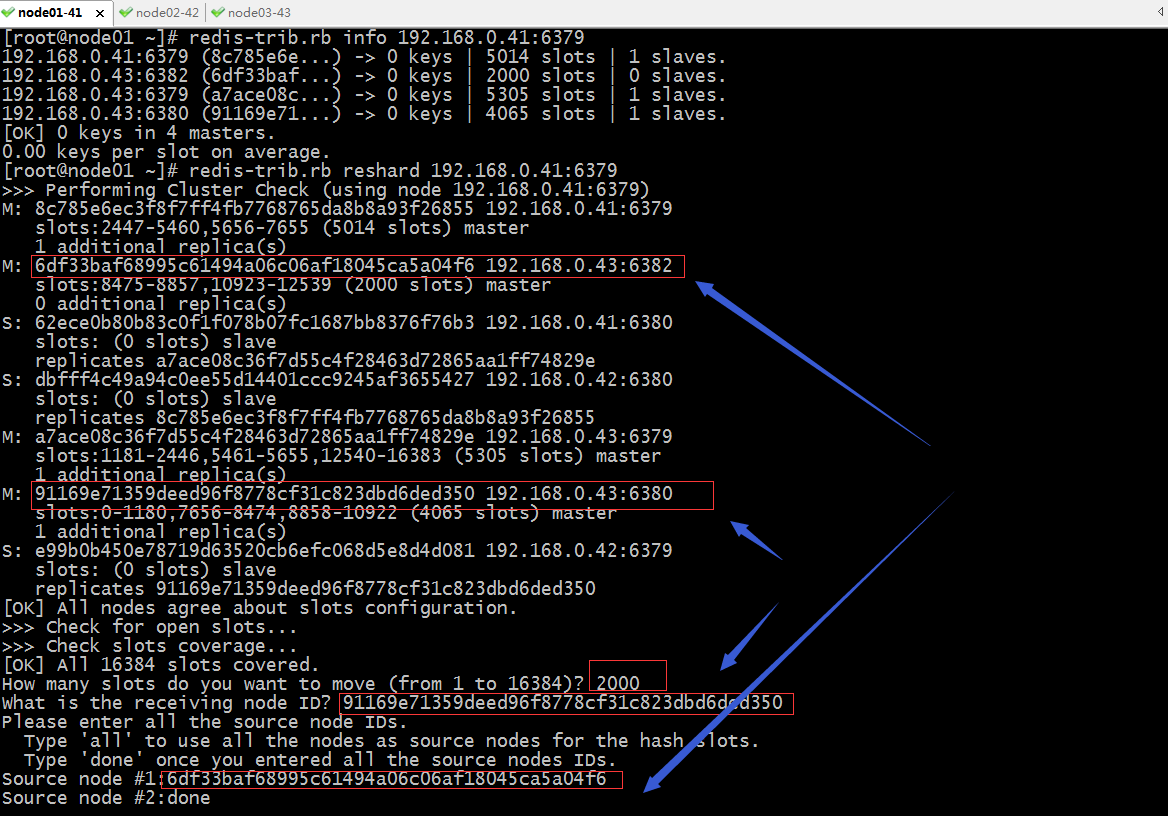

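Note: after the fix, this master still owns 5461 slots. Next we hand these 5461 slots over to the other nodes (this can be done in several passes if one pass is not enough).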

Move slots to other nodes again (1461 slots to 192.168.0.43:6379)

Move slots to other nodes again (2000 slots to 192.168.0.41:6379)

Move slots to other nodes again (2000 slots to 192.168.0.43:6380)

Confirm the slot distribution of the cluster

Note: 192.168.0.43:6382 no longer owns any slots, so it can now be removed from the cluster.

Remove the node from the cluster (192.168.0.43:6382)

Note: to remove a node from the cluster, give the address of any node in the cluster followed by the ID of the node to remove.
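The precondition (no slots left on the node) and the del-node call can be combined in a small guard. This is a sketch under assumptions: `slots_of` and `del_node_cmd` are hypothetical helper names, the parsing relies on the `redis-trib.rb info` line format shown in this post, and the command is only printed, not executed.

```shell
# Hypothetical helper: read `redis-trib.rb info` output on stdin and print the
# number of slots owned by the node whose address is $1 (nothing if not listed).
slots_of() {
  awk -v addr="$1" '$1 == addr { for (i = 2; i < NF; i++) if ($(i + 1) == "slots") print $i }'
}

# Hypothetical wrapper: print the del-node command only when the node is empty.
# $1 = any cluster node (entry point), $2 = address of the node to remove, $3 = its node ID
del_node_cmd() {
  owned=$(slots_of "$2")
  if [ "$owned" = "0" ]; then
    echo "redis-trib.rb del-node $1 $3"
  else
    echo "refusing: $2 still owns ${owned:-an unknown number of} slots" >&2
    return 1
  fi
}

# Usage:
#   redis-trib.rb info 192.168.0.41:6379 |
#     del_node_cmd 192.168.0.41:6379 192.168.0.43:6382 6df33baf68995c61494a06c06af18045ca5a04f6
```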

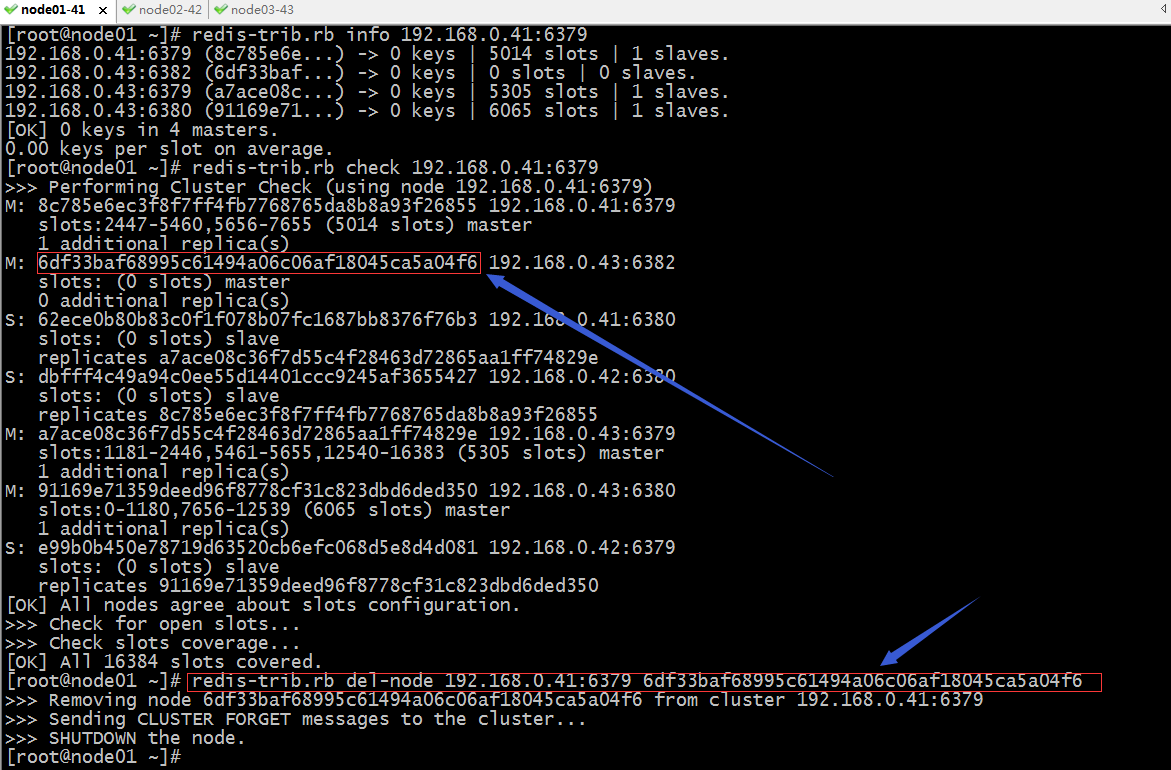

Verification: check the current cluster information

[root@node01 ~]# redis-trib.rb info 192.168.0.41:6379

192.168.0.41:6379 (8c785e6e...) -> 0 keys | 5014 slots | 1 slaves.

192.168.0.43:6379 (a7ace08c...) -> 0 keys | 5305 slots | 1 slaves.

192.168.0.43:6380 (91169e71...) -> 0 keys | 6065 slots | 1 slaves.

[OK] 0 keys in 3 masters.

0.00 keys per slot on average.

[root@node01 ~]# redis-trib.rb check 192.168.0.41:6379

>>> Performing Cluster Check (using node 192.168.0.41:6379)

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:2447-5460,5656-7655 (5014 slots) master

1 additional replica(s)

S: 62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380

slots: (0 slots) slave

replicates a7ace08c36f7d55c4f28463d72865aa1ff74829e

S: dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380

slots: (0 slots) slave

replicates 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:1181-2446,5461-5655,12540-16383 (5305 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:0-1180,7656-12539 (6065 slots) master

1 additional replica(s)

S: e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379

slots: (0 slots) slave

replicates 91169e71359deed96f8778cf31c823dbd6ded350

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

[root@node01 ~]#

Note: the cluster now consists of only 6 nodes, 3 masters and 3 slaves.

Verification: start 192.168.0.43:6381, which went down earlier. Is it still part of the cluster?

[root@node01 ~]# ssh node03

Last login: Sat Aug 8 10:08:40 2020 from node01

[root@node03 ~]# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:16379 *:*

LISTEN 0 128 *:16380 *:*

LISTEN 0 128 *:6379 *:*

LISTEN 0 128 *:6380 *:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 100 [::1]:25 [::]:*

LISTEN 0 128 [::]:2376 [::]:*

[root@node03 ~]# redis-server /usr/local/redis/6381/etc/redis.conf

[root@node03 ~]# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:16379 *:*

LISTEN 0 128 *:16380 *:*

LISTEN 0 128 *:16381 *:*

LISTEN 0 128 *:6379 *:*

LISTEN 0 128 *:6380 *:*

LISTEN 0 128 *:6381 *:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 100 [::1]:25 [::]:*

LISTEN 0 128 [::]:2376 [::]:*

[root@node03 ~]# exit

logout

Connection to node03 closed.

[root@node01 ~]# redis-trib.rb check 192.168.0.41:6379

[ERR] Sorry, can't connect to node 192.168.0.43:6382

>>> Performing Cluster Check (using node 192.168.0.41:6379)

M: 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379

slots:2447-5460,5656-7655 (5014 slots) master

2 additional replica(s)

S: 62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380

slots: (0 slots) slave

replicates a7ace08c36f7d55c4f28463d72865aa1ff74829e

S: dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380

slots: (0 slots) slave

replicates 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855

M: a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379

slots:1181-2446,5461-5655,12540-16383 (5305 slots) master

1 additional replica(s)

M: 91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380

slots:0-1180,7656-12539 (6065 slots) master

1 additional replica(s)

S: 0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381

slots: (0 slots) slave

replicates 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855

S: e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379

slots: (0 slots) slave

replicates 91169e71359deed96f8778cf31c823dbd6ded350

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

[root@node01 ~]# redis-trib.rb info 192.168.0.41:6379

[ERR] Sorry, can't connect to node 192.168.0.43:6382

192.168.0.41:6379 (8c785e6e...) -> 0 keys | 5014 slots | 2 slaves.

192.168.0.43:6379 (a7ace08c...) -> 0 keys | 5305 slots | 1 slaves.

192.168.0.43:6380 (91169e71...) -> 0 keys | 6065 slots | 1 slaves.

[OK] 0 keys in 3 masters.

0.00 keys per slot on average.

[root@node01 ~]# redis-cli -a admin

127.0.0.1:6379> CLUSTER NODES

62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380@16380 slave a7ace08c36f7d55c4f28463d72865aa1ff74829e 0 1596855739865 15 connected

dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380@16380 slave 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 0 1596855738000 16 connected

8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379@16379 myself,master - 0 1596855736000 16 connected 2447-5460 5656-7655

a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379@16379 master - 0 1596855737000 15 connected 1181-2446 5461-5655 12540-16383

91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380@16380 master - 0 1596855740877 18 connected 0-1180 7656-12539

0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381@16381 slave 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 0 1596855738000 16 connected

30a34b27d343883cbfe9db6ba2ad52a1936d8b67 192.168.0.43:6382@16382 handshake - 1596855726853 0 0 disconnected

e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379@16379 slave 91169e71359deed96f8778cf31c823dbd6ded350 0 1596855739000 18 connected

127.0.0.1:6379>

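Note: after 192.168.0.43:6381 (the slave of the master that was removed) starts, it automatically attaches itself to one of the masters in the cluster. The output above shows that the cluster now has 3 masters and 4 slaves, with 192.168.0.43:6381 replicating 192.168.0.41:6379. You may also have noticed that the 6382 instance on node03 is no longer running; in the node list of the cluster its state has become handshake, disconnected.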

Verification: start 192.168.0.43:6382. Is it still part of the cluster?

[root@node01 ~]# ssh node03

Last login: Sat Aug 8 11:00:50 2020 from node01

[root@node03 ~]# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:16379 *:*

LISTEN 0 128 *:16380 *:*

LISTEN 0 128 *:16381 *:*

LISTEN 0 128 *:6379 *:*

LISTEN 0 128 *:6380 *:*

LISTEN 0 128 *:6381 *:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 100 [::1]:25 [::]:*

LISTEN 0 128 [::]:2376 [::]:*

[root@node03 ~]# redis-server /usr/local/redis/6382/etc/redis.conf

[root@node03 ~]# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:16379 *:*

LISTEN 0 128 *:16380 *:*

LISTEN 0 128 *:16381 *:*

LISTEN 0 128 *:16382 *:*

LISTEN 0 128 *:6379 *:*

LISTEN 0 128 *:6380 *:*

LISTEN 0 128 *:6381 *:*

LISTEN 0 128 *:6382 *:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 100 [::1]:25 [::]:*

LISTEN 0 128 [::]:2376 [::]:*

[root@node03 ~]# redis-cli

127.0.0.1:6379> AUTH admin

OK

127.0.0.1:6379> CLUSTER NODES

0449aa43657d46f487107bfe49344701526b11d8 192.168.0.43:6381@16381 slave 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 0 1596856251000 16 connected

a7ace08c36f7d55c4f28463d72865aa1ff74829e 192.168.0.43:6379@16379 myself,master - 0 1596856250000 15 connected 1181-2446 5461-5655 12540-16383

dbfff4c49a94c0ee55d14401ccc9245af3655427 192.168.0.42:6380@16380 slave 8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 0 1596856250973 16 connected

e99b0b450e78719d63520cb6efc068d5e8d4d081 192.168.0.42:6379@16379 slave 91169e71359deed96f8778cf31c823dbd6ded350 0 1596856253018 18 connected

8c785e6ec3f8f7ff4fb7768765da8b8a93f26855 192.168.0.41:6379@16379 master - 0 1596856252000 16 connected 2447-5460 5656-7655

6df33baf68995c61494a06c06af18045ca5a04f6 192.168.0.43:6382@16382 master - 0 1596856253000 17 connected

62ece0b80b83c0f1f078b07fc1687bb8376f76b3 192.168.0.41:6380@16380 slave a7ace08c36f7d55c4f28463d72865aa1ff74829e 0 1596856252000 15 connected

91169e71359deed96f8778cf31c823dbd6ded350 192.168.0.43:6380@16380 master - 0 1596856254043 18 connected 0-1180 7656-12539

127.0.0.1:6379> quit

[root@node03 ~]# exit

logout

Connection to node03 closed.

[root@node01 ~]# redis-trib.rb info 192.168.0.41:6379

192.168.0.41:6379 (8c785e6e...) -> 0 keys | 5014 slots | 2 slaves.

192.168.0.43:6382 (6df33baf...) -> 0 keys | 0 slots | 0 slaves.

192.168.0.43:6379 (a7ace08c...) -> 0 keys | 5305 slots | 1 slaves.

192.168.0.43:6380 (91169e71...) -> 0 keys | 6065 slots | 1 slaves.

[OK] 0 keys in 4 masters.

0.00 keys per slot on average.

[root@node01 ~]#

Note: after 192.168.0.43:6382 is started and we look at the cluster again, it is back in the cluster. It owns no slots, however, so no requests are routed to it.