CS231N assignment1 SVM

阿新 • Published: 2018-11-07

from cs231n.classifiers.linear_svm import svm_loss_naive

The linear SVM classifier consists of two parts:

1. A score function that maps the raw data to class scores, i.e. the so-called function f(W, x).

2. A loss function that quantifies the agreement between the predicted scores and the ground-truth labels.
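A minimal sketch of the score function, assuming the bias has been folded into W (the "bias trick": one extra constant-1 feature per example), so that f(W, x) reduces to a single matrix product over the whole minibatch. `svm_scores` is a hypothetical name used only for illustration.

```python
import numpy as np

def svm_scores(X, W):
    """Linear score function applied to a whole minibatch.

    X: (N, D) data, one example per row (bias assumed folded into D).
    W: (D, C) weights, one column per class.
    Returns an (N, C) array; row i holds the C class scores of X[i].
    """
    return X.dot(W)

rng = np.random.default_rng(0)
X = rng.standard_normal((5, 4))   # N=5 examples, D=4 features
W = rng.standard_normal((4, 3))   # C=3 classes
scores = svm_scores(X, W)
print(scores.shape)  # (5, 3)
```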

margin:

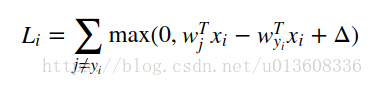

The SVM loss function wants the score of the correct class y_i to be larger than the scores of the incorrect classes by at least Δ (delta). If this is not the case, we accumulate loss.
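A small worked example of the margin rule, with made-up numbers: scores [13, -7, 11], correct class 0 and Δ = 10. Class 1 is already 20 below the correct score, so it contributes max(0, -7 - 13 + 10) = 0; class 2 is only 2 below, so it contributes max(0, 11 - 13 + 10) = 8. `svm_loss_single` is a hypothetical helper written for this illustration.

```python
import numpy as np

def svm_loss_single(scores, y_i, delta=1.0):
    """Multiclass SVM (hinge) loss for one example.

    scores: (C,) class scores for this example.
    y_i: index of the correct class.
    delta: required gap between the correct score and every other score.
    """
    margins = np.maximum(0, scores - scores[y_i] + delta)
    margins[y_i] = 0  # the correct class never contributes to the loss
    return margins.sum()

scores = np.array([13.0, -7.0, 11.0])
print(svm_loss_single(scores, y_i=0, delta=10.0))  # 8.0
```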

Example

1. Loss function

In cs231n/classifiers/linear_svm.py:

svm_loss_naive

The SVM wants the score of the correct class to exceed the score of every incorrect class by a fixed margin Δ.

How the SVM loss is computed:

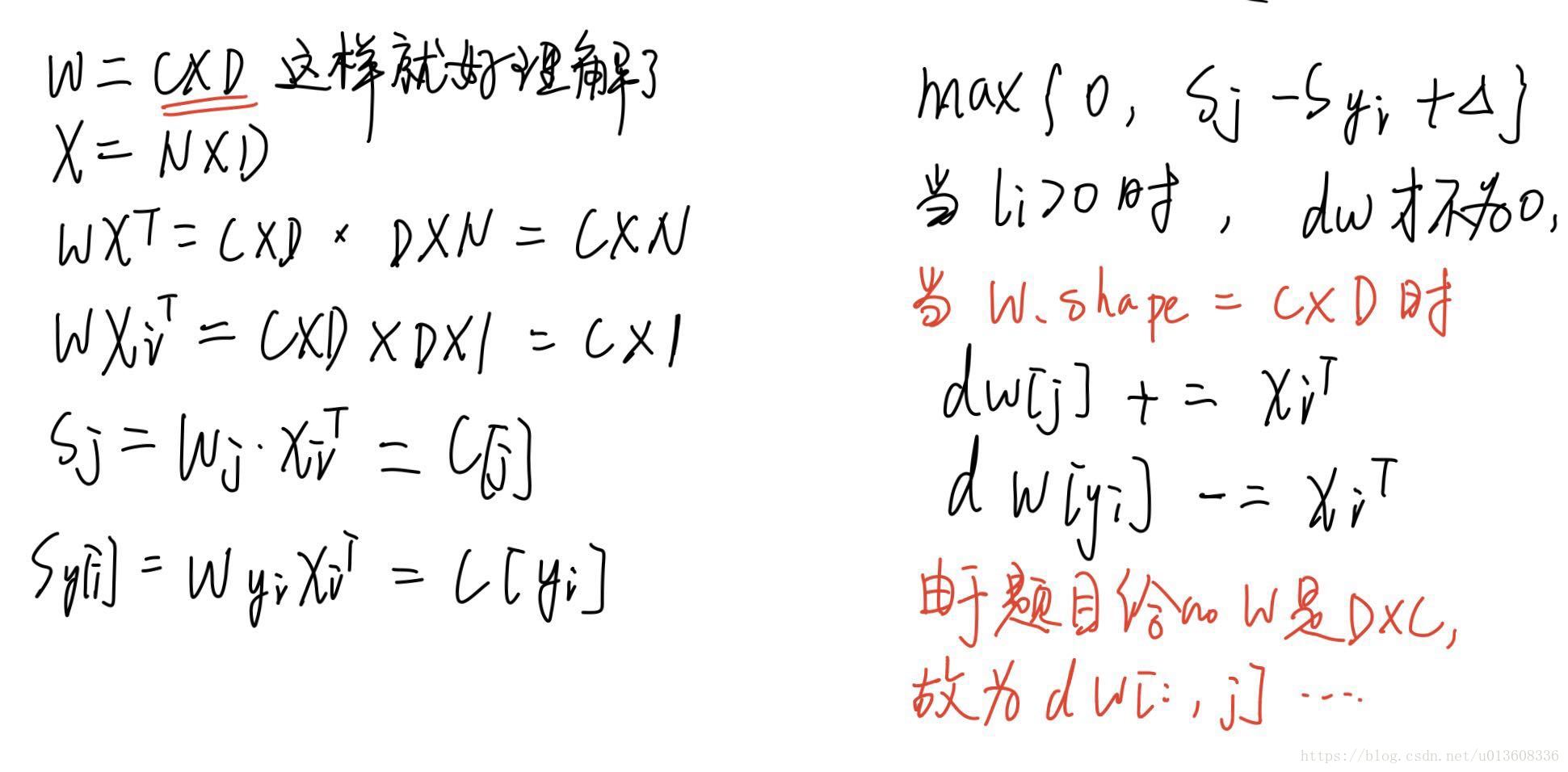
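For reference, the standard per-example multiclass SVM loss and the gradient that the loop in svm_loss_naive accumulates into dW can be written as follows. Here s = x_i W are the class scores, w_j is column j of W, λ corresponds to reg, and the 1/2 factor matches the regularization term in the vectorized code; Δ = 1 in the code.

$$
L_i = \sum_{j \ne y_i} \max\left(0,\; s_j - s_{y_i} + \Delta\right),
\qquad
L = \frac{1}{N}\sum_{i=1}^{N} L_i + \frac{1}{2}\lambda \sum_{k}\sum_{l} W_{k,l}^2
$$

$$
\nabla_{w_j} L_i = \mathbb{1}\left(s_j - s_{y_i} + \Delta > 0\right) x_i \quad (j \ne y_i),
\qquad
\nabla_{w_{y_i}} L_i = -\Big(\sum_{j \ne y_i} \mathbb{1}\left(s_j - s_{y_i} + \Delta > 0\right)\Big) x_i
$$

$$
\nabla_W L = \frac{1}{N}\sum_{i=1}^{N} \nabla_W L_i + \lambda W
$$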

Inputs:
- W: A numpy array of shape (D, C) containing weights.
- X: A numpy array of shape (N, D) containing a minibatch of data.
- y: A numpy array of shape (N,) containing training labels; y[i] = c means that X[i] has label c, where 0 <= c < C.
- reg: (float) regularization strength
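The documented shapes can be checked mechanically before any loss is computed. The helper below is hypothetical (not part of the assignment code) and assumes integer labels; it just turns each line of the Inputs description into an assertion.

```python
import numpy as np

def check_svm_inputs(W, X, y, reg):
    """Assert that the arguments follow the documented shapes (hypothetical helper)."""
    D, C = W.shape
    N = X.shape[0]
    assert X.shape == (N, D), "X must be (N, D) with D matching W"
    assert y.shape == (N,), "y must hold one label per row of X"
    assert np.all((0 <= y) & (y < C)), "labels must satisfy 0 <= c < C"
    assert reg >= 0, "assumed: regularization strength is non-negative"
    return N, D, C

rng = np.random.default_rng(0)
W = 0.01 * rng.standard_normal((5, 3))  # D=5, C=3
X = rng.standard_normal((4, 5))         # N=4
y = np.array([0, 2, 1, 2])
print(check_svm_inputs(W, X, y, 0.1))   # (4, 5, 3)
```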

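```python
import numpy as np

def svm_loss_naive(W, X, y, reg):
    dW = np.zeros(W.shape)  # gradient w.r.t. W, shape (D, C)
    num_classes = W.shape[1]
    num_train = X.shape[0]
    loss = 0.0
    for i in range(num_train):  # 0..N-1
        scores = X[i].dot(W)  # shape (C,)
        correct_class_score = scores[y[i]]
        for j in range(num_classes):  # 0..C-1
            if j == y[i]:
                continue
            margin = scores[j] - correct_class_score + 1  # note delta = 1
            if margin > 0:
                loss += margin
                dW[:, j] += X[i]  # incorrect class j: descent pushes its score down
                dW[:, y[i]] -= X[i]  # correct class: descent pushes its score up
    loss = loss / num_train + 0.5 * reg * np.sum(W * W)  # average, then add L2 term
    dW = dW / num_train + reg * W
    return loss, dW
```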
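The gradient of the loop version is easy to get wrong (the sign of the correct-class update, forgetting to average), so a numerical check is worthwhile. This sketch re-implements the loop in a self-contained form, assuming the loss is averaged over N and regularized with 0.5 * reg * ||W||^2 as in the vectorized code, and compares dW against centered finite differences. `numerical_gradient` is a hypothetical helper, not the course's own gradient-check utility.

```python
import numpy as np

def svm_loss_naive(W, X, y, reg):
    """Loop-based multiclass SVM loss and gradient (delta = 1)."""
    dW = np.zeros(W.shape)
    num_train, num_classes = X.shape[0], W.shape[1]
    loss = 0.0
    for i in range(num_train):
        scores = X[i].dot(W)
        for j in range(num_classes):
            if j == y[i]:
                continue
            margin = scores[j] - scores[y[i]] + 1
            if margin > 0:
                loss += margin
                dW[:, j] += X[i]
                dW[:, y[i]] -= X[i]
    loss = loss / num_train + 0.5 * reg * np.sum(W * W)
    dW = dW / num_train + reg * W
    return loss, dW

def numerical_gradient(f, W, h=1e-5):
    """Centered differences (f(W + h) - f(W - h)) / (2h), one entry at a time."""
    grad = np.zeros_like(W)
    for idx in np.ndindex(*W.shape):
        old = W[idx]
        W[idx] = old + h
        f_plus = f(W)
        W[idx] = old - h
        f_minus = f(W)
        W[idx] = old  # restore the original weight
        grad[idx] = (f_plus - f_minus) / (2 * h)
    return grad

rng = np.random.default_rng(0)
N, D, C = 5, 4, 3
X = rng.standard_normal((N, D))
y = rng.integers(0, C, size=N)
W = 0.5 * rng.standard_normal((D, C))
reg = 0.1

loss, dW = svm_loss_naive(W, X, y, reg)
dW_num = numerical_gradient(lambda w: svm_loss_naive(w, X, y, reg)[0], W)
print(np.max(np.abs(dW - dW_num)))  # expected to be tiny (floating-point round-off)
```

Because the hinge loss is piecewise linear, the check can fail spuriously if some margin sits within h of zero; with random data this is rare.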

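svm_loss_vectorized

Loss:

```python
import numpy as np

def svm_loss_vectorized(W, X, y, reg):
    num_train = X.shape[0]
    scores = X.dot(W)  # (N, C) class scores
    yi_scores = scores[np.arange(num_train), y]  # (N,) score of each correct class
    margins = np.maximum(0, scores - yi_scores[:, np.newaxis] + 1)  # broadcast per row; delta = 1
    margins[np.arange(num_train), y] = 0  # the correct class contributes no loss
    loss = np.mean(np.sum(margins, axis=1))  # average over the minibatch
    loss += 0.5 * reg * np.sum(W * W)  # L2 regularization
    return loss
```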
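The vectorized version above only returns the loss. One common way to also vectorize the gradient (a sketch, not necessarily the exact approach of the referenced post) is to build an N x C coefficient matrix: 1 wherever a margin is positive, and minus the count of positive margins in each row's correct-class column; then dW = X^T · coeff / N + reg · W. The loop function is included only so the two can be compared; the names `svm_loss_vectorized_with_grad` and `svm_grad_loop` and the `delta` parameter are assumptions for illustration.

```python
import numpy as np

def svm_loss_vectorized_with_grad(W, X, y, reg, delta=1.0):
    """Vectorized multiclass SVM loss and gradient (0.5 * reg * ||W||^2 regularization)."""
    num_train = X.shape[0]
    rows = np.arange(num_train)
    scores = X.dot(W)                                   # (N, C)
    yi_scores = scores[rows, y]                         # (N,)
    margins = np.maximum(0, scores - yi_scores[:, np.newaxis] + delta)
    margins[rows, y] = 0
    loss = np.mean(np.sum(margins, axis=1)) + 0.5 * reg * np.sum(W * W)

    # 1 where an incorrect class violates the margin, and
    # -(number of violations) in the correct-class column of each row.
    coeff = (margins > 0).astype(X.dtype)               # (N, C)
    coeff[rows, y] = -np.sum(coeff, axis=1)
    dW = X.T.dot(coeff) / num_train + reg * W           # (D, C)
    return loss, dW

def svm_grad_loop(W, X, y, reg, delta=1.0):
    """Reference loop implementation, used only to cross-check the vectorized one."""
    dW = np.zeros_like(W)
    loss = 0.0
    for i in range(X.shape[0]):
        scores = X[i].dot(W)
        for j in range(W.shape[1]):
            if j == y[i]:
                continue
            margin = scores[j] - scores[y[i]] + delta
            if margin > 0:
                loss += margin
                dW[:, j] += X[i]
                dW[:, y[i]] -= X[i]
    n = X.shape[0]
    return loss / n + 0.5 * reg * np.sum(W * W), dW / n + reg * W

rng = np.random.default_rng(1)
X = rng.standard_normal((50, 8))
y = rng.integers(0, 4, size=50)
W = 0.1 * rng.standard_normal((8, 4))
loss_v, dW_v = svm_loss_vectorized_with_grad(W, X, y, reg=0.05)
loss_l, dW_l = svm_grad_loop(W, X, y, reg=0.05)
print(abs(loss_v - loss_l), np.max(np.abs(dW_v - dW_l)))  # both at round-off level
```

The coefficient matrix replaces the two inner-loop updates of dW with a single matrix product, which is where most of the speedup comes from.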

#### References

- cs231n: linear classifier, SVM
- https://mlxai.github.io/2017/01/06/vectorized-implementation-of-svm-loss-and-gradient-update.html