Deep Learning AI Beautification Series: Portrait Effects Based on Image Matting

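The point of a beautification algorithm is to make people look better, that is, to raise their "looks". Broadly defined, looks extend to the whole body: how good you look depends not only on your face but also on the clothes and shoes you wear. (This definition is my own and makes no claim to authority.) Based on it, today we build some portrait effects on top of human-body matting.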

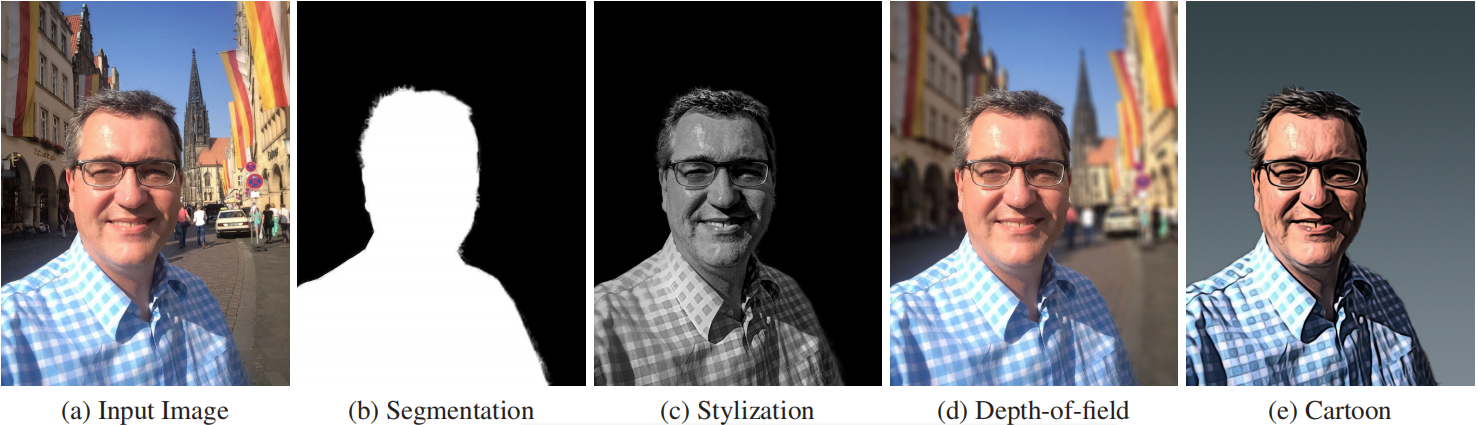

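Matting has been studied in many papers for a long time, but the arrival of deep learning has greatly improved its accuracy. From CNN to FCN, FCN+, UNet and beyond, new papers keep appearing. One example is Automatic Portrait Segmentation for Image Stylization, which builds on FCN and proposes FCN+ specifically for portrait segmentation. Its results are shown below:

Panel (a) is the original portrait, (b) is the segmentation mask, and (c), (d) and (e) are filter effects built on top of the mask.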

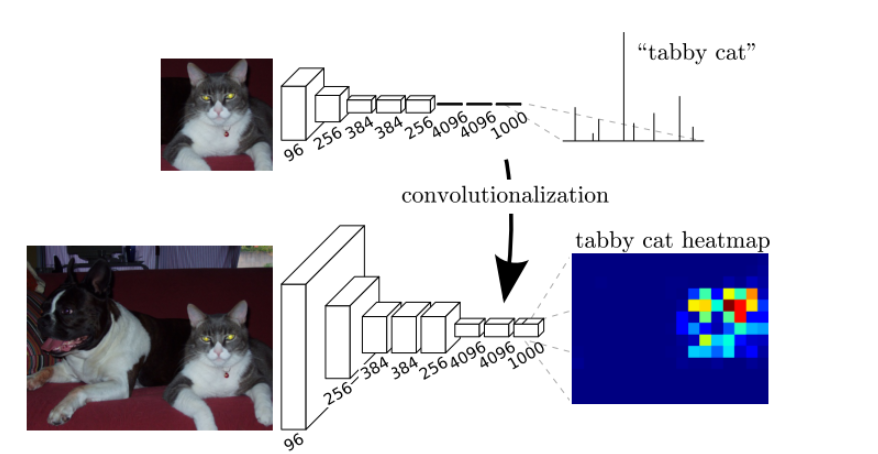

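To understand this paper, we first need to understand FCN and how it performs image segmentation:

The upper part of the figure is a conventional CNN classification network, which ends in fully connected layers: the first 5 stages are convolutional, layers 6 and 7 each output a 4096-dimensional vector, and layer 8 outputs a 1000-dimensional vector holding the probabilities of the 1000 classes. The lower part is FCN, which replaces these three fully connected layers with convolutional layers (fc6 becomes a 7×7 convolution, fc7 and fc8 become 1×1 convolutions). For a standard 224×224 input their outputs have (channels, width, height) of (4096, 1, 1), (4096, 1, 1) and (1000, 1, 1). Since every layer is now convolutional, the network is called a fully convolutional network.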
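To see why replacing fully connected layers with convolutions changes nothing on a fixed-size input yet allows larger inputs, here is a small NumPy sketch (the function names are hypothetical, chosen for illustration). The same weight matrix used as an FC layer on a flattened kh×kw×c feature map gives exactly the output of a kh×kw convolution at one position; on a larger map the convolution simply slides and produces a spatial grid of class scores.

```python
import numpy as np

def fc_layer(x, W, b):
    """Fully connected layer applied to a flattened (h, w, c) feature map."""
    return x.reshape(-1) @ W + b

def fc_as_conv(x, W, b, kh, kw):
    """The same FC weights reshaped into a kh x kw convolution (valid padding, stride 1)."""
    c = x.shape[2]
    kernel = W.reshape(kh, kw, c, -1)  # (kh, kw, in_channels, out_channels), row-major like the flatten
    oh, ow = x.shape[0] - kh + 1, x.shape[1] - kw + 1
    out = np.empty((oh, ow, kernel.shape[3]))
    for i in range(oh):
        for j in range(ow):
            patch = x[i:i + kh, j:j + kw, :]                       # (kh, kw, c)
            out[i, j] = np.tensordot(patch, kernel, axes=3) + b    # sum over kh, kw, c
    return out
```

On a 2×2×c input, `fc_as_conv(x, W, b, 2, 2)[0, 0]` equals `fc_layer(x, W, b)`; on a 5×4×c input the same weights yield a 4×3 map of scores, which is the property FCN relies on.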

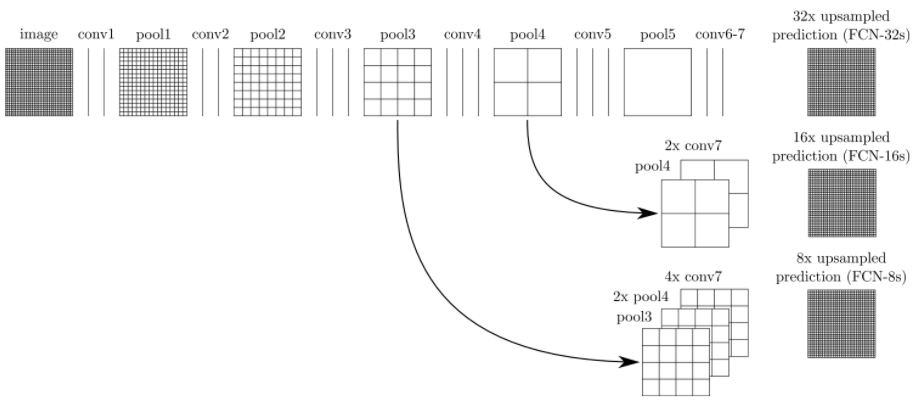

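The FCN pipeline is as follows:

In this network, after 5 rounds of convolution and pooling the image resolution shrinks by factors of 2, 4, 8, 16 and 32. The output of the 5th stage is 32 times smaller than the input, so it is upsampled by a deconvolution (transposed convolution) by a factor of 32 to get back to the original resolution; this is FCN-32s. Upsampling by 32 is very coarse, so the scores are also upsampled and fused with the outputs of the 4th and 3rd stages in turn (FCN-16s and FCN-8s) to obtain a finer segmentation. This is the FCN segmentation pipeline.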
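The skip-fusion steps can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the FCN implementation: nearest-neighbour repetition stands in for the learned transposed convolutions, the score maps are given as inputs, and pooling is assumed to use 'SAME' padding (output size = ceil(input / 2)), which is why upsampled maps are cropped to the skip layer's size. `pooled_sizes`, `upsample_to` and `fcn8s_fuse` are hypothetical helper names.

```python
import numpy as np

def pooled_sizes(n, stages=5):
    """Spatial size after each 2x2 / stride-2 pool with 'SAME' padding (ceil division)."""
    sizes = []
    for _ in range(stages):
        n = -(-n // 2)
        sizes.append(n)
    return sizes

def upsample_to(score, factor, shape):
    """Nearest-neighbour upsampling (stand-in for a transposed conv), cropped to shape[:2]."""
    up = score.repeat(factor, axis=0).repeat(factor, axis=1)
    return up[:shape[0], :shape[1]]

def fcn8s_fuse(score32, score16, score8, out_hw):
    """Fuse class-score maps at 1/32, 1/16 and 1/8 resolution, then upsample x8."""
    fuse16 = upsample_to(score32, 2, score16.shape) + score16   # FCN-16s
    fuse8 = upsample_to(fuse16, 2, score8.shape) + score8       # FCN-8s
    return upsample_to(fuse8, 8, out_hw)
```

For the 800×600 input used by the FCN+ code, `pooled_sizes(800)` gives `[400, 200, 100, 50, 25]` and `pooled_sizes(600)` gives `[300, 150, 75, 38, 19]`, so the 1/8 map (100×75) upsampled by 8 lands exactly on 800×600.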

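Compared with a conventional CNN, FCN has the following advantages and disadvantages:

Advantages:

① It accepts input images of any size, so training and test images do not need to share the same size;

② It is more efficient, avoiding the redundant storage and repeated convolution computation caused by classifying pixel patches one at a time;

Disadvantages:

① The results are still not fine enough. 8× upsampling is much better than 32×, but the upsampled result is still blurry and over-smoothed and insensitive to image details;

② It does not fully consider the relationships between pixels, that is, it ignores spatial consistency;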

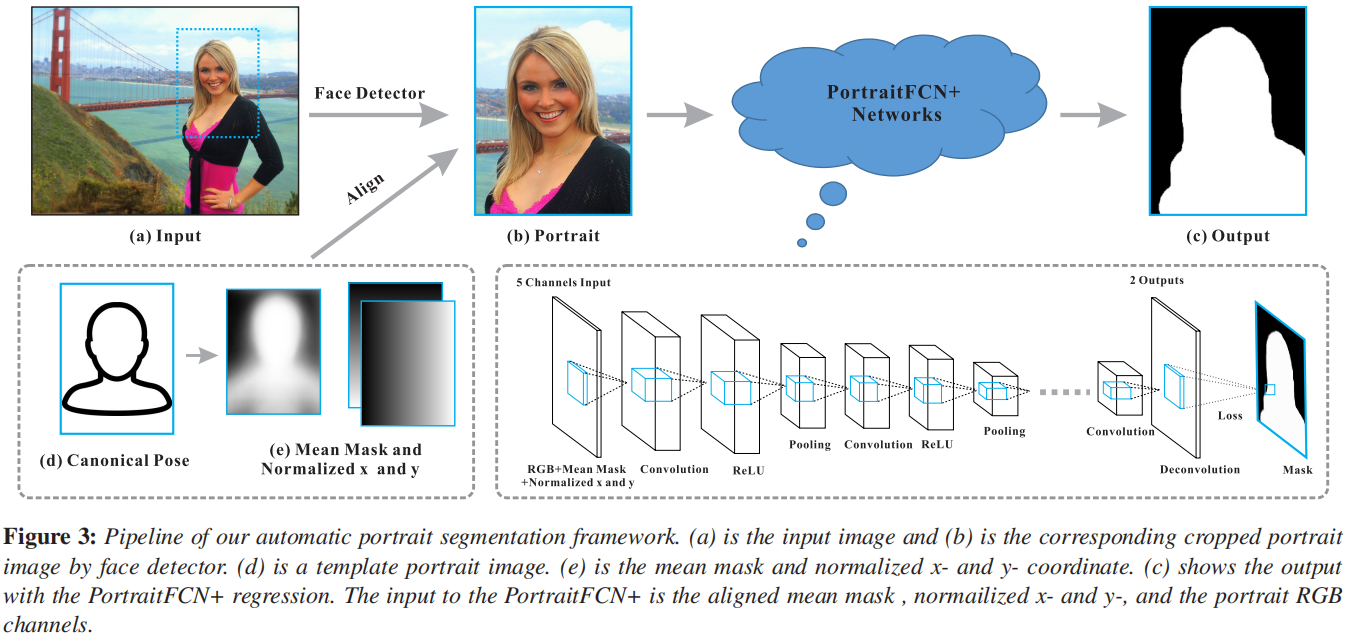

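With FCN understood, FCN+ is easy to follow. The paper Automatic Portrait Segmentation for Image Stylization targets FCN's shortcomings by adding the spatial position and shape of the face to the input data in order to obtain a more accurate segmentation, as shown in the figure:

The position and shape channels are generated as follows:

Position channels: these encode where each pixel lies relative to the face. Because the face is in a different place in every image, we use normalized x and y channels (pixel coordinates). The coordinates are defined by first detecting facial feature points and estimating a homography T between the detected features and a canonical pose. The normalized x channel is defined as T(x_img), where x_img is the x coordinate with the face center as the origin; the y channel is defined in the same way. This gives every pixel a position relative to the face (also scaled according to the face size), forming the x and y channels.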
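A minimal NumPy sketch of the position channels, assuming the homography T has already been estimated from facial landmarks as a 3×3 matrix acting on face-centred pixel coordinates. `position_channels` and `face_center` are hypothetical names for illustration, not taken from the paper's code.

```python
import numpy as np

def position_channels(h, w, T, face_center):
    """Return (x_channel, y_channel), each of shape (h, w).

    Every pixel coordinate is shifted so the face centre is the origin,
    then mapped through the 3x3 homography T (with perspective divide).
    """
    cx, cy = face_center
    ys, xs = np.mgrid[0:h, 0:w].astype(np.float64)
    pts = np.stack([xs.ravel() - cx, ys.ravel() - cy, np.ones(h * w)])  # 3 x N homogeneous coords
    mapped = T @ pts
    mapped = mapped / mapped[2]  # perspective divide
    return mapped[0].reshape(h, w), mapped[1].reshape(h, w)
```

With `T = np.eye(3)` and `face_center = (0, 0)` the x channel is just the column index; a T that scales by 0.5 halves the face-centred coordinates, which is how the channels adapt to face size.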

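Shape channel: taking the canonical shape of a portrait (the face and part of the upper body) as reference, we define a shape channel. First, an aligned mean portrait mask is computed over the dataset: for each image/mask pair, the mask is transformed with the homography T obtained in the previous step into the canonical pose, and the transformed masks are averaged:

$$M = \frac{\sum_i w_i \circ T_i(M_i)}{\sum_i w_i}$$

Here $M_i$ and $T_i$ are the mask and homography of the $i$-th training portrait, and $\circ$ denotes element-wise multiplication. $w_i$ takes values 0 or 1: an entry is 1 if the corresponding pixel still lies inside the image after the transform $T_i$, and 0 otherwise.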

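The mean mask can then be transformed in the same way so that it aligns with the facial feature points of the input portrait.

Code accompanying the paper: click to open the link

Main FCN+ code: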
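The mean-mask computation M = Σ_i w_i ∘ T_i(M_i) / Σ_i w_i can be sketched in NumPy as below. Assumptions: each mask is a 2-D array, each T_i is a 3×3 homography mapping image pixels to the canonical frame, warping uses inverse mapping with nearest-neighbour sampling (a real pipeline would more likely use an interpolating warp), and the validity map returned by the warp plays the role of w_i. `warp_nearest` and `mean_mask` are hypothetical names.

```python
import numpy as np

def warp_nearest(src, H, out_shape):
    """Warp a 2-D array with homography H (dst = H @ src) by inverse mapping and
    nearest-neighbour sampling. Returns (warped, valid): valid is 1 where the
    destination pixel came from inside src, else 0 (the w_i of the formula)."""
    oh, ow = out_shape
    ys, xs = np.mgrid[0:oh, 0:ow].astype(np.float64)
    pts = np.stack([xs.ravel(), ys.ravel(), np.ones(oh * ow)])
    sx, sy, sw = np.linalg.inv(H) @ pts
    sx = np.rint(sx / sw).astype(int)
    sy = np.rint(sy / sw).astype(int)
    valid = (sx >= 0) & (sx < src.shape[1]) & (sy >= 0) & (sy < src.shape[0])
    out = np.zeros(oh * ow, dtype=np.float64)
    out[valid] = src[sy[valid], sx[valid]]
    return out.reshape(oh, ow), valid.reshape(oh, ow).astype(np.float64)

def mean_mask(masks, homographies, out_shape):
    """M = sum_i w_i * T_i(M_i) / sum_i w_i, element-wise; 0 where no mask is valid."""
    num = np.zeros(out_shape)
    den = np.zeros(out_shape)
    for M_i, T_i in zip(masks, homographies):
        warped, w_i = warp_nearest(M_i, T_i, out_shape)
        num += w_i * warped
        den += w_i
    return np.divide(num, den, out=np.zeros(out_shape), where=den > 0)
```

To align the mean mask with a new input portrait whose homography is T, one would warp it back with the inverse, e.g. `warp_nearest(M, np.linalg.inv(T), (h, w))[0]`; stacking RGB, the two position channels and this aligned mask gives the 6-channel input expected by the network code.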

```python
from __future__ import print_function

import numpy as np
import tensorflow as tf  # TensorFlow 1.x API (tf.flags, tf.placeholder, tf.Session)
from PIL import Image

import TensorflowUtils_plus as utils
from portrait_plus import BatchDatset, TestDataset

FLAGS = tf.flags.FLAGS
tf.flags.DEFINE_integer("batch_size", 5, "batch size for training")
tf.flags.DEFINE_string("logs_dir", "logs/", "path to logs directory")
tf.flags.DEFINE_string("data_dir", "Data_zoo/MIT_SceneParsing/", "path to dataset")
tf.flags.DEFINE_float("learning_rate", 1e-4, "Learning rate for Adam Optimizer")
tf.flags.DEFINE_string("model_dir", "Model_zoo/", "Path to vgg model mat")
tf.flags.DEFINE_bool("debug", False, "Debug mode: True/ False")
tf.flags.DEFINE_string("mode", "train", "Mode train/ test/ visualize")

MODEL_URL = 'http://www.vlfeat.org/matconvnet/models/beta16/imagenet-vgg-verydeep-19.mat'

MAX_ITERATION = int(1e5 + 1)
NUM_OF_CLASSESS = 2      # background / person
IMAGE_WIDTH = 600
IMAGE_HEIGHT = 800
NUM_INPUT_CHANNELS = 6   # RGB + normalized x + normalized y + mean-mask shape channel


def vgg_net(weights, image):
    layers = (
        'conv1_1', 'relu1_1', 'conv1_2', 'relu1_2', 'pool1',
        'conv2_1', 'relu2_1', 'conv2_2', 'relu2_2', 'pool2',
        'conv3_1', 'relu3_1', 'conv3_2', 'relu3_2', 'conv3_3',
        'relu3_3', 'conv3_4', 'relu3_4', 'pool3',
        'conv4_1', 'relu4_1', 'conv4_2', 'relu4_2', 'conv4_3',
        'relu4_3', 'conv4_4', 'relu4_4', 'pool4',
        'conv5_1', 'relu5_1', 'conv5_2', 'relu5_2', 'conv5_3',
        'relu5_3', 'conv5_4', 'relu5_4'
    )

    net = {}
    current = image
    for i, name in enumerate(layers):
        # Skip the 4th conv of stages 3-5 (VGG-16-style trunk). The index i still
        # follows the VGG-19 layer list, so the pretrained weights stay aligned.
        if name in ['conv3_4', 'relu3_4', 'conv4_4', 'relu4_4', 'conv5_4', 'relu5_4']:
            continue
        kind = name[:4]
        if kind == 'conv':
            kernels, bias = weights[i][0][0][0][0]
            # matconvnet: weights are [width, height, in_channels, out_channels]
            # tensorflow: weights are [height, width, in_channels, out_channels]
            if name == 'conv1_1' and kernels.shape[2] < NUM_INPUT_CHANNELS:
                # Pretrained VGG filters expect 3 (RGB) input channels, but the FCN+ input
                # has 6. Assumption: filters for the extra position/shape channels start
                # at zero, so training begins from the pretrained RGB response.
                extra = np.zeros((kernels.shape[0], kernels.shape[1],
                                  NUM_INPUT_CHANNELS - kernels.shape[2], kernels.shape[3]),
                                 dtype=kernels.dtype)
                kernels = np.concatenate([kernels, extra], axis=2)
            kernels = utils.get_variable(np.transpose(kernels, (1, 0, 2, 3)), name=name + "_w")
            bias = utils.get_variable(bias.reshape(-1), name=name + "_b")
            current = utils.conv2d_basic(current, kernels, bias)
        elif kind == 'relu':
            current = tf.nn.relu(current, name=name)
            if FLAGS.debug:
                utils.add_activation_summary(current)
        elif kind == 'pool':
            current = utils.avg_pool_2x2(current)
        net[name] = current

    return net


def inference(image, keep_prob):
    """
    Semantic segmentation network definition (FCN-8s style on a VGG trunk).
    :param image: input tensor [N, H, W, 6]; RGB values should be in range 0-255
    :param keep_prob: dropout keep probability (0.5 for training, 1.0 for inference)
    :return: (predicted label map [N, H, W, 1], logits [N, H, W, NUM_OF_CLASSESS])
    """
    print("setting up vgg initialized conv layers ...")
    model_data = utils.get_model_data(FLAGS.model_dir, MODEL_URL)

    mean = model_data['normalization'][0][0][0]
    mean_pixel = np.mean(mean, axis=(0, 1))
    weights = np.squeeze(model_data['layers'])
    # processed_image = utils.process_image(image, mean_pixel)

    with tf.variable_scope("inference"):
        image_net = vgg_net(weights, image)
        conv_final_layer = image_net["conv5_3"]
        pool5 = utils.max_pool_2x2(conv_final_layer)

        # fc6 as a 7x7 convolution
        W6 = utils.weight_variable([7, 7, 512, 4096], name="W6")
        b6 = utils.bias_variable([4096], name="b6")
        conv6 = utils.conv2d_basic(pool5, W6, b6)
        relu6 = tf.nn.relu(conv6, name="relu6")
        if FLAGS.debug:
            utils.add_activation_summary(relu6)
        relu_dropout6 = tf.nn.dropout(relu6, keep_prob=keep_prob)

        # fc7 as a 1x1 convolution
        W7 = utils.weight_variable([1, 1, 4096, 4096], name="W7")
        b7 = utils.bias_variable([4096], name="b7")
        conv7 = utils.conv2d_basic(relu_dropout6, W7, b7)
        relu7 = tf.nn.relu(conv7, name="relu7")
        if FLAGS.debug:
            utils.add_activation_summary(relu7)
        relu_dropout7 = tf.nn.dropout(relu7, keep_prob=keep_prob)

        # fc8 as a 1x1 convolution: per-class scores at 1/32 resolution
        W8 = utils.weight_variable([1, 1, 4096, NUM_OF_CLASSESS], name="W8")
        b8 = utils.bias_variable([NUM_OF_CLASSESS], name="b8")
        conv8 = utils.conv2d_basic(relu_dropout7, W8, b8)

        # Upsample x2 and fuse with pool4 (FCN-16s)
        deconv_shape1 = image_net["pool4"].get_shape()
        W_t1 = utils.weight_variable([4, 4, deconv_shape1[3].value, NUM_OF_CLASSESS], name="W_t1")
        b_t1 = utils.bias_variable([deconv_shape1[3].value], name="b_t1")
        conv_t1 = utils.conv2d_transpose_strided(conv8, W_t1, b_t1, output_shape=tf.shape(image_net["pool4"]))
        fuse_1 = tf.add(conv_t1, image_net["pool4"], name="fuse_1")

        # Upsample x2 and fuse with pool3 (FCN-8s)
        deconv_shape2 = image_net["pool3"].get_shape()
        W_t2 = utils.weight_variable([4, 4, deconv_shape2[3].value, deconv_shape1[3].value], name="W_t2")
        b_t2 = utils.bias_variable([deconv_shape2[3].value], name="b_t2")
        conv_t2 = utils.conv2d_transpose_strided(fuse_1, W_t2, b_t2, output_shape=tf.shape(image_net["pool3"]))
        fuse_2 = tf.add(conv_t2, image_net["pool3"], name="fuse_2")

        # Upsample x8 back to the input resolution
        shape = tf.shape(image)
        deconv_shape3 = tf.stack([shape[0], shape[1], shape[2], NUM_OF_CLASSESS])
        W_t3 = utils.weight_variable([16, 16, NUM_OF_CLASSESS, deconv_shape2[3].value], name="W_t3")
        b_t3 = utils.bias_variable([NUM_OF_CLASSESS], name="b_t3")
        conv_t3 = utils.conv2d_transpose_strided(fuse_2, W_t3, b_t3, output_shape=deconv_shape3, stride=8)

        annotation_pred = tf.argmax(conv_t3, axis=3, name="prediction")

    return tf.expand_dims(annotation_pred, axis=3), conv_t3


def train(loss_val, var_list):
    optimizer = tf.train.AdamOptimizer(FLAGS.learning_rate)
    grads = optimizer.compute_gradients(loss_val, var_list=var_list)
    if FLAGS.debug:
        for grad, var in grads:
            utils.add_gradient_summary(grad, var)
    return optimizer.apply_gradients(grads)


def main(argv=None):
    keep_probability = tf.placeholder(tf.float32, name="keep_probabilty")
    image = tf.placeholder(tf.float32, shape=[None, IMAGE_HEIGHT, IMAGE_WIDTH, NUM_INPUT_CHANNELS], name="input_image")
    annotation = tf.placeholder(tf.int32, shape=[None, IMAGE_HEIGHT, IMAGE_WIDTH, 1], name="annotation")

    pred_annotation, logits = inference(image, keep_probability)
    # Per-pixel softmax cross-entropy; TF >= 1.0 requires keyword arguments here.
    loss = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(
        logits=logits,
        labels=tf.squeeze(annotation, axis=[3]),
        name="entropy"))

    trainable_var = tf.trainable_variables()
    train_op = train(loss, trainable_var)

    train_dataset_reader = BatchDatset('data/trainlist.mat')

    sess = tf.Session()
    print("Setting up Saver...")
    saver = tf.train.Saver()
    sess.run(tf.global_variables_initializer())
    ckpt = tf.train.get_checkpoint_state(FLAGS.logs_dir)
    if ckpt and ckpt.model_checkpoint_path:
        saver.restore(sess, ckpt.model_checkpoint_path)
        print("Model restored...")

    # Train until the reader returns an empty batch (one pass over trainlist.mat).
    itr = 0
    trloss = 0.0
    train_images, train_annotations = train_dataset_reader.next_batch()
    while len(train_annotations) > 0:
        feed_dict = {image: train_images, annotation: train_annotations, keep_probability: 0.5}
        _, rloss = sess.run([train_op, loss], feed_dict=feed_dict)
        trloss += rloss
        if itr % 100 == 0:
            print("Step: %d, Train_loss:%f" % (itr, trloss / 100))
            trloss = 0.0
        itr += 1
        train_images, train_annotations = train_dataset_reader.next_batch()

    saver.save(sess, FLAGS.logs_dir + "plus_model.ckpt", itr)


def pred():
    keep_probability = tf.placeholder(tf.float32, name="keep_probabilty")
    image = tf.placeholder(tf.float32, shape=[None, IMAGE_HEIGHT, IMAGE_WIDTH, NUM_INPUT_CHANNELS], name="input_image")
    annotation = tf.placeholder(tf.int32, shape=[None, IMAGE_HEIGHT, IMAGE_WIDTH, 1], name="annotation")

    pred_annotation, logits = inference(image, keep_probability)
    sft = tf.nn.softmax(logits)  # per-pixel class probabilities; channel 0 = background

    test_dataset_reader = TestDataset('data/testlist.mat')

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        ckpt = tf.train.get_checkpoint_state(FLAGS.logs_dir)
        saver = tf.train.Saver()
        if ckpt and ckpt.model_checkpoint_path:
            saver.restore(sess, ckpt.model_checkpoint_path)
            print("Model restored...")

        itr = 0
        test_images, test_annotations, test_orgs = test_dataset_reader.next_batch()
        while len(test_annotations) > 0:
            # Only test batch #22 is visualized; the other batches are skipped.
            if itr < 22:
                test_images, test_annotations, test_orgs = test_dataset_reader.next_batch()
                itr += 1
                continue
            elif itr > 22:
                break

            # Dropout must be disabled at inference time (keep_prob = 1.0).
            feed_dict = {image: test_images, annotation: test_annotations, keep_probability: 1.0}
            rsft, pred_ann = sess.run([sft, pred_annotation], feed_dict=feed_dict)
            print(rsft.shape)

            # Trimap from the background probability of the first image:
            # < 0.1 -> foreground (1.0), 0.1 to 0.9 -> unknown (0.5), >= 0.9 -> background (0.0)
            bg_prob = rsft[0, :, :, 0]
            preds = np.where(bg_prob < 0.1, 1.0, np.where(bg_prob < 0.9, 0.5, 0.0))[..., np.newaxis]

            org0_im = Image.fromarray(np.uint8(test_orgs[0]))
            org0_im.save('res/org' + str(itr) + '.jpg')
            save_alpha_img(test_orgs[0], test_annotations[0], 'res/ann' + str(itr))
            save_alpha_img(test_orgs[0], preds, 'res/trimap' + str(itr))
            save_alpha_img(test_orgs[0], pred_ann[0], 'res/pre' + str(itr))

            test_images, test_annotations, test_orgs = test_dataset_reader.next_batch()
            itr += 1


def save_alpha_img(org, mat, name):
    """Save org (H x W x 3 RGB) as an RGBA PNG whose alpha channel is mat (values in [0, 1])."""
    h, w = mat.shape[0], mat.shape[1]
    rmat = np.reshape(mat, (h, w)).astype(np.float64)
    amat = np.zeros((h, w, 4), dtype=np.uint8)
    amat[:, :, 3] = np.clip(np.round(rmat * 255), 0, 255)  # scale [0, 1] to the 8-bit alpha range
    amat[:, :, 0:3] = org
    # scipy.misc.imsave was removed from SciPy; PIL writes the RGBA array directly.
    Image.fromarray(amat).save(name + '.png')


if __name__ == "__main__":
    # tf.app.run()  # calls main() to train instead of predicting
    pred()
```

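This completes portrait segmentation with FCN+. Of course, the goal of this article is not only segmentation but also what can be built on top of it.

We extend the training data to whole-body segmentation. We can then apply beautification effects to the person and different effects to the background, which makes the whole picture more interesting and more attractive. Here the foreground (the person) receives beautification and color grading, and the background receives the following effects: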

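① Depth-of-field blur, simulating the focus effect of a dual camera;

② Mosaic;

③ Zoom blur;

④ Motion blur;

⑤ Oil painting;

⑥ Line-art comic;

⑦ Glow dreamy effect;

⑧ Pencil sketch;

⑨ Diffusion.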
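All of these effects share one compositing step: the processed foreground and the processed background are blended with the segmentation mask, out = mask · fg + (1 − mask) · bg. Below is a minimal NumPy sketch of that step plus two of the background effects. Assumptions: float H×W×3 images, a soft mask in [0, 1] with 1 meaning person, and a box blur standing in for the Gaussian or lens blur a real depth-of-field effect would use; `composite`, `mosaic` and `box_blur` are hypothetical names, not the demo's code.

```python
import numpy as np

def composite(fg, bg, mask):
    """Alpha-blend per pixel: out = mask * fg + (1 - mask) * bg."""
    a = mask[..., np.newaxis] if mask.ndim == 2 else mask
    return a * fg + (1.0 - a) * bg

def mosaic(img, block):
    """Pixelate: replace every block x block tile with its mean colour."""
    h, w = img.shape[:2]
    out = img.copy()
    for y in range(0, h, block):
        for x in range(0, w, block):
            tile = img[y:y + block, x:x + block]
            out[y:y + block, x:x + block] = tile.mean(axis=(0, 1))
    return out

def box_blur(img, radius):
    """Mean filter over a (2r+1) x (2r+1) window with edge padding."""
    k = 2 * radius + 1
    pad = np.pad(img, ((radius, radius), (radius, radius), (0, 0)), mode="edge")
    # Summed-area table (with a leading row/column of zeros) gives each window sum in O(1).
    sat = np.pad(pad.cumsum(0).cumsum(1), ((1, 0), (1, 0), (0, 0)))
    h, w = img.shape[:2]
    total = sat[k:k + h, k:k + w] - sat[0:h, k:k + w] - sat[k:k + h, 0:w] + sat[0:h, 0:w]
    return total / (k * k)
```

A depth-of-field style result would then be `composite(img, box_blur(img, 8), mask)` and a mosaic background `composite(img, mosaic(img, 16), mask)`; the foreground argument is where a beautified version of the person would go.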

Examples:

Original image

Body segmentation mask

Depth-of-field blur

Mosaic

Diffusion

Zoom blur

Motion blur

Oil painting

Line-art comic

GLOW dreamy effect

Pencil sketch

Finally, the DEMO link: click to open the link

My QQ: 1358009172