Installing a Spark Cluster and Writing WordCount

阿新 • Published: 2019-01-10

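I. Spark Overview

Official site: http://spark.apache.org/

Apache Spark™ is a unified analytics engine for large-scale data processing: a fast, general-purpose compute engine designed for big data. It originated at the AMPLab at the University of California, Berkeley. Unlike MapReduce, a Spark job keeps its intermediate results in memory, trading space for time; what Spark works with is an in-memory distributed dataset. Spark is implemented in Scala and is tightly integrated with it, so distributed datasets are easy to handle from Scala. Spark is not meant to replace Hadoop but to complement it. Spark has no storage layer of its own; it can be integrated with HDFS.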

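II. Spark Features

1) Fast: compared with MapReduce, up to 10x faster when running on disk and up to 100x faster when running in memory.

2) Easy to use: supports Java, Scala, Python, and R.

3) General-purpose: supports not only batch processing (Spark SQL) but also stream processing (Spark Streaming).

4) Compatible: works with other components. Spark implements Standalone as its built-in resource management and scheduling framework, and it also works with HDFS and YARN.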

III. Installing and Deploying Spark

Master node: Master (192.168.146.150)

Worker nodes: Worker (192.168.146.151, 192.168.146.152)

1. Preparation

(1) Turn off the firewall

    firewall-cmd --state                  # check the firewall status
    systemctl stop firewalld.service      # stop the firewall
    systemctl disable firewalld.service   # do not start it at boot

(2) Connect remotely (CRT)

(3) Set the hostname permanently

    vi /etc/hostname

The hostnames of the three machines are spark-01, spark-02, and spark-03. Note: a reboot is required for the change to take effect.

(4) Configure the hosts mapping file

    vi /etc/hosts

    #127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    #::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.146.150 spark-01
    192.168.146.151 spark-02
    192.168.146.152 spark-03

(5) Configure passwordless SSH login

    ssh-keygen              # generate a key pair
    ssh-copy-id spark-01
    ssh-copy-id spark-02
    ssh-copy-id spark-03

2. Install the JDK (Scala depends on the JVM)

(1) Prepare the Spark installation directory: upload the tar package to /root

    cd /root

(2) Extract the tar package

    cd /root
    mkdir sk
    tar -zxvf jdk-8u144-linux-x64.tar.gz -C /root/sk

(3) Configure the environment variables

    vi /etc/profile

    export JAVA_HOME=/root/sk/jdk1.8.0_144
    export PATH=$PATH:$JAVA_HOME/bin

    source /etc/profile     # load the environment variables

(4) Send the JDK to the other machines (create the sk directory under /root on those machines first)

    cd /root/sk
    scp -r jdk1.8.0_144/ root@spark-02:$PWD
    scp -r jdk1.8.0_144/ root@spark-03:$PWD
    scp -r /etc/profile spark-02:/etc
    scp -r /etc/profile spark-03:/etc

Note: load the environment variables on each of those machines as well.

    source /etc/profile

3. Install the Spark cluster

(1) Upload the tar package to /root

(2) Extract it

    cd /root
    tar -zxvf spark-2.2.0-bin-hadoop2.7.tgz -C sk/

(3) Edit the configuration file

    cd /root/sk/spark-2.2.0-bin-hadoop2.7/conf
    mv spark-env.sh.template spark-env.sh
    vi spark-env.sh

    export JAVA_HOME=/root/sk/jdk1.8.0_144
    export SPARK_MASTER_HOST=spark-01
    export SPARK_MASTER_PORT=7077

(4) Add the worker nodes to slaves

    cd /root/sk/spark-2.2.0-bin-hadoop2.7/conf
    mv slaves.template slaves
    vi slaves

    spark-02
    spark-03

(5) Distribute Spark to the other machines

    cd /root/sk
    scp -r spark-2.2.0-bin-hadoop2.7/ root@spark-02:$PWD
    scp -r spark-2.2.0-bin-hadoop2.7/ root@spark-03:$PWD

(6) Start the cluster

    cd /root/sk/spark-2.2.0-bin-hadoop2.7
    sbin/start-all.sh

Open http://spark-01:8080/ in a browser to see the web UI.

(7) Start the command-line shell

    cd /root/sk/spark-2.2.0-bin-hadoop2.7/bin
    ./spark-shell

    sc.textFile("/root/words.txt").flatMap(_.split(" ")).map((_,1)).reduceByKey(_+_).sortBy(_._2, false).collect

IV. Starting spark-shell

    cd /root/sk/spark-2.2.0-bin-hadoop2.7/

Local mode:

    bin/spark-shell

Cluster mode:

    bin/spark-shell --master spark://spark-01:7077 --total-executor-cores 2 --executor-memory 512mb

Submitting a jar for execution:

    bin/spark-submit --master spark://spark-01:7077 --class SparkWordCount /root/SparkWC-1.0-SNAPSHOT.jar hdfs://192.168.146.111:9000/words.txt hdfs://192.168.146.111:9000/sparkwc/out

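V. Spark Cluster Roles

| YARN | Spark | Role |
| --- | --- | --- |
| ResourceManager | Master | Manages the child nodes |
| NodeManager | Worker | Manages the current node |
| YarnChild | Executor | Runs the computation tasks |
| Client + ApplicationMaster | SparkSubmit | Submits the computation tasks |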

VI. Writing WordCount in the Shell

1. Local mode: bin/spark-shell

scala> sc.textFile("/root/words.txt").flatMap(_.split(" ")).map((_,1)).reduceByKey(_+_).collect

res5: Array[(String, Int)] = Array((is,1), (love,2), (capital,1), (Beijing,2), (China,2), (I,2), (of,1), (the,1))

scala>

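The contents of words.txt are as follows (the output above also counts is, the, capital, and of, so the actual file evidently held more than the two lines shown here):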

I love Beijing

I love China
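To see what each stage of the one-liner contributes, the sketch below mirrors it on ordinary Scala collections, with no Spark involved. It is an illustration only: `groupBy` followed by a sum stands in for Spark's `reduceByKey`, the object name `WordCountSteps` is made up for this example, and the input is just the two lines listed above.

```scala
object WordCountSteps {
  // The two lines listed above, standing in for the RDD that sc.textFile returns.
  val lines: Seq[String] = Seq("I love Beijing", "I love China")

  // flatMap(_.split(" ")): one element per word.
  val words: Seq[String] = lines.flatMap(_.split(" "))

  // map((_, 1)): pair every word with an initial count of 1.
  val pairs: Seq[(String, Int)] = words.map((_, 1))

  // Stand-in for reduceByKey(_ + _): group the pairs by word and add up the 1s.
  val counts: Map[String, Int] =
    pairs.groupBy(_._1).map { case (word, ps) => (word, ps.map(_._2).sum) }

  def main(args: Array[String]): Unit = {
    println(words.mkString(", "))
    counts.toSeq.sortBy(_._1).foreach(println)
  }
}
```

For these two lines the counts come out as I and love twice each, and Beijing and China once each.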

2. Cluster mode: bin/spark-shell --master spark://spark-01:7077 --total-executor-cores 2 --executor-memory 512mb

scala> sc.textFile("/root/words.txt").flatMap(_.split(" ")).map((_,1)).reduceByKey(_+_).collect

res5: Array[(String, Int)] = Array((is,1), (love,2), (capital,1), (Beijing,2), (China,2), (I,2), (of,1), (the,1))

scala>

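Note: if a shell started in cluster mode reads the local file words.txt, that file must exist at the same path on every node.

This is not a concern when the file is read from HDFS.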

scala> sc.textFile("hdfs://192.168.146.111:9000/words.txt").flatMap(_.split("\t")).map((_,1)).reduceByKey(_+_).collect

res6: Array[(String, Int)] = Array((haha,1), (heihei,1), (hello,3), (Beijing,1), (world,1), (China,1))

scala>

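The contents of words.txt in HDFS are as follows (the words on each line are separated by a tab, which is why the command splits on "\t"):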

hello world

hello China

hello Beijing

haha heihei
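The local example splits on a space while the HDFS example splits on "\t", so the delimiter passed to `split` has to match how the file is actually written. The plain-Scala sketch below shows what happens when it does not, along with one tolerant alternative. The `\\s+` pattern is a suggestion of this sketch rather than something the commands above use, and `SplitDelimiters` is a made-up name.

```scala
object SplitDelimiters {
  val tabLine   = "hello\tworld" // fields separated by a tab
  val spaceLine = "hello world"  // fields separated by a single space

  // Splitting on the wrong delimiter leaves the whole line as one "word".
  val wrong: Array[String] = spaceLine.split("\t")

  // Splitting on the matching delimiter yields the individual words.
  val right: Array[String] = tabLine.split("\t")

  // Assumption of this sketch: splitting on any run of whitespace
  // accepts both tab-separated and space-separated input.
  def tokens(line: String): Array[String] = line.trim.split("\\s+")

  def main(args: Array[String]): Unit = {
    println(wrong.length)
    println(right.length)
    println(tokens(tabLine).mkString(","))
    println(tokens(spaceLine).mkString(","))
  }
}
```

With the wrong delimiter every distinct line would be counted as a single word, which is an easy mistake to miss because the job still finishes without an error.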

3. Developing WordCount in IDEA

(1) The SparkWordCount class

    import org.apache.spark.{SparkConf, SparkContext}

    // Spark WordCount, local-mode test
    object SparkWordCount {
      def main(args: Array[String]): Unit = {
        // 1. Set the parameters: setAppName sets the application name;
        //    setMaster sets the thread count for local testing (* means multiple threads)
        val conf: SparkConf = new SparkConf().setAppName("SparkWordCount").setMaster("local[*]")
        // 2. Create the entry point of the Spark program
        val sc: SparkContext = new SparkContext(conf)
        // 3. Load the data and process it
        sc.textFile(args(0))
          .flatMap(_.split("\t"))
          .map((_, 1))
          .reduceByKey(_ + _)
          .sortBy(_._2, false)
          // save the result to files
          .saveAsTextFile(args(1))
        // 4. Release the resources
        sc.stop()
      }
    }
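One thing to watch in this class: a master set in code with `setMaster` takes precedence over the `--master` option of `spark-submit`, so a hard-coded `local[*]` keeps the job local even when the jar is submitted to the cluster. A possible variant, sketched here, falls back to `local[*]` only when no master has been supplied, and checks its arguments first. The object name `SparkWordCountFlexible`, the `buildConf` helper, and the usage message are invented for this example; it needs the same `spark-core` dependency as the original class.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Hypothetical variant of SparkWordCount: same pipeline, but the master
// is only given a default and is never forced.
object SparkWordCountFlexible {
  // Build the configuration; local[*] is used only if spark.master
  // was not already provided, for example by spark-submit --master.
  def buildConf(): SparkConf =
    new SparkConf()
      .setAppName("SparkWordCountFlexible")
      .setIfMissing("spark.master", "local[*]")

  def main(args: Array[String]): Unit = {
    // Expect exactly two arguments: input path and output directory.
    if (args.length != 2) {
      System.err.println("Usage: SparkWordCountFlexible <input> <output>")
      sys.exit(1)
    }

    val sc = new SparkContext(buildConf())
    try {
      sc.textFile(args(0))
        .flatMap(_.split("\t"))
        .map((_, 1))
        .reduceByKey(_ + _)
        .sortBy(_._2, ascending = false)
        .saveAsTextFile(args(1))
    } finally {
      // Release the context even if the job fails.
      sc.stop()
    }
  }
}
```

Run from the IDE, this behaves like the original class; submitted with `--master spark://spark-01:7077`, it should use the cluster instead.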

(2) The pom.xml file

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>com.demo.spark</groupId>
        <artifactId>SparkWC</artifactId>
        <version>1.0-SNAPSHOT</version>

        <properties>
            <maven.compiler.source>1.8</maven.compiler.source>
            <maven.compiler.target>1.8</maven.compiler.target>
            <scala.version>2.11.8</scala.version>
            <spark.version>2.2.0</spark.version>
            <hadoop.version>2.8.4</hadoop.version>
            <encoding>UTF-8</encoding>
        </properties>

        <dependencies>
            <!-- Scala dependency -->
            <dependency>
                <groupId>org.scala-lang</groupId>
                <artifactId>scala-library</artifactId>
                <version>${scala.version}</version>
            </dependency>
            <!-- Spark dependency -->
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_2.11</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <!-- hadoop-client API dependency -->
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-client</artifactId>
                <version>${hadoop.version}</version>
            </dependency>
        </dependencies>

        <build>
            <pluginManagement>
                <plugins>
                    <!-- Scala compiler plugin -->
                    <plugin>
                        <groupId>net.alchim31.maven</groupId>
                        <artifactId>scala-maven-plugin</artifactId>
                        <version>3.2.2</version>
                    </plugin>
                    <!-- Java compiler plugin -->
                    <plugin>
                        <groupId>org.apache.maven.plugins</groupId>
                        <artifactId>maven-compiler-plugin</artifactId>
                        <version>3.5.1</version>
                    </plugin>
                </plugins>
            </pluginManagement>
            <plugins>
                <plugin>
                    <groupId>net.alchim31.maven</groupId>
                    <artifactId>scala-maven-plugin</artifactId>
                    <executions>
                        <execution>
                            <id>scala-compile-first</id>
                            <phase>process-resources</phase>
                            <goals>
                                <goal>add-source</goal>
                                <goal>compile</goal>
                            </goals>
                        </execution>
                        <execution>
                            <id>scala-test-compile</id>
                            <phase>process-test-resources</phase>
                            <goals>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <executions>
                        <execution>
                            <phase>compile</phase>
                            <goals>
                                <goal>compile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <!-- Plugin that builds the jar -->
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-shade-plugin</artifactId>
                    <version>2.4.3</version>
                    <executions>
                        <execution>
                            <phase>package</phase>
                            <goals>
                                <goal>shade</goal>
                            </goals>
                            <configuration>
                                <filters>
                                    <filter>
                                        <artifact>*:*</artifact>
                                        <excludes>
                                            <exclude>META-INF/*.SF</exclude>
                                            <exclude>META-INF/*.DSA</exclude>
                                            <exclude>META-INF/*.RSA</exclude>
                                        </excludes>
                                    </filter>
                                </filters>
                            </configuration>
                        </execution>
                    </executions>
                </plugin>
            </plugins>
        </build>
    </project>

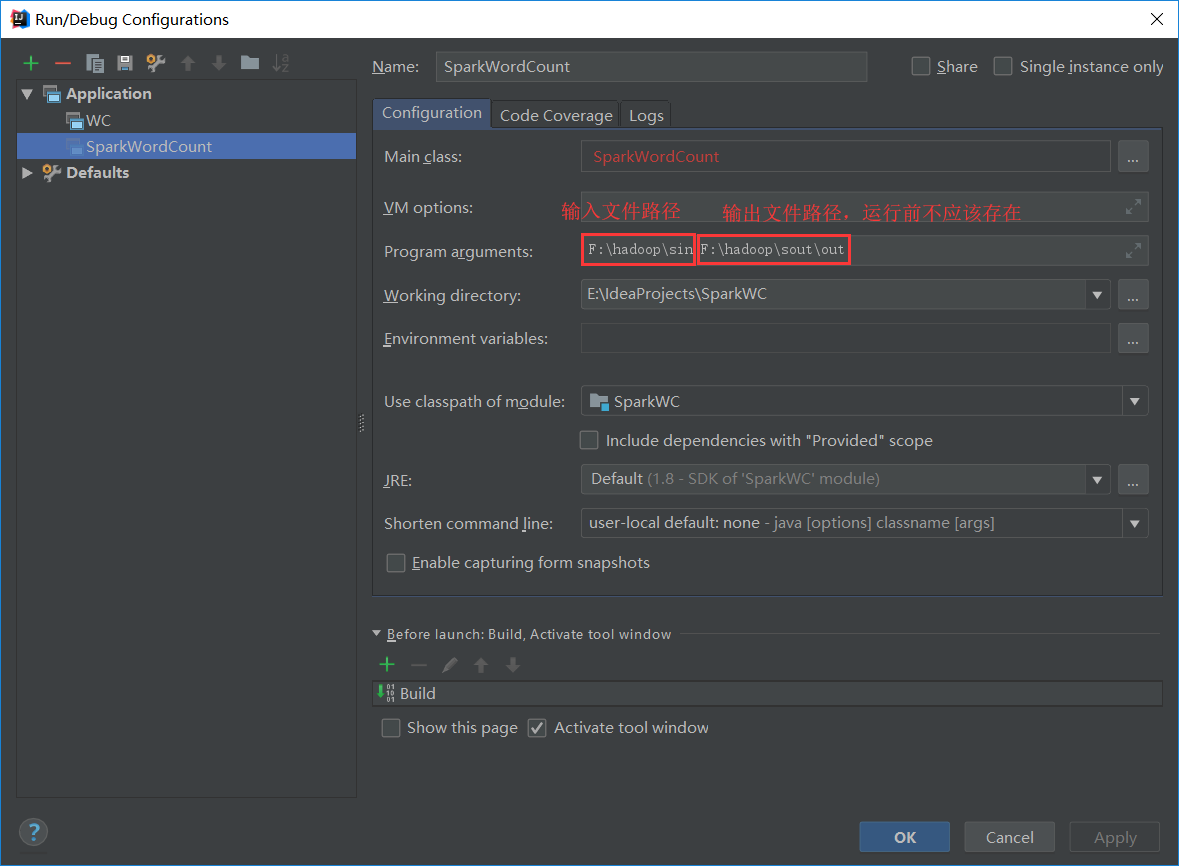

(3) Configure the run arguments for the class

(4) The input file words.txt

hello world

hello spark

hello China

hello Beijing

hello world

(5) Output file part-00000

(hello,5)

(world,2)

(6) Output file part-00001

(Beijing,1)

(spark,1)

(China,1)

4. Submitting the Job with SparkSubmit

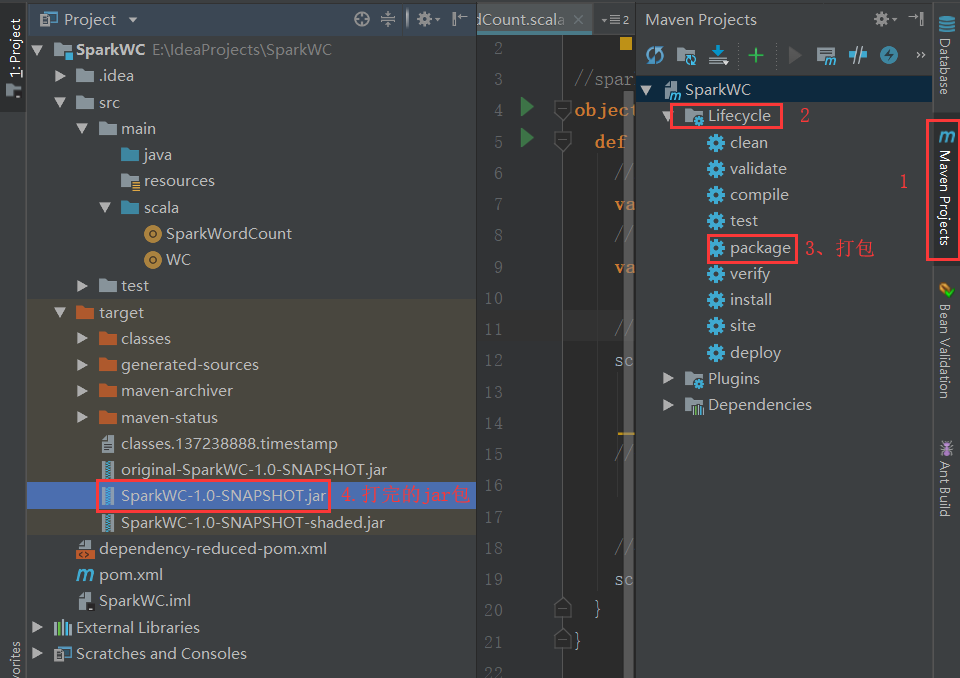

(1) Package the project from the previous step as a jar

(2) Put SparkWC-1.0-SNAPSHOT.jar under /root on the spark-01 machine

(3) Run the following commands

    cd /root/sk/spark-2.2.0-bin-hadoop2.7/
    bin/spark-submit --master spark://spark-01:7077 --class SparkWordCount /root/SparkWC-1.0-SNAPSHOT.jar hdfs://192.168.146.111:9000/words.txt hdfs://192.168.146.111:9000/sparkwc/out
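The order of the pieces on that command line matters: options for `spark-submit` come before the jar, and everything after the jar is handed to the application's `main` as `args(0)`, `args(1)`, and so on. A small sketch that assembles the same command as a list of tokens makes the grouping explicit. The `SubmitCommand` object and its `build` helper are hypothetical names used only for illustration, and nothing is executed.

```scala
object SubmitCommand {
  // Hypothetical helper: returns the command as a list of tokens.
  def build(master: String, mainClass: String, jar: String, appArgs: Seq[String]): Seq[String] =
    Seq("bin/spark-submit", "--master", master, "--class", mainClass) ++ // spark-submit options
      Seq(jar) ++                                                        // the application jar
      appArgs                                                            // passed to main(args)

  val cmd: Seq[String] = build(
    master = "spark://spark-01:7077",
    mainClass = "SparkWordCount",
    jar = "/root/SparkWC-1.0-SNAPSHOT.jar",
    appArgs = Seq(
      "hdfs://192.168.146.111:9000/words.txt",   // becomes args(0): input file
      "hdfs://192.168.146.111:9000/sparkwc/out"  // becomes args(1): output directory
    )
  )

  def main(args: Array[String]): Unit = println(cmd.mkString(" "))
}
```

In `SparkWordCount`, `args(0)` is read by `textFile` and `args(1)` is written by `saveAsTextFile`, so swapping the two paths would make the job try to read the output directory.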

(4) The contents of words.txt in HDFS are as follows:

hello world

hello China

hello Beijing

haha heihei

(5) Output

    # hdfs dfs -ls /sparkwc/out
    Found 3 items
    -rw-r--r--   3 root supergroup          0 2019-01-10 21:43 /sparkwc/out/_SUCCESS
    -rw-r--r--   3 root supergroup         10 2019-01-10 21:43 /sparkwc/out/part-00000
    -rw-r--r--   3 root supergroup         52 2019-01-10 21:43 /sparkwc/out/part-00001
    # hdfs dfs -cat /sparkwc/out/part-00000
    (hello,3)
    # hdfs dfs -cat /sparkwc/out/part-00001
    (haha,1)
    (heihei,1)
    (Beijing,1)
    (world,1)
    (China,1)