Udacity Machine Learning: Neural Networks

Exercise: Build a Perceptron

# ----------
#
# In this exercise, you will add in code that decides whether a perceptron will fire based
# on the threshold. Your code will go in lines 32 and 34.
#
# ----------
import numpy as np

class Perceptron:
    """
    This class models an artificial neuron with step activation function.
    """

Exercise: Where Is a Perceptron Trained?

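Weights

Exercise: Perceptron Inputs

A numeric matrix in which each row carries a label

Exercise: Neural Network Outputs

- A directed graph

- A scalar

- Classification information represented as a vector

- One output vector for each input vector

Exercise: Perceptron Update Rule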

# ----------

#

# In this exercise, you will update the perceptron class so that it can update

# its weights.

#

# Finish writing the update() method so that it updates the weights according

# to the perceptron update rule. Updates should be performed online, revising

# the weights after each data point.

#

# YOUR CODE WILL GO IN LINES 51 AND 59.

# ----------

import numpy as np

class Perceptron:

"""

This class models an artificial neuron with step activation function.

"""

def __init__(self, weights = np.array([1]), threshold = 0):

"""

Initialize weights and threshold based on input arguments. Note that no

type-checking is being performed here for simplicity.

"""

self.weights = weights.astype(float)

self.threshold = threshold

def activate(self, values):

"""

Takes in @param values, a list of numbers equal to length of weights.

@return the output of a threshold perceptron with given inputs based on

perceptron weights and threshold.

"""

# First calculate the strength with which the perceptron fires

strength = np.dot(values,self.weights)

# Then return 0 or 1 depending on strength compared to threshold

return int(strength > self.threshold)

def update(self, values, train, eta=.1):

"""

Takes in a 2D array @param values consisting of a LIST of inputs and a

1D array @param train, consisting of a corresponding list of expected

outputs. Updates internal weights according to the perceptron training

rule using these values and an optional learning rate, @param eta.

"""

# For each data point:

for data_point in xrange(len(values)):

# TODO: Obtain the neuron's prediction for the data_point --> values[data_point]

prediction = self.activate(values[data_point])

# Get the prediction accuracy calculated as (expected value - predicted value)

# expected value = train[data_point], predicted value = prediction

error = train[data_point] - prediction

# TODO: update self.weights based on the multiplication of:

# - prediction accuracy(error)

# - learning rate(eta)

# - input value(values[data_point])

weight_update = eta*error*values[data_point]

self.weights += weight_update

def test():

"""

A few tests to make sure that the perceptron class performs as expected.

Nothing should show up in the output if all the assertions pass.

"""

def sum_almost_equal(array1, array2, tol = 1e-6):

return sum(abs(array1 - array2)) < tol

p1 = Perceptron(np.array([1,1,1]),0)

p1.update(np.array([[2,0,-3]]), np.array([1]))

assert sum_almost_equal(p1.weights, np.array([1.2, 1, 0.7]))

p2 = Perceptron(np.array([1,2,3]),0)

p2.update(np.array([[3,2,1],[4,0,-1]]),np.array([0,0]))

assert sum_almost_equal(p2.weights, np.array([0.7, 1.8, 2.9]))

p3 = Perceptron(np.array([3,0,2]),0)

p3.update(np.array([[2,-2,4],[-1,-3,2],[0,2,1]]),np.array([0,1,0]))

assert sum_almost_equal(p3.weights, np.array([2.7, -0.3, 1.7]))

if __name__ == "__main__":

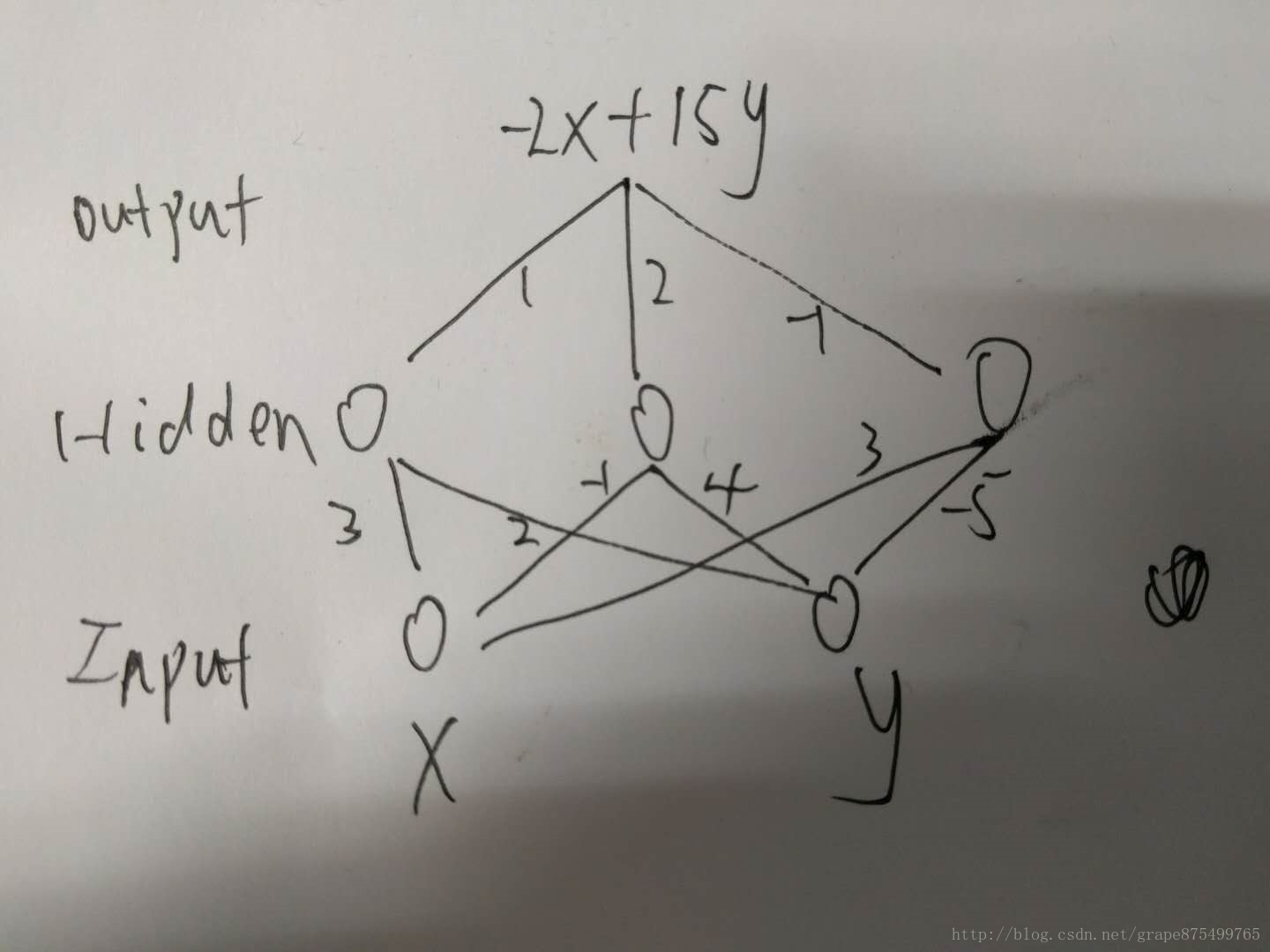

test()練習:多層網路示例

- answer: -25

import numpy as np

X = np.array([1, 2, 3])
weight_hidden = np.array([[1, 1, -5], [3, -4, 2]]).transpose()
weight_output = np.array([[2, -1]]).transpose()

X_hidden = np.dot(X, weight_hidden)
X_output = np.dot(X_hidden, weight_output)
print("{}".format(X_output))

Exercise: Linear Representational Power

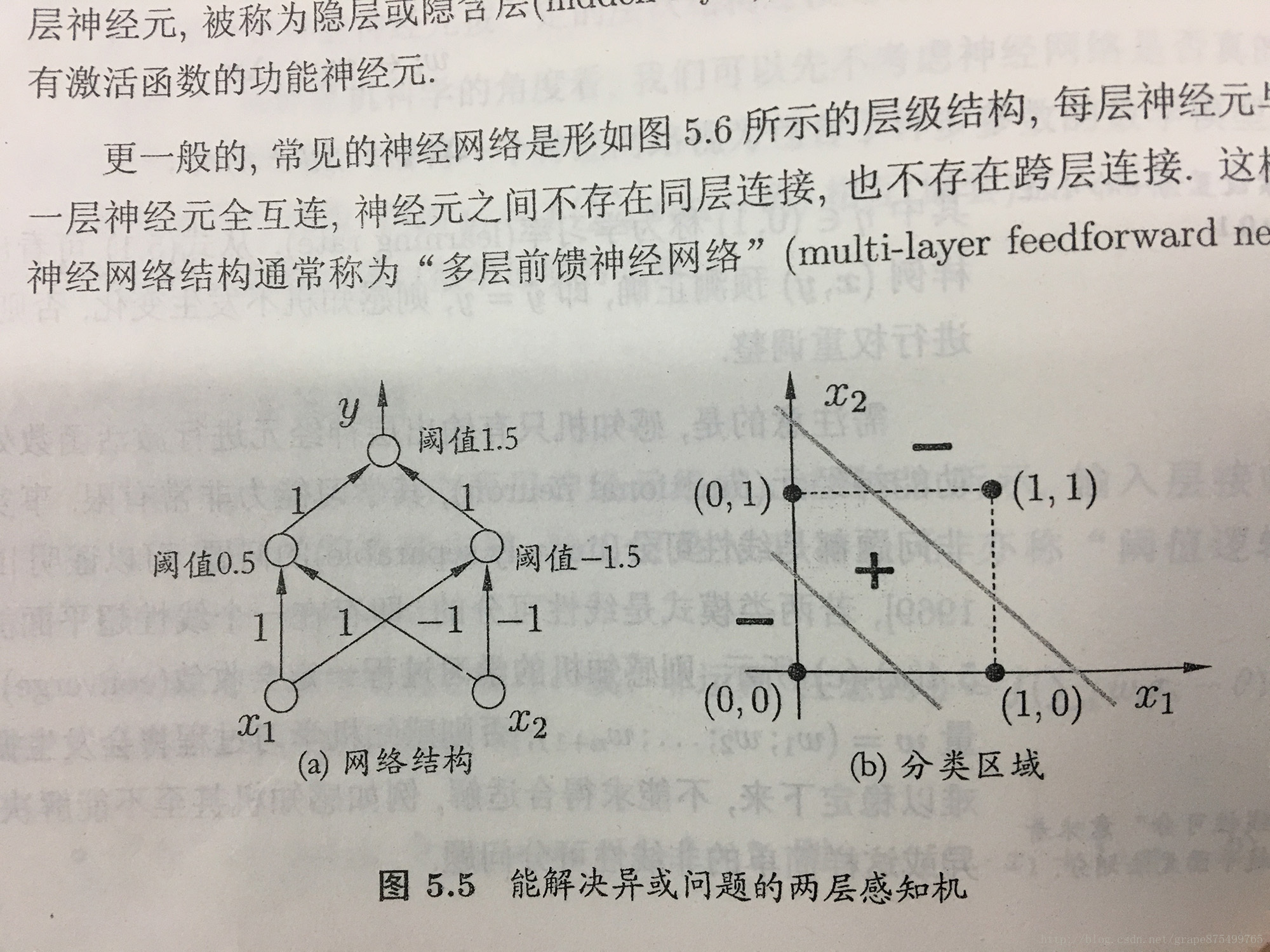

Figure from the Udacity forum
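As a rough sketch of why stacking purely linear layers adds no representational power, the snippet below reuses the weights from the multilayer example above and checks that the two layers collapse into a single weight matrix. The names `two_layer`, `combined`, and `one_layer` are introduced here for illustration only.

```python
import numpy as np

X = np.array([1, 2, 3])
weight_hidden = np.array([[1, 1, -5], [3, -4, 2]]).transpose()  # shape (3, 2)
weight_output = np.array([[2, -1]]).transpose()                  # shape (2, 1)

# Two linear layers applied one after the other (no activation in between).
two_layer = np.dot(np.dot(X, weight_hidden), weight_output)

# The same mapping written as a single linear layer.
combined = np.dot(weight_hidden, weight_output)                  # shape (3, 1)
one_layer = np.dot(X, combined)

print(two_layer, one_layer)
```

Both expressions give the same value because matrix multiplication is associative; a nonlinear activation between the layers is what prevents this collapse.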

Exercise: Build an XOR Network

The algorithm below is built from the figure above

# ----------
#
# In this exercise, you will create a network of perceptrons that can represent
# the XOR function, using a network structure like those shown in the previous
# quizzes.
#
# You will need to do two things:
# First, create a network of perceptrons with the correct weights
# Second, define a procedure EvalNetwork() which takes in a list of inputs and
# outputs the value of this network.
#
# ----------
import numpy as np

class Perceptron:
    """
    This class models an artificial neuron with step activation function.
    """

    def __init__(self, weights = np.array([1]), threshold = 0):
        """
        Initialize weights and threshold based on input arguments. Note that no
        type-checking is being performed here for simplicity.
        """
        self.weights = weights
        self.threshold = threshold

    def activate(self, values):
        """
        Takes in @param values, a list of numbers equal to length of weights.
        @return the output of a threshold perceptron with given inputs based on
        perceptron weights and threshold.
        """
        # First calculate the strength with which the perceptron fires
        strength = np.dot(values,self.weights)
        # Then return 0 or 1 depending on strength compared to threshold
        return int(strength > self.threshold)

# Part 1: Set up the perceptron network
Network = [
    # input layer, declare input layer perceptrons here
    # (the first unit computes OR, the second computes NAND)
    [ Perceptron(np.array([1,1]),0.5),Perceptron(np.array([-1,-1]),-1.5) ],
    # output node, declare output layer perceptron here
    # (AND of the two units above, which yields XOR)
    [ Perceptron(np.array([1,1]),1.5) ]
]

# Part 2: Define a procedure to compute the output of the network, given inputs
def EvalNetwork(inputValues, Network):
    """
    Takes in @param inputValues, a list of input values, and @param Network
    that specifies a perceptron network. @return the output of the Network for
    the given set of inputs.
    """
    # YOUR CODE HERE
    p1 = Network[0][0].activate(inputValues)
    p2 = Network[0][1].activate(inputValues)
    OutputValue = Network[1][0].activate(np.array([p1,p2]))
    # Be sure your output value is a single number
    return OutputValue

def test():
    """
    A few tests to make sure that the perceptron class performs as expected.
    """
    print("0 XOR 0 = 0?:", EvalNetwork(np.array([0,0]), Network))
    print("0 XOR 1 = 1?:", EvalNetwork(np.array([0,1]), Network))
    print("1 XOR 0 = 1?:", EvalNetwork(np.array([1,0]), Network))
    print("1 XOR 1 = 0?:", EvalNetwork(np.array([1,1]), Network))

if __name__ == "__main__":
    test()

Exercise: Discretion Quiz

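- answer: 4

As with the XOR structure, there are at most four output cases: the decision lines cross and split the plane into four regions, which correspond to four classes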
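A minimal sketch of this counting argument, assuming two hypothetical threshold units whose decision lines cross (the weights and sample points below are made up for illustration): each point is mapped to a pair of 0/1 outputs, and at most four distinct pairs can occur.

```python
import numpy as np

def step(strength, threshold=0.0):
    """Threshold activation: 1 if strength exceeds the threshold, else 0."""
    return int(strength > threshold)

# Two hypothetical decision lines through the origin: x = 0 and y = 0.
w1 = np.array([1.0, 0.0])
w2 = np.array([0.0, 1.0])

# One sample point in each quadrant of the plane.
points = np.array([[1, 1], [-1, 1], [-1, -1], [1, -1]])

# Each point is labeled by the pair of outputs from the two units.
regions = {(step(np.dot(p, w1)), step(np.dot(p, w2))) for p in points}
print(sorted(regions))
```

Two binary outputs can take only 2 x 2 = 4 combinations, so the set `regions` can never hold more than four labels no matter how many points are sampled.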

Exercise: Activation Function Sandbox

# ----------
#
# Python Neural Networks code originally by Szabo Roland and used with
# permission
#
# Modifications, comments, and exercise breakdowns by Mitchell Owen,
# (c) Udacity
#
# Retrieved originally from http://rolisz.ro/2013/04/18/neural-networks-in-python/
#
#
# Neural Network Sandbox
#
# Define an activation function activate(), which takes in a number and
# returns a number.
# Using test run you can see the performance of a neural network running with
# that activation function, where the inputs are 8x8 images of digits (0-9) and
# the outputs are digit predictions made by the network.
#
# ----------
import numpy as np

def activate(strength):
    # Try out different functions here. Input strength will be a number, with
    # another number as output.
    return np.power(strength,2)

def activation_derivative(activate, strength):
    # numerically approximate the derivative with a central difference
    return (activate(strength+1e-5)-activate(strength-1e-5))/(2e-5)

Exercise: Activation Function Quiz

- Logistic function

Exercise: Perceptron vs. Sigmoid

- The latter gives more information, but both produce the same result
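One way to read this answer, sketched under the assumption that the perceptron threshold is 0 and the sigmoid output is cut at 0.5 (the helper names and sample strengths are illustrative, not from the course code): the sigmoid reports a graded value, yet the final yes/no decision matches the step unit.

```python
import numpy as np

def step(strength):
    """Perceptron output with an assumed threshold of 0."""
    return int(strength > 0)

def sigmoid(strength):
    """Logistic function, which maps any real number into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-strength))

strengths = [-3.0, -0.5, 0.5, 3.0]
for s in strengths:
    # Columns: strength, step output, sigmoid output, sigmoid cut at 0.5
    print(s, step(s), round(sigmoid(s), 3), int(sigmoid(s) > 0.5))

decisions_match = all(step(s) == int(sigmoid(s) > 0.5) for s in strengths)
```

The sigmoid column shows how far each input is from the decision boundary, which is the extra information the step output discards.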

Exercise: Sigmoid Learning

- Use calculus
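As a sketch of the calculus involved, assuming the unit minimizes a squared error $E$ (the loss is not stated in these notes; $s$, $\sigma$, $y$, and $E$ are symbols introduced here), differentiating through the logistic function gives a weight update of the same form as the `eta*dx*error*X` rule used in the sigmoid programming exercise:

```latex
\begin{aligned}
s &= \sum_i w_i x_i, \qquad
\sigma(s) = \frac{1}{1 + e^{-s}}, \qquad
\sigma'(s) = \sigma(s)\,\bigl(1 - \sigma(s)\bigr) \\
E &= \tfrac{1}{2}\,\bigl(y - \sigma(s)\bigr)^2 \\
\frac{\partial E}{\partial w_i} &= -\bigl(y - \sigma(s)\bigr)\,\sigma'(s)\,x_i \\
\Delta w_i &= -\eta\,\frac{\partial E}{\partial w_i}
            = \eta\,\bigl(y - \sigma(s)\bigr)\,\sigma(s)\,\bigl(1 - \sigma(s)\bigr)\,x_i
\end{aligned}
```

The step activation of a perceptron has zero slope wherever it is differentiable, so the same derivation yields no usable gradient there.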

Exercise: Gradient Descent Problems

- Local extrema

- Takes too long to run

- Can loop indefinitely

- Fails to converge
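The local-extrema problem can be illustrated with a small sketch. The function `f`, the learning rate, and the starting points below are arbitrary choices for illustration, not part of the course material: the same gradient descent loop settles in different minima depending on where it starts.

```python
def f(x):
    """A non-convex function with two minima (chosen for illustration)."""
    return x**4 - 3 * x**2 + x

def grad_f(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x, eta=0.01, steps=1000):
    """Repeatedly step against the gradient, starting from x."""
    for _ in range(steps):
        x -= eta * grad_f(x)
    return x

left = gradient_descent(-2.0)   # starts left of both minima
right = gradient_descent(2.0)   # starts right of both minima

print(left, f(left))
print(right, f(right))
```

The run started on the right stops in the shallower minimum, even though a lower value of `f` exists on the left.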

Exercise: Sigmoid Programming Exercise

# ----------
#
# As with the previous perceptron exercises, you will complete some of the core
# methods of a sigmoid unit class.
#
# There are two functions for you to finish:
# First, in activate(), write the sigmoid activation function.
# Second, in update(), write the gradient descent update rule. Updates should be
# performed online, revising the weights after each data point.
#
# ----------
import numpy as np

class Sigmoid:
    """
    This class models an artificial neuron with sigmoid activation function.
    """

    def __init__(self, weights = np.array([1])):
        """
        Initialize weights based on input arguments. Note that no type-checking
        is being performed here for simplicity of code.
        """
        # Store a float array so that the in-place update in update() works
        # when weights is passed as a plain list or an integer array.
        self.weights = np.array(weights, dtype=float)

        # NOTE: You do not need to worry about these two attributes for this
        # programming quiz, but these will be useful for if you want to create
        # a network out of these sigmoid units!
        self.last_input = 0 # strength of last input
        self.delta = 0 # error signal

    def activate(self, values):
        """
        Takes in @param values, a list of numbers equal to length of weights.
        @return the output of a sigmoid unit with given inputs based on unit
        weights.
        """
        # YOUR CODE HERE
        # First calculate the strength of the input signal.
        strength = np.dot(values, self.weights)
        self.last_input = strength

        # TODO: Modify strength using the sigmoid activation function and
        # return as output signal.
        # HINT: You may want to create a helper function to compute the
        # logistic function since you will need it for the update function.
        result = self.logistic(strength)
        return result

    def logistic(self, X):
        return 1.0/(1+np.exp(-X))

    def update(self, values, train, eta=.1):
        """
        Takes in a 2D array @param values consisting of a LIST of inputs and a
        1D array @param train, consisting of a corresponding list of expected
        outputs. Updates internal weights according to gradient descent using
        these values and an optional learning rate, @param eta.
        """
        # TODO: for each data point...
        for X, y_true in zip(values, train):
            # obtain the output signal for that point
            y_pred = self.activate(X)

            # YOUR CODE HERE
            error = y_true - y_pred

            # TODO: compute derivative of logistic function at input strength
            # Recall: d/dx logistic(x) = logistic(x)*(1-logistic(x))
            dx = self.logistic(self.last_input) * (1-self.logistic(self.last_input))

            # TODO: update self.weights based on learning rate, signal accuracy,
            # function slope (derivative) and input value
            weight_update = eta*dx*error*X
            self.weights += weight_update

def test():
    """
    A few tests to make sure that the sigmoid unit class performs as expected.
    Nothing should show up in the output if all the assertions pass.
    """
    def sum_almost_equal(array1, array2, tol = 1e-5):
        return sum(abs(array1 - array2)) < tol

    u1 = Sigmoid(weights=[3,-2,1])
    assert abs(u1.activate(np.array([1,2,3])) - 0.880797) < 1e-5
    u1.update(np.array([[1,2,3]]),np.array([0]))
    assert sum_almost_equal(u1.weights, np.array([2.990752, -2.018496, 0.972257]))

    u2 = Sigmoid(weights=[0,3,-1])
    u2.update(np.array([[-3,-1,2],[2,1,2]]),np.array([1,0]))
    assert sum_almost_equal(u2.weights, np.array([-0.030739, 2.984961, -1.027437]))

if __name__ == "__main__":
    test()
