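AI-009: Study Notes on Professor Andrew Ng's Machine Learning Course, Lectures 38-47

These are my study notes from Andrew Ng's machine learning course series. Course video link: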

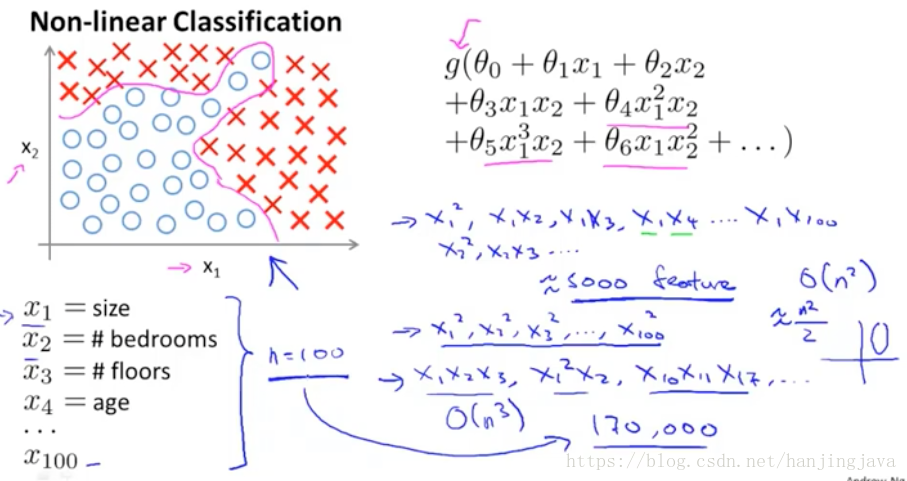

38. Neural Networks - Representation - Non-linear hypotheses

Why neural networks?

Simple linear or logistic regression with added quadratic or cubic features is not a good way to learn complex nonlinear hypotheses when n is large, because you end up with too many features: the number of quadratic terms alone grows roughly as n²/2.

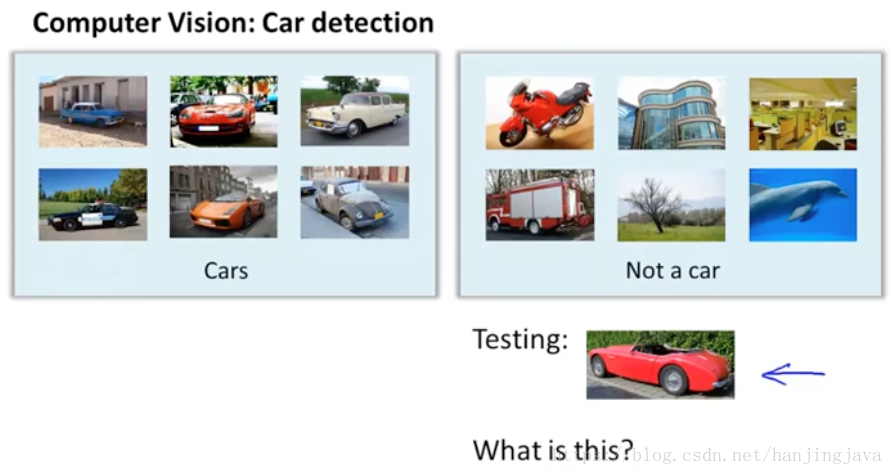

For example: visual recognition, where every pixel is a feature.

The computation would be very expensive to find and represent all of these features.
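To make the feature explosion concrete, here is a small sketch that counts the distinct quadratic and cubic terms for n input features. The function names are hypothetical, and the 50x50 grayscale image (n = 2500) is only an illustrative assumption.

```python
def count_quadratic_features(n):
    """Number of distinct products x_i * x_j with i <= j."""
    return n * (n + 1) // 2


def count_cubic_features(n):
    """Number of distinct products x_i * x_j * x_k with i <= j <= k."""
    return n * (n + 1) * (n + 2) // 6


if __name__ == "__main__":
    # 2500 corresponds to a 50x50 grayscale image, one feature per pixel.
    for n in (100, 2500):
        print(n, count_quadratic_features(n), count_cubic_features(n))
```

Even with only quadratic terms, a 2500-pixel image already yields about 3 million features, which is why a different kind of hypothesis is needed.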

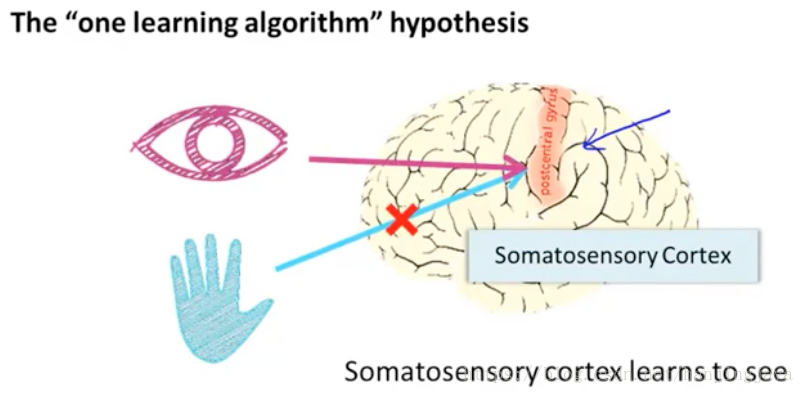

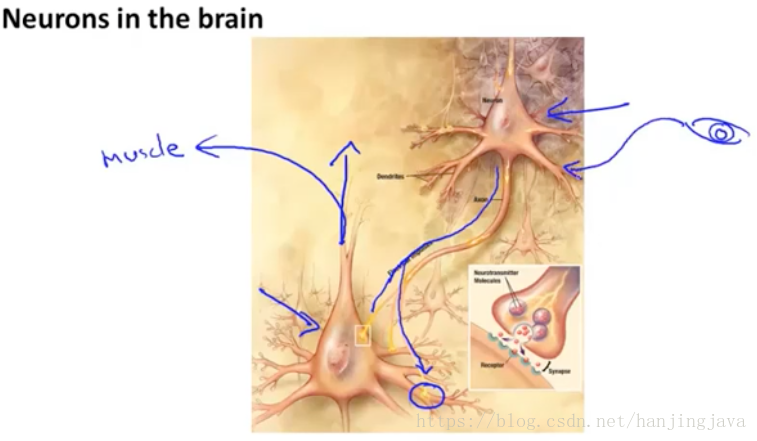

39. Neural Networks - Representation - neurons and the brain

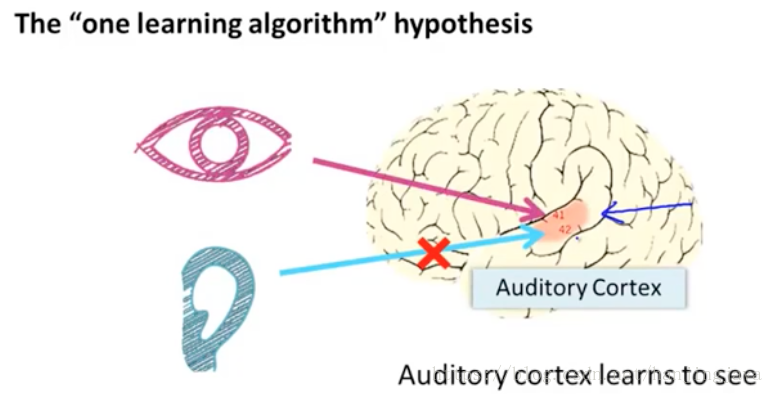

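Neural networks were designed to mimic the brain. The brain can develop different functions depending on the signals routed into it: for example, if visual signals are wired into the auditory region, that region learns to "see".

If the nerve from the ear or the hand to its region of the brain is cut and the nerve from the eye is connected instead, that part of the brain learns to see (neuro-rewiring experiments).

The goal is to find out the brain’s learning algorithm!

You can plug almost any sensor into the brain, and the brain’s learning algorithm will figure out how to learn from that data and deal with it.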

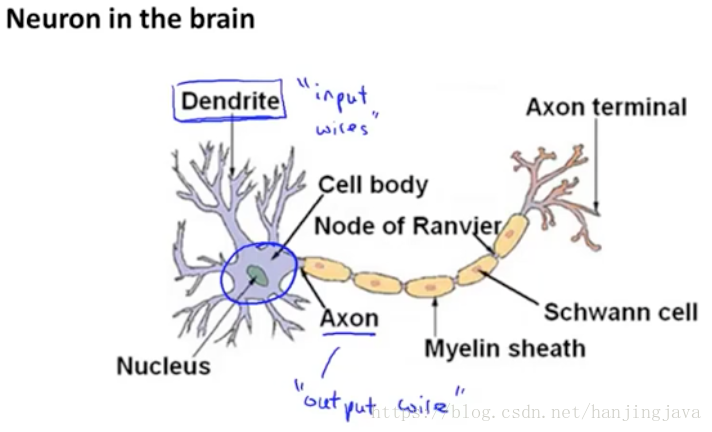

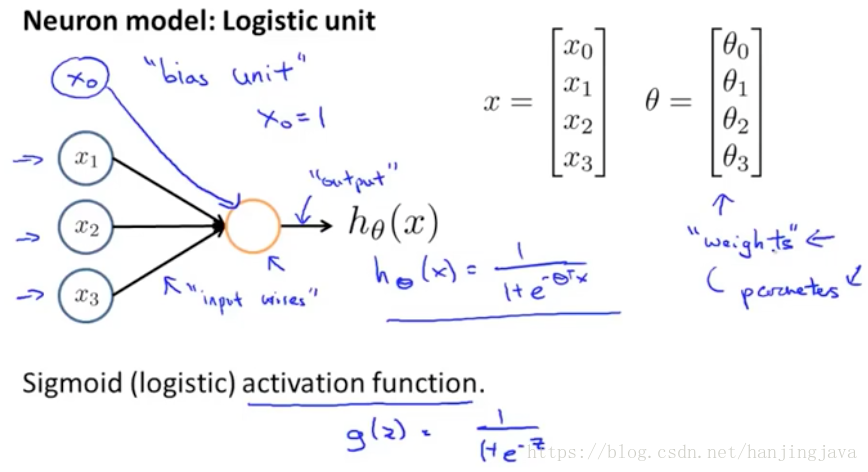

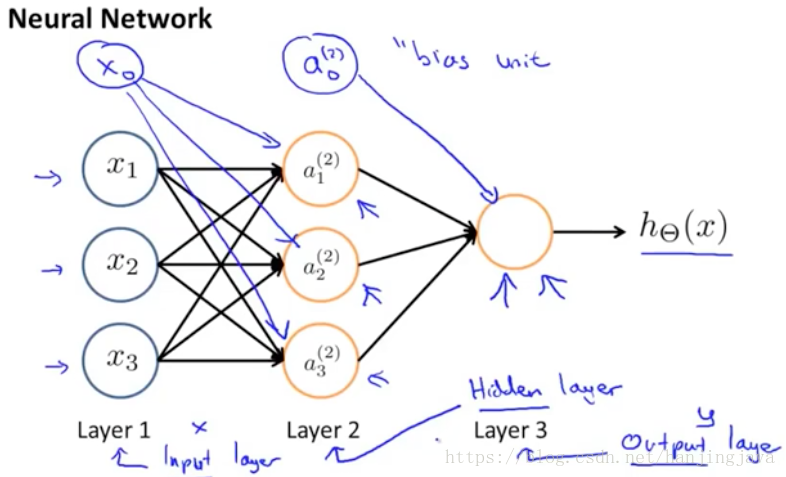

40. Neural Networks - Representation - Model representation I

Nucleus

Dendrite (input wire)

Cell body

Node of Ranvier

Axon (output wire)

Myelin sheath

Schwann cell

Axon terminal

One neuron sends a small pulse of electricity through its axon to another neuron’s dendrite.

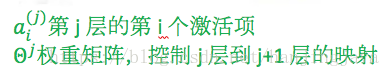

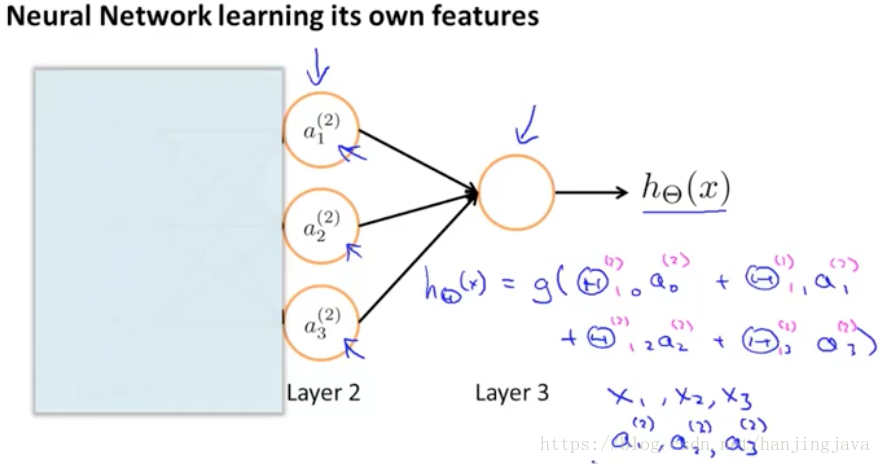

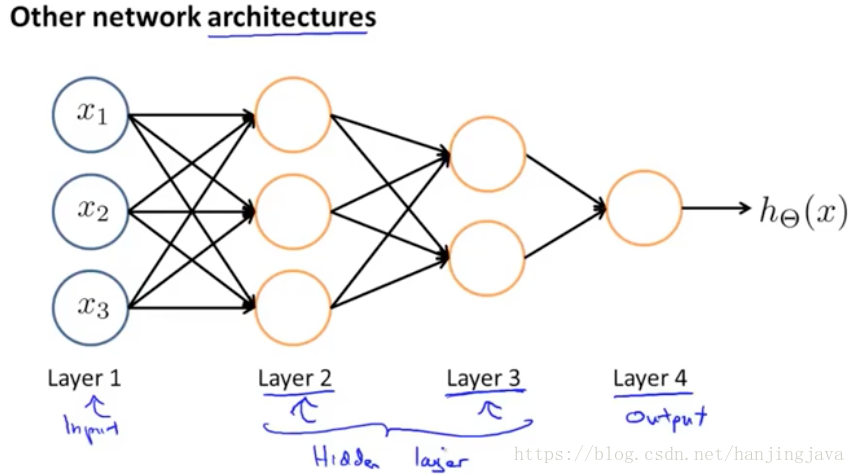

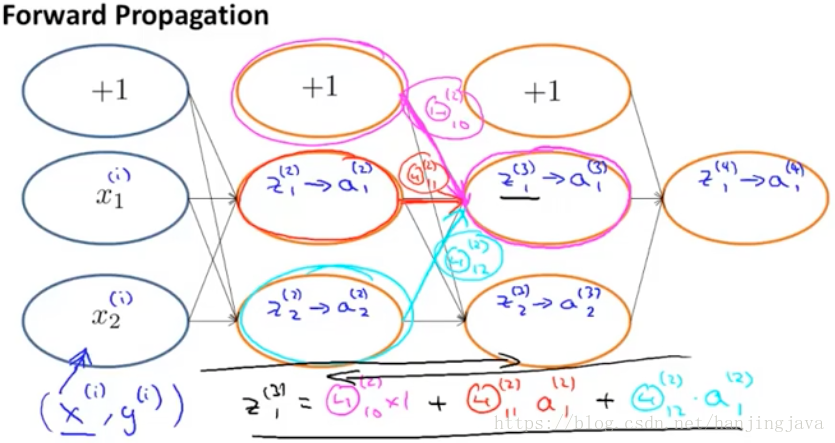

Next, we walk through the computational steps represented by this diagram.

Forward propagation
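Forward propagation can be sketched in a few lines of plain Python. This is a minimal illustration, not the course's Octave code: each layer prepends a bias unit of 1, multiplies by its weight matrix, and applies the sigmoid function. The weight values in the demo are made up.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(theta, activations):
    """Compute g(theta * [1; activations]) for one layer.

    theta is a list of rows; each row holds the bias weight first,
    followed by one weight per incoming activation.
    """
    with_bias = [1.0] + list(activations)
    return [sigmoid(sum(w * a for w, a in zip(row, with_bias))) for row in theta]


def forward_propagation(thetas, x):
    """Propagate input x through every layer and return all activations."""
    activations = [list(x)]
    for theta in thetas:
        activations.append(layer_forward(theta, activations[-1]))
    return activations


if __name__ == "__main__":
    # Hypothetical weights: 3 inputs -> 3 hidden units -> 1 output.
    theta1 = [[0.1, 0.2, -0.3, 0.4],
              [-0.5, 0.6, 0.7, -0.8],
              [0.9, -1.0, 1.1, 1.2]]
    theta2 = [[0.3, -0.6, 0.9, -1.2]]
    a1, a2, a3 = forward_propagation([theta1, theta2], [1.0, 0.5, -1.5])
    print("hidden activations a2:", a2)
    print("hypothesis h(x) = a3:", a3)
```

The last entry of the returned list is the hypothesis output; the earlier entries are the intermediate activations of each layer.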

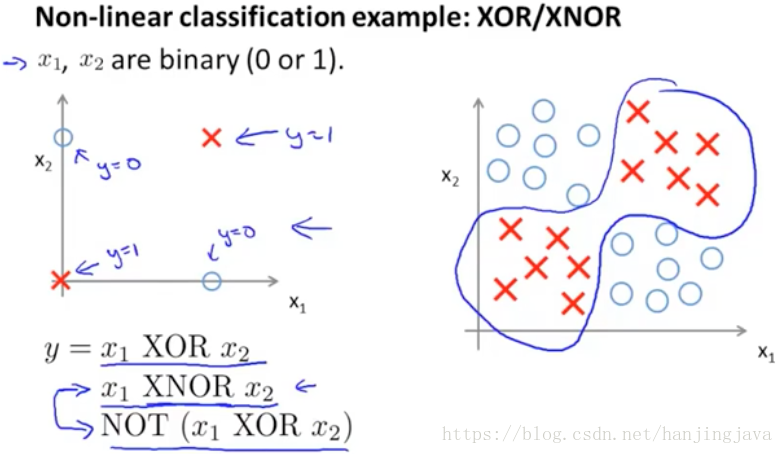

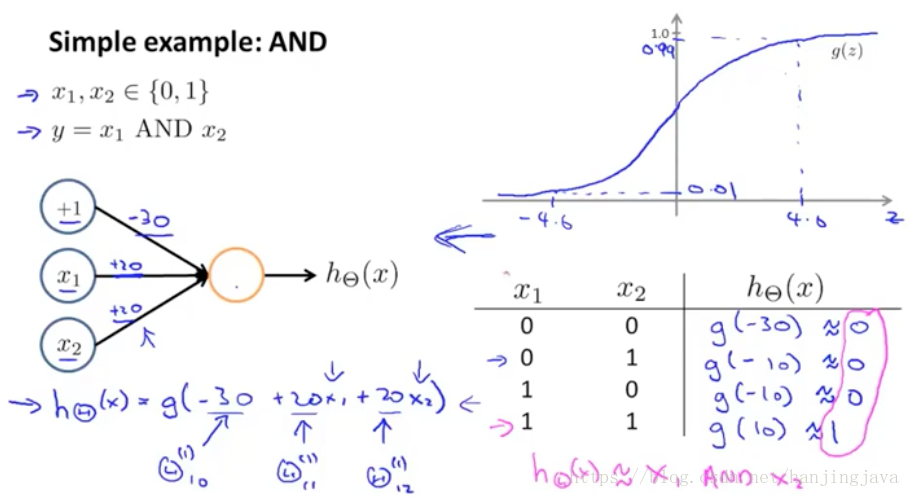

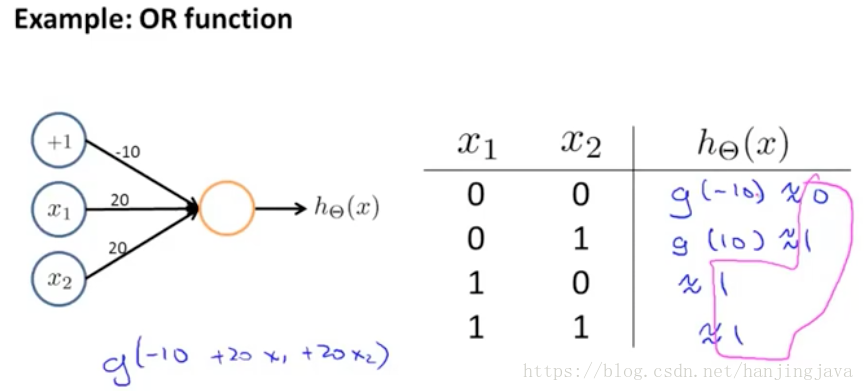

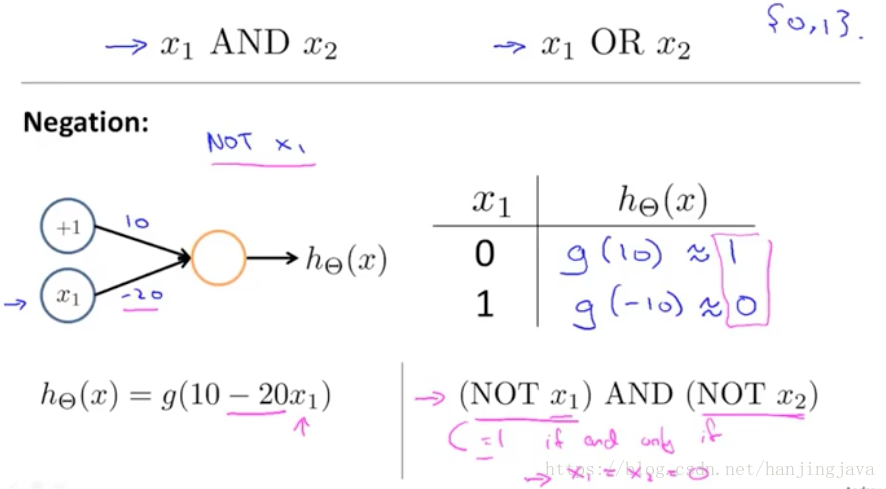

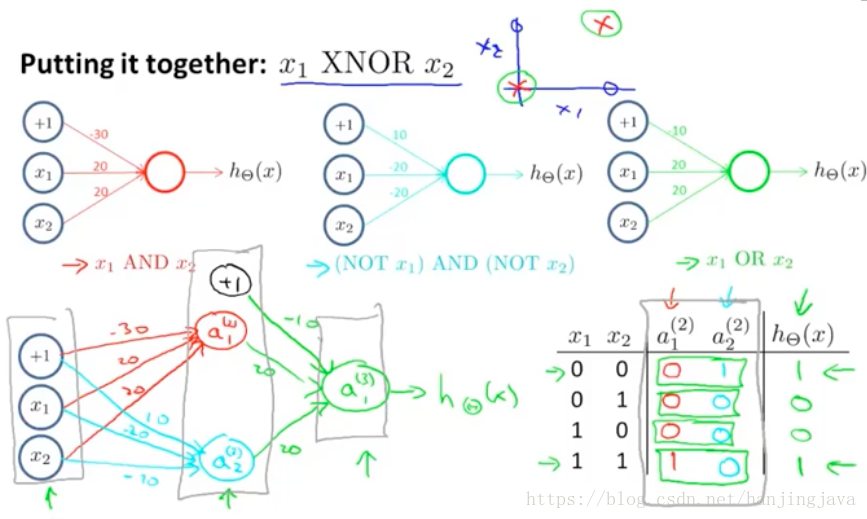

41. Neural Networks - Representation - examples and intuitions

To compute NOT, just put a large negative weight in front of the variable you want to negate.

By combining such simple units in layers, we end up with a nonlinear decision boundary.
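The logic-gate intuition can be checked with single sigmoid units. The sketch below uses the weight values I recall from the lecture slides (for example -30, 20, 20 for AND); treat them as one workable choice rather than the only one. The function names are my own.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def unit(weights, inputs):
    """One sigmoid unit; weights[0] is the bias weight."""
    z = weights[0] + sum(w * x for w, x in zip(weights[1:], inputs))
    return sigmoid(z)


def logical_and(x1, x2):
    return unit([-30, 20, 20], [x1, x2])


def logical_or(x1, x2):
    return unit([-10, 20, 20], [x1, x2])


def logical_not(x1):
    # A large negative weight in front of x1 negates it.
    return unit([10, -20], [x1])


def logical_nor(x1, x2):
    # (NOT x1) AND (NOT x2)
    return unit([10, -20, -20], [x1, x2])


def xnor(x1, x2):
    """Two-layer network: hidden units AND and NOR, output unit OR."""
    return logical_or(logical_and(x1, x2), logical_nor(x1, x2))


if __name__ == "__main__":
    for x1 in (0, 1):
        for x2 in (0, 1):
            print(x1, x2, round(xnor(x1, x2)))
```

XNOR is not linearly separable, so no single unit can compute it; stacking a second layer on top of AND and NOR is what produces the nonlinear boundary.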

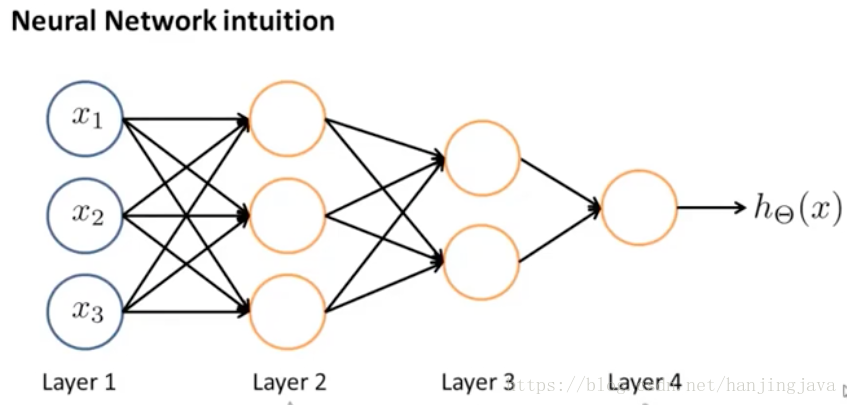

Each layer computes more complex functions of the previous layer's outputs, which is how a neural network can handle complex problems.

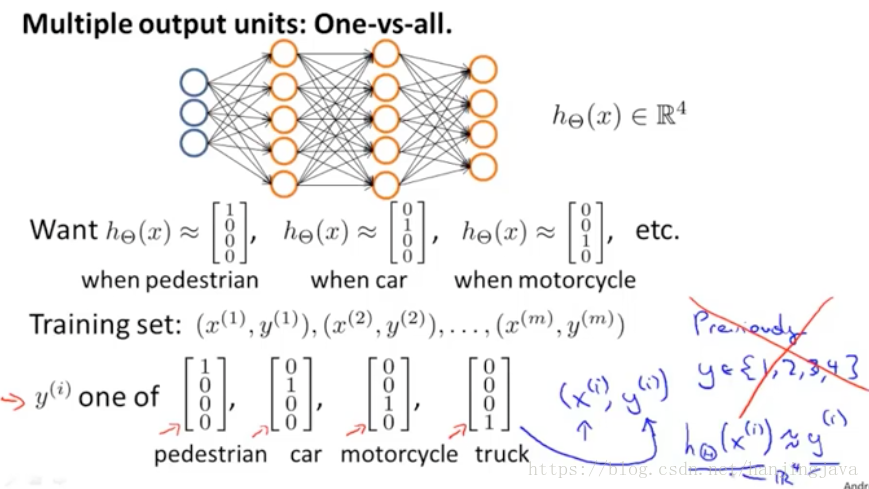

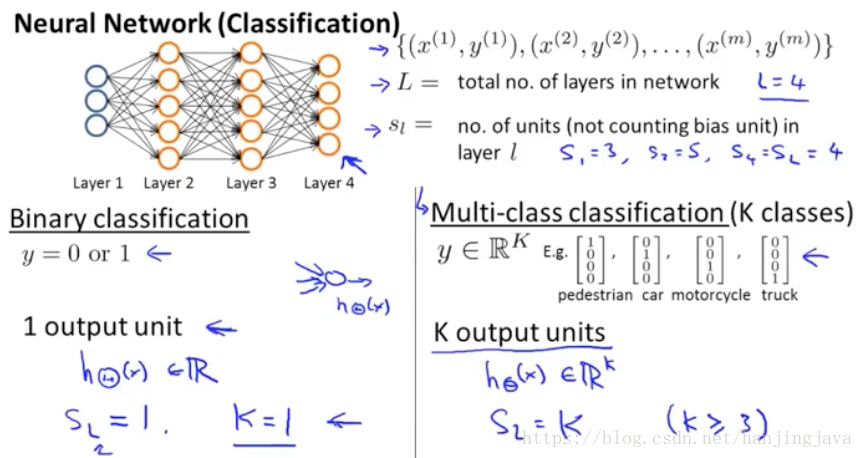

41. Neural Networks - Representation - Multi-class classification

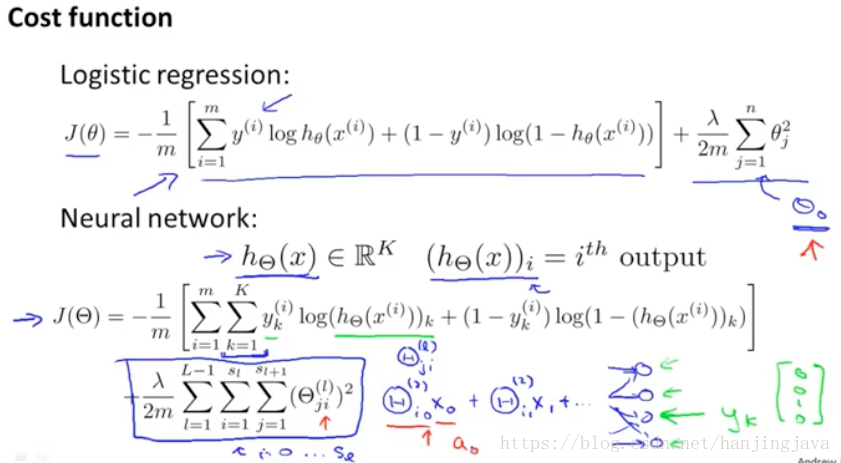

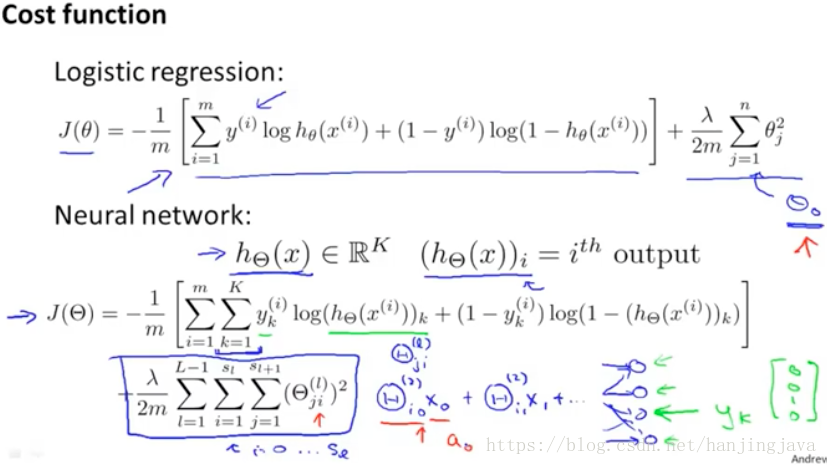

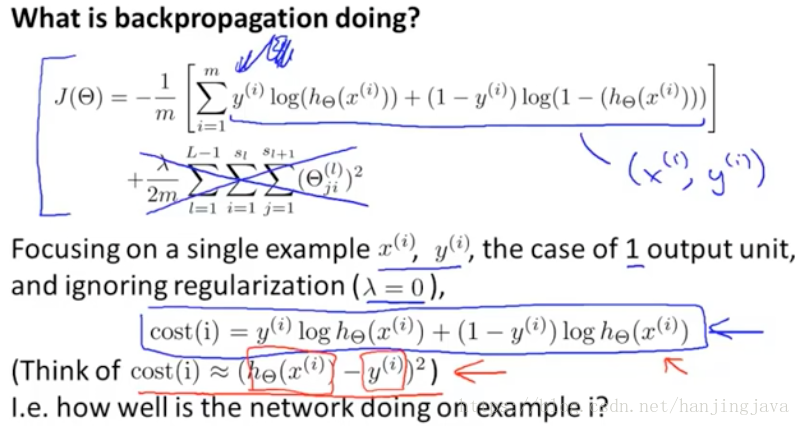

42. Neural Networks - Learning - Cost function

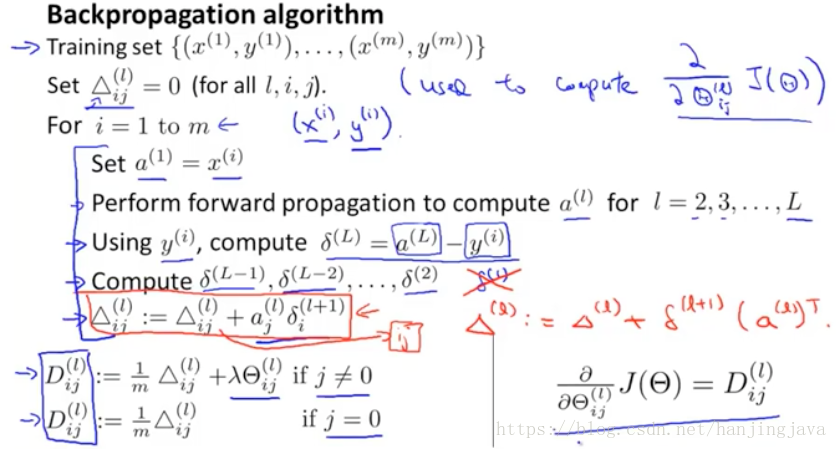

42. Neural Networks - Learning - back propagation algorithm

Backpropagation algorithm

The key is how to compute these partial derivative terms, that is, the partial derivatives of the cost function J(Θ) with respect to every parameter.
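As a rough illustration of how those partial derivatives can be computed, here is a minimal backpropagation sketch for a single training example. It assumes sigmoid activations, the unregularized cross-entropy cost, and weight matrices stored as lists of rows with the bias weight first. The function names and demo weights are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(thetas, x):
    """Return the activations of every layer.

    Every layer except the output has the bias unit 1.0 prepended.
    """
    activations = []
    a = list(x)
    for theta in thetas:
        a = [1.0] + a
        activations.append(a)
        a = [sigmoid(sum(w * v for w, v in zip(row, a))) for row in theta]
    activations.append(a)
    return activations


def cost(thetas, x, y):
    """Unregularized cross-entropy cost for a single example."""
    h = forward(thetas, x)[-1]
    return -sum(yk * math.log(hk) + (1 - yk) * math.log(1 - hk)
                for hk, yk in zip(h, y))


def backprop(thetas, x, y):
    """Return dJ/dTheta for every layer, for a single example."""
    acts = forward(thetas, x)
    delta = [hk - yk for hk, yk in zip(acts[-1], y)]  # output-layer error
    grads = [None] * len(thetas)
    for l in range(len(thetas) - 1, -1, -1):
        a = acts[l]  # activations feeding thetas[l], bias unit at index 0
        grads[l] = [[d * aj for aj in a] for d in delta]
        if l > 0:
            # Propagate the error back, skipping the bias column of theta.
            delta = [
                sum(thetas[l][i][j] * delta[i] for i in range(len(delta)))
                * a[j] * (1 - a[j])
                for j in range(1, len(a))
            ]
    return grads


if __name__ == "__main__":
    # Hypothetical weights: 2 inputs -> 2 hidden units -> 1 output.
    thetas = [[[0.1, -0.2, 0.3], [0.4, 0.5, -0.6]], [[0.7, -0.8, 0.9]]]
    print(backprop(thetas, [1.0, -2.0], [1.0]))
```

For a full training set, these per-example gradients would be accumulated and averaged, with a regularization term added for the non-bias weights.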

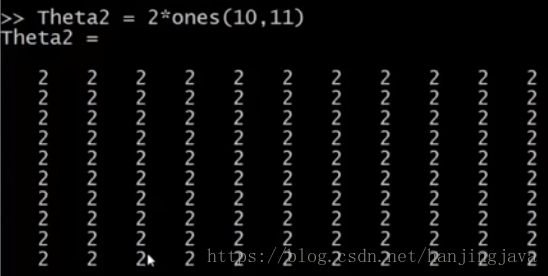

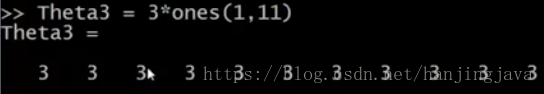

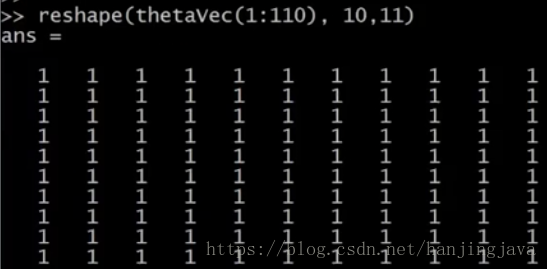

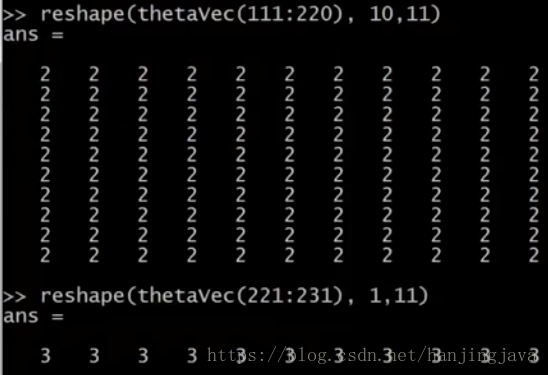

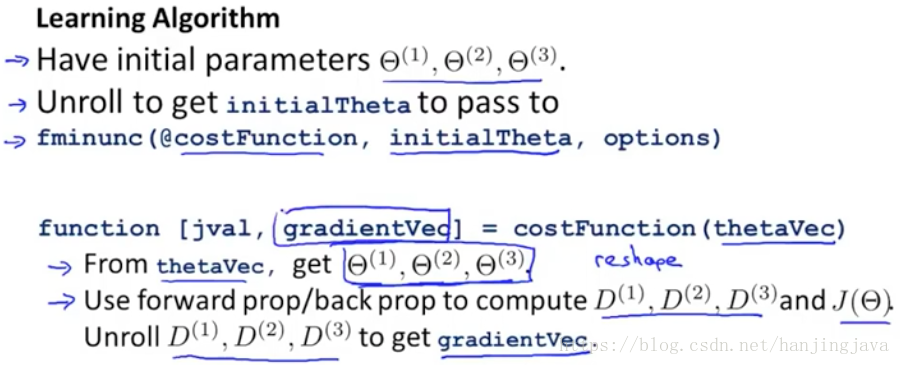

43. Neural Networks - Learning - Implementation note: unrolling parameters

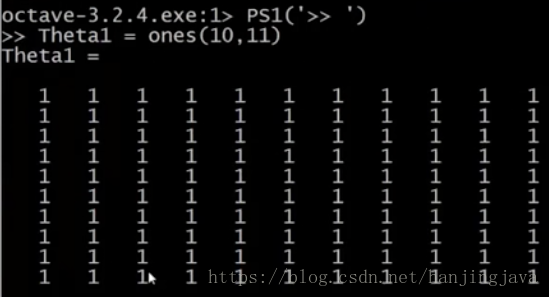

How to convert back and forth between the matrix representation of the parameters and the vector representation.

The advantage of the matrix representation is that forward propagation and backpropagation are more convenient when the parameters are stored as matrices, and it is easier to take advantage of vectorized implementations.

The advantage of the vector representation, such as thetaVec or DVec, is that advanced optimization algorithms tend to assume all of your parameters are unrolled into one long vector.
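The conversion in both directions can be sketched as follows. This is a plain-Python illustration with hypothetical helper names; note that it flattens row by row, whereas Octave's `Theta(:)` unrolls column by column, so the element order differs from the course code.

```python
def unroll(matrices):
    """Flatten a list of matrices (lists of rows) into one long vector."""
    return [value for matrix in matrices for row in matrix for value in row]


def reshape(vector, shapes):
    """Rebuild matrices of the given (rows, cols) shapes from a flat vector."""
    matrices, start = [], 0
    for rows, cols in shapes:
        matrix = [vector[start + r * cols: start + (r + 1) * cols]
                  for r in range(rows)]
        matrices.append(matrix)
        start += rows * cols
    if start != len(vector):
        raise ValueError("vector length does not match the requested shapes")
    return matrices


if __name__ == "__main__":
    theta1 = [[1, 2, 3], [4, 5, 6]]   # 2 x 3
    theta2 = [[7, 8, 9]]              # 1 x 3
    theta_vec = unroll([theta1, theta2])
    print(theta_vec)
    print(reshape(theta_vec, [(2, 3), (1, 3)]))
```

The optimizer works on `theta_vec`; inside the cost function the vector is reshaped back into matrices before running forward and back propagation.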

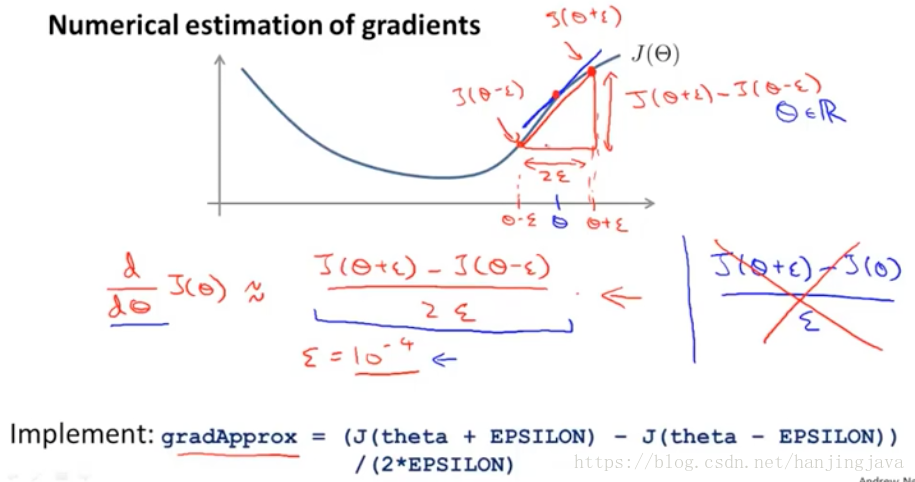

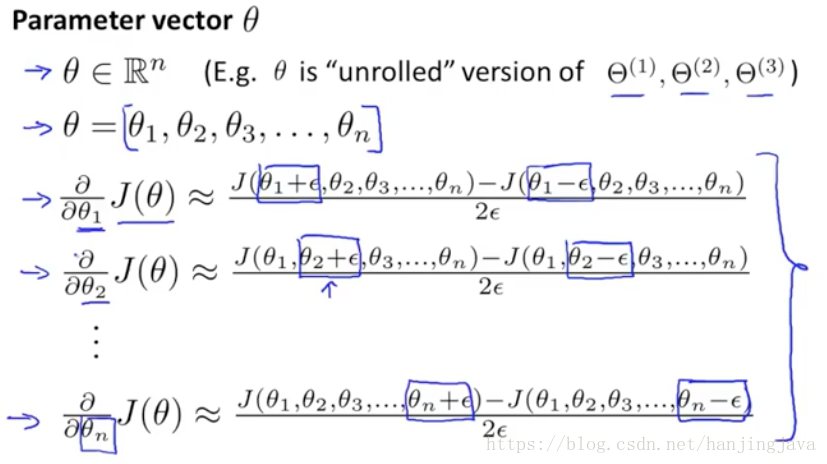

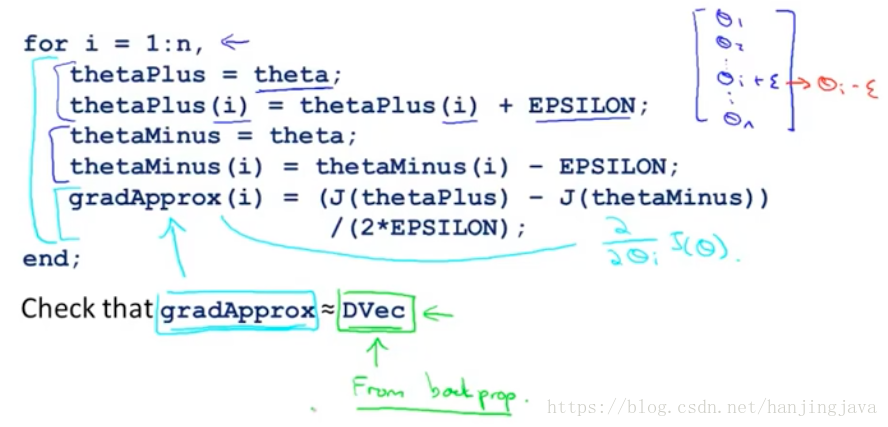

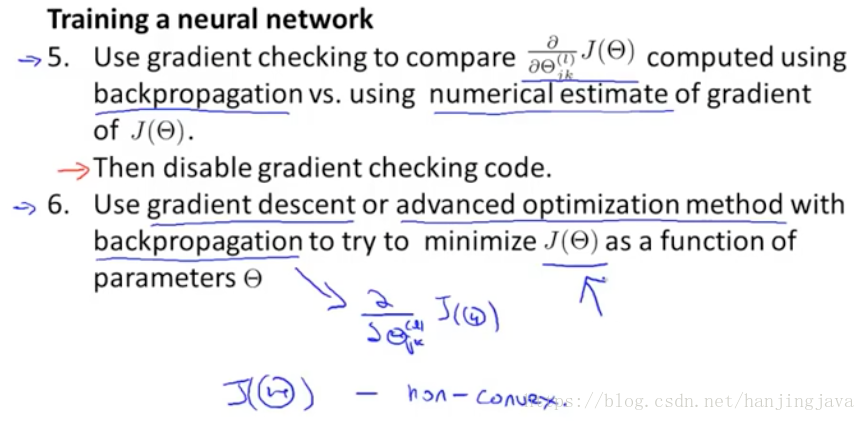

44. Neural Networks - Learning - Gradient checking

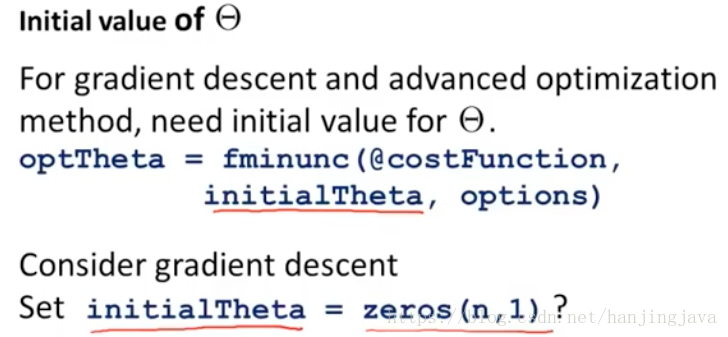

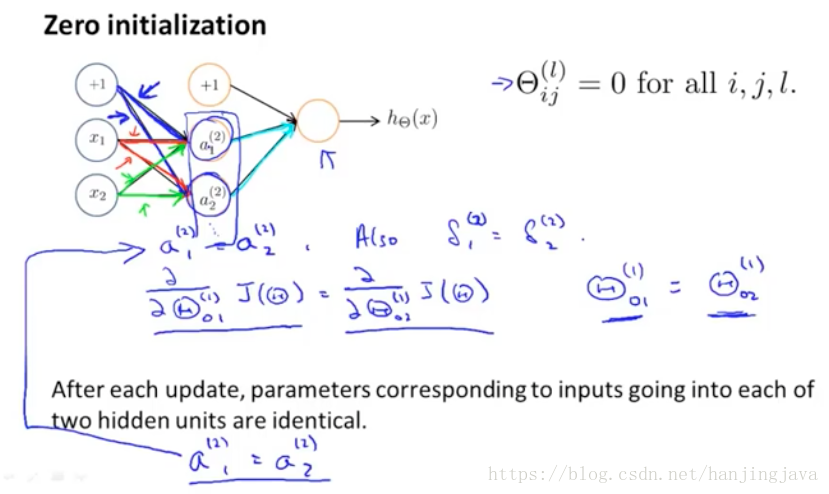

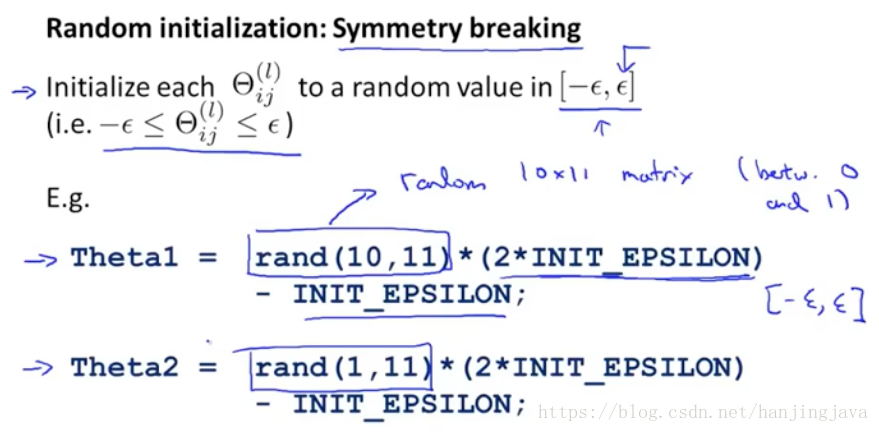

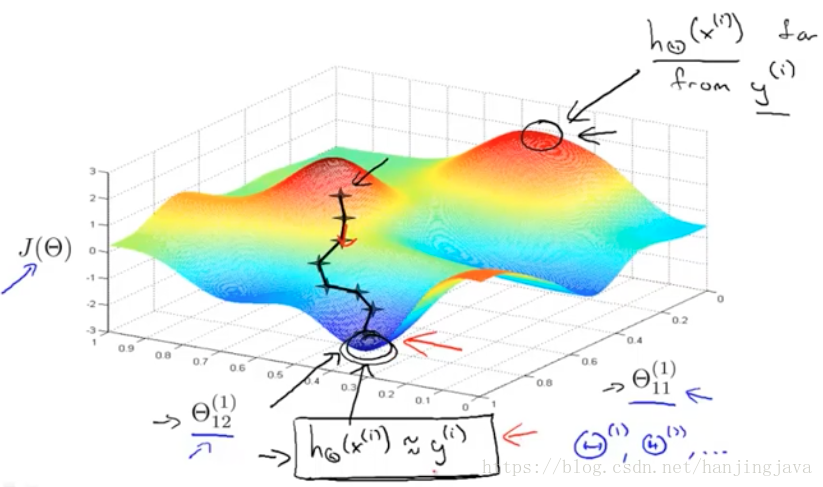
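The idea behind gradient checking is to compare the analytic gradient with a two-sided finite-difference estimate, (J(θ + ε) − J(θ − ε)) / (2ε), taken one parameter at a time. Below is a minimal sketch; the toy cost function and its gradient are hypothetical stand-ins for a real network cost and its backpropagation gradient.

```python
def numerical_gradient(cost_fn, theta, epsilon=1e-4):
    """Two-sided finite-difference estimate of the gradient of cost_fn at theta."""
    grad = []
    for i in range(len(theta)):
        plus = list(theta)
        minus = list(theta)
        plus[i] += epsilon
        minus[i] -= epsilon
        grad.append((cost_fn(plus) - cost_fn(minus)) / (2 * epsilon))
    return grad


def example_cost(theta):
    """Hypothetical cost J(theta) = theta0^2 + 3 * theta0 * theta1."""
    return theta[0] ** 2 + 3 * theta[0] * theta[1]


def example_gradient(theta):
    """Analytic gradient of example_cost, standing in for backpropagation."""
    return [2 * theta[0] + 3 * theta[1], 3 * theta[0]]


if __name__ == "__main__":
    theta = [1.5, -2.0]
    print("numerical:", numerical_gradient(example_cost, theta))
    print("analytic: ", example_gradient(theta))
```

Because the numerical estimate needs two cost evaluations per parameter, it is far slower than backpropagation and should be switched off once the check passes.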

45. Neural Networks - Learning - Random Initialization

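Initializing all parameters to 0 does not work.

All hidden units would then compute the same function of the input and receive the same updates, so the network would effectively learn only one feature.

Instead, each parameter is initialized to a random value in [-ε, ε]. This ε has no relationship with the ε used in gradient checking.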
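Random initialization in a small interval [-ε, ε] can be sketched as below. The helper name and the default value 0.12 are assumptions for illustration; any small positive value plays the same role of breaking the symmetry between hidden units.

```python
import random


def random_initialize(rows, cols, init_epsilon=0.12, rng=None):
    """Return a rows x cols matrix with entries drawn uniformly
    from [-init_epsilon, init_epsilon]."""
    rng = rng or random.Random()
    return [[rng.uniform(-init_epsilon, init_epsilon) for _ in range(cols)]
            for _ in range(rows)]


if __name__ == "__main__":
    # Hypothetical layer: 3 hidden units, 4 incoming weights each (bias included).
    theta1 = random_initialize(3, 4)
    for row in theta1:
        print(row)
```

Because every row starts with different weights, the hidden units compute different functions from the first iteration onward.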

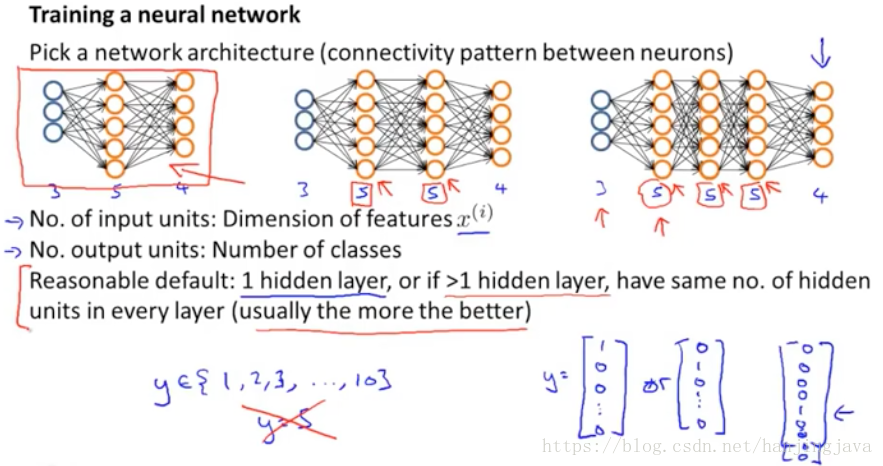

46. Neural Networks - Learning - Putting it together

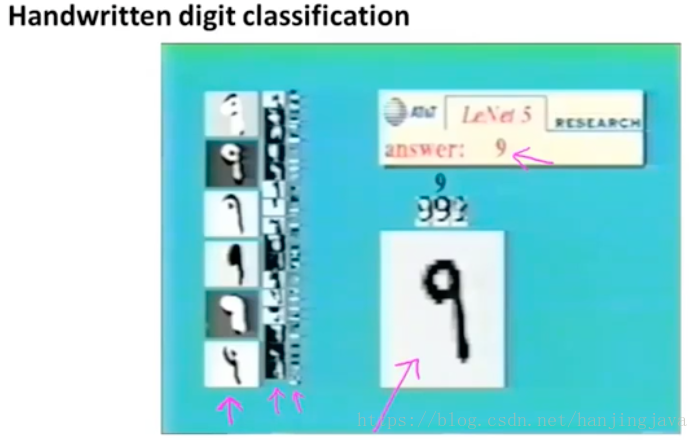

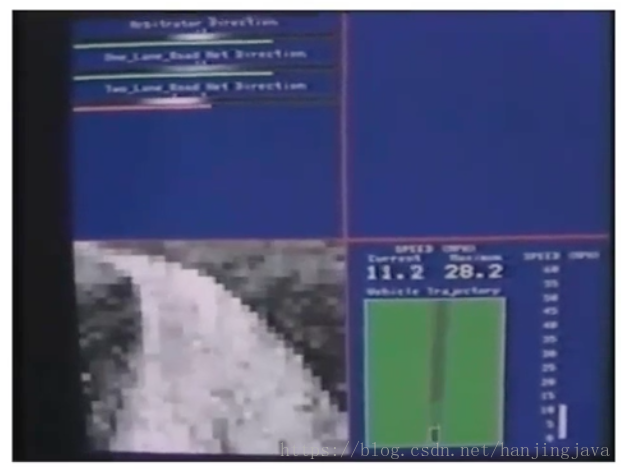

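47. Neural Networks - Learning - Autonomous driving example

Top left: the steering direction chosen by the human driver and the one chosen by the neural network.

Bottom left: the image of the road ahead.

ALVINN (Autonomous Land Vehicle In a Neural Network)

ALVINN watches a human drive and, after about 2 minutes of training, can drive on its own.

It can automatically switch between the weights trained for a one-lane road and those trained for a two-lane road.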