人臉旋轉歸一化 (根據特徵點定位,dlib+opencv)

阿新 • • 發佈:2019-01-07

找工作好無趣,所以來寫幾篇部落格玩一玩

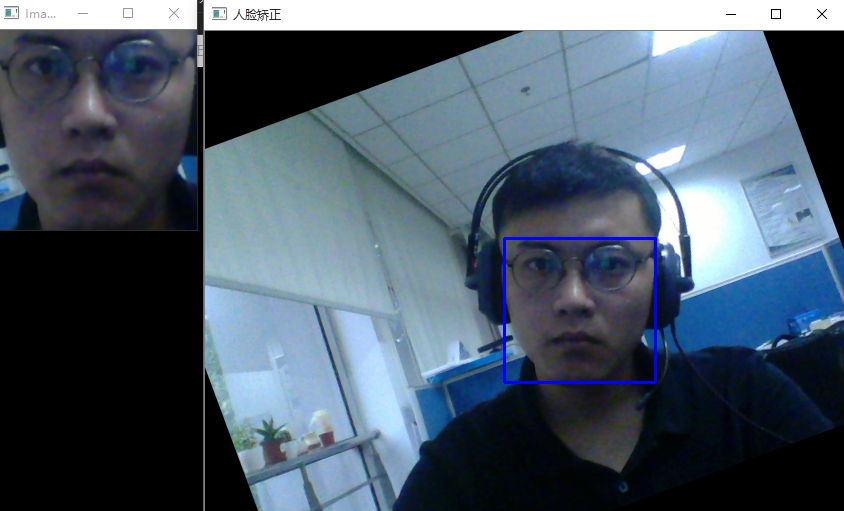

慣例先放結果:

思路:

人臉定位——特徵點——旋轉

//具體實現看程式碼,還是有一定提升空間的,233

下面就是簡單粗暴的程式碼了:

還是畫上特徵點順眼點,2333#include <dlib/opencv.h> #include <opencv2/opencv.hpp> #include <dlib/image_processing/frontal_face_detector.h> #include <dlib/image_processing/render_face_detections.h> #include <dlib/image_processing.h> #include <dlib/gui_widgets.h> #include<algorithm> using namespace dlib; using namespace std; static cv::Rect dlibRectangleToOpenCV(dlib::rectangle r) { return cv::Rect(cv::Point2i(r.left(), r.top()), cv::Point2i(r.right() + 1, r.bottom() + 1)); } static dlib::rectangle openCVRectToDlib(cv::Rect r) { return dlib::rectangle((long)r.tl().x, (long)r.tl().y, (long)r.br().x - 1, (long)r.br().y - 1); } int main() { try { cv::VideoCapture cap(0); if (!cap.isOpened()) { cerr << "Unable to connect to camera" << endl; return 1; } //image_window win; // Load face detection and pose estimation models. frontal_face_detector detector = get_frontal_face_detector(); shape_predictor pose_model; deserialize("shape_predictor_68_face_landmarks.dat") >> pose_model; // Grab and process frames until the main window is closed by the user. while (cv::waitKey(30) != 27) { // Grab a frame cv::Mat temp; cap >> temp; cv_image<bgr_pixel> cimg(temp); // Detect faces std::vector<rectangle> faces = detector(cimg); // Find the pose of each face. std::vector<full_object_detection> shapes; for (unsigned long i = 0; i < faces.size(); ++i) shapes.push_back(pose_model(cimg, faces[i])); if (!shapes.empty()) { //for (int i = 0; i < 68; i++) { // circle(temp, cvPoint(shapes[0].part(i).x(), shapes[0].part(i).y()), 2, cv::Scalar(255, 0, 0), -1); // shapes[0].part(i).x();//68個 // } int width = temp.cols; int length = temp.rows; double x = shapes[0].part(36).x() - shapes[0].part(45).x(); double y = shapes[0].part(36).y() - shapes[0].part(45).y(); double angle = x == 0 ? 0 : atan(y / x); cv::Mat rotation = cv::getRotationMatrix2D(cvPoint(shapes[0].part(30).x(), shapes[0].part(30).y()), angle / 3.14 * 180, 1.0); cv::warpAffine(temp, temp, rotation, cv::Size(width, length)); } cimg = cv_image<bgr_pixel>(temp); faces = detector(cimg); if (faces.size() != 0) { cv::Rect rect = dlibRectangleToOpenCV(faces[0]); full_object_detection points = pose_model(cimg, faces[0]); long xmax = points.part(0).x(); long xmin = points.part(0).x(); long ymin = points.part(0).y(); long ymax = points.part(0).y();; for (int i = 1; i < 68; i++) { xmax = std::max(xmax, points.part(i).x()); xmin = std::min(xmin, points.part(i).x()); ymax = std::max(ymax, points.part(i).y()); ymin = std::min(ymin, points.part(i).y()); } rect.x = xmin ; rect.width = xmax - xmin ; rect.y = ymin; rect.height = ymax - ymin ; cv::Mat imCrop = temp(rect); cv::resize(imCrop, imCrop, cv::Size(200, 200)); imshow("Image", imCrop); cv::rectangle(temp, rect, cv::Scalar(255, 0, 0), 2); } imshow("人臉矯正", temp); ; } } catch (serialization_error& e) { cout << "You need dlib's default face landmarking model file to run this example." << endl; cout << "You can get it from the following URL: " << endl; cout << " http://dlib.net/files/shape_predictor_68_face_landmarks.dat.bz2" << endl; cout << endl << e.what() << endl; system("pause"); } catch (exception& e) { cout << e.what() << endl; system("pause"); } }