輪廓檢測論文解讀 | Richer Convolutional Features for Edge Detection | CVPR | 2017

阿新 • • 發佈:2020-12-16

有什麼問題可以加作者微信討論,cyx645016617 上千人的粉絲群已經成立,氛圍超好。為大家提供一個遇到問題有可能得到答案的平臺。

## 0 概述

- 論文名稱:“Richer Convolutional Features for Edge Detection”

- 論文連結:https://openaccess.thecvf.com/content_cvpr_2017/papers/Liu_Richer_Convolutional_Features_CVPR_2017_paper.pdf

- 縮寫:RCF

這一篇文論在我看來,**是CVPR 2015年 HED網路(holistically-nested edge detection)的一個改進,RCF的論文中也基本上和HED網路處處對比**。

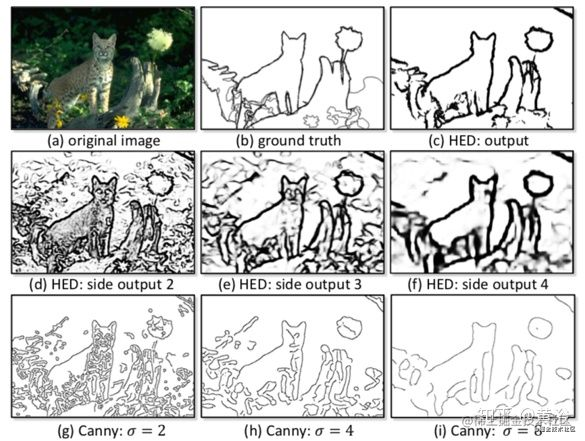

在上一篇文章中,我們依稀記得HED模型有這樣一個圖:

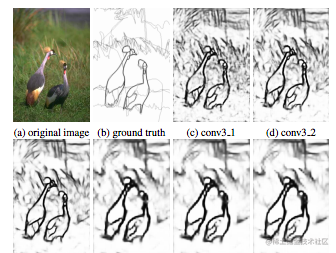

其中有HED的五個side output的特徵圖,下圖是RCF論文中的圖:

我們從這兩個圖的區別中來認識RCF相比HED的改進,大家可以看一看圖。

揭曉答案:

- HED是豹子的圖片,但是RCF是兩隻小鳥的圖片(手動狗頭)

- HED中的是side output的輸出的特徵圖,而RCF中是conv3_1,conv3_2,這意味著**RCF似乎把每一個卷積之後的輸出的特徵圖都作為了一個side output**。

沒錯,HED選取了5個side output,每一個side output都是池化層之前的卷積層輸出的特徵圖;而RCF則對每一次卷積的輸出特徵圖都作為side output,換句話說 **最終的side output中,同一尺寸的輸出可能不止一個**。

如果還沒有理解,請看下面章節,模型結構。

## 1 模型結構

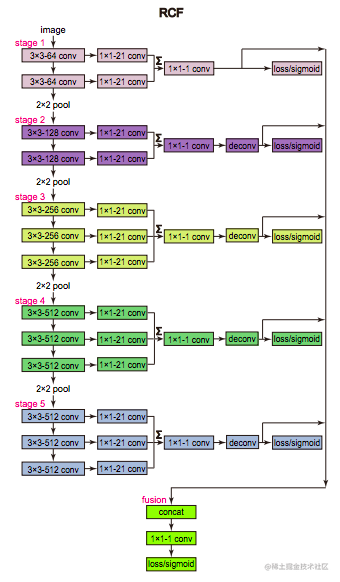

RCF的backbone是VGG模型:

從圖中可以看到:

- 主幹網路上分成state1到5,stage1有兩個卷積層,stage2有兩個卷積層,總共有13個卷積層,每一次卷積輸出的影象,再額外接入一個1x1的卷積,來降低通道數,所以可以看到,圖中有大量的21通道的卷積層。

- 同一個stage的21通道的特徵圖經過通道拼接,變成42通道或者是63通道的特徵圖,然後再經過一個1x1的卷積層,來把通道數降低成1,再進過sigmoid層,輸出的結果就是一個RCF模型中的side output了

## 2 損失函式

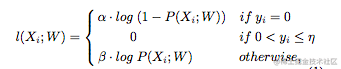

這裡的損失函式其實和HED來說類似:

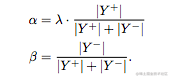

首先整體來看,損失函式依然使用二值交叉熵

其中$|Y^-|$ 表示 negative的畫素值,$|Y^+|$表示positive的畫素值。**一般來說輪廓檢測任務中,positive的樣本應該是較少的,因此$\alpha$的值較小,因此損失函式中第一行,y=0也就是計算非輪廓部分的損失的時候,就會增加一個較小的權重,來避免類別不均衡的問題。**

損失函式中有兩個常數,一個是$\lambda$,這個就是權重常數,預設為1.1;另外一個是$\eta$。論文中的描述為:

> Edge datasets in this community are usually labeled by several annotators using their knowledge about the presences of objects and object parts. Though humans vary in cognition, these human-labeled edges for the same image share high consistency. For each image, we average all the ground truth to generate an edge probability map, which ranges from 0 to 1. Here, 0 means no annotator labeled at this pixel, and 1 means all annotators have labeled at this pixel. We consider the pixels with edge probability higher than η as positive samples and the pixels with edge probability equal to 0 as negative samples. Otherwise, if a pixel is marked by fewer than η of the annotators, this pixel may be semantically controversial to be an edge point. Thus, whether regarding it as positive or negative samples may confuse networks. So we ignore pixels in this category.

大意就是:一般對資料集進行標註,是有多個人來完成的。不同的人雖然有不同的意識,但是他們對於同一個圖片的輪廓標註往往是具有一致性。RCF網路最後的輸出,是由5個side output融合產生的,因此你這個RCF的輸出也應該把大於$\eta$的考慮為positive,然後小於$\eta$的考慮為negative。 **其實這一點我自己在復現的時候並沒有考慮,我看網上的github和官方的程式碼中,都沒有考慮這個,都是直接交叉熵。。。我這就也就多此一舉的講解一下論文中的這個$\eta$的含義**

## 3 pytorch部分程式碼

對於這個RCF論文來說,關鍵就是一個模型的構建,另外一個就是損失函式的構建,這裡放出這兩部分的程式碼,來幫助大家更好的理解上面的內容。

### 3.1 模型部分

下面的程式碼在上取樣部分的寫法比較老舊,因為這個網上找來的pytorch版本估計比較老,當時還沒有Conv2DTrans這樣的函式封裝,但是不妨礙大家通過程式碼來學習RCF。

```python

class RCF(nn.Module):

def __init__(self):

super(RCF, self).__init__()

#lr 1 2 decay 1 0

self.conv1_1 = nn.Conv2d(3, 64, 3, padding=1)

self.conv1_2 = nn.Conv2d(64, 64, 3, padding=1)

self.conv2_1 = nn.Conv2d(64, 128, 3, padding=1)

self.conv2_2 = nn.Conv2d(128, 128, 3, padding=1)

self.conv3_1 = nn.Conv2d(128, 256, 3, padding=1)

self.conv3_2 = nn.Conv2d(256, 256, 3, padding=1)

self.conv3_3 = nn.Conv2d(256, 256, 3, padding=1)

self.conv4_1 = nn.Conv2d(256, 512, 3, padding=1)

self.conv4_2 = nn.Conv2d(512, 512, 3, padding=1)

self.conv4_3 = nn.Conv2d(512, 512, 3, padding=1)

self.conv5_1 = nn.Conv2d(512, 512, kernel_size=3,

stride=1, padding=2, dilation=2)

self.conv5_2 = nn.Conv2d(512, 512, kernel_size=3,

stride=1, padding=2, dilation=2)

self.conv5_3 = nn.Conv2d(512, 512, kernel_size=3,

stride=1, padding=2, dilation=2)

self.relu = nn.ReLU()

self.maxpool = nn.MaxPool2d(2, stride=2, ceil_mode=True)

self.maxpool4 = nn.MaxPool2d(2, stride=1, ceil_mode=True)

#lr 0.1 0.2 decay 1 0

self.conv1_1_down = nn.Conv2d(64, 21, 1, padding=0)

self.conv1_2_down = nn.Conv2d(64, 21, 1, padding=0)

self.conv2_1_down = nn.Conv2d(128, 21, 1, padding=0)

self.conv2_2_down = nn.Conv2d(128, 21, 1, padding=0)

self.conv3_1_down = nn.Conv2d(256, 21, 1, padding=0)

self.conv3_2_down = nn.Conv2d(256, 21, 1, padding=0)

self.conv3_3_down = nn.Conv2d(256, 21, 1, padding=0)

self.conv4_1_down = nn.Conv2d(512, 21, 1, padding=0)

self.conv4_2_down = nn.Conv2d(512, 21, 1, padding=0)

self.conv4_3_down = nn.Conv2d(512, 21, 1, padding=0)

self.conv5_1_down = nn.Conv2d(512, 21, 1, padding=0)

self.conv5_2_down = nn.Conv2d(512, 21, 1, padding=0)

self.conv5_3_down = nn.Conv2d(512, 21, 1, padding=0)

#lr 0.01 0.02 decay 1 0

self.score_dsn1 = nn.Conv2d(21, 1, 1)

self.score_dsn2 = nn.Conv2d(21, 1, 1)

self.score_dsn3 = nn.Conv2d(21, 1, 1)

self.score_dsn4 = nn.Conv2d(21, 1, 1)

self.score_dsn5 = nn.Conv2d(21, 1, 1)

#lr 0.001 0.002 decay 1 0

self.score_final = nn.Conv2d(5, 1, 1)

def forward(self, x):

# VGG

img_H, img_W = x.shape[2], x.shape[3]

conv1_1 = self.relu(self.conv1_1(x))

conv1_2 = self.relu(self.conv1_2(conv1_1))

pool1 = self.maxpool(conv1_2)

conv2_1 = self.relu(self.conv2_1(pool1))

conv2_2 = self.relu(self.conv2_2(conv2_1))

pool2 = self.maxpool(conv2_2)

conv3_1 = self.relu(self.conv3_1(pool2))

conv3_2 = self.relu(self.conv3_2(conv3_1))

conv3_3 = self.relu(self.conv3_3(conv3_2))

pool3 = self.maxpool(conv3_3)

conv4_1 = self.relu(self.conv4_1(pool3))

conv4_2 = self.relu(self.conv4_2(conv4_1))

conv4_3 = self.relu(self.conv4_3(conv4_2))

pool4 = self.maxpool4(conv4_3)

conv5_1 = self.relu(self.conv5_1(pool4))

conv5_2 = self.relu(self.conv5_2(conv5_1))

conv5_3 = self.relu(self.conv5_3(conv5_2))

conv1_1_down = self.conv1_1_down(conv1_1)

conv1_2_down = self.conv1_2_down(conv1_2)

conv2_1_down = self.conv2_1_down(conv2_1)

conv2_2_down = self.conv2_2_down(conv2_2)

conv3_1_down = self.conv3_1_down(conv3_1)

conv3_2_down = self.conv3_2_down(conv3_2)

conv3_3_down = self.conv3_3_down(conv3_3)

conv4_1_down = self.conv4_1_down(conv4_1)

conv4_2_down = self.conv4_2_down(conv4_2)

conv4_3_down = self.conv4_3_down(conv4_3)

conv5_1_down = self.conv5_1_down(conv5_1)

conv5_2_down = self.conv5_2_down(conv5_2)

conv5_3_down = self.conv5_3_down(conv5_3)

so1_out = self.score_dsn1(conv1_1_down + conv1_2_down)

so2_out = self.score_dsn2(conv2_1_down + conv2_2_down)

so3_out = self.score_dsn3(conv3_1_down + conv3_2_down + conv3_3_down)

so4_out = self.score_dsn4(conv4_1_down + conv4_2_down + conv4_3_down)

so5_out = self.score_dsn5(conv5_1_down + conv5_2_down + conv5_3_down)

## transpose and crop way

weight_deconv2 = make_bilinear_weights(4, 1).cuda()

weight_deconv3 = make_bilinear_weights(8, 1).cuda()

weight_deconv4 = make_bilinear_weights(16, 1).cuda()

weight_deconv5 = make_bilinear_weights(32, 1).cuda()

upsample2 = torch.nn.functional.conv_transpose2d(so2_out, weight_deconv2, stride=2)

upsample3 = torch.nn.functional.conv_transpose2d(so3_out, weight_deconv3, stride=4)

upsample4 = torch.nn.functional.conv_transpose2d(so4_out, weight_deconv4, stride=8)

upsample5 = torch.nn.functional.conv_transpose2d(so5_out, weight_deconv5, stride=8)

### center crop

so1 = crop(so1_out, img_H, img_W)

so2 = crop(upsample2, img_H, img_W)

so3 = crop(upsample3, img_H, img_W)

so4 = crop(upsample4, img_H, img_W)

so5 = crop(upsample5, img_H, img_W)

fusecat = torch.cat((so1, so2, so3, so4, so5), dim=1)

fuse = self.score_final(fusecat)

results = [so1, so2, so3, so4, so5, fuse]

results = [torch.sigmoid(r) for r in results]

return results

```

### 3.2 損失函式部分

```python

def cross_entropy_loss_RCF(prediction, label):

label = label.long()

mask = label.float()

num_positive = torch.sum((mask==1).float()).float()

num_negative = torch.sum((mask==0).float()).float()

mask[mask == 1] = 1.0 * num_negative / (num_positive + num_negative)

mask[mask == 0] = 1.1 * num_positive / (num_positive + num_negative)

mask[mask == 2] = 0

cost = torch.nn.functional.binary_cross_entropy(

prediction.float(),label.float(), weight=mask, reduce=False)

return torch.sum(cost)

```

參考文章:

1. https://blog.csdn.net/a8039974/article/details/85696282

2. https://gitee.com/HEART1/RCF-pytorch/blob/master/functions.py

3. https://openaccess.thecvf.com/content_cvpr_2017/papers/Liu_Richer_Convolutional_Features_CVPR_2017_p