TensorFlow Implementations of Classic Deep Learning Networks (5): Implementing Word2Vec, a Foundational NLP Network, in TensorFlow

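The recurrent neural network (RNN) is the most commonly used network structure in natural language processing (NLP). Its role and influence there are comparable to those of convolutional neural networks in image recognition. Word2Vec has a different job: it converts the words of a language into dense vectors that a computer can work with. Those vectors can then feed other NLP tasks such as text classification, part-of-speech tagging, and machine translation. Word2Vec is sometimes loosely called "word embeddings", which is the name for the vectors it produces. In Chinese it also goes by several names, the most common being 詞向量 ("word vectors") and 詞嵌入 ("word embeddings"). Word2Vec maps words to vector representations. It is a computationally efficient predictive model that learns word vectors directly from a raw text corpus.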
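The "word to dense vector" mapping is concrete in practice. An embedding is a matrix with one row per vocabulary word, and looking a word up means selecting its row. The NumPy sketch below makes two assumptions for illustration only: a hypothetical five-word vocabulary and random 3-dimensional vectors. It also checks that the row lookup gives the same result as multiplying a one-hot vector by the matrix. This row gather is what `tf.nn.embedding_lookup` performs in the program below.

```python
import numpy as np

# Hypothetical toy vocabulary: word -> integer id (plays the role of `dictionary`)
vocab = ["the", "cat", "dog", "sat", "mat"]
word_to_id = {w: i for i, w in enumerate(vocab)}

vocab_size, embedding_size = len(vocab), 3
rng = np.random.default_rng(0)
# Embedding matrix: one dense row per word, initialized uniformly in [-1, 1)
embeddings = rng.uniform(-1.0, 1.0, size=(vocab_size, embedding_size))

def lookup(word_ids):
    """Return the embedding rows for a list of word ids (a plain row gather)."""
    return embeddings[np.asarray(word_ids)]

def one_hot(word_id):
    """Sparse representation: a vocab_size-long vector with a single 1."""
    v = np.zeros(vocab_size)
    v[word_id] = 1.0
    return v

cat_id = word_to_id["cat"]
dense_cat = lookup([cat_id])[0]
# Multiplying the one-hot vector by the matrix selects the same row
via_matmul = one_hot(cat_id) @ embeddings

print(dense_cat.shape)                     # (3,)
print(np.allclose(dense_cat, via_matmul))  # True
```

The lookup avoids materializing a 50,000-wide one-hot vector per word, which is why embedding layers gather rows instead of multiplying.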

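As processing techniques developed over this century, the field gradually moved from sparse word representations to today's dense representations in a low-dimensional space. Before Word2Vec, NLP systems usually treated words as discrete, independent symbols, such as a unique ID or a one-hot vector per word. Such symbols carry no relational information and ignore any relationships that may exist between words. In practice, sparse representations often run into the curse of dimensionality. They cannot express semantic information or reveal latent connections between words. Storing words as sparse vectors also usually means more training data is needed, which is inefficient and computationally cumbersome. A low-dimensional dense representation avoids the curse of dimensionality and captures associations between words, which improves the semantic accuracy of the vectors.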
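The "no relational information" problem shows up numerically. Any two distinct one-hot vectors are orthogonal, so their cosine similarity is always 0, however related the words are. Dense vectors can place related words close together. The 4-dimensional vectors below are hand-picked, hypothetical values chosen only to illustrate this; they are not vectors learned by Word2Vec. The nearest-neighbour step follows the same idea as the program's `similarity` tensor: L2-normalize each row, take dot products, and sort. This sketch drops the word's own index explicitly instead of slicing off position 0.

```python
import numpy as np

words = ["king", "queen", "apple", "banana"]

# Sparse one-hot representation: identity rows, one dimension per word
one_hot = np.eye(len(words))

# Hypothetical dense vectors, hand-picked for illustration (not trained)
dense = np.array([
    [0.90, 0.80, 0.10, 0.00],  # king
    [0.85, 0.90, 0.05, 0.10],  # queen
    [0.10, 0.00, 0.90, 0.80],  # apple
    [0.05, 0.10, 0.80, 0.90],  # banana
])

def cosine_matrix(vectors):
    """Pairwise cosine similarity: L2-normalize the rows, then take dot products."""
    norms = np.sqrt(np.sum(np.square(vectors), axis=1, keepdims=True))
    normalized = vectors / norms
    return normalized @ normalized.T

def nearest(vectors, i, k=1):
    """Indices of the k rows most similar to row i, excluding row i itself."""
    sim = cosine_matrix(vectors)[i]
    order = (-sim).argsort()
    return [j for j in order if j != i][:k]

print(cosine_matrix(one_hot)[0, 1])  # 0.0: one-hot vectors encode no relation
print(words[nearest(dense, 0)[0]])   # queen
print(words[nearest(dense, 2)[0]])   # banana
```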

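Word2Vec has two main modes: CBOW (Continuous Bag of Words) and Skip-Gram. CBOW predicts the target word from its surrounding context words. Skip-Gram does the opposite and predicts the context words from the target word. CBOW tends to suit smaller datasets, while Skip-Gram performs better on large corpora. This article uses the Skip-Gram mode of Word2Vec. With the preparation done, we can build the network. Because the program downloads its data from the internet, it needs a fair number of dependencies. The code below is based on my understanding of Word2Vec and on existing resources, such as the book 《TensorFlow實戰》 and TensorFlow's open-source implementation. I added the comments based on my own understanding, so please point out any errors in them.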
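The difference between the two modes lies in how training examples are cut from the text. The sketch below is simplified and deterministic. It uses a window of one word on each side, which corresponds to `skip_window=1` in the program. Unlike the program's `generate_batch`, it emits every context word rather than randomly sampling `num_skips` of them. The function names `skip_gram_pairs` and `cbow_examples` are hypothetical. The sample tokens are the first words of the text8 corpus, as printed in the program's output.

```python
def skip_gram_pairs(tokens, skip_window=1):
    """Skip-Gram: each (target, context) pair predicts one context word from the target."""
    pairs = []
    for i, target in enumerate(tokens):
        lo, hi = max(0, i - skip_window), min(len(tokens), i + skip_window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((target, tokens[j]))
    return pairs

def cbow_examples(tokens, skip_window=1):
    """CBOW: the surrounding context words together predict the center word."""
    examples = []
    for i in range(skip_window, len(tokens) - skip_window):
        context = tokens[i - skip_window:i] + tokens[i + 1:i + skip_window + 1]
        examples.append((context, tokens[i]))
    return examples

tokens = ["anarchism", "originated", "as", "a", "term"]

for target, context in skip_gram_pairs(tokens)[:4]:
    print(target, "->", context)
# anarchism -> originated
# originated -> anarchism
# originated -> as
# as -> originated

print(cbow_examples(tokens)[0])  # (['anarchism', 'as'], 'originated')
```

Skip-Gram turns each target into several training examples, one per context word. This is one reason it is often said to make better use of large corpora, while CBOW averages the context into a single example per target.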

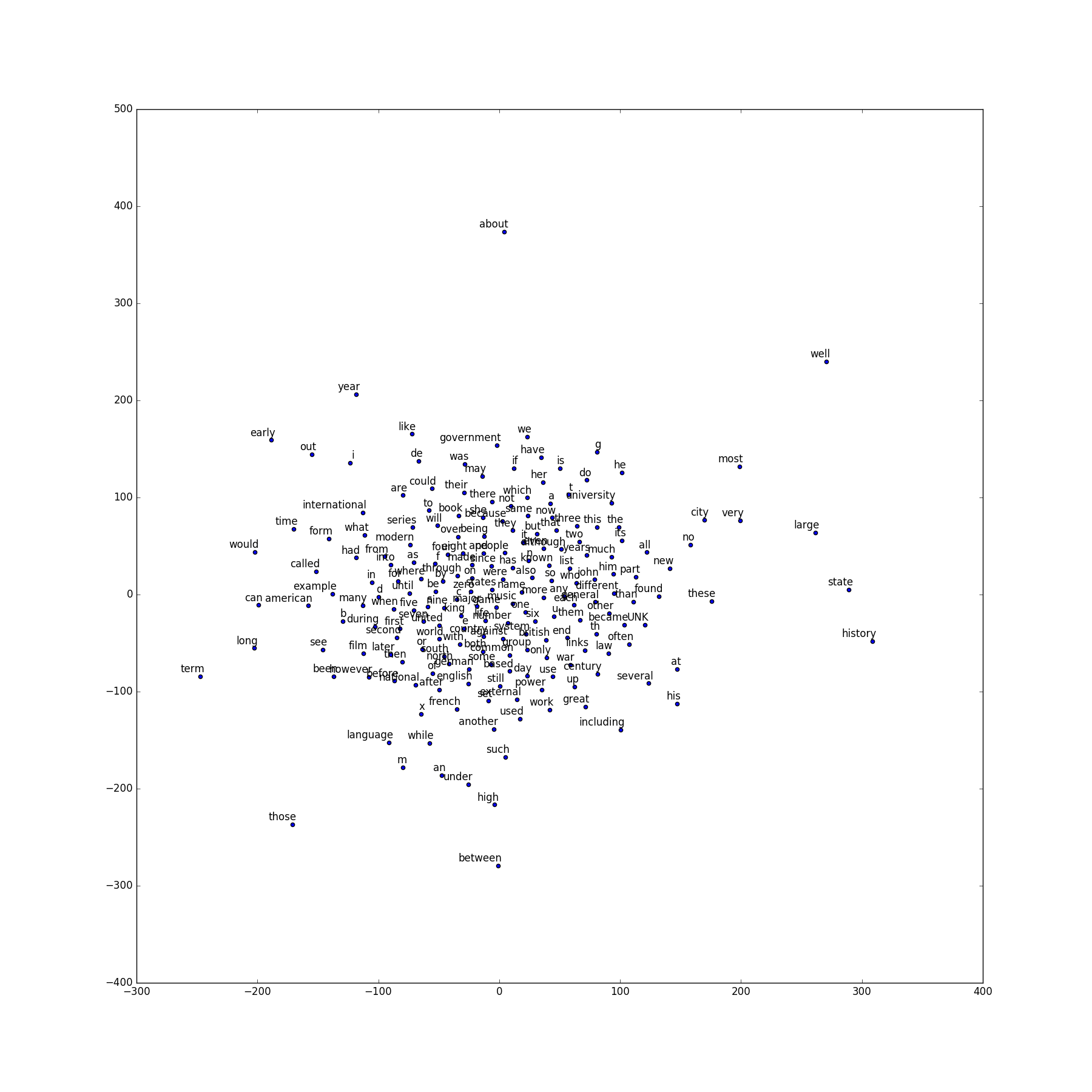

```python
# -*- coding: utf-8 -*-
# Word2Vec (Skip-Gram) training in TensorFlow.
# Written against the TensorFlow 1.x graph API (tf.placeholder, tf.Session).
# On TensorFlow 2.x, replace the TensorFlow import below with:
#   import tensorflow.compat.v1 as tf
#   tf.disable_v2_behavior()
import os
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'  # silence TensorFlow info/warning logs

# Load dependencies
import collections
import math
import random
import zipfile
import urllib.request  # `import urllib` alone does not load urllib.request

import numpy as np
import tensorflow as tf

# Download the text data; skip the download if the file already exists
url = 'http://mattmahoney.net/dc/'

def maybe_download(filename, expected_bytes):
    if not os.path.exists(filename):
        filename, _ = urllib.request.urlretrieve(url + filename, filename)
    statinfo = os.stat(filename)
    if statinfo.st_size == expected_bytes:
        print('Found and verified', filename)
    else:
        print(statinfo.st_size)
        raise Exception(
            'Failed to verify ' + filename + '. Can you get to it with a browser?')
    return filename

filename = maybe_download('text8.zip', 31344016)

# Unzip the archive and split the text into a list of words
def read_data(filename):
    with zipfile.ZipFile(filename) as f:
        data = tf.compat.as_str(f.read(f.namelist()[0])).split()
    return data

words = read_data(filename)
print('Data size', len(words))

# Build the vocabulary: keep the 50,000 most frequent words and
# map every other word to 'UNK' (index 0)
vocabulary_size = 50000

def build_dataset(words):
    count = [['UNK', -1]]
    count.extend(collections.Counter(words).most_common(vocabulary_size - 1))
    dictionary = dict()
    for word, _ in count:
        dictionary[word] = len(dictionary)
    data = list()
    unk_count = 0
    for word in words:
        if word in dictionary:
            index = dictionary[word]
        else:
            index = 0
            unk_count += 1
        data.append(index)
    count[0][1] = unk_count
    reverse_dictionary = dict(zip(dictionary.values(), dictionary.keys()))
    return data, count, dictionary, reverse_dictionary

data, count, dictionary, reverse_dictionary = build_dataset(words)

# Free the raw word list; print the most frequent words and their counts
del words
print('Most common words (+UNK)', count[:5])
print('Sample data', data[:10], [reverse_dictionary[i] for i in data[:10]])

# Generate Skip-Gram training samples
data_index = 0

def generate_batch(batch_size, num_skips, skip_window):
    global data_index
    assert batch_size % num_skips == 0
    assert num_skips <= 2 * skip_window
    batch = np.ndarray(shape=(batch_size), dtype=np.int32)
    labels = np.ndarray(shape=(batch_size, 1), dtype=np.int32)
    span = 2 * skip_window + 1  # target word plus skip_window words on each side
    buffer = collections.deque(maxlen=span)
    # Starting at data_index, read span words into the buffer as the initial window
    for _ in range(span):
        buffer.append(data[data_index])
        data_index = (data_index + 1) % len(data)
    for i in range(batch_size // num_skips):
        target = skip_window  # the center word of the buffer
        targets_to_avoid = [skip_window]
        # Pick num_skips distinct context words at random from the window
        for j in range(num_skips):
            while target in targets_to_avoid:
                target = random.randint(0, span - 1)
            targets_to_avoid.append(target)
            batch[i * num_skips + j] = buffer[skip_window]
            labels[i * num_skips + j, 0] = buffer[target]
        # Slide the window one word to the right
        buffer.append(data[data_index])
        data_index = (data_index + 1) % len(data)
    return batch, labels

# Quick test of generate_batch
batch, labels = generate_batch(batch_size=8, num_skips=2, skip_window=1)
for i in range(8):
    print(batch[i], reverse_dictionary[batch[i]],
          '->', labels[i, 0], reverse_dictionary[labels[i, 0]])

# Hyperparameters for the Skip-Gram model
batch_size = 128
embedding_size = 128  # dimension of each word vector
skip_window = 1       # words considered on each side of the target
num_skips = 2         # (target, context) pairs drawn per target word
valid_size = 16       # number of validation words to sample
valid_window = 100    # validation words come only from the 100 most frequent words
valid_examples = np.random.choice(valid_window, valid_size, replace=False)
num_sampled = 64      # noise words used as negative samples during training

# Define the Skip-Gram Word2Vec network
graph = tf.Graph()
with graph.as_default():
    # Input data
    train_inputs = tf.placeholder(tf.int32, shape=[batch_size])
    train_labels = tf.placeholder(tf.int32, shape=[batch_size, 1])
    valid_dataset = tf.constant(valid_examples, dtype=tf.int32)

    # Pin these ops to the CPU, since some may not have GPU implementations
    with tf.device('/cpu:0'):
        # Look up the embeddings for the inputs
        embeddings = tf.Variable(
            tf.random_uniform([vocabulary_size, embedding_size], -1.0, 1.0))
        embed = tf.nn.embedding_lookup(embeddings, train_inputs)

        # Initialize the weights and biases for the NCE loss
        nce_weights = tf.Variable(
            tf.truncated_normal([vocabulary_size, embedding_size],
                                stddev=1.0 / math.sqrt(embedding_size)))
        nce_biases = tf.Variable(tf.zeros([vocabulary_size]))

    # NCE loss of the embeddings on the training batch, averaged over the batch
    loss = tf.reduce_mean(
        tf.nn.nce_loss(weights=nce_weights,
                       biases=nce_biases,
                       labels=train_labels,
                       inputs=embed,
                       num_sampled=num_sampled,
                       num_classes=vocabulary_size))

    # SGD optimizer with a learning rate of 1.0
    optimizer = tf.train.GradientDescentOptimizer(1.0).minimize(loss)

    # Cosine similarity between the validation words and every vocabulary word
    norm = tf.sqrt(tf.reduce_sum(tf.square(embeddings), 1, keepdims=True))
    normalized_embeddings = embeddings / norm
    valid_embeddings = tf.nn.embedding_lookup(
        normalized_embeddings, valid_dataset)
    similarity = tf.matmul(
        valid_embeddings, normalized_embeddings, transpose_b=True)

    # Initializer for all model variables
    init = tf.global_variables_initializer()

# Train for 100,001 steps (about 100,000 iterations)
num_steps = 100001

with tf.Session(graph=graph) as session:
    init.run()
    print("Initialized")

    average_loss = 0
    for step in range(num_steps):
        batch_inputs, batch_labels = generate_batch(
            batch_size, num_skips, skip_window)
        feed_dict = {train_inputs: batch_inputs, train_labels: batch_labels}

        # Run one optimizer step and compute the loss with session.run(),
        # then add this step's loss to average_loss
        _, loss_val = session.run([optimizer, loss], feed_dict=feed_dict)
        average_loss += loss_val

        # Every 2,000 steps, print the average loss of the last 2,000 batches
        if step % 2000 == 0:
            if step > 0:
                average_loss /= 2000
            print("Average loss at step ", step, ": ", average_loss)
            average_loss = 0

        # Every 10,000 steps, compute the similarity between each validation word
        # and all words, and print the 8 most similar words
        if step % 10000 == 0:
            sim = similarity.eval()
            for i in range(valid_size):
                valid_word = reverse_dictionary[valid_examples[i]]
                top_k = 8
                # Position 0 of the sorted order is the word itself, so skip it
                nearest = (-sim[i, :]).argsort()[1:top_k + 1]
                log_str = "Nearest to %s:" % valid_word
                for k in range(top_k):
                    close_word = reverse_dictionary[nearest[k]]
                    log_str = "%s %s," % (log_str, close_word)
                print(log_str)
    final_embeddings = normalized_embeddings.eval()

# Visualize the Word2Vec result: scatter the 2-D points with their word labels
def plot_with_labels(low_dim_embs, labels, filename='tsne.png'):
    assert low_dim_embs.shape[0] >= len(labels), "More labels than embeddings"
    plt.figure(figsize=(18, 18))
    for i, label in enumerate(labels):
        x, y = low_dim_embs[i, :]
        plt.scatter(x, y)  # draw the point
        plt.annotate(label,
                     xy=(x, y),
                     xytext=(5, 2),
                     textcoords='offset points',
                     ha='right',
                     va='bottom')
    plt.savefig(filename)  # save the figure to disk

# Reduce to 2-D with sklearn.manifold.TSNE, then plot the 200 most frequent words
try:
    from sklearn.manifold import TSNE
    import matplotlib.pyplot as plt

    # Newer scikit-learn releases rename n_iter to max_iter
    tsne = TSNE(perplexity=30, n_components=2, init='pca', n_iter=5000)
    plot_only = 200
    low_dim_embs = tsne.fit_transform(final_embeddings[:plot_only, :])
    labels = [reverse_dictionary[i] for i in range(plot_only)]
    plot_with_labels(low_dim_embs, labels)
except ImportError:
    print("Please install sklearn, matplotlib, and scipy to visualize embeddings.")
```

Running the program prints output like the following. It shows the average loss and, for each validation word, the words most similar to it. The trained model identifies similar words for nouns, verbs, adjectives, and other word types quite accurately. The excerpt below, however, stops at step 0, before any training has happened, so the neighbours it lists are still essentially random. Similarity only becomes meaningful as training progresses, and the program re-prints the neighbours every 10,000 steps.

```
Found and verified text8.zip
Data size 17005207
Most common words (+UNK) [['UNK', 418391], ('the', 1061396), ('of', 593677), ('and', 416629), ('one', 411764)]
Sample data [5236, 3081, 12, 6, 195, 2, 3137, 46, 59, 156] ['anarchism', 'originated', 'as', 'a', 'term', 'of', 'abuse', 'first', 'used', 'against']
3081 originated -> 5236 anarchism
3081 originated -> 12 as
12 as -> 3081 originated
12 as -> 6 a
6 a -> 12 as
6 a -> 195 term
195 term -> 6 a
195 term -> 2 of
Initialized
Average loss at step 0 : 305.99029541
Nearest to state: hring, compel, freebsd, accolades, wollheim, otherworldly, abdel, vigorous,
Nearest to d: memorial, magical, maul, turns, nanjing, renn, hutu, marmite,
Nearest to between: persona, currencies, weird, hydrodynamics, liturgy, sieve, microcomputer, dir,
Nearest to three: wembley, korn, perpendicularly, avery, alban, blondie, corrosion, gotland,
Nearest to in: tara, flown, boomerangs, bets, lammas, mishnayot, marysville, denotation,
Nearest to if: sexes, robes, jewish, conversation, outer, murmur, biopolymers, lanes,
Nearest to however: footballers, parliamentarian, guaranteeing, discretion, thief, faber, elgar, coursing,
Nearest to his: twa, parakeet, ostwald, booker, meme, localized, seam, ecclesiastica,
Nearest to nine: lat, leon, confederations, demolish, bulldogs, timepieces, disaffection, leonid,
Nearest to time: reelected, blyth, chifley, nosed, elaborates, coasts, discipleship, jacob,
Nearest to s: rages, abacus, skipping, aforementioned, thames, mummified, exclude, latins,
Nearest to with: silmarillion, hospitable, burundi, enters, grandmaster, daw, bytecode, eleusinian,
Nearest to often: within, reorganisation, kievan, cree, rattus, belle, unido, renouncing,
Nearest to people: cathal, psycho, barrels, gtb, guarded, kronecker, triadic, inventories,
Nearest to states: thickened, diphthongs, sync, readers, mantras, inordinate, melee, unsaturated,
Nearest to new: dropouts, squares, befitting, capability, shall, unenforceable, delusion, dissociation,
```

This completes our look at the basic principle of Word2Vec and its implementation in TensorFlow, which produced very good results.

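In future posts I will keep showing the fun that TensorFlow and deep learning networks offer, and explore the mysteries of deep learning together with you. If you are interested, I also share the latest work in artificial intelligence, machine learning, deep learning, and computer vision on my Weibo.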