資料湖-Apache Hudi

阿新 • • 發佈:2021-01-30

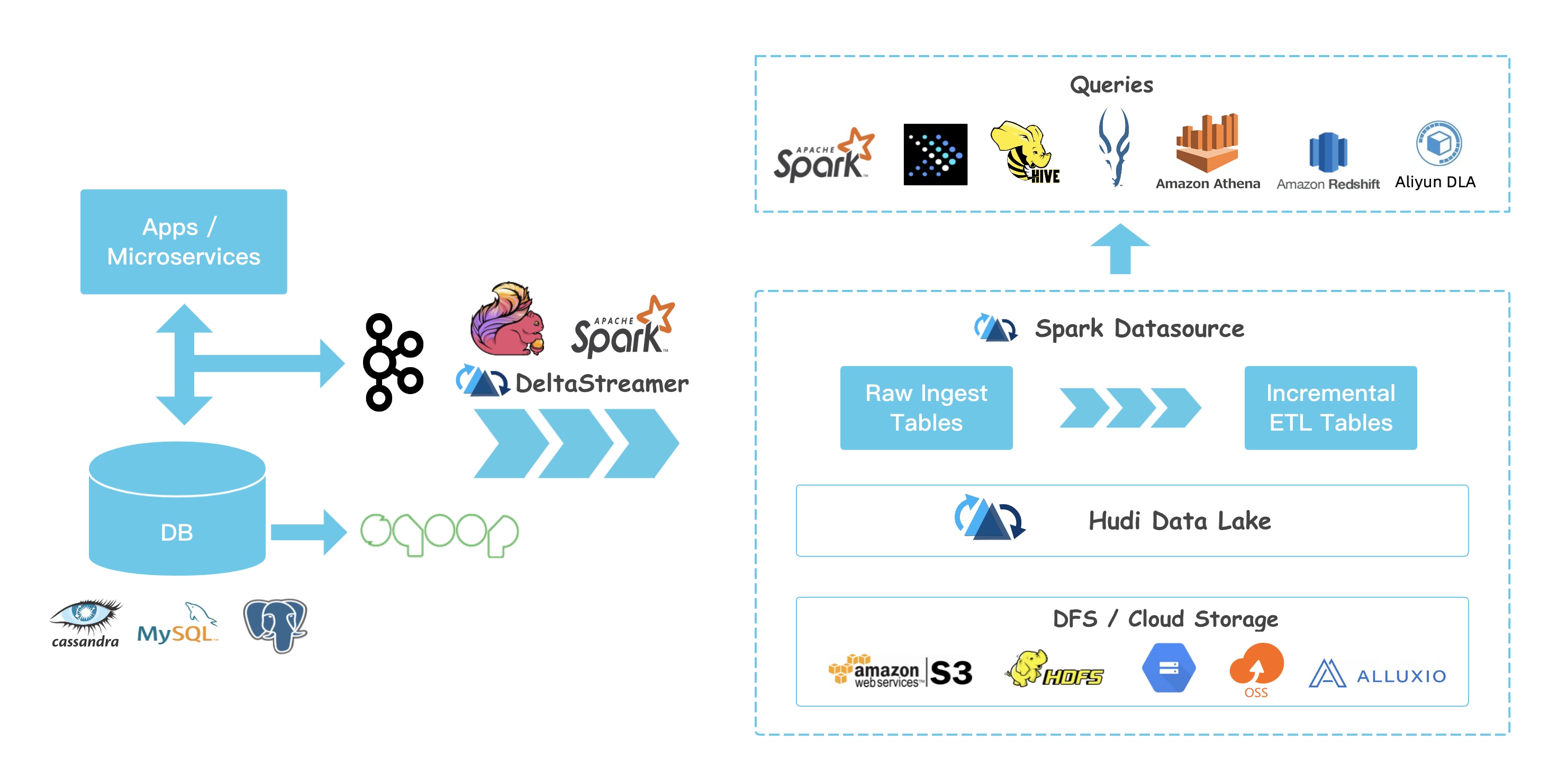

## Hudi特性

- 資料湖處理非結構化資料、日誌資料、結構化資料

- 支援較快upsert/delete, 可插入索引

- Table Schema

- 小檔案管理Compaction

- ACID語義保證,多版本保證 並具有回滾功能

- savepoint 使用者資料恢復的儲存點

- 支援多種分析引擎 spark、hive、presto

## 編譯Hudi

git clone https://github.com/apache/hudi.git && cd hudi

mvn clean package -DskipTests

**hudi 高度耦合spark**

執行spark-shell測試Hudi

```

bin/spark-shell --packages org.apache.spark:spark-avro_2.11:2.4.5 --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer' --jars /Users/macwei/IdeaProjects/hudi-master/packaging/hudi-spark-bundle/target/hudi-spark-bundle_2.11-0.6.1-SNAPSHOT.jar

```

**hudi 寫入資料**

```

// spark-shell

import org.apache.hudi.QuickstartUtils._

import scala.collection.JavaConversions._

import org.apache.spark.sql.SaveMode._

import org.apache.hudi.DataSourceReadOptions._

import org.apache.hudi.DataSourceWriteOptions._

import org.apache.hudi.config.HoodieWriteConfig._

val tableName = "hudi_trips_cow"

val basePath = "file:///tmp/hudi_trips_cow"

val dataGen = new DataGenerator

// spark-shell

val inserts = convertToStringList(dataGen.generateInserts(10))

val df = spark.read.json(spark.sparkContext.parallelize(inserts, 2))

df.write.format("hudi").

options(getQuickstartWriteConfigs).

option(PRECOMBINE_FIELD_OPT_KEY, "ts").

option(RECORDKEY_FIELD_OPT_KEY, "uuid").

option(PARTITIONPATH_FIELD_OPT_KEY, "partitionpath").

option(TABLE_NAME, tableName).

mode(Overwrite).

save(basePath)

```

**讀取hudi資料:**

```

val tripsSnapshotDF = spark.

read.

format("hudi").

load(basePath + "/*/*/*/*")

tripsSnapshotDF.createOrReplaceTempView("hudi_trips_snapshot")

spark.sql("select fare, begin_lon, begin_lat, ts from hudi_trips_snapshot where fare > 20.0").show()

+------------------+-------------------+-------------------+-------------+

| fare| begin_lon| begin_lat| ts|

+------------------+-------------------+-------------------+-------------+

| 64.27696295884016| 0.4923479652912024| 0.5731835407930634|1609771934700|

| 93.56018115236618|0.14285051259466197|0.21624150367601136|1610087553306|

| 33.92216483948643| 0.9694586417848392| 0.1856488085068272|1609982888463|

| 27.79478688582596| 0.6273212202489661|0.11488393157088261|1610187369637|

|34.158284716382845|0.46157858450465483| 0.4726905879569653|1610017361855|

| 43.4923811219014| 0.8779402295427752| 0.6100070562136587|1609795685223|

| 66.62084366450246|0.03844104444445928| 0.0750588760043035|1609923236735|

| 41.06290929046368| 0.8192868687714224| 0.651058505660742|1609838517703|

+------------------+-------------------+-------------------+-------------+

spark.sql("select _hoodie_commit_time, _hoodie_record_key, _hoodie_partition_path, rider, driver, fare from hudi_trips_snapshot").show()

+-------------------+--------------------+----------------------+---------+----------+------------------+

|_hoodie_commit_time| _hoodie_record_key|_hoodie_partition_path| rider| driver| fare|

+-------------------+--------------------+----------------------+---------+----------+------------------+

| 20210110225218|3c7ef0e7-86fb-444...| americas/united_s...|rider-213|driver-213| 64.27696295884016|

| 20210110225218|222db9ca-018b-46e...| americas/united_s...|rider-213|driver-213| 93.56018115236618|

| 20210110225218|3fc72d76-f903-4ca...| americas/united_s...|rider-213|driver-213|19.179139106643607|

| 20210110225218|512b0741-e54d-426...| americas/united_s...|rider-213|driver-213| 33.92216483948643|

| 20210110225218|ace81918-0e79-41a...| americas/united_s...|rider-213|driver-213| 27.79478688582596|

| 20210110225218|c76f82a1-d964-4db...| americas/brazil/s...|rider-213|driver-213|34.158284716382845|

| 20210110225218|73145bfc-bcb2-424...| americas/brazil/s...|rider-213|driver-213| 43.4923811219014|

| 20210110225218|9e0b1d58-a1c4-47f...| americas/brazil/s...|rider-213|driver-213| 66.62084366450246|

| 20210110225218|b8fccca1-9c28-444...| asia/india/chennai|rider-213|driver-213|17.851135255091155|

| 20210110225218|6144be56-cef9-43c...| asia/india/chennai|rider-213|driver-213| 41.06290929046368|

+-------------------+--------------------+----------------------+---------+----------+------------------+

```

## 對比

資料匯入至hadoop方案: maxwell、canal、flume、sqoop

hudi是通用方案

- hudi 支援presto、spark sql下游查詢

- hudi儲存依賴hdfs

- hudi可以當作資料來源或資料庫,支援PB級別

## 概念

Timeline: 時間戳

state:即時狀態

原子寫入操作

compaction: 後臺協調hudi中差異資料

rollback: 回滾

savepoint: 資料還原

任何操作都有以下狀態:

- Requested 已安排操作行為,但是沒有開始

- Inflight 正在執行當前操作

- Completed 已完成操作

hudi提供兩種表型別:

- CopyOnWrite 適用全量資料,列式儲存,寫入過程執行同步合併重寫檔案

- MergeOnRead 增量資料,基於列式(parquet)和行式(avro)儲存,更新記錄到增量檔案(日誌檔案),壓縮同步和非同步生成新版本檔案,延遲更低

hudi查詢型別:

- 快照查詢 查詢最新快照表資料,如果是MergeOnRead表,動態合併最新版本基本資料和增量資料用於顯示查詢;如果是CopyOnWrite,直接查詢Parquet表,同時提供upsert、delete操作

- 增量查詢 只能看到寫入表的新資料

- 優化讀查詢 給定時間段的一個查詢

## 資料參考

- Docker Demo: https://hudi.apache.org/docs/docker_demo.html Hudi 官方建議程式碼測試可在Docker進行, 如果在Docker執行有問題也可以進行Remote Debugger

- Hudi 目前程式碼寫的很多實現其實有點不太好, 如果有想貢獻提交PR的可參考: [如何進行開源貢獻](https://blog.csdn.net/hejinbiao_521/article/details/103